While roboticists have introduced increasingly sophisticated systems over the past decades, teaching these systems to successfully and reliably tackle new tasks has often proved challenging. Part of this training entails mapping high-dimensional data, such as images collected by on-board RGB cameras, to goal-oriented robotic actions.

Researchers at Imperial College London and the Dyson Robot Learning Lab recently introduced Render and Diffuse (R&D), a method that unifies low-level robot actions and RGB images using virtual 3D renders of a robotic system. This method, introduced in a paper published on the arXiv preprint server, could ultimately make it easier to teach robots new skills, reducing the large number of human demonstrations required by many existing approaches.

“Our recent paper was driven by the goal of enabling humans to teach robots new skills efficiently, without the need for extensive demonstrations,” said Vitalis Vosylius, final-year Ph.D. student at Imperial College London and lead author. “Existing techniques are data-intensive and struggle with spatial generalization, performing poorly when objects are positioned differently from the demonstrations. This is because predicting precise actions as a sequence of numbers from RGB images is extremely challenging when data is limited.”

During an internship at Dyson Robot Learning, Vosylius worked on a project that culminated in the development of R&D. This project aimed to simplify the learning problem for robots, enabling them to more efficiently predict actions that will allow them to complete various tasks.

In contrast with most robotic systems, humans learning new manual skills do not perform extensive calculations to determine how much they should move their limbs. Instead, they typically try to imagine how their hands should move to tackle a specific task effectively.

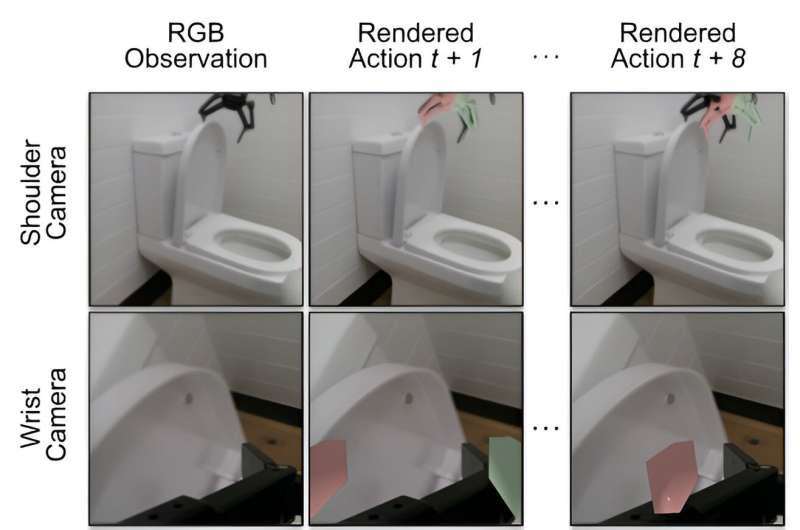

“Our method, Render and Diffuse, allows robots to do something similar: ‘imagine’ their actions within the image using virtual renders of their own embodiment,” Vosylius explained. “Representing robot actions and observations together as RGB images enables us to teach robots various tasks with fewer demonstrations and do so with improved spatial generalization capabilities.”

For a robot to learn to complete a new task, it first needs to predict the actions it should perform based on the images captured by its sensors. The R&D method essentially allows robots to learn this mapping between images and actions more efficiently.

“As hinted by its name, our method has two main components,” Vosylius said. “First, we use virtual renders of the robot, allowing the robot to ‘imagine’ its actions in the same way it sees the environment. We do so by rendering the robot in the configuration it would end up in if it were to take certain actions.

“Second, we use a learned diffusion process that iteratively refines these imagined actions, ultimately resulting in a sequence of actions the robot needs to take to complete the task.”
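The two components Vosylius describes can be illustrated with a toy render-then-refine loop. This is only a minimal sketch under loud assumptions, not the authors' implementation: the "robot" is a single red pixel drawn into the image, the target object is a single green pixel, and a hand-written function (`toy_denoiser`, a hypothetical stand-in) replaces the learned diffusion network. All function names and parameters are invented for illustration.

```python
import numpy as np

def render_gripper(obs, action):
    """Draw a hypothetical one-pixel gripper marker (red channel) at the
    pixel position given by `action` = (row, col)."""
    img = obs.copy()
    limits = np.array(obs.shape[:2]) - 1
    r, c = np.clip(np.round(action).astype(int), 0, limits)
    img[r, c, 0] = 1.0
    return img

def toy_denoiser(rendered):
    """Stand-in for a learned network (assumption): read the target (green)
    and the rendered gripper (red) from the image and return the
    correction between them, in pixels."""
    target = np.argwhere(rendered[..., 1] > 0.5).mean(axis=0)
    gripper = np.argwhere(rendered[..., 0] > 0.5).mean(axis=0)
    return target - gripper

def refine_action(obs, steps=20, step_size=0.5, seed=0):
    """Start from a random action and refine it iteratively, re-rendering
    the imagined gripper into the observation at every step."""
    rng = np.random.default_rng(seed)
    action = rng.uniform(0, obs.shape[0] - 1, size=2)
    for _ in range(steps):
        rendered = render_gripper(obs, action)
        action = action + step_size * toy_denoiser(rendered)
    return action

# A blank 32x32 RGB observation with one green "object" pixel.
obs = np.zeros((32, 32, 3))
obs[20, 7, 1] = 1.0
final_action = refine_action(obs)
```

Because the action and the observation live in the same image, the correction can be read directly from pixels. In a real system the hand-written rule would be replaced by a trained model, and the marker by a full render of the robot.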

Using widely available 3D models of robots and rendering techniques, R&D can greatly simplify the acquisition of new skills while also significantly reducing training data requirements. The researchers evaluated their method in a series of simulations and found that it improved the generalization capabilities of robotic policies.
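To give a rough sense of how a candidate robot pose can be placed in image space, the sketch below projects a 3D point in the camera frame to pixel coordinates using the standard pinhole camera model. It is an illustrative assumption rather than the paper's rendering pipeline: the function name and the intrinsic parameters (`fx`, `fy`, `cx`, `cy`) are hypothetical, and a full renderer would project an entire 3D robot model rather than one point.

```python
import numpy as np

def project_to_pixel(point_cam, fx, fy, cx, cy):
    """Project a 3D point (x, y, z) in the camera frame, in meters,
    to pixel coordinates (u, v) with a pinhole camera model."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    return np.array([fx * x / z + cx, fy * y / z + cy])

# Hypothetical gripper position 0.5 m in front of a 640x480 camera.
pixel = project_to_pixel(
    np.array([0.1, -0.05, 0.5]), fx=600.0, fy=600.0, cx=320.0, cy=240.0
)
```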

They also showcased their method’s capabilities in effectively tackling six everyday tasks using a real robot. These tasks included putting down the toilet seat, sweeping a cupboard, opening a box, placing an apple in a drawer, and opening and closing a drawer.

“The fact that using virtual renders of the robot to represent its actions leads to increased data efficiency is really exciting,” Vosylius said. “This means that by cleverly representing robot actions, we can significantly reduce the data required to train robots, ultimately reducing the labor-intensive need to collect extensive amounts of demonstrations.”

In the future, the method introduced by this team of researchers could be tested further and applied to other tasks that robots could tackle. In addition, the researchers’ promising results could inspire the development of similar approaches to simplify the training of algorithms for robotics applications.

“The ability to represent robot actions within images opens exciting possibilities for future research,” Vosylius added. “I’m particularly excited about combining this approach with powerful image foundation models trained on massive internet data. This could allow robots to leverage the general knowledge captured by these models while still being able to reason about low-level robot actions.”

More information:

Vitalis Vosylius et al, Render and Diffuse: Aligning Image and Action Spaces for Diffusion-based Behaviour Cloning, arXiv (2024). DOI: 10.48550/arxiv.2405.18196

© 2024 Science X Network

Citation: A simpler method to teach robots new skills (2024, June 17), retrieved 18 June 2024 from https://techxplore.com/information/2024-06-simpler-method-robots-skills.html