To best assist humans in real-world settings, robots should be able to continuously acquire useful new skills in dynamic and rapidly changing environments. Currently, however, most robots can only tackle tasks that they have been previously trained on and can only acquire new capabilities after further training.

Researchers at the University of Washington and the Massachusetts Institute of Technology (MIT) recently introduced a new approach that allows robots to learn new skills while navigating changing environments. This approach, presented at the 7th Conference on Robot Learning (CoRL), uses reinforcement learning to train robots using human feedback and information gathered while exploring their surroundings.

“The idea for this paper came from another work we published recently,” Max Balsells, co-author of the paper, told Tech Xplore. The current paper is available on the arXiv preprint server.

“In our previous study, we explored how to use crowdsourced (potentially inaccurate) human feedback gathered from hundreds of people around the world to teach a robot how to perform certain tasks without relying on extra information, as is the case in most of the previous work in this field.”

While Balsells and his colleagues attained promising results in their previous study, the method they proposed had to be constantly reset to teach robots new skills. In other words, each time the robot tried to complete a task, its surroundings and settings would return to how they were before the trial.

“Having to reset the scene is an obstacle if we want robots to learn any task with as little human effort as possible,” Balsells said. “As part of our recent study, we thus set out to fix that issue, allowing robots to learn in a changing environment, still just from human feedback, as well as random and guided exploration.”

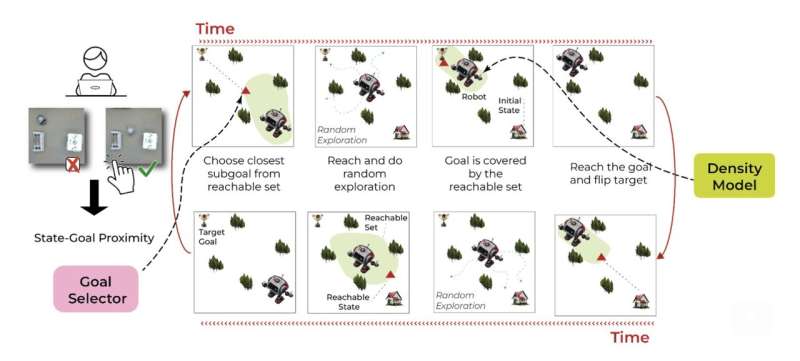

The new method developed by Balsells and his colleagues has three key components, dubbed the policy, the goal selector and the density model, each supported by a different machine-learning technique. The first model essentially tries to determine what the robot needs to do to get to a specific location.

“The goal of the policy model is to know which actions the robot has to take to arrive at a certain scenario from where it currently is,” Marcel Torne, co-author of the paper, explained. “The way this first model learns that is by seeing how the environment changed after the robot took an action. For example, by looking at where the robot or the objects of the room are after taking some actions.”

Essentially, the first model is designed to identify the actions that the robot will need to take to reach a specific target location or objective. In contrast, the second model (i.e., the goal selector) guides the robot while it is still learning, indicating the moments when it is closer to achieving a set goal.

“The objective of the goal selector is to tell in which cases the robot was closer to achieving the task,” Balsells said. “That way, we can use this model to guide the robot by commanding the scenarios that it has already seen, in which it was closer to achieving the task. From there, the robot can just do random actions to explore more of that part of the environment. If we did not have this model, the robot would not do meaningful things, making it very hard for the first model to learn anything. This model learns that from human feedback.”

The team’s approach ensures that as a robot moves in its surroundings, it continuously relays the scenarios it encounters to a specific website. Crowdsourced human users then browse through these scenarios and the robot’s corresponding actions, letting the model know when the robot is closer to achieving a set goal.
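As a rough illustration of how noisy crowdsourced judgments could be turned into a signal for the goal selector, the Python sketch below tallies pairwise votes into a per-state score. It is not the researchers' implementation: the article does not say how feedback is encoded or how the model is trained, so the pairwise vote format, the `score_states` function and the state names are all assumptions made for this example.

```python
from collections import defaultdict


def score_states(comparisons):
    """Turn pairwise votes into a per-state score in [0, 1].

    comparisons: iterable of (state_a, state_b, winner) tuples, where
    winner is the state an annotator judged closer to the goal.
    This vote format is an assumption, not taken from the paper.
    """
    wins = defaultdict(int)
    seen = defaultdict(int)
    for a, b, winner in comparisons:
        if winner not in (a, b):
            continue  # skip malformed votes
        seen[a] += 1
        seen[b] += 1
        wins[winner] += 1
    # Fraction of comparisons each state won.
    return {state: wins[state] / seen[state] for state in seen}


# Hypothetical votes from several annotators; one of them is wrong.
votes = [
    ("far", "mid", "mid"),
    ("mid", "near", "near"),
    ("far", "near", "near"),
    ("far", "mid", "far"),  # noisy vote
    ("far", "mid", "mid"),
]
scores = score_states(votes)
ranking = sorted(scores, key=scores.get, reverse=True)
```

Because each score averages over several votes, a single mistaken annotator shifts the numbers without changing the ranking here, which is the property that makes potentially inaccurate feedback usable.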

“Finally, the goal of the third model (i.e., the density model) is to know whether the robot already knows how to get to a certain scenario from where it currently is,” Balsells said. “This model is important to make sure that the second model is guiding the robot to scenarios that the robot can get to. This model is trained on data representing the progression from different scenarios to the scenarios in which the robot ended up.”

The third model within the researchers’ framework basically ensures that the second model only guides the robot to accessible locations that it knows how to reach. This promotes learning through exploration, while reducing the risk of incidents and errors.
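The interplay between the goal selector and the density model can be sketched in a few lines of Python. This is only a toy under stated assumptions: `select_goal`, the `min_density` threshold and the numeric values are hypothetical, and in the actual system both scoring functions would be learned models rather than lookup tables.

```python
def select_goal(visited, preference_score, reach_density, min_density=0.1):
    """Pick the most promising visited state the robot can likely reach.

    preference_score: stand-in for the goal selector (higher means closer
        to completing the task, as judged from human feedback).
    reach_density: stand-in for the density model (higher means the robot
        more reliably knows how to get there from its current state).
    min_density: hypothetical reachability threshold.
    """
    reachable = [s for s in visited if reach_density(s) >= min_density]
    if not reachable:
        return None  # nothing reachable yet, so fall back to exploration
    return max(reachable, key=preference_score)


# Toy values: "near" looks best to humans but is not yet reachable.
preference = {"far": 0.25, "mid": 0.5, "near": 1.0}
density = {"far": 0.9, "mid": 0.6, "near": 0.02}

goal = select_goal(list(preference), preference.get, density.get)
no_goal = select_goal(list(preference), preference.get, density.get,
                      min_density=0.95)
```

In this toy run the robot is sent to `"mid"` rather than `"near"`: the preferred state is filtered out until the robot has learned how to get there, which mirrors why no scene reset is needed.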

“The goal selector guides the robot to make sure that it goes to interesting places,” Torne said. “Notably, the policy and density models learn just by looking at what happens around, that is, how the location of the robot and the objects change as the robot interacts. On the other hand, the second model is trained using human feedback.”

Notably, the new approach proposed by Balsells and his colleagues only relies on human feedback to guide the robot in its learning, rather than to specifically demonstrate how to perform tasks. It thus does not require extensive datasets containing footage of demonstrations and can promote flexible learning with less human effort.

“By using the third model to know which scenarios the robot can actually get to, we do not have to reset anything, the robot can learn continuously even if some objects are no longer at the same location,” Torne said. “The most important aspect of our work is that it allows anyone to teach a robot how to solve a certain task just by letting it run on its own while connecting it to the internet, so that people around the world tell it from time to time in which moments it was closer to achieving the task.”

The approach introduced by this team of researchers could inform the development of more reinforcement learning-based frameworks that enable robots to improve their skills and learn in dynamic real-world environments. Balsells, Torne and their colleagues now plan to expand their method, providing the robot with some ‘primitives’ or basic guidelines on how to perform specific skills.

“For example, right now the robot learns which motors it has to move at every time, but we could program how the robot could move to a certain point of a room, and then the robot would not need to learn that; it would just need to know where to move to,” Balsells and Torne added.

“Another idea that we want to explore in our next studies is the use of big pre-trained models already trained for a bunch of robotics tasks (e.g., ChatGPT for robotics), adapting them to specific tasks in the real world using our method. This could allow anyone to easily and quickly teach robots to acquire new skills, without having to retrain them from scratch.”

More information:

Max Balsells et al, Autonomous Robotic Reinforcement Learning with Asynchronous Human Feedback, arXiv (2023). DOI: 10.48550/arxiv.2310.20608


© 2023 Science X Network

Citation: An approach that allows robots to learn in changing environments from human feedback and exploration (2023, November 28), retrieved 28 November 2023 from https://techxplore.com/information/2023-11-approach-robots-environments-human-feedback.html
