Today, we’re happy to announce the preview of Amazon SageMaker Profiler, a capability of Amazon SageMaker that provides a detailed view into the AWS compute resources provisioned while training deep learning models on SageMaker. With SageMaker Profiler, you can track all activities on CPUs and GPUs, such as CPU and GPU utilizations, kernel runs on GPUs, kernel launches on CPUs, sync operations, memory operations across GPUs, latencies between kernel launches and corresponding runs, and data transfer between CPUs and GPUs. In this post, we walk you through the capabilities of SageMaker Profiler.

SageMaker Profiler provides Python modules for annotating PyTorch or TensorFlow training scripts and activating SageMaker Profiler. It also offers a user interface (UI) that visualizes the profile, a statistical summary of profiled events, and the timeline of a training job for tracking and understanding the time relationship of the events between GPUs and CPUs.

The need for profiling training jobs

With the rise of deep learning (DL), machine learning (ML) has become compute and data intensive, typically requiring multi-node, multi-GPU clusters. As state-of-the-art models grow in size in the order of trillions of parameters, their computational complexity and cost also increase rapidly. ML practitioners have to cope with common challenges of efficient resource utilization when training such large models. This is particularly evident in large language models (LLMs), which typically have billions of parameters and therefore require large multi-node GPU clusters in order to train them efficiently.

When training these models on large compute clusters, we can encounter compute resource optimization challenges such as I/O bottlenecks, kernel launch latencies, memory limits, and low resource utilizations. If the training job configuration is not optimized, these challenges can result in inefficient hardware utilization and longer training times or incomplete training runs, which increase the overall costs and timelines for the project.

Prerequisites

The following are the prerequisites to start using SageMaker Profiler:

A SageMaker domain in your AWS account – For instructions on setting up a domain, see Onboard to Amazon SageMaker Domain using quick setup. You also need to add domain user profiles for individual users to access the SageMaker Profiler UI application. For more information, see Add and remove SageMaker Domain user profiles.

Permissions – The following list is the minimum set of permissions that should be assigned to the execution role for using the SageMaker Profiler UI application:

sagemaker:CreateApp

sagemaker:DeleteApp

sagemaker:DescribeTrainingJob

sagemaker:SearchTrainingJobs

s3:GetObject

s3:ListBucket
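As a rough illustration, the permissions listed above could be collected into an IAM policy document as in the following sketch. The helper name build_profiler_ui_policy and the wildcard Resource value are assumptions made for this example only; in practice you would likely scope the resources down to your own training jobs and S3 buckets.

```python
import json

# Actions copied from the permission list above.
PROFILER_UI_ACTIONS = [
    "sagemaker:CreateApp",
    "sagemaker:DeleteApp",
    "sagemaker:DescribeTrainingJob",
    "sagemaker:SearchTrainingJobs",
    "s3:GetObject",
    "s3:ListBucket",
]


def build_profiler_ui_policy(resource="*"):
    """Return an IAM policy document (as a dict) allowing the listed actions.

    The "*" default is a placeholder assumption, not a recommendation.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": list(PROFILER_UI_ACTIONS),
                "Resource": resource,
            }
        ],
    }


# Serialize the policy so it can be pasted into the IAM console or a template.
policy_json = json.dumps(build_profiler_ui_policy(), indent=2)
print(policy_json)
```

You can attach the resulting JSON to the execution role used by the domain user profile.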

Prepare and run a training job with SageMaker Profiler

To start capturing kernel runs on GPUs while the training job is running, modify your training script using the SageMaker Profiler Python modules. Import the library and add the start_profiling() and stop_profiling() methods to define the beginning and the end of profiling. You can also use optional custom annotations to add markers in the training script to visualize hardware activities during particular operations in each step.

There are two approaches that you can take to profile your training scripts with SageMaker Profiler. The first approach is based on profiling full functions; the second approach is based on profiling specific code lines in functions.

To profile by functions, use the context manager smppy.annotate to annotate full functions. The context manager can wrap the training loop as well as full functions in each iteration.
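As a minimal sketch of the function-level approach, the following toy loop places smppy.annotate around each step and around the forward, loss, backward, and optimizer phases. It assumes that smppy exposes SMProfiler.instance(), Config, configure(), start_profiling(), and stop_profiling() as described in the SageMaker Profiler documentation, and that the "EnableCuda" setting is accepted; verify these against the documentation for your container. The smppy import is guarded so that the script also runs outside a SageMaker training container (where the annotations become no-ops), and the scalar model is only a placeholder for a real PyTorch or TensorFlow model.

```python
import contextlib

try:
    import smppy  # expected to be present in supported SageMaker training containers
except ImportError:
    smppy = None  # local fallback: annotations become no-ops


def annotate(name):
    """Return smppy.annotate(name) when smppy is available, else a no-op context."""
    if smppy is None:
        return contextlib.nullcontext()
    return smppy.annotate(name)


def train(num_steps):
    """Toy training loop: fit w so that w * 2.0 approximates 4.0."""
    profiler = None
    if smppy is not None:
        # Assumed activation sequence; check the SageMaker Profiler docs.
        profiler = smppy.SMProfiler.instance()
        config = smppy.Config()
        config.profiler = {"EnableCuda": "1"}
        profiler.configure(config)
        profiler.start_profiling()

    w, lr = 0.0, 0.1
    losses = []
    try:
        for step in range(num_steps):
            with annotate(f"step_{step}"):       # one marker per training step
                with annotate("Forward"):
                    pred = w * 2.0
                with annotate("Loss"):
                    loss = (pred - 4.0) ** 2
                with annotate("Backward"):
                    grad = 2.0 * (pred - 4.0) * 2.0
                with annotate("Optimizer"):
                    w -= lr * grad
            losses.append(loss)
    finally:
        if profiler is not None:
            profiler.stop_profiling()            # always close the profiling window
    return w, losses
```

The annotation names (step_N, Forward, Loss, Backward, Optimizer) are arbitrary labels; they are what you would later look for in the Profiler UI.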

You can also use smppy.annotation_begin() and smppy.annotation_end() to annotate specific lines of code in functions. For more information, refer to the documentation.
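The line-level approach might look like the following sketch, which annotates only one statement inside a function. It assumes that smppy.annotation_begin() returns a handle that is later passed to smppy.annotation_end(); treat that calling convention as an assumption and confirm it in the documentation. The wrapper functions, the normalize example, and the label "normalize_divide" are hypothetical, and the guarded import lets the code run where smppy is not installed.

```python
try:
    import smppy  # expected to be present in supported SageMaker training containers
except ImportError:
    smppy = None  # local fallback: annotations become no-ops


def annotation_begin(name):
    """Open a named annotation and return a handle for annotation_end."""
    if smppy is None:
        return name  # fallback handle is just the label
    return smppy.annotation_begin(name)


def annotation_end(handle):
    """Close an annotation previously opened with annotation_begin."""
    if smppy is not None:
        smppy.annotation_end(handle)


def normalize(values):
    """Scale values so they sum to 1, annotating only the division."""
    total = sum(values)  # not annotated
    handle = annotation_begin("normalize_divide")
    try:
        scaled = [v / total for v in values]  # only this line is annotated
    finally:
        annotation_end(handle)  # close the marker even if the division fails
    return scaled
```

Compared with the context manager, explicit begin and end calls let you mark a region that does not align with a function or block boundary.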

Configure the SageMaker training job launcher

After you’re done annotating and setting up the profiler initiation modules, save the training script and prepare the SageMaker framework estimator for training using the SageMaker Python SDK.

Set up a profiler_config object using the ProfilerConfig and Profiler modules.

Create a SageMaker estimator with the profiler_config object created in the previous step, for example a PyTorch estimator.

If you want to create a TensorFlow estimator, import sagemaker.tensorflow.TensorFlow instead, and specify one of the TensorFlow versions supported by SageMaker Profiler. For more information about supported frameworks and instance types, see Supported frameworks.

Start the training job by running the fit method.
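The three launcher steps above (profiler configuration, estimator creation, and fit) could be sketched as follows. This is an illustration under assumptions rather than a definitive recipe: the profile_params keyword and the cpu_profiling_duration value reflect our understanding of the SageMaker Python SDK and should be checked against your installed version, and the entry point train.py, the base job name, and the helper function names are hypothetical. The SDK imports live inside launch_training_job so that the argument-building helper can be used and tested without AWS credentials.

```python
def build_estimator_kwargs(role, region, profiler_config, instance_count=1):
    """Collect PyTorch estimator arguments; pure Python, makes no AWS calls."""
    image_uri = (
        f"763104351884.dkr.ecr.{region}.amazonaws.com/"
        "pytorch-training:2.0.0-gpu-py310-cu118-ubuntu20.04-sagemaker"
    )
    return {
        "framework_version": "2.0.0",
        "image_uri": image_uri,
        "role": role,
        "entry_point": "train.py",                   # hypothetical script name
        "base_job_name": "sagemaker-profiler-demo",  # hypothetical job prefix
        "instance_count": instance_count,
        "instance_type": "ml.p4d.24xlarge",
        "profiler_config": profiler_config,
    }


def launch_training_job(role, region):
    """Create the estimator and start training.

    Requires the sagemaker SDK and AWS credentials, so it is not called here.
    """
    from sagemaker import Profiler, ProfilerConfig
    from sagemaker.pytorch import PyTorch

    # Assumed keyword name and duration (in seconds); verify for your SDK version.
    profiler_config = ProfilerConfig(
        profile_params=Profiler(cpu_profiling_duration=3600)
    )
    estimator = PyTorch(**build_estimator_kwargs(role, region, profiler_config))
    estimator.fit(wait=False)  # return immediately; the job runs asynchronously
    return estimator
```

From a SageMaker notebook, you might call launch_training_job(sagemaker.get_execution_role(), "us-east-1"), substituting your own role and Region.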

Launch the SageMaker Profiler UI

When the training job is complete, you can launch the SageMaker Profiler UI to visualize and explore the profile of the training job. You can access the SageMaker Profiler UI application through the SageMaker Profiler landing page on the SageMaker console or through the SageMaker domain.

To launch the SageMaker Profiler UI application on the SageMaker console, complete the following steps:

On the SageMaker console, choose Profiler in the navigation pane.

Under Get started, select the domain in which you want to launch the SageMaker Profiler UI application.

If your user profile only belongs to one domain, you will not see the option for selecting a domain.

Select the user profile for which you want to launch the SageMaker Profiler UI application.

If there is no user profile in the domain, choose Create user profile. For more information about creating a new user profile, see Add and Remove User Profiles.

Choose Open Profiler.

You can also launch the SageMaker Profiler UI from the domain details page.

Gain insights from the SageMaker Profiler

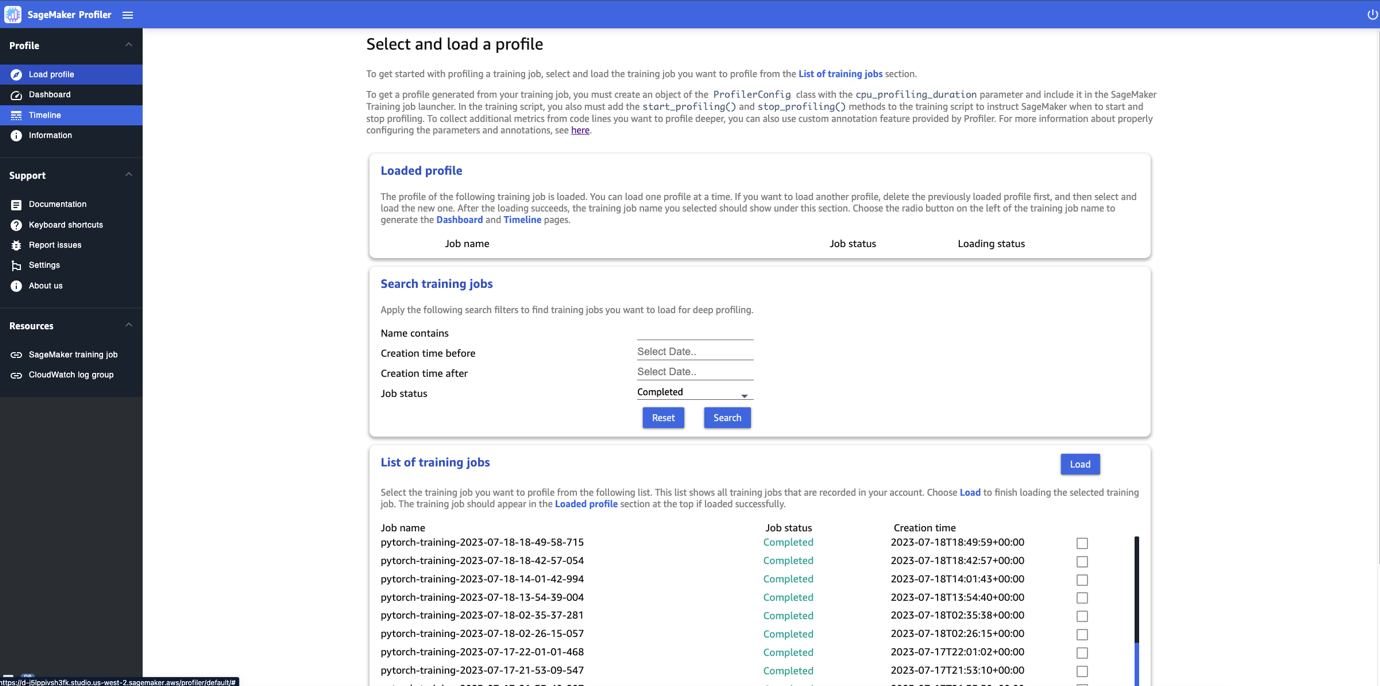

When you open the SageMaker Profiler UI, the Select and load a profile page opens, as shown in the following screenshot.

You can view a list of all the training jobs that have been submitted to SageMaker Profiler and search for a particular training job by its name, creation time, and run status (In Progress, Completed, Failed, Stopped, or Stopping). To load a profile, select the training job you want to view and choose Load. The job name should appear in the Loaded profile section at the top.

Choose the job name to generate the dashboard and timeline. Note that when you choose the job, the UI automatically opens the dashboard. You can load and visualize one profile at a time. To load another profile, you must first unload the previously loaded profile. To unload a profile, choose the trash bin icon in the Loaded profile section.

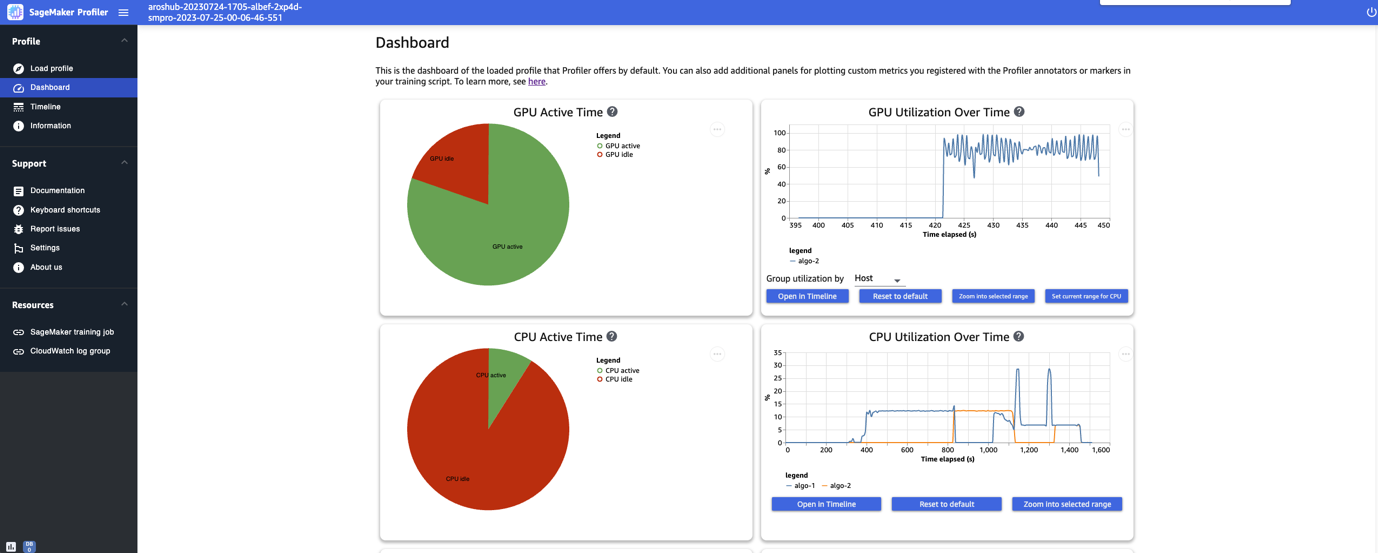

For this post, we view the profile of an ALBEF training job on two ml.p4d.24xlarge instances.

After you finish loading and selecting the training job, the UI opens the Dashboard page, as shown in the following screenshot.

You can see the plots for key metrics, namely the GPU active time, GPU utilization over time, CPU active time, and CPU utilization over time. The GPU active time pie chart shows the percentage of GPU active time vs. GPU idle time, which enables us to check if the GPUs are more active than idle throughout the entire training job. The GPU utilization over time timeline graph shows the average GPU utilization rate over time per node, aggregating all the nodes in a single chart. You can check if the GPUs have an unbalanced workload, under-utilization issues, bottlenecks, or idle issues during certain time intervals. For more details on interpreting these metrics, refer to the documentation.

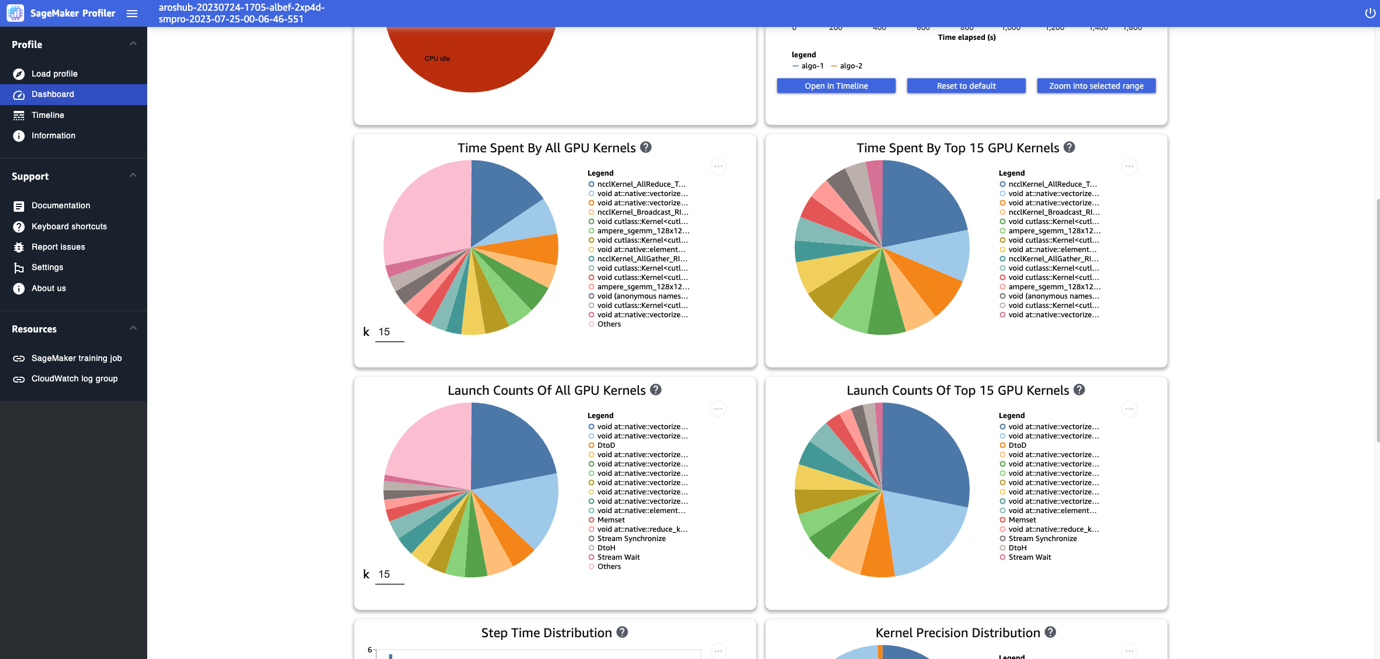

The dashboard provides you with additional plots, including time spent by all GPU kernels, time spent by the top 15 GPU kernels, launch counts of all GPU kernels, and launch counts of the top 15 GPU kernels, as shown in the following screenshot.

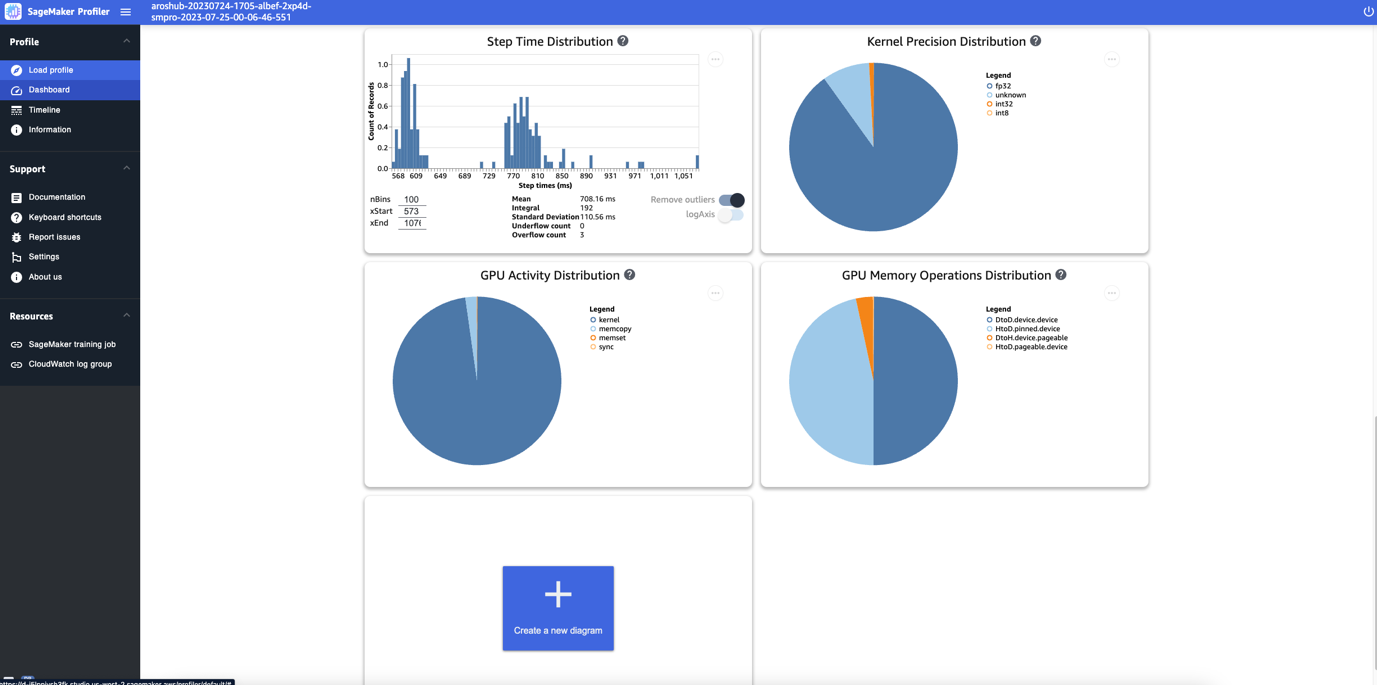

Lastly, the dashboard enables you to visualize additional metrics, such as the step time distribution, which is a histogram that shows the distribution of step durations on GPUs, and the kernel precision distribution pie chart, which shows the percentage of time spent on running kernels in different data types such as FP32, FP16, INT32, and INT8.

You can also obtain a pie chart on the GPU activity distribution that shows the percentage of time spent on GPU activities, such as running kernels, memory (memcpy and memset), and synchronization (sync). You can visualize the percentage of time spent on GPU memory operations from the GPU memory operations distribution pie chart.

You can also create your own histograms based on a custom metric that you annotated manually as described earlier in this post. When adding a custom annotation to a new histogram, select or enter the name of the annotation you added in the training script.

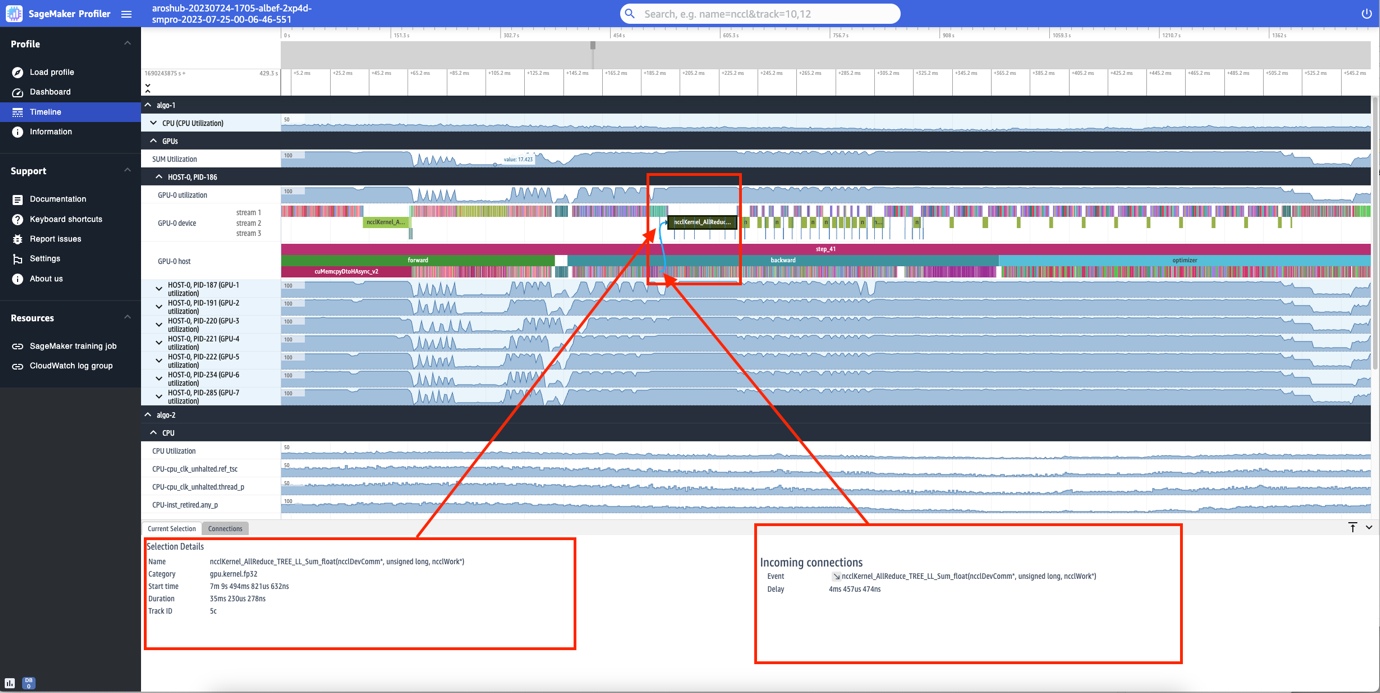

Timeline interface

The SageMaker Profiler UI also includes a timeline interface, which provides you with a detailed view into the compute resources at the level of operations and kernels scheduled on the CPUs and run on the GPUs. The timeline is organized in a tree structure, giving you information from the host level to the device level, as shown in the following screenshot.

For each CPU, you can track the CPU performance counters, such as clk_unhalted_ref.tsc and itlb_misses.miss_causes_a_walk. For each GPU on the 2x p4d.24xlarge instance, you can see a host timeline and a device timeline. Kernel launches are on the host timeline and kernel runs are on the device timeline.

You can also zoom in to the individual steps. In the following screenshot, we have zoomed in to step_41. The timeline strip selected in the following screenshot is the AllReduce operation, an essential communication and synchronization step in distributed training, run on GPU-0. In the screenshot, note that the kernel launch in the GPU-0 host connects to the kernel run in the GPU-0 device stream 1, indicated with the arrow in cyan.

Availability and considerations

SageMaker Profiler is available in PyTorch (version 2.0.0 and 1.13.1) and TensorFlow (version 2.12.0 and 2.11.1). The following table provides the links to the supported AWS Deep Learning Containers for SageMaker.

| Framework | Version | AWS DLC Image URI |
| --- | --- | --- |
| PyTorch | 2.0.0 | 763104351884.dkr.ecr.<region>.amazonaws.com/pytorch-training:2.0.0-gpu-py310-cu118-ubuntu20.04-sagemaker |
| PyTorch | 1.13.1 | 763104351884.dkr.ecr.<region>.amazonaws.com/pytorch-training:1.13.1-gpu-py39-cu117-ubuntu20.04-sagemaker |
| TensorFlow | 2.12.0 | 763104351884.dkr.ecr.<region>.amazonaws.com/tensorflow-training:2.12.0-gpu-py310-cu118-ubuntu20.04-sagemaker |
| TensorFlow | 2.11.1 | 763104351884.dkr.ecr.<region>.amazonaws.com/tensorflow-training:2.11.1-gpu-py39-cu112-ubuntu20.04-sagemaker |
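If you select the container image programmatically, the table above can be expressed as a small lookup, as in the following sketch. The image tags are copied from the table; the dictionary and the dlc_image_uri helper are hypothetical names introduced for this example, and the Region placeholder in each URI is filled in with the AWS Region you pass.

```python
# Image tags copied from the table of supported AWS Deep Learning Containers.
DLC_IMAGE_TAGS = {
    ("pytorch", "2.0.0"): "pytorch-training:2.0.0-gpu-py310-cu118-ubuntu20.04-sagemaker",
    ("pytorch", "1.13.1"): "pytorch-training:1.13.1-gpu-py39-cu117-ubuntu20.04-sagemaker",
    ("tensorflow", "2.12.0"): "tensorflow-training:2.12.0-gpu-py310-cu118-ubuntu20.04-sagemaker",
    ("tensorflow", "2.11.1"): "tensorflow-training:2.11.1-gpu-py39-cu112-ubuntu20.04-sagemaker",
}


def dlc_image_uri(framework, version, region):
    """Return the image URI for a supported framework/version in a Region."""
    key = (framework.lower(), version)
    if key not in DLC_IMAGE_TAGS:
        raise ValueError(f"Unsupported framework/version: {framework} {version}")
    return f"763104351884.dkr.ecr.{region}.amazonaws.com/{DLC_IMAGE_TAGS[key]}"
```

For example, dlc_image_uri("PyTorch", "2.0.0", "us-east-1") returns the first URI in the table with us-east-1 as the Region, and an unsupported combination raises ValueError instead of producing a URI that does not exist.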

SageMaker Profiler is currently available in the following Regions: US East (Ohio, N. Virginia), US West (Oregon), and Europe (Frankfurt, Ireland).

SageMaker Profiler is available in the training instance types ml.p4d.24xlarge, ml.p3dn.24xlarge, and ml.g4dn.12xlarge.

For the full list of supported frameworks and versions, refer to the documentation.

SageMaker Profiler incurs charges after the SageMaker Free Tier or the free trial period of the feature ends. For more information, see Amazon SageMaker Pricing.

Performance of SageMaker Profiler

We compared the overhead of SageMaker Profiler against various open-source profilers. The baseline used for the comparison was obtained from running the training job without a profiler.

Our key finding revealed that SageMaker Profiler generally resulted in a shorter billable training duration because it had less overhead time on the end-to-end training runs. It also generated less profiling data (up to 10 times less) when compared against open-source alternatives. The smaller profiling artifacts generated by SageMaker Profiler require less storage, thereby also saving on costs.

Conclusion

SageMaker Profiler enables you to get detailed insights into the utilization of compute resources when training your deep learning models. This can help you resolve performance hotspots and bottlenecks to ensure efficient resource utilization that would ultimately drive down training costs and reduce the overall training duration.

To get started with SageMaker Profiler, refer to the documentation.

About the Authors

Roy Allela is a Senior AI/ML Specialist Solutions Architect at AWS based in Munich, Germany. Roy helps AWS customers, from small startups to large enterprises, train and deploy large language models efficiently on AWS. Roy is passionate about computational optimization problems and improving the performance of AI workloads.

Sushant Moon is a Data Scientist at AWS, India, specializing in guiding customers through their AI/ML endeavors. With a diverse background spanning retail, finance, and insurance domains, he delivers innovative and tailored solutions. Beyond his professional life, Sushant finds rejuvenation in swimming and seeks inspiration from his travels to diverse locales.

Diksha Sharma is an AI/ML Specialist Solutions Architect in the Worldwide Specialist Organization. She works with public sector customers to help them architect efficient, secure, and scalable machine learning applications including generative AI solutions on AWS. In her spare time, Diksha likes to read, paint, and spend time with her family.
