Google Adverts infrastructure runs on an inner knowledge warehouse referred to as Napa. Billions of reporting queries, which energy important dashboards utilized by promoting shoppers to measure marketing campaign efficiency, run on tables saved in Napa. These tables comprise information of adverts efficiency which can be keyed utilizing explicit prospects and the marketing campaign identifiers with which they’re related. Keys are tokens which can be used each to affiliate an adverts file with a specific consumer and marketing campaign (e.g., customer_id, campaign_id) and for environment friendly retrieval. A file incorporates dozens of keys, so shoppers use reporting queries to specify keys wanted to filter the information to grasp adverts efficiency (e.g., by area, system and metrics corresponding to clicks, and so on.). What makes this drawback difficult is that the information is skewed since queries require various ranges of effort to be answered and have stringent latency expectations. Particularly, some queries require the usage of hundreds of thousands of information whereas others are answered with only a few.

To this finish, in “Progressive Partitioning for Parallelized Question Execution in Napa”, offered at VLDB 2023, we describe how the Napa knowledge warehouse determines the quantity of machine sources wanted to reply reporting queries whereas assembly strict latency targets. We introduce a brand new progressive question partitioning algorithm that may parallelize question execution within the presence of complicated knowledge skews to carry out persistently effectively in a matter of some milliseconds. Lastly, we exhibit how Napa permits Google Adverts infrastructure to serve billions of queries every single day.

Question processing challenges

When a consumer inputs a reporting question, the principle problem is to find out tips on how to parallelize the question successfully. Napa’s parallelization method breaks up the question into even sections which can be equally distributed throughout accessible machines, which then course of these in parallel to considerably scale back question latency. That is achieved by estimating the variety of information related to a specified key, and assigning roughly equal quantities of labor to machines. Nonetheless, this estimation shouldn’t be good since reviewing all information would require the identical effort as answering the question. A machine that processes considerably greater than others would end in run-time skews and poor efficiency. Every machine additionally must have adequate work since pointless parallelism results in underutilized infrastructure. Lastly, parallelization needs to be a per question resolution that should be executed near-perfectly billions of instances, or the question could miss the stringent latency necessities.

The reporting question instance beneath extracts the information denoted by keys (i.e., customer_id and campaign_id) after which computes an mixture (i.e., SUM(value)) from an advertiser desk. On this instance the variety of information is just too giant to course of on a single machine, so Napa wants to make use of a subsequent key (e.g., adgroup_id) to additional break up the gathering of information in order that equal distribution of labor is achieved. It is very important be aware that at petabyte scale, the dimensions of the information statistics wanted for parallelization could also be a number of terabytes. Which means the issue isn’t just about amassing monumental quantities of metadata, but additionally how it’s managed.

SELECT customer_id, campaign_id, SUM(value)

FROM advertiser_table

WHERE customer_id in (1, 7, …, x )

AND campaign_id in (10, 20, …, y)

GROUP BY customer_id, campaign_id;

This reporting question instance extracts information denoted by keys (i.e., customer_id and campaign_id) after which computes an mixture (i.e., SUM(value)) from an advertiser desk. The question effort is set by the keys’ included within the question. Keys belonging to shoppers with bigger campaigns could contact hundreds of thousands of information because the knowledge quantity immediately correlates with the dimensions of the adverts marketing campaign. This disparity of matching information based mostly on keys displays the skewness in knowledge, which makes question processing a difficult drawback.

An efficient resolution minimizes the quantity of metadata wanted, focuses effort totally on the skewed a part of the important thing area to partition knowledge effectively, and works effectively throughout the allotted time. For instance, if the question latency is a couple of hundred milliseconds, partitioning ought to take now not than tens of milliseconds. Lastly, a parallelization course of ought to decide when it is reached the absolute best partitioning that considers question latency expectations. To this finish, we have now developed a progressive partitioning algorithm that we describe later on this article.

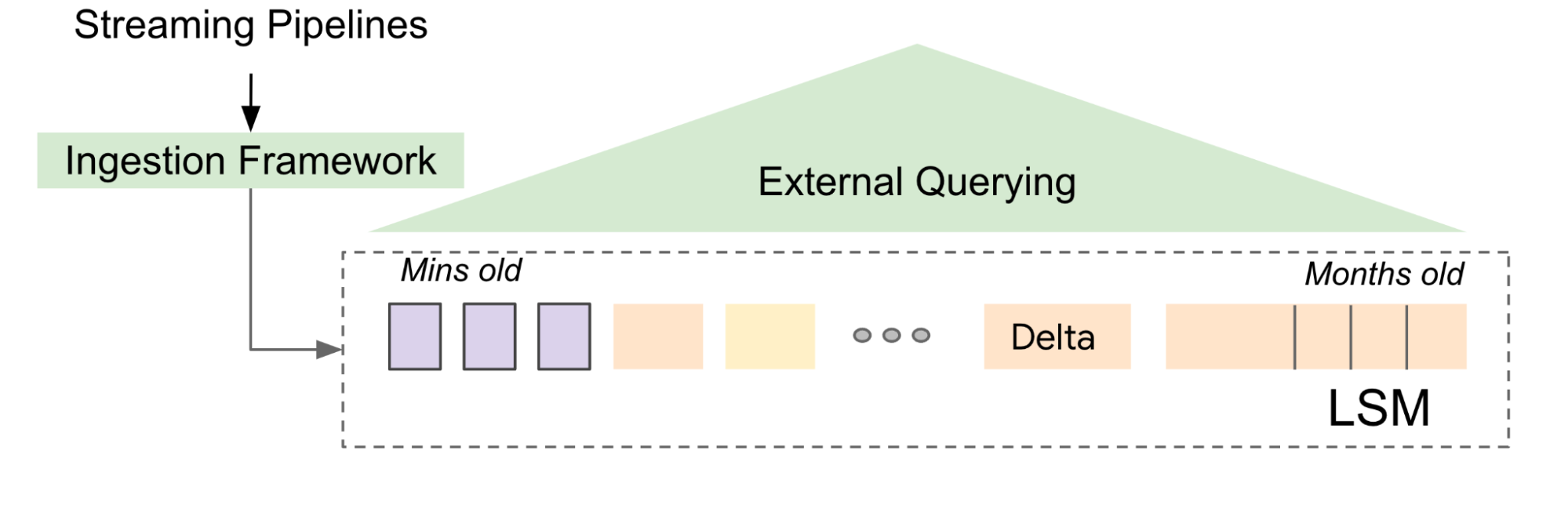

Managing the information deluge

Tables in Napa are continuously up to date, so we use log-structured merge forests (LSM tree) to prepare the deluge of desk updates. LSM is a forest of sorted knowledge that’s temporally organized with a B-tree index to help environment friendly key lookup queries. B-trees retailer abstract data of the sub-trees in a hierarchical method. Every B-tree node information the variety of entries current in every subtree, which aids within the parallelization of queries. LSM permits us to decouple the method of updating the tables from the mechanics of question serving within the sense that dwell queries go in opposition to a distinct model of the information, which is atomically up to date as soon as the following batch of ingest (referred to as delta) has been absolutely ready for querying.

The partitioning drawback

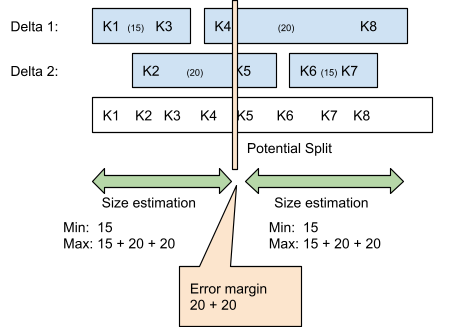

The info partitioning drawback in our context is that we have now a massively giant desk that’s represented as an LSM tree. Within the determine beneath, Delta 1 and a pair of every have their very own B-tree, and collectively signify 70 information. Napa breaks the information into two items, and assigns every bit to a distinct machine. The issue turns into a partitioning drawback of a forest of bushes and requires a tree-traversal algorithm that may shortly cut up the bushes into two equal components.

To keep away from visiting all of the nodes of the tree, we introduce the idea of “ok” partitioning. As we start chopping and partitioning the tree into two components, we preserve an estimate of how dangerous our present reply can be if we terminated the partitioning course of at that immediate. That is the yardstick of how shut we’re to the reply and is represented beneath by a complete error margin of 40 (at this level of execution, the 2 items are anticipated to be between 15 and 35 information in dimension, the uncertainty provides as much as 40). Every subsequent traversal step reduces the error estimate, and if the 2 items are roughly equal, it stops the partitioning course of. This course of continues till the specified error margin is reached, at which era we’re assured that the 2 items are roughly equal.

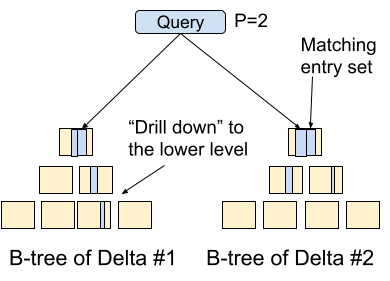

Progressive partitioning algorithm

Progressive partitioning encapsulates the notion of “ok” in that it makes a collection of strikes to scale back the error estimate. The enter is a set of B-trees and the aim is to chop the bushes into items of roughly equal dimension. The algorithm traverses one of many bushes (“drill down” within the determine) which leads to a discount of the error estimate. The algorithm is guided by statistics which can be saved with every node of the tree in order that it makes an knowledgeable set of strikes at every step. The problem right here is to resolve tips on how to direct effort in the absolute best method in order that the error sure reduces shortly within the fewest attainable steps. Progressive partitioning is conducive for our use-case because the longer the algorithm runs, the extra equal the items turn into. It additionally signifies that if the algorithm is stopped at any level, one nonetheless will get good partitioning, the place the standard corresponds to the time spent.

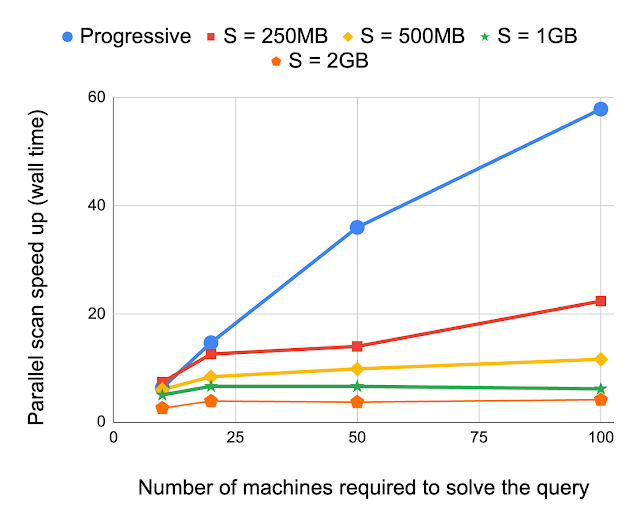

Prior work on this area makes use of a sampled desk to drive the partitioning course of, whereas the Napa method makes use of a B-tree. As talked about earlier, even only a pattern from a petabyte desk might be huge. A tree-based partitioning methodology can obtain partitioning rather more effectively than a sample-based method, which doesn’t use a tree group of the sampled information. We evaluate progressive partitioning with an alternate method, the place sampling of the desk at numerous resolutions (e.g., 1 file pattern each 250 MB and so forth) aids the partitioning of the question. Experimental outcomes present the relative speedup from progressive partitioning for queries requiring various numbers of machines. These outcomes exhibit that progressive partitioning is way quicker than current approaches and the speedup will increase as the dimensions of the question will increase.

Conclusion

Napa’s progressive partitioning algorithm effectively optimizes database queries, enabling Google Adverts to serve consumer reporting queries billions of instances every day. We be aware that tree traversal is a standard method that college students in introductory pc science programs use, but it additionally serves a important use-case at Google. We hope that this text will encourage our readers, because it demonstrates how easy methods and thoroughly designed knowledge constructions might be remarkably potent if used effectively. Try the paper and a current speak describing Napa to be taught extra.

Acknowledgements

This weblog publish describes a collaborative effort between Junichi Tatemura, Tao Zou, Jagan Sankaranarayanan, Yanlai Huang, Jim Chen, Yupu Zhang, Kevin Lai, Hao Zhang, Gokul Nath Babu Manoharan, Goetz Graefe, Divyakant Agrawal, Brad Adelberg, Shilpa Kolhar and Indrajit Roy.