
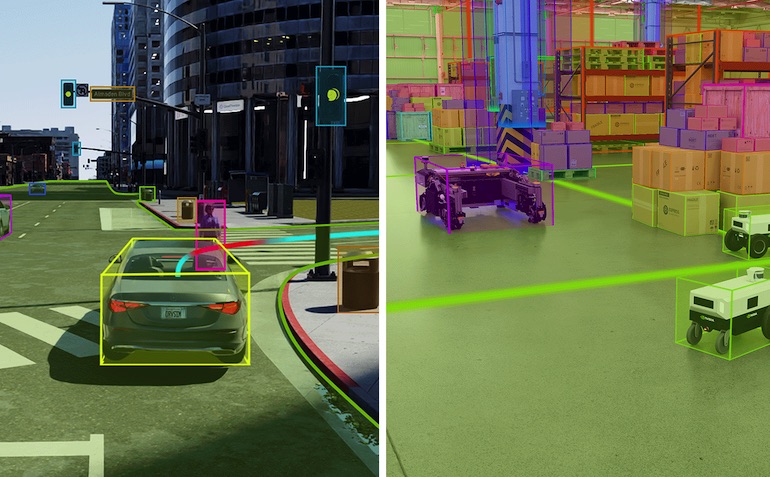

As shown at CVPR, Omniverse Cloud Sensor RTX microservices generate high-fidelity sensor simulation from an autonomous vehicle (left) and an autonomous mobile robot (right). Sources: NVIDIA, Fraunhofer IML (right)

NVIDIA Corp. today announced NVIDIA Omniverse Cloud Sensor RTX, a set of microservices that enable physically accurate sensor simulation to accelerate the development of all kinds of autonomous machines.

NVIDIA researchers are also presenting 50 research projects around visual generative AI at the Computer Vision and Pattern Recognition, or CVPR, conference this week in Seattle. They include new techniques to create and interpret images, videos, and 3D environments. In addition, the company said it has created its largest indoor synthetic dataset with Omniverse for CVPR’s AI City Challenge.

Sensors provide industrial manipulators, mobile robots, autonomous vehicles, humanoids, and smart spaces with the data they need to comprehend the physical world and make informed decisions.

NVIDIA said developers can use Omniverse Cloud Sensor RTX to test sensor perception and associated AI software in physically accurate, realistic virtual environments before real-world deployment. This can enhance safety while saving time and costs, it said.

“Developing safe and reliable autonomous machines powered by generative physical AI requires training and testing in physically based virtual worlds,” stated Rev Lebaredian, vice president of Omniverse and simulation technology at NVIDIA. “Omniverse Cloud Sensor RTX microservices will enable developers to easily build large-scale digital twins of factories, cities and even Earth, helping accelerate the next wave of AI.”

Omniverse Cloud Sensor RTX supports simulation at scale

Built on the OpenUSD framework and powered by NVIDIA RTX ray-tracing and neural-rendering technologies, Omniverse Cloud Sensor RTX combines real-world data from videos, cameras, radar, and lidar with synthetic data.

Omniverse Cloud Sensor RTX includes software application programming interfaces (APIs) to accelerate the development of autonomous machines for any industry, NVIDIA said.

Even for scenarios with limited real-world data, the microservices can simulate a broad range of activities, claimed the company. It cited examples such as whether a robotic arm is operating correctly, an airport luggage carousel is functional, a tree branch is blocking a roadway, a factory conveyor belt is in motion, or a robot or person is nearby.

Microservice to be available for AV development

CARLA, Foretellix, and MathWorks are among the first software developers with access to Omniverse Cloud Sensor RTX for autonomous vehicles (AVs). The microservices will also enable sensor makers to validate and integrate digital twins of their systems in virtual environments, reducing the time needed for physical prototyping, said NVIDIA.

Omniverse Cloud Sensor RTX will be generally available later this year. NVIDIA noted that its announcement coincided with its first-place win at the Autonomous Grand Challenge for End-to-End Driving at Scale at CVPR.

The NVIDIA researchers’ winning workflow can be replicated in high-fidelity simulated environments with Omniverse Cloud Sensor RTX. Developers can use it to test self-driving scenarios in physically accurate environments before deploying AVs in the real world, said the company.

Two of NVIDIA’s papers, one on the training dynamics of diffusion models and another on high-definition maps for autonomous vehicles, are finalists for the Best Paper Awards at CVPR.

The company also said its win for the End-to-End Driving at Scale track demonstrates its use of generative AI for comprehensive self-driving models. The winning submission outperformed more than 450 entries worldwide and received CVPR’s Innovation Award.

Collectively, the work introduces artificial intelligence models that could accelerate the training of robots for manufacturing, enable artists to more quickly realize their visions, and help healthcare workers process radiology reports.

“Artificial intelligence, and generative AI in particular, represents a pivotal technological advancement,” said Jan Kautz, vice president of learning and perception research at NVIDIA. “At CVPR, NVIDIA Research is sharing how we’re pushing the boundaries of what’s possible, from powerful image-generation models that could supercharge professional creators to autonomous driving software that could help enable next-generation self-driving cars.”

Foundation model eases object pose estimation

NVIDIA researchers at CVPR are also presenting FoundationPose, a foundation model for object pose estimation and tracking that can be instantly applied to new objects during inference, without the need for fine-tuning. The model uses either a small set of reference images or a 3D representation of an object to understand its shape. It set a new record on a benchmark for object pose estimation.

FoundationPose can then identify and track how that object moves and rotates in 3D across a video, even in poor lighting conditions or complex scenes with visual obstructions, explained NVIDIA.

Industrial robots could use FoundationPose to identify and track the objects they interact with. Augmented reality (AR) applications could also use it with AI to overlay visuals on a live scene.
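For readers unfamiliar with the term, an object's pose is commonly written as a rotation plus a translation that maps points on the object into the camera's coordinate frame. The sketch below illustrates only that general idea. It is not FoundationPose code, and the function names, the angle, and the distances are made up for illustration.

```python
import math

def rotation_z(angle_rad):
    """Return a 3x3 rotation matrix for a turn about the z-axis."""
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return [[c, -s, 0.0],
            [s, c, 0.0],
            [0.0, 0.0, 1.0]]

def apply_pose(rotation, translation, point):
    """Map a point from object coordinates to camera coordinates: R * p + t."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )

# Hypothetical pose: the object is turned 90 degrees about z
# and sits 2 m in front of the camera.
R = rotation_z(math.pi / 2)
t = (0.0, 0.0, 2.0)

corner = (0.1, 0.0, 0.0)  # a point on the object, in metres
corner_in_camera = apply_pose(R, t, corner)
```

Tracking an object across a video then amounts to estimating a new rotation and translation for each frame.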

NeRFDeformer transforms data from a single image

NVIDIA’s research includes a text-to-image model that can be customized to depict a specific object or character, a new model for object-pose estimation, a technique to edit neural radiance fields (NeRFs), and a visual language model that can understand memes. Additional papers introduce domain-specific innovations for industries including automotive, healthcare, and robotics.

A NeRF is an AI model that can render a 3D scene based on a series of 2D images taken from different positions in the environment. In robotics, NeRFs can generate immersive 3D renders of complex real-world scenes, such as a cluttered room or a construction site.

However, to make any changes, developers would need to manually define how the scene has transformed, or remake the NeRF entirely.

Researchers from the University of Illinois Urbana-Champaign and NVIDIA have simplified the process with NeRFDeformer. The method can transform an existing NeRF using a single RGB-D image, which is a combination of a normal photo and a depth map that captures how far each object in a scene is from the camera.

Researchers have simplified the process of generating a 3D scene from 2D images using NeRFs. Source: NVIDIA
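To show what the depth half of an RGB-D image contains, here is a minimal sketch of back-projecting a depth map into 3D points with a standard pinhole camera model. It is not the NeRFDeformer method. The function name, the toy depth values, and the camera intrinsics (fx, fy, cx, cy) are assumptions chosen for illustration, and depth is assumed to be measured along the camera's viewing axis in metres.

```python
def backproject(depth, fx, fy, cx, cy):
    """Turn a depth map (rows of depths in metres) into 3D camera-frame points.

    Pinhole model: pixel (u, v) with depth z maps to
    x = (u - cx) * z / fx and y = (v - cy) * z / fy.
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:  # treat non-positive depth as "no measurement"
                continue
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points

# Toy 2x2 depth map; the intrinsics are made-up values.
depth_map = [[1.0, 2.0],
             [0.0, 4.0]]
cloud = backproject(depth_map, fx=2.0, fy=2.0, cx=0.5, cy=0.5)
```

Pairing each point with the color of its pixel in the accompanying photo would give a colored point cloud of the scene as seen from that single viewpoint.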

JeDi model shows how to simplify image creation at CVPR

Creators typically use diffusion models to generate specific images based on text prompts. Prior research focused on the user training a model on a custom dataset, but the fine-tuning process can be time-consuming and inaccessible to general users, said NVIDIA.

JeDi, a paper by researchers from Johns Hopkins University, Toyota Technological Institute at Chicago, and NVIDIA, proposes a new technique that allows users to personalize the output of a diffusion model within a couple of seconds using reference images. The team found that the model outperforms existing methods.

NVIDIA added that JeDi can be combined with retrieval-augmented generation, or RAG, to generate visuals specific to a database, such as a brand’s product catalog.

JeDi is a new technique that allows users to easily personalize the output of a diffusion model within a couple of seconds using reference images, like an astronaut cat that can be placed in different environments. Source: NVIDIA

Visual language model helps AI get the picture

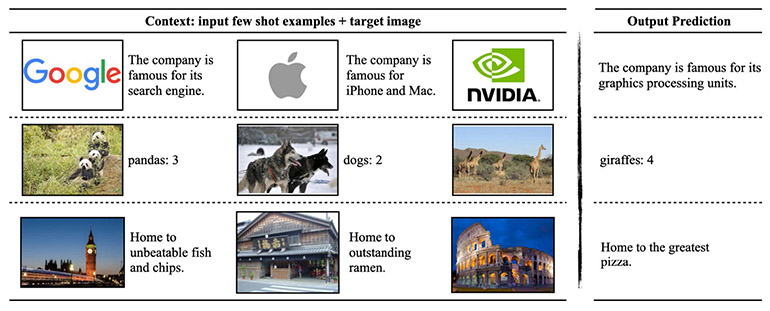

NVIDIA said it has collaborated with the Massachusetts Institute of Technology (MIT) to advance the state of the art for vision language models, which are generative AI models that can process videos, images, and text. The partners developed VILA, a family of open-source visual language models that they said outperforms prior neural networks on benchmarks that test how well AI models answer questions about images.

VILA’s pretraining process provided enhanced world knowledge, stronger in-context learning, and the ability to reason across multiple images, claimed the MIT and NVIDIA team.

The VILA model family can be optimized for inference using the NVIDIA TensorRT-LLM open-source library and can be deployed on NVIDIA GPUs in data centers, workstations, and edge devices.

VILA can understand memes and reason based on multiple images or video frames. Source: NVIDIA

Generative AI drives AV, smart city research at CVPR

NVIDIA Research has hundreds of scientists and engineers worldwide, with teams focused on topics including AI, computer graphics, computer vision, self-driving cars, and robotics. A dozen of the NVIDIA-authored CVPR papers focus on autonomous vehicle research.

“Producing and Leveraging Online Map Uncertainty in Trajectory Prediction,” a paper authored by researchers from the University of Toronto and NVIDIA, has been selected as one of 24 finalists for CVPR’s best paper award.

In addition, Sanja Fidler, vice president of AI research at NVIDIA, will present on vision language models at the Workshop on Autonomous Driving today.

NVIDIA has contributed to the CVPR AI City Challenge for the eighth consecutive year to help advance research and development for smart cities and industrial automation. The challenge’s datasets were generated using NVIDIA Omniverse, a platform of APIs, software development kits (SDKs), and services for building applications and workflows based on Universal Scene Description (OpenUSD).

AI City Challenge synthetic datasets span multiple environments generated by NVIDIA Omniverse, allowing hundreds of teams to test AI models in physical settings such as retail and warehouse environments to enhance operational efficiency. Source: NVIDIA

About the author

Isha Salian writes about deep learning, science and healthcare, among other topics, as part of NVIDIA’s corporate communications team. She first joined the company as an intern in summer 2015. Isha has a journalism M.A., as well as undergraduate degrees in communication and English, from Stanford.