We often do not think about it while doing it, but walking is a complex activity. Controlled by our nervous system, our bones, joints, muscles, tendons, ligaments and other connective tissues (i.e., the musculoskeletal system) must move in coordination and respond to unexpected changes or disturbances at varying speeds in a highly efficient manner. Replicating this in robotic technologies is no small feat.

Now, a research group from Tohoku University Graduate School of Engineering has replicated human-like variable-speed walking using a musculoskeletal model, one steered by a reflex control method reflective of the human nervous system. This breakthrough in biomechanics and robotics sets a new benchmark in understanding human movement and paves the way for innovative robotic technologies.

Details of their study were published in the journal PLOS Computational Biology.

“Our study has tackled the intricate challenge of replicating efficient walking at various speeds, a cornerstone of the human walking mechanism,” points out Associate Professor Dai Owaki, a co-author of the study along with Shunsuke Koseki and Professor Mitsuhiro Hayashibe. “These insights are pivotal in pushing the boundaries for understanding human locomotion, adaptation, and efficiency.”

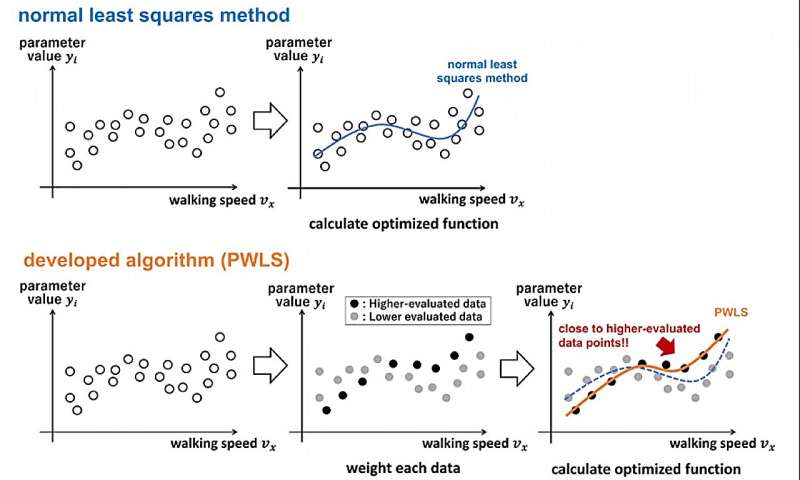

The achievement was made possible by an innovative algorithm. The algorithm goes beyond the conventional least squares method and helped devise a neural circuit model optimized for energy efficiency over diverse walking speeds.

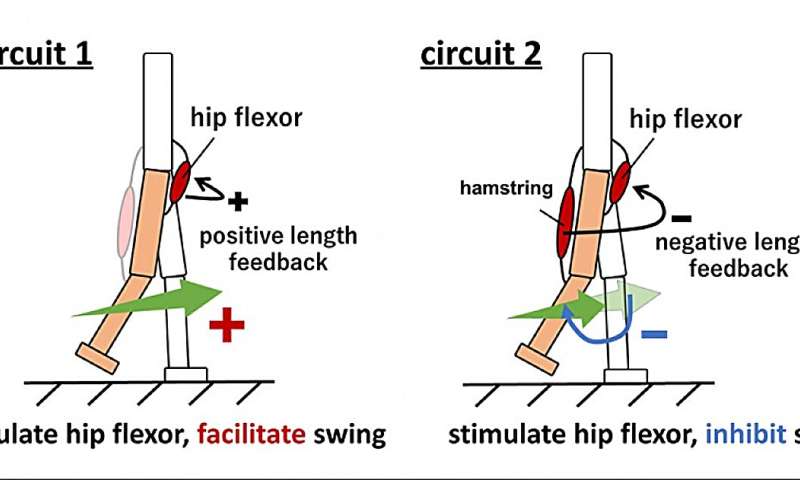

Extensive analysis of these neural circuits, particularly those controlling the muscles in the leg swing phase, unveiled critical elements of energy-saving walking strategies. These revelations enhance our grasp of the complex neural network mechanisms that underpin human gait and its effectiveness.

Owaki stresses that the knowledge uncovered in the study will help lay the groundwork for future technological advancements.

“The successful emulation of variable-speed walking in a musculoskeletal model, combined with sophisticated neural circuitry, marks a pivotal advancement in merging neuroscience, biomechanics, and robotics. It will revolutionize the design and development of high-performance bipedal robots, advanced prosthetic limbs, and state-of-the-art powered exoskeletons.”

Such developments could improve mobility solutions for individuals with disabilities and advance robotic technologies used in everyday life.

Looking ahead, Owaki and his team hope to further refine the reflex control framework to recreate a broader range of human walking speeds and movements. They also plan to apply the insights and algorithms from the study to create more adaptive and energy-efficient prosthetics, powered suits, and bipedal robots. This includes integrating the identified neural circuits into these applications to enhance their functionality and naturalness of movement.

More information:

Shunsuke Koseki et al, Identifying essential factors for energy-efficient walking control across a wide range of velocities in reflex-based musculoskeletal systems, PLOS Computational Biology (2024). DOI: 10.1371/journal.pcbi.1011771

Provided by Tohoku University

Citation: Biomechanics model that shows how humans efficiently walk at varied speeds could pave way for new robotics (2024, January 22), retrieved 22 January 2024 from https://techxplore.com/information/2024-01-biomechanics-humans-efficiently-varied-pave.html
