Amazon Redshift is the most popular cloud data warehouse, used by tens of thousands of customers to analyze exabytes of data every day. Many practitioners are extending these Redshift datasets at scale for machine learning (ML) using Amazon SageMaker, a fully managed ML service, with requirements to develop features offline in a code way or a low-code/no-code way, store featured data from Amazon Redshift, and make this happen at scale in a production environment.

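In this post, we show you three options to prepare Redshift source data at scale in SageMaker, including loading data from Amazon Redshift, performing feature engineering, and ingesting features into Amazon SageMaker Feature Store. The options are a serverless AWS Glue interactive session in SageMaker Studio (option A), a SageMaker Processing job with Spark (option B), and SageMaker Data Wrangler (option C).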

If you’re an AWS Glue user and would like to do the process interactively, consider option A. If you’re familiar with SageMaker and writing Spark code, option B could be your choice. If you want to do the process in a low-code/no-code way, you can follow option C.

Amazon Redshift uses SQL to analyze structured and semi-structured data across data warehouses, operational databases, and data lakes, using AWS-designed hardware and ML to deliver the best price-performance at any scale.

SageMaker Studio is the first fully integrated development environment (IDE) for ML. It provides a single web-based visual interface where you can perform all ML development steps, including preparing data and building, training, and deploying models.

AWS Glue is a serverless data integration service that makes it easy to discover, prepare, and combine data for analytics, ML, and application development. AWS Glue enables you to seamlessly collect, transform, cleanse, and prepare data for storage in your data lakes and data pipelines using a variety of capabilities, including built-in transforms.

Solution overview

The following diagram illustrates the solution architecture for each option.

Prerequisites

To continue with the examples in this post, you need to create the required AWS resources. To do this, we provide an AWS CloudFormation template to create a stack that contains the resources. When you create the stack, AWS creates a number of resources in your account:

A SageMaker domain, which includes an associated Amazon Elastic File System (Amazon EFS) volume

A list of authorized users and a variety of security, application, policy, and Amazon Virtual Private Cloud (Amazon VPC) configurations

A Redshift cluster

A Redshift secret

An AWS Glue connection for Amazon Redshift

An AWS Lambda function to set up required resources, execution roles, and policies

Make sure that you don’t already have two SageMaker Studio domains in the Region where you’re running the CloudFormation template. This is the maximum allowed number of domains in each supported Region.

Deploy the CloudFormation template

Complete the following steps to deploy the CloudFormation template:

Save the CloudFormation template sm-redshift-demo-vpc-cfn-v1.yaml locally.

On the AWS CloudFormation console, choose Create stack.

For Prepare template, select Template is ready.

For Template source, select Upload a template file.

Choose Choose File, navigate to the location on your computer where the CloudFormation template was downloaded, and choose the file.

Enter a stack name, such as Demo-Redshift.

On the Configure stack options page, leave everything as default and choose Next.

On the Review page, select I acknowledge that AWS CloudFormation might create IAM resources with custom names and choose Create stack.

You should see a new CloudFormation stack with the name Demo-Redshift being created. Wait for the status of the stack to be CREATE_COMPLETE (approximately 7 minutes) before moving on. You can navigate to the stack’s Resources tab to check what AWS resources were created.
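If you prefer to wait for the stack from code rather than watching the console, the following is a minimal sketch using the boto3 CloudFormation waiter. The helper name wait_for_stack is hypothetical, and the sketch assumes you pass in a client created with boto3.client("cloudformation") that has credentials for the account and Region where the stack is being created.

```python
def wait_for_stack(cloudformation, stack_name="Demo-Redshift"):
    """Block until the stack reaches CREATE_COMPLETE, then return its status.

    `cloudformation` is expected to be a boto3 CloudFormation client, e.g.
    boto3.client("cloudformation"). The waiter raises an error if stack
    creation fails or rolls back.
    """
    waiter = cloudformation.get_waiter("stack_create_complete")
    waiter.wait(StackName=stack_name)
    stacks = cloudformation.describe_stacks(StackName=stack_name)["Stacks"]
    return stacks[0]["StackStatus"]


# Example usage (requires boto3 and AWS credentials):
#   import boto3
#   print(wait_for_stack(boto3.client("cloudformation")))
```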

Launch SageMaker Studio

Complete the following steps to launch your SageMaker Studio domain:

On the SageMaker console, choose Domains in the navigation pane.

Choose the domain you created as part of the CloudFormation stack (SageMakerDemoDomain).

Choose Launch and Studio.

This page can take 1–2 minutes to load when you access SageMaker Studio for the first time, after which you’ll be redirected to a Home tab.

Download the GitHub repository

Complete the following steps to download the GitHub repo:

In the SageMaker notebook, on the File menu, choose New and Terminal.

In the terminal, enter the following command:

You can now see the amazon-sagemaker-featurestore-redshift-integration folder in the navigation pane of SageMaker Studio.

Set up batch ingestion with the Spark connector

Complete the following steps to set up batch ingestion:

In SageMaker Studio, open the notebook 1-uploadJar.ipynb under amazon-sagemaker-featurestore-redshift-integration.

If you are prompted to choose a kernel, choose Data Science as the image and Python 3 as the kernel, then choose Select.

For the subsequent notebooks, choose the same image and kernel, except for the AWS Glue Interactive Sessions notebook (4a).

Run the cells by pressing Shift+Enter in each of the cells.

While the code runs, an asterisk (*) appears between the square brackets. When the code is finished running, the * is replaced with a number. The same applies to all the other notebooks.

Set up the schema and load data to Amazon Redshift

The next step is to set up the schema and load data from Amazon Simple Storage Service (Amazon S3) to Amazon Redshift. To do so, run the notebook 2-loadredshiftdata.ipynb.

Create feature stores in SageMaker Feature Store

To create your feature stores, run the notebook 3-createFeatureStore.ipynb.

Perform feature engineering and ingest features into SageMaker Feature Store

In this section, we present the steps for all three options to perform feature engineering and ingest processed features into SageMaker Feature Store.

Option A: Use SageMaker Studio with a serverless AWS Glue interactive session

Complete the following steps for option A:

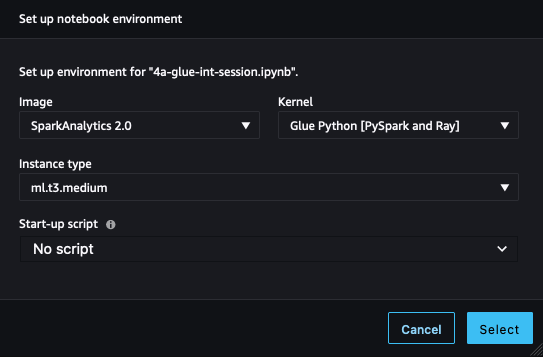

In SageMaker Studio, open the notebook 4a-glue-int-session.ipynb.

If you are prompted to choose a kernel, choose SparkAnalytics 2.0 as the image and Glue Python [PySpark and Ray] as the kernel, then choose Select.

The environment preparation process may take some time to complete.

Option B: Use a SageMaker Processing job with Spark

In this option, we use a SageMaker Processing job with a Spark script to load the original dataset from Amazon Redshift, perform feature engineering, and ingest the data into SageMaker Feature Store. To do so, open the notebook 4b-processing-rs-to-fs.ipynb in your SageMaker Studio environment.

Here we use RedshiftDatasetDefinition to retrieve the dataset from the Redshift cluster. RedshiftDatasetDefinition is one type of input of the processing job, which provides a simple interface for practitioners to configure Redshift connection-related parameters such as identifier, database, table, query string, and more. You can easily establish your Redshift connection using RedshiftDatasetDefinition without maintaining a connection full time. We also use the SageMaker Feature Store Spark connector library in the processing job to connect to SageMaker Feature Store in a distributed environment. With this Spark connector, you can easily ingest data to the feature group’s online and offline store from a Spark DataFrame. Also, this connector contains the functionality to automatically load feature definitions to help with creating feature groups. Above all, this solution offers you a native Spark way to implement an end-to-end data pipeline from Amazon Redshift to SageMaker. You can perform any feature engineering in a Spark context and ingest final features into SageMaker Feature Store in just one Spark project.

To use the SageMaker Feature Store Spark connector, we extend a pre-built SageMaker Spark container with sagemaker-feature-store-pyspark installed. In the Spark script, use the system executable command to run pip install, install this library in your local environment, and get the local path of the JAR file dependency. In the processing job API, provide this path to the submit_jars parameter so that it is passed to the nodes of the Spark cluster that the processing job creates.
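The install-and-locate step can be sketched as follows. This is an illustration under stated assumptions, not the notebook’s exact script: the helper names are hypothetical, and the exact distribution name can carry a Spark-version suffix depending on the container, so check which package matches your Spark version. The classpath_jars() call is the connector’s documented way to find its JAR files.

```python
import subprocess
import sys


def pip_install_command(package):
    """Build a pip command that runs under the current Python interpreter."""
    return [sys.executable, "-m", "pip", "install", package]


def install_connector_and_get_jars(package="sagemaker-feature-store-pyspark"):
    """Install the Spark connector, then return its JAR paths, comma-separated.

    Assumption: `package` is the distribution that matches the Spark version
    in your container. Needs network access, so it is not run on import.
    """
    subprocess.check_call(pip_install_command(package))
    # Imported only after the install above has succeeded.
    import feature_store_pyspark

    return ",".join(feature_store_pyspark.classpath_jars())


# The resulting path (or a copy of the JAR uploaded to Amazon S3) is what you
# would pass to the processing job through its submit_jars parameter.
```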

In the Spark script for the processing job, we first read the original dataset files from Amazon S3, which temporarily stores the unloaded dataset from Amazon Redshift as a medium. Then we perform feature engineering in a Spark way and use feature_store_pyspark to ingest data into the offline feature store.
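The ingestion call at the end of the Spark script can be sketched as follows. The helper name ingest_to_offline_store is hypothetical, and the sketch assumes a connector version whose ingest_data method accepts target_stores; older releases used a different flag for the offline store, so check the version you install. The DataFrame is whatever your feature engineering steps produce.

```python
def ingest_to_offline_store(data_frame, feature_group_arn, manager=None):
    """Write a Spark DataFrame into a feature group's offline store.

    `manager` is injectable for testing; by default it is the connector's
    FeatureStoreManager, which is only importable where the
    sagemaker-feature-store-pyspark library is installed.
    """
    if manager is None:
        from feature_store_pyspark.FeatureStoreManager import FeatureStoreManager

        manager = FeatureStoreManager()
    manager.ingest_data(
        input_data_frame=data_frame,
        feature_group_arn=feature_group_arn,
        target_stores=["OfflineStore"],  # skip the online store in this sketch
    )
```

Passing the manager in as a parameter keeps the function easy to exercise outside a Spark cluster, for example with a stub object in a unit test.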

For the processing job, we provide a ProcessingInput with a redshift_dataset_definition. Here we build a structure according to the interface, providing Redshift connection-related configurations. You can use query_string to filter your dataset by SQL and unload it to Amazon S3. See the following code:
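As a minimal sketch of such an input (not the notebook’s exact code), the following builds the Redshift settings as a plain dictionary and then wraps them in the SageMaker Python SDK classes. The helper names, the local path, and the choice of Parquet output are assumptions for illustration; the cluster identifier, database, user, role ARN, and S3 URI are values you supply from your own stack.

```python
def redshift_definition_kwargs(cluster_id, database, db_user, query_string,
                               cluster_role_arn, output_s3_uri):
    """Collect the Redshift connection settings used by the dataset definition."""
    return {
        "cluster_id": cluster_id,
        "database": database,
        "db_user": db_user,
        "query_string": query_string,          # SQL that filters the dataset
        "cluster_role_arn": cluster_role_arn,  # role the cluster uses to unload
        "output_s3_uri": output_s3_uri,        # where the unloaded files land
        "output_format": "PARQUET",            # assumption; CSV is also allowed
    }


def build_processing_input(kwargs, local_path="/opt/ml/processing/input/rds"):
    """Wrap the settings in a ProcessingInput (requires the sagemaker SDK)."""
    from sagemaker.dataset_definition.inputs import (
        DatasetDefinition,
        RedshiftDatasetDefinition,
    )
    from sagemaker.processing import ProcessingInput

    return ProcessingInput(
        input_name="redshift_dataset_definition",
        app_managed=True,
        dataset_definition=DatasetDefinition(
            local_path=local_path,  # hypothetical path inside the container
            data_distribution_type="FullyReplicated",
            input_mode="File",
            redshift_dataset_definition=RedshiftDatasetDefinition(**kwargs),
        ),
    )
```

The returned object goes into the inputs list of the processing job’s run call.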

You need to wait 6–7 minutes for each processing job, including the jobs for the USER, PLACE, and RATING datasets.

For more details about SageMaker Processing jobs, refer to Process data.

For SageMaker native solutions for feature processing from Amazon Redshift, you can also use Feature Processing in SageMaker Feature Store, which manages the underlying infrastructure, including provisioning the compute environments and creating and maintaining SageMaker pipelines to load and ingest data. You can focus only on your feature processor definitions, which include transformation functions, the Amazon Redshift source, and the SageMaker Feature Store sink. The scheduling, job management, and other workloads in production are managed by SageMaker. Feature Processor pipelines are SageMaker pipelines, so the standard monitoring mechanisms and integrations are available.

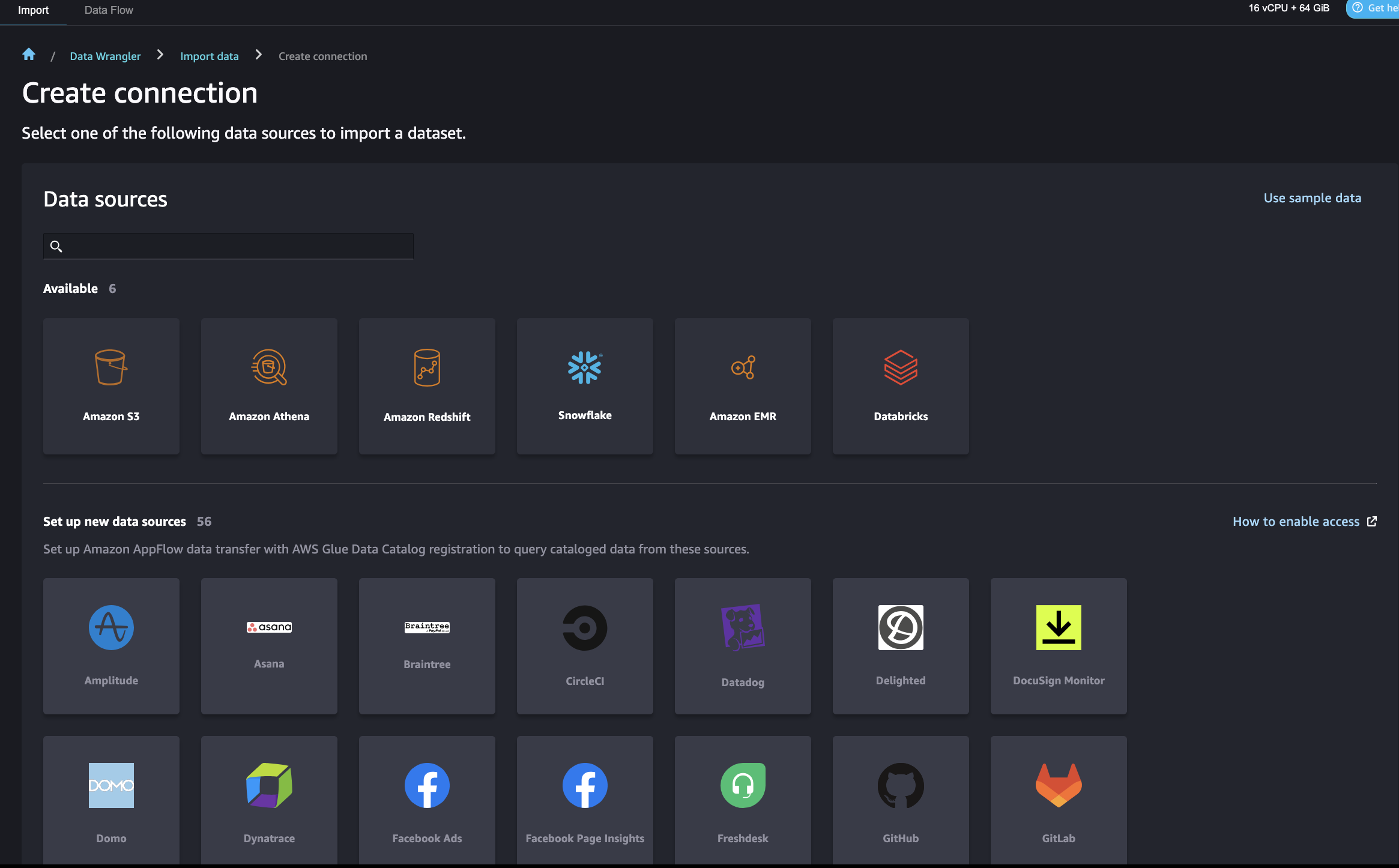

Option C: Use SageMaker Data Wrangler

SageMaker Data Wrangler allows you to import data from various data sources, including Amazon Redshift, for a low-code/no-code way to prepare, transform, and featurize your data. After you finish data preparation, you can use SageMaker Data Wrangler to export features to SageMaker Feature Store.

Some AWS Identity and Access Management (IAM) settings are required to allow SageMaker Data Wrangler to connect to Amazon Redshift. First, create an IAM role (for example, redshift-s3-dw-connect) that includes an Amazon S3 access policy. For this post, we attached the AmazonS3FullAccess policy to the IAM role. If you need to restrict access to a specific S3 bucket, you can define that in the Amazon S3 access policy. We attached the IAM role to the Redshift cluster that we created earlier. Next, create a policy for SageMaker to access Amazon Redshift by getting its cluster credentials, and attach the policy to the SageMaker IAM role. The policy looks like the following code:
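A policy of this kind can be sketched as follows; this is an illustration rather than the post’s exact policy document. The redshift:GetClusterCredentials action is the IAM action for obtaining temporary cluster credentials. The helper name is hypothetical, and the wildcard resource is an assumption made for brevity; in practice you would likely narrow it to the ARNs of your cluster’s database user and database.

```python
import json


def redshift_credentials_policy(resource_arns=("*",)):
    """Return an IAM policy document that allows fetching cluster credentials."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["redshift:GetClusterCredentials"],
                # Assumption: "*" for the demo; restrict this in production.
                "Resource": list(resource_arns),
            }
        ],
    }


# Print the JSON that you would paste into the IAM policy editor.
print(json.dumps(redshift_credentials_policy(), indent=4))
```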

After this setup, SageMaker Data Wrangler allows you to query Amazon Redshift and output the results into an S3 bucket. For instructions to connect to a Redshift cluster and query and import data from Amazon Redshift to SageMaker Data Wrangler, refer to Import data from Amazon Redshift.

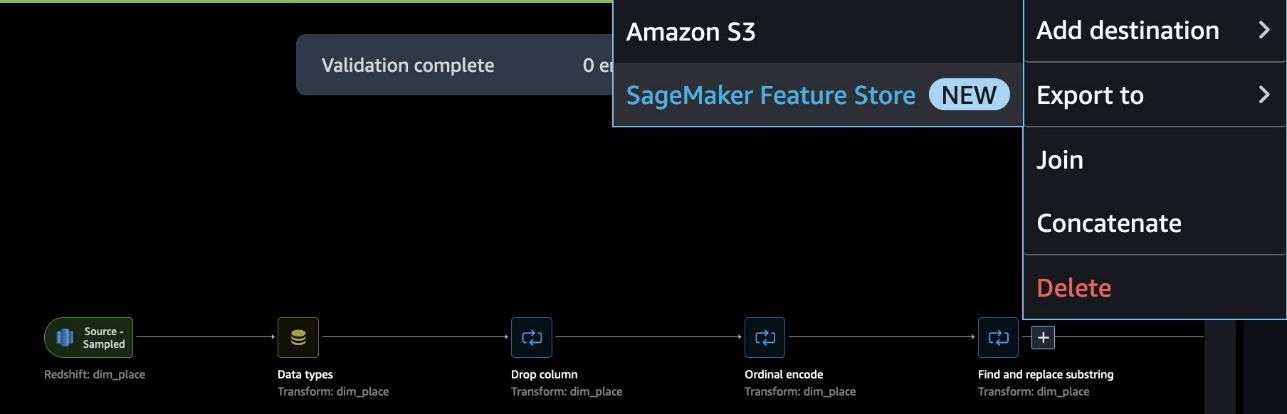

SageMaker Data Wrangler offers a selection of over 300 pre-built data transformations for common use cases such as deleting duplicate rows, imputing missing data, one-hot encoding, and handling time series data. You can also add custom transformations in pandas or PySpark. In our example, we applied some transformations such as drop column, data type enforcement, and ordinal encoding to the data.

When your data flow is complete, you can export it to SageMaker Feature Store. At this point, you need to create a feature group: give the feature group a name, select both online and offline storage, provide the name of an S3 bucket to use for the offline store, and provide a role that has SageMaker Feature Store access. Finally, you can create a job, which creates a SageMaker Processing job that runs the SageMaker Data Wrangler flow to ingest features from the Redshift data source to your feature group.

The following is one end-to-end data flow for the PLACE feature engineering scenario.

Use SageMaker Feature Store for model training and prediction

To use SageMaker Feature Store for model training and prediction, open the notebook 5-classification-using-feature-groups.ipynb.

After the Redshift data is transformed into features and ingested into SageMaker Feature Store, the features are available for search and discovery across teams of data scientists responsible for many independent ML models and use cases. These teams can use the features for modeling without having to rebuild or rerun feature engineering pipelines. Feature groups are managed and scaled independently, and can be reused and joined together regardless of the upstream data source.

The next step is to build ML models using features selected from one or multiple feature groups. You decide which feature groups to use for your models. There are two options to create an ML dataset from feature groups, both utilizing the SageMaker Python SDK:

Use the SageMaker Feature Store DatasetBuilder API – The SageMaker Feature Store DatasetBuilder API allows data scientists to create ML datasets from one or more feature groups in the offline store. You can use the API to create a dataset from a single or multiple feature groups, and output it as a CSV file or a pandas DataFrame. See the following example code:

Run SQL queries using the athena_query function in the FeatureGroup API – Another option is to use the auto-built AWS Glue Data Catalog for the FeatureGroup API. The FeatureGroup API includes an athena_query function that creates an AthenaQuery instance to run user-defined SQL query strings. Then you run the Athena query and organize the query result into a pandas DataFrame. This option allows you to specify more complicated SQL queries to extract information from a feature group. See the following example code:
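Both options can be sketched as follows; this is a minimal illustration rather than the notebook’s code. The helper names are hypothetical. The first function assumes you pass in a FeatureStore object from the SageMaker Python SDK and FeatureGroup objects that share a record identifier, so the default join applies. The second pair assumes a FeatureGroup object whose offline store is already registered in the AWS Glue Data Catalog.

```python
def build_joined_dataset(feature_store, base_group, other_groups, output_path):
    """Option 1: join feature groups with the DatasetBuilder API.

    `feature_store` is expected to be a
    sagemaker.feature_store.feature_store.FeatureStore instance.
    Returns a pandas DataFrame when used with the real SDK.
    """
    builder = feature_store.create_dataset(base=base_group, output_path=output_path)
    for group in other_groups:
        # Joins on the base group's record identifier by default.
        builder = builder.with_feature_group(group)
    data_frame, _query_string = builder.to_dataframe()
    return data_frame


def select_all_query(table_name, limit=None):
    """Build a simple Athena SQL string; replace with any query you need."""
    query = f'SELECT * FROM "{table_name}"'
    if limit is not None:
        query += f" LIMIT {int(limit)}"
    return query


def query_feature_group(feature_group, output_location, limit=None):
    """Option 2: run SQL against one feature group through athena_query."""
    athena = feature_group.athena_query()
    athena.run(
        query_string=select_all_query(athena.table_name, limit),
        output_location=output_location,  # S3 URI for the Athena results
    )
    athena.wait()
    return athena.as_dataframe()
```

The DatasetBuilder route is convenient for straightforward joins, while the Athena route leaves the SQL entirely in your hands.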

Next, we can merge the queried data from different feature groups into our final dataset for model training and testing. For this post, we use batch transform for model inference. Batch transform allows you to get model inference on a bulk of data in Amazon S3, and its inference result is stored in Amazon S3 as well. For details on model training and inference, refer to the notebook 5-classification-using-feature-groups.ipynb.

Run a join query on prediction results in Amazon Redshift

Lastly, we query the inference result and join it with the original user profiles in Amazon Redshift. To do this, we use Amazon Redshift Spectrum to join batch prediction results in Amazon S3 with the original Redshift data. For details, refer to the notebook 6-read-results-in-redshift.ipynb.
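The Redshift Spectrum pattern can be sketched as three SQL statements, built here as Python strings. Everything named in this sketch is an assumption for illustration: the spectrum schema, the predictions table, the users table, the userid and prediction columns, and the premise that the prediction files in Amazon S3 are comma-delimited and carry a user identifier. The notebook’s actual schema and file layout may differ.

```python
def spectrum_join_statements(glue_database, iam_role_arn, s3_location):
    """Return SQL to expose S3 predictions in Redshift and join them to users."""
    create_schema = (
        "CREATE EXTERNAL SCHEMA IF NOT EXISTS spectrum "
        f"FROM DATA CATALOG DATABASE '{glue_database}' "
        f"IAM_ROLE '{iam_role_arn}' "
        "CREATE EXTERNAL DATABASE IF NOT EXISTS;"
    )
    # Hypothetical layout: one row per user with an id and a prediction score.
    create_table = (
        "CREATE EXTERNAL TABLE spectrum.predictions "
        "(userid VARCHAR(64), prediction DOUBLE PRECISION) "
        "ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' "
        "STORED AS TEXTFILE "
        f"LOCATION '{s3_location}';"
    )
    join_query = (
        "SELECT u.*, p.prediction "
        "FROM users u "
        "JOIN spectrum.predictions p ON u.userid = p.userid;"
    )
    return [create_schema, create_table, join_query]
```

You would run the statements in order against the cluster, using whichever SQL client you already use for Amazon Redshift.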

Clean up

In this section, we provide the steps to clean up the resources created as part of this post to avoid ongoing charges.

Shut down SageMaker Apps

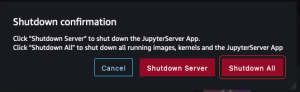

Complete the following steps to shut down your resources:

In SageMaker Studio, on the File menu, choose Shut Down.

In the Shutdown confirmation dialog, choose Shutdown All to proceed.

After you get the “Server stopped” message, you can close this tab.

Delete the apps

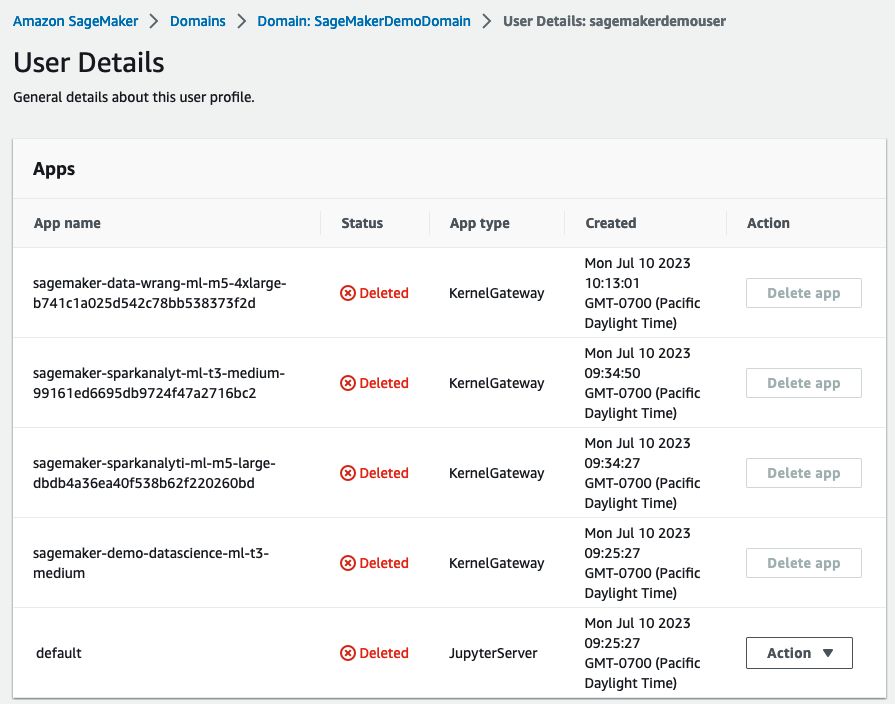

Complete the following steps to delete your apps:

On the SageMaker console, in the navigation pane, choose Domains.

On the Domains page, choose SageMakerDemoDomain.

On the domain details page, under User profiles, choose the user sagemakerdemouser.

In the Apps section, in the Action column, choose Delete app for any active apps.

Ensure that the Status column says Deleted for all the apps.

Delete the EFS storage volume associated with your SageMaker domain

Locate your EFS volume on the SageMaker console and delete it. For instructions, refer to Manage Your Amazon EFS Storage Volume in SageMaker Studio.

Delete default S3 buckets for SageMaker

Delete the default S3 buckets (sagemaker-<region-code>-<acct-id>) for SageMaker if you are not using SageMaker in that Region.

Delete the CloudFormation stack

Delete the CloudFormation stack in your AWS account to clean up all related resources.

Conclusion

In this post, we demonstrated an end-to-end data and ML flow from a Redshift data warehouse to SageMaker. You can easily use AWS native integration of purpose-built engines to go through the data journey seamlessly. Check out the AWS Blog for more practices about building ML features from a modern data warehouse.

About the Authors

Akhilesh Dube, a Senior Analytics Solutions Architect at AWS, possesses more than 20 years of expertise in working with databases and analytics products. His primary role involves collaborating with enterprise clients to design robust data analytics solutions while offering comprehensive technical guidance on a wide range of AWS Analytics and AI/ML services.

Ren Guo is a Senior Data Specialist Solutions Architect in the domains of generative AI, analytics, and traditional AI/ML at AWS, Greater China Region.

Sherry Ding is a Senior AI/ML Specialist Solutions Architect. She has extensive experience in machine learning with a PhD degree in Computer Science. She primarily works with Public Sector customers on various AI/ML-related business challenges, helping them accelerate their machine learning journey on the AWS Cloud. When not helping customers, she enjoys outdoor activities.

Mark Roy is a Principal Machine Learning Architect for AWS, helping customers design and build AI/ML solutions. Mark’s work covers a wide range of ML use cases, with a primary interest in computer vision, deep learning, and scaling ML across the enterprise. He has helped companies in many industries, including insurance, financial services, media and entertainment, healthcare, utilities, and manufacturing. Mark holds six AWS Certifications, including the ML Specialty Certification. Prior to joining AWS, Mark was an architect, developer, and technology leader for over 25 years, including 19 years in financial services.
