This post is co-written with Josh Reini, Shayak Sen, and Anupam Datta from TruEra.

Amazon SageMaker JumpStart provides a variety of pretrained foundation models such as Llama-2 and Mistral 7B that can be quickly deployed to an endpoint. These foundation models perform well with generative tasks, from crafting text and summaries, answering questions, to producing images and videos. Despite the great generalization capabilities of these models, there are often use cases where these models have to be adapted to new tasks or domains. One way to surface this need is by evaluating the model against a curated ground truth dataset. After the need to adapt the foundation model is clear, you can use a set of techniques to carry that out. A popular approach is to fine-tune the model using a dataset that is tailored to the use case. Fine-tuning can improve the foundation model, and its efficacy can again be measured against the ground truth dataset. This notebook shows how to fine-tune models with SageMaker JumpStart.

One challenge with this approach is that curated ground truth datasets are expensive to create. In this post, we address this challenge by augmenting this workflow with a framework for extensible, automated evaluations. We start off with a baseline foundation model from SageMaker JumpStart and evaluate it with TruLens, an open source library for evaluating and tracking large language model (LLM) apps. After we identify the need for adaptation, we can use fine-tuning in SageMaker JumpStart and confirm improvement with TruLens.

TruLens evaluations use an abstraction of feedback functions. These functions can be implemented in several ways, including BERT-style models, appropriately prompted LLMs, and more. TruLens’ integration with Amazon Bedrock allows you to run evaluations using LLMs available from Amazon Bedrock. The reliability of the Amazon Bedrock infrastructure is particularly valuable for performing evaluations across development and production.

This post serves as both an introduction to TruEra’s place in the modern LLM app stack and a hands-on guide to using Amazon SageMaker and TruEra to deploy, fine-tune, and iterate on LLM apps. Here is the complete notebook with code samples to show performance evaluation using TruLens.

TruEra in the LLM app stack

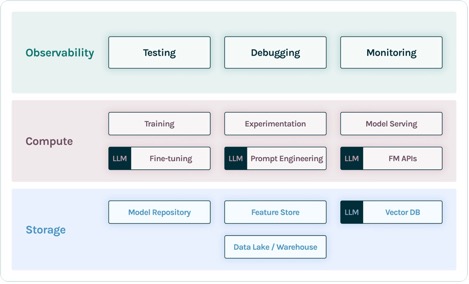

TruEra lives at the observability layer of LLM apps. Although new components have worked their way into the compute layer (fine-tuning, prompt engineering, model APIs) and storage layer (vector databases), the need for observability remains. This need spans from development to production and requires interconnected capabilities for testing, debugging, and production monitoring, as illustrated in the following figure.

In development, you can use open source TruLens to quickly evaluate, debug, and iterate on your LLM apps in your environment. A comprehensive suite of evaluation metrics, including both LLM-based and traditional metrics available in TruLens, allows you to measure your app against the criteria required for moving your application to production.

In production, these logs and evaluation metrics can be processed at scale with TruEra production monitoring. By connecting production monitoring with testing and debugging, dips in performance such as hallucination, safety, security, and more can be identified and corrected.

Deploy foundation models in SageMaker

You can deploy foundation models such as Llama-2 in SageMaker with just two lines of Python code:
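The listing itself is not reproduced here. As a minimal sketch, assuming the SageMaker Python SDK's `JumpStartModel` class and the JumpStart model ID `meta-textgeneration-llama-2-7b` (both are our assumptions, because the post does not name the model ID), deployment could look like the following. The helper name `deploy_base_model` is hypothetical, and the import sits inside the function so the snippet loads without the SDK installed.

```python
# Assumed JumpStart model ID for the 7B base Llama-2 text generation model.
MODEL_ID = "meta-textgeneration-llama-2-7b"


def deploy_base_model(model_id: str = MODEL_ID):
    """Deploy a JumpStart foundation model and return its predictor."""
    # Imported lazily so that defining this function does not require the SDK.
    from sagemaker.jumpstart.model import JumpStartModel

    # The "two lines": create the model object, then deploy it to an endpoint.
    model = JumpStartModel(model_id=model_id)
    return model.deploy()
```

Calling `deploy_base_model()` requires AWS credentials and creates a billable real-time endpoint, so delete the endpoint when you are done with it.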

Invoke the model endpoint

After deployment, you can invoke the deployed model endpoint by first creating a payload containing your inputs and model parameters:
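A payload of this kind is a plain dictionary. The sketch below assumes the `inputs`/`parameters` layout commonly used by JumpStart text generation models; the helper name `build_payload`, the example prompt, and the parameter values are illustrative choices, not values taken from the post.

```python
import json


def build_payload(prompt: str, max_new_tokens: int = 64,
                  top_p: float = 0.9, temperature: float = 0.6) -> dict:
    """Bundle the prompt and generation parameters into one request body."""
    return {
        "inputs": prompt,
        "parameters": {
            "max_new_tokens": max_new_tokens,  # cap on generated tokens
            "top_p": top_p,                    # nucleus sampling threshold
            "temperature": temperature,        # sampling randomness
            "return_full_text": False,         # return only the completion
        },
    }


payload = build_payload("I believe the meaning of life is")
print(json.dumps(payload, indent=2))
```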

Then you can simply pass this payload to the endpoint’s predict method. Note that you must pass the attribute to accept the end-user license agreement each time you invoke the model:
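As a sketch of that call, the helper below forwards a payload to any object with a SageMaker-style `predict` method and sets the `custom_attributes` argument to `accept_eula=true`. The helper name `invoke_endpoint` is hypothetical, and the response is returned untouched because its exact shape depends on the deployed model.

```python
EULA_ATTRIBUTE = "accept_eula=true"


def invoke_endpoint(predictor, payload: dict):
    """Send a payload to the endpoint, accepting the EULA on every call."""
    # `predictor` is whatever the deployment step returned; only its
    # predict() method is used here.
    return predictor.predict(payload, custom_attributes=EULA_ATTRIBUTE)
```

Keeping the attribute in one constant makes it harder to forget on later calls, because the endpoint rejects requests that omit it.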

Evaluate performance with TruLens

Now you can use TruLens to set up your evaluation. TruLens is an observability tool, offering an extensible set of feedback functions to track and evaluate LLM-powered apps. Feedback functions are essential here in verifying the absence of hallucination in the app. These feedback functions are implemented by using off-the-shelf models from providers such as Amazon Bedrock. Amazon Bedrock models are an advantage here because of their verified quality and reliability. You can set up the provider with TruLens via the following code:

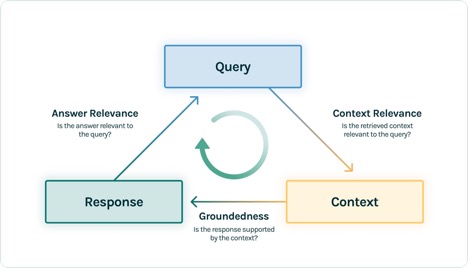

In this example, we use three feedback functions: answer relevance, context relevance, and groundedness. These evaluations have quickly become the standard for hallucination detection in context-enabled question answering applications and are especially useful for unsupervised applications, which cover the vast majority of today’s LLM applications.

Let’s go through each of these feedback functions to understand how they can benefit us.

Context relevance

Context is a critical input to the quality of our application’s responses, and it can be useful to programmatically ensure that the context provided is relevant to the input query. This is critical because this context will be used by the LLM to form an answer, so any irrelevant information in the context could be woven into a hallucination. TruLens enables you to evaluate context relevance by using the structure of the serialized record:

Because the context provided to LLMs is the most consequential step of a Retrieval Augmented Generation (RAG) pipeline, context relevance is critical for understanding the quality of retrievals. Working with customers across sectors, we’ve seen a variety of failure modes identified using this evaluation, such as incomplete context, extraneous irrelevant context, or even a lack of sufficient context available. By identifying the nature of these failure modes, our users are able to adapt their indexing (such as embedding model and chunking) and retrieval strategies (such as sentence windowing and automerging) to mitigate these issues.

Groundedness

After the context is retrieved, it is then formed into an answer by an LLM. LLMs are often prone to stray from the facts provided, exaggerating or expanding to a correct-sounding answer. To verify the groundedness of the application, you should separate the response into separate statements and independently search for evidence that supports each within the retrieved context.
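The statement-by-statement check described above can be sketched in plain Python. This is not the TruLens implementation: in practice the scorer would be an LLM or a natural language inference model, whereas here a toy word-overlap function (`word_overlap`, a hypothetical stand-in) plays that role so the example runs without any external service. The sample context and response are made up for illustration.

```python
import re
from typing import Callable, Dict, List, Tuple


def split_statements(response: str) -> List[str]:
    """Split a response into sentence-level statements."""
    parts = re.split(r"(?<=[.!?])\s+", response.strip())
    return [p for p in parts if p]


def word_overlap(statement: str, context: str) -> float:
    """Toy scorer: fraction of the statement's words found in the context."""
    words = set(re.findall(r"[a-z0-9]+", statement.lower()))
    context_words = set(re.findall(r"[a-z0-9]+", context.lower()))
    return len(words & context_words) / len(words) if words else 0.0


def groundedness(response: str, context: str,
                 scorer: Callable[[str, str], float] = word_overlap) -> Dict:
    """Score each statement against the context, then average the scores."""
    scored: List[Tuple[str, float]] = [
        (s, scorer(s, context)) for s in split_statements(response)
    ]
    overall = sum(score for _, score in scored) / len(scored) if scored else 0.0
    return {"per_statement": scored, "overall": overall}


context = "The library opens at nine. It closes at five."
response = "The library opens at nine. It serves free lunch on Fridays."
result = groundedness(response, context)
for statement, score in result["per_statement"]:
    print(f"{score:.2f}  {statement}")
```

The first statement is fully supported by the context and the second is not, which is the kind of per-claim breakdown that makes an unsupported sentence easy to spot. Swapping in a stronger `scorer` changes the scores but not the structure of the check.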

Issues with groundedness can often be a downstream effect of context relevance. When the LLM lacks sufficient context to form an evidence-based response, it is more likely to hallucinate in its attempt to generate a plausible response. Even in cases where complete and relevant context is provided, the LLM can fall into issues with groundedness. In particular, this has played out in applications where the LLM responds in a particular style or is being used to complete a task it is not well suited for. Groundedness evaluations allow TruLens users to break down LLM responses claim by claim to understand where the LLM is most often hallucinating. Doing so has proven particularly useful for illuminating the way forward in eliminating hallucination through model-side changes (such as prompting, model choice, and model parameters).

Answer relevance

Finally, the response still needs to helpfully answer the original question. You can verify this by evaluating the relevance of the final response to the user input:

By reaching satisfactory evaluations for this triad, you can make a nuanced statement about your application’s correctness; this application is verified to be hallucination free up to the limit of its knowledge base. In other words, if the vector database contains only accurate information, then the answers provided by the context-enabled question answering app are also accurate.

Ground truth evaluation

In addition to these feedback functions for detecting hallucination, we have a test dataset, DataBricks-Dolly-15k, that enables us to add ground truth similarity as a fourth evaluation metric. See the following code:

Build the application

After you have set up your evaluators, you can build your application. In this example, we use a context-enabled QA application. In this application, provide the instruction and context to the completion engine:
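One way to express such an application is a function that formats the instruction and context into a prompt and sends it to the endpoint. The sketch below is an assumption-laden illustration: `PROMPT_TEMPLATE`, `make_qa_app`, and the 200-token limit are hypothetical choices, and `predictor` is any object with a SageMaker-style `predict` method.

```python
PROMPT_TEMPLATE = (
    "### Instruction:\n{instruction}\n\n"
    "### Input:\n{context}\n\n"
    "### Response:\n"
)


def make_qa_app(predictor, max_new_tokens: int = 200):
    """Return a function that answers an instruction using the given context."""

    def qa_app(instruction: str, context: str):
        payload = {
            "inputs": PROMPT_TEMPLATE.format(instruction=instruction, context=context),
            "parameters": {"max_new_tokens": max_new_tokens},
        }
        # The response shape depends on the deployed model, so it is returned as-is.
        return predictor.predict(payload, custom_attributes="accept_eula=true")

    return qa_app
```

Building the app through a factory keeps the endpoint out of global state, so the same code can later be pointed at a different predictor.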

After you have created the app and feedback functions, it’s straightforward to create a wrapped application with TruLens. This wrapped application, which we name base_recorder, will log and evaluate the application each time it is called:

Results with base Llama-2

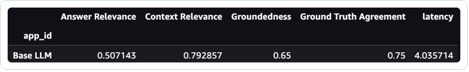

After you have run the application on each record in the test dataset, you can view the results in your SageMaker notebook with tru.get_leaderboard(). The following screenshot shows the results of the evaluation. Answer relevance is alarmingly low, indicating that the model is struggling to consistently follow the instructions provided.

Fine-tune Llama-2 using SageMaker JumpStart

Steps to fine-tune the Llama-2 model using SageMaker JumpStart are also provided in this notebook.

To set up for fine-tuning, you first need to download the training set and set up a template for instructions:
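As a sketch of this preparation step, the code below filters records to those that include a context passage and writes them next to an instruction template. It assumes records with `instruction`, `context`, and `response` fields (the layout of the Dolly dataset) and the `train.jsonl`/`template.json` file names used in JumpStart fine-tuning examples; the helper names and the template wording are illustrative. The records themselves could be obtained, for example, with the Hugging Face `datasets` library, which is not shown here.

```python
import json
from pathlib import Path
from typing import Dict, Iterable, List

# Assumed instruction template: a prompt with placeholders plus a completion.
TEMPLATE = {
    "prompt": (
        "Below is an instruction that describes a task, paired with an input "
        "that provides further context. Write a response that appropriately "
        "completes the request.\n\n"
        "### Instruction:\n{instruction}\n\n### Input:\n{context}\n\n"
    ),
    "completion": " {response}",
}


def keep_with_context(records: Iterable[Dict[str, str]]) -> List[Dict[str, str]]:
    """Keep only records that come with a non-empty context passage."""
    return [r for r in records if r.get("context", "").strip()]


def write_training_files(records: Iterable[Dict[str, str]], out_dir: str) -> None:
    """Write train.jsonl (one JSON record per line) and template.json."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    with open(out / "train.jsonl", "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record) + "\n")
    with open(out / "template.json", "w", encoding="utf-8") as f:
        json.dump(TEMPLATE, f)
```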

Then, upload both the dataset and instructions to an Amazon Simple Storage Service (Amazon S3) bucket for training:
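A minimal sketch of the upload, assuming the SageMaker Python SDK's `S3Uploader.upload` helper and local files named `train.jsonl` and `template.json` (the file names, the default `dolly_dataset` prefix, and both function names are our assumptions):

```python
def training_s3_uri(bucket: str, prefix: str = "dolly_dataset") -> str:
    """Build the S3 URI under which the training files are stored."""
    return f"s3://{bucket}/{prefix.strip('/')}"


def upload_training_files(local_dir: str, bucket: str,
                          prefix: str = "dolly_dataset") -> str:
    """Upload train.jsonl and template.json, returning the S3 prefix used."""
    # Imported lazily; running this function needs the SDK and AWS credentials.
    from sagemaker.s3 import S3Uploader

    destination = training_s3_uri(bucket, prefix)
    for name in ("train.jsonl", "template.json"):
        S3Uploader.upload(f"{local_dir}/{name}", destination)
    return destination
```

The returned URI is what the training job would later receive as the location of its training data.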

To fine-tune in SageMaker, you can use the SageMaker JumpStart Estimator. We mostly use default hyperparameters here, except we set instruction tuning to true:
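The sketch below shows one way this could look with the SDK's `JumpStartEstimator`. The model ID, the `instruction_tuned` hyperparameter name, and the `training` channel name follow JumpStart's Llama-2 fine-tuning examples as we understand them and should be treated as assumptions; the function names are hypothetical.

```python
def instruction_tuning_hyperparameters() -> dict:
    """Hyperparameter overrides; everything else keeps the JumpStart default."""
    # JumpStart hyperparameters are passed as strings.
    return {"instruction_tuned": "True"}


def fine_tune(train_data_location: str,
              model_id: str = "meta-textgeneration-llama-2-7b"):
    """Launch a JumpStart fine-tuning job and return the fitted estimator."""
    # Imported lazily; running this function starts a billable training job.
    from sagemaker.jumpstart.estimator import JumpStartEstimator

    estimator = JumpStartEstimator(
        model_id=model_id,
        environment={"accept_eula": "true"},  # Llama-2 requires accepting the EULA
    )
    estimator.set_hyperparameters(**instruction_tuning_hyperparameters())
    estimator.fit({"training": train_data_location})
    return estimator
```

Here `train_data_location` is the S3 prefix that holds the training file and the instruction template.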

After you have trained the model, you can deploy it and create your application just as you did before:

Evaluate the fine-tuned model

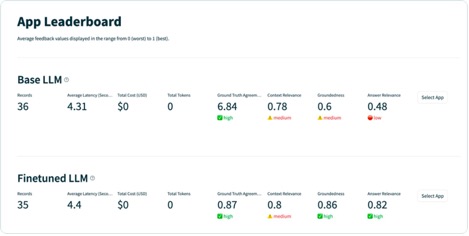

You can run the model again on your test set and view the results, this time in comparison to the base Llama-2:

The new, fine-tuned Llama-2 model has massively improved on answer relevance and groundedness, along with similarity to the ground truth test set. This large improvement in quality comes at the expense of a slight increase in latency. This increase in latency is a direct result of the fine-tuning increasing the size of the model.

Not only can you view these results in the notebook, but you can also explore the results in the TruLens UI by running tru.run_dashboard(). Doing so provides the same aggregated results on the leaderboard page, but also gives you the ability to dive deeper into problematic records and identify failure modes of the application.

To understand the improvement to the app on a record level, you can move to the evaluations page and examine the feedback scores on a more granular level.

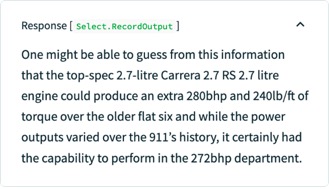

For example, if you ask the base LLM the question “What is the most powerful Porsche flat six engine,” the model hallucinates the following.

Additionally, you can examine the programmatic evaluation of this record to understand the application’s performance against each of the feedback functions you have defined. By examining the groundedness feedback results in TruLens, you can see a detailed breakdown of the evidence available to support each claim being made by the LLM.

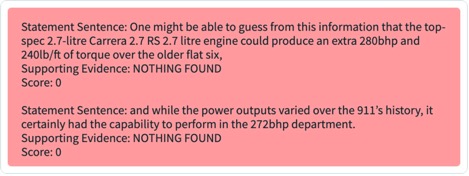

If you export the same record for your fine-tuned LLM in TruLens, you can see that fine-tuning with SageMaker JumpStart dramatically improved the groundedness of the response.

By using an automated evaluation workflow with TruLens, you can measure your application across a wider set of metrics to better understand its performance. Importantly, you are now able to understand this performance dynamically for any use case, even those where you have not collected ground truth.

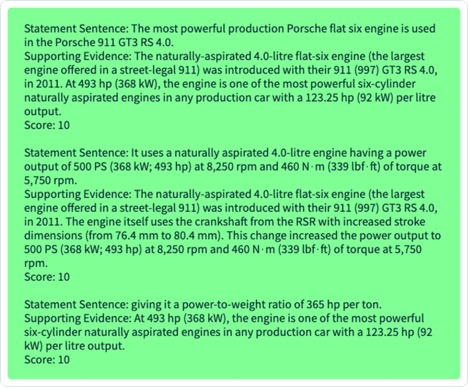

How TruLens works

After you have prototyped your LLM application, you can integrate TruLens (shown earlier) to instrument its call stack. After the call stack is instrumented, it can then be logged on each run to a logging database living in your environment.

In addition to the instrumentation and logging capabilities, evaluation is a core component of value for TruLens users. These evaluations are implemented in TruLens by feedback functions that run on top of your instrumented call stack, and in turn call upon external model providers to produce the feedback itself.

After feedback inference, the feedback results are written to the logging database, from which you can run the TruLens dashboard. The TruLens dashboard, running in your environment, allows you to explore, iterate, and debug your LLM app.

At scale, these logs and evaluations can be pushed to TruEra for production observability that can process millions of observations a minute. By using the TruEra Observability Platform, you can rapidly detect hallucination and other performance issues, and zoom in to a single record in seconds with integrated diagnostics. Moving to a diagnostics viewpoint allows you to easily identify and mitigate failure modes for your LLM app such as hallucination, poor retrieval quality, safety issues, and more.

Evaluate for honest, harmless, and helpful responses

By reaching satisfactory evaluations for this triad, you can reach a higher degree of confidence in the truthfulness of the responses your application provides. Beyond truthfulness, TruLens has broad support for the evaluations needed to understand your LLM’s performance on the axis of “Honest, Harmless, and Helpful.” Our users have benefited tremendously from the ability to identify not only hallucination as we discussed earlier, but also issues with safety, security, language match, coherence, and more. These are all messy, real-world problems that LLM app developers face, and can be identified out of the box with TruLens.

Conclusion

This post discussed how you can accelerate the productionization of AI applications and use foundation models in your organization. With SageMaker JumpStart, Amazon Bedrock, and TruEra, you can deploy, fine-tune, and iterate on foundation models for your LLM application. Check out this link to find out more about TruEra and try the notebook yourself.

About the authors

Josh Reini is a core contributor to open-source TruLens and the founding Developer Relations Data Scientist at TruEra, where he is responsible for education initiatives and nurturing a thriving community of AI Quality practitioners.

Shayak Sen is the CTO & Co-Founder of TruEra. Shayak is focused on building systems and leading research to make machine learning systems more explainable, privacy compliant, and fair.

Anupam Datta is Co-Founder, President, and Chief Scientist of TruEra. Before TruEra, he spent 15 years on the faculty at Carnegie Mellon University (2007-22), most recently as a tenured Professor of Electrical & Computer Engineering and Computer Science.

Vivek Gangasani is an AI/ML Startup Solutions Architect for Generative AI startups at AWS. He helps emerging GenAI startups build innovative solutions using AWS services and accelerated compute. Currently, he is focused on developing strategies for fine-tuning and optimizing the inference performance of Large Language Models. In his free time, Vivek enjoys hiking, watching movies, and trying different cuisines.
