Today, generative AI models cover a variety of tasks, from text summarization and Q&A to image and video generation. To improve the quality of output, approaches such as n-shot learning, prompt engineering, Retrieval Augmented Generation (RAG), and fine-tuning are used. Fine-tuning allows you to adjust these generative AI models to achieve improved performance on your domain-specific tasks.

With Amazon SageMaker, you can now run a SageMaker training job simply by annotating your Python code with the @remote decorator. The SageMaker Python SDK automatically translates your existing workspace environment, and any associated data processing code and datasets, into a SageMaker training job that runs on the training platform. This has the advantage of letting you write the code in a more natural, object-oriented way while still using SageMaker capabilities to run training jobs on a remote cluster with minimal changes.

In this post, we showcase how to fine-tune a Falcon-7B foundation model (FM) using the @remote decorator from the SageMaker Python SDK. The solution also uses Hugging Face’s parameter-efficient fine-tuning (PEFT) library and quantization techniques through bitsandbytes to support fine-tuning. The code presented in this blog can also be used to fine-tune other FMs, such as Llama-2 13b.

The full-precision representation of this model might be difficult to fit into memory on a single or even several graphics processing units (GPUs), or might even need a bigger instance. Hence, to fine-tune this model without increasing cost, we use the technique known as Quantized LLMs with Low-Rank Adapters (QLoRA). QLoRA is an efficient fine-tuning approach that reduces the memory usage of LLMs while maintaining very good performance.

Advantages of using the @remote decorator

Before going further, let’s understand how the remote decorator improves developer productivity while working with SageMaker:

The @remote decorator triggers a training job directly from native Python code, without the explicit invocation of SageMaker Estimators and SageMaker input channels.

Low barrier to entry for developers training models on SageMaker.

No need to switch integrated development environments (IDEs). Continue writing code in your choice of IDE and invoke SageMaker training jobs.

No need to learn about containers. Continue providing dependencies in a requirements.txt file and supply that to the remote decorator.

Prerequisites

An AWS account is required with an AWS Identity and Access Management (AWS IAM) role that has permissions to manage resources created as part of the solution. For details, refer to Creating an AWS account.

In this post, we use Amazon SageMaker Studio with the Data Science 3.0 image and an ml.t3.medium fast launch instance. However, you can use any integrated development environment (IDE) of your choice. You just need to set up your AWS Command Line Interface (AWS CLI) credentials correctly. For more information, refer to Configure the AWS CLI.

For fine-tuning Falcon-7B, an ml.g5.12xlarge instance is used in this post. Make sure you have sufficient capacity for this instance in your AWS account.

You need to clone this GitHub repository to replicate the solution demonstrated in this post.

Solution overview

Install prerequisites for fine-tuning the Falcon-7B model

Set up remote decorator configurations

Preprocess the dataset containing AWS services FAQs

Fine-tune Falcon-7B on AWS services FAQs

Test the fine-tuned models on sample questions related to AWS services

1. Install prerequisites for fine-tuning the Falcon-7B model

Launch the notebook falcon-7b-qlora-remote-decorator_qa.ipynb in SageMaker Studio by selecting Data Science as the image and Python 3 as the kernel. Install all the required libraries listed in requirements.txt. A few of the libraries need to be installed on the notebook instance itself to perform the operations needed for dataset processing and for triggering a SageMaker training job.

2. Set up remote decorator configurations

Create a configuration file where all the configurations related to the Amazon SageMaker training job are specified. This file is read by the @remote decorator while running the training job. It contains settings such as dependencies, training image, instance type, and the execution role to be used for the training job. For a detailed reference of all the settings supported by the config file, check out Configuring and using defaults with the SageMaker Python SDK.

It’s not mandatory to use the config.yaml file in order to work with the @remote decorator. It is simply a cleaner way to supply all configurations to the @remote decorator. It keeps SageMaker and AWS related parameters outside of the code, with a one-time effort to set up the config file used across team members. All the configurations could also be supplied directly in the decorator arguments, but that reduces the readability and maintainability of changes in the long run. Also, the configuration file can be created by an administrator and shared with all the users in an environment.
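As a rough illustration of such a configuration file, the sketch below writes a minimal config.yaml and points the SDK at it through the SAGEMAKER_USER_CONFIG_OVERRIDE environment variable. The key names follow the SageMaker Python SDK defaults schema as we understand it; the image URI and role ARN are placeholders, and the helper name write_remote_config is hypothetical rather than taken from the repository.

```python
import os
from pathlib import Path

# Minimal settings for the remote decorator. ImageUri and RoleArn are
# placeholders (assumptions) to be replaced with values from your account.
CONFIG_YAML = """\
SchemaVersion: '1.0'
SageMaker:
  PythonSDK:
    Modules:
      RemoteFunction:
        Dependencies: ./requirements.txt
        InstanceType: ml.g5.12xlarge
        ImageUri: '<your-training-image-uri>'
        RoleArn: '<your-execution-role-arn>'
"""


def write_remote_config(directory="."):
    """Write config.yaml into `directory` and register it with the SDK."""
    config_path = Path(directory) / "config.yaml"
    config_path.write_text(CONFIG_YAML)
    # The SDK reads this variable to locate user-level default settings.
    os.environ["SAGEMAKER_USER_CONFIG_OVERRIDE"] = str(config_path)
    return config_path
```

Calling write_remote_config() once at the top of the notebook, before the decorated function is defined, should be enough for the decorator to pick up these defaults.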

3. Preprocess the dataset containing AWS services FAQs

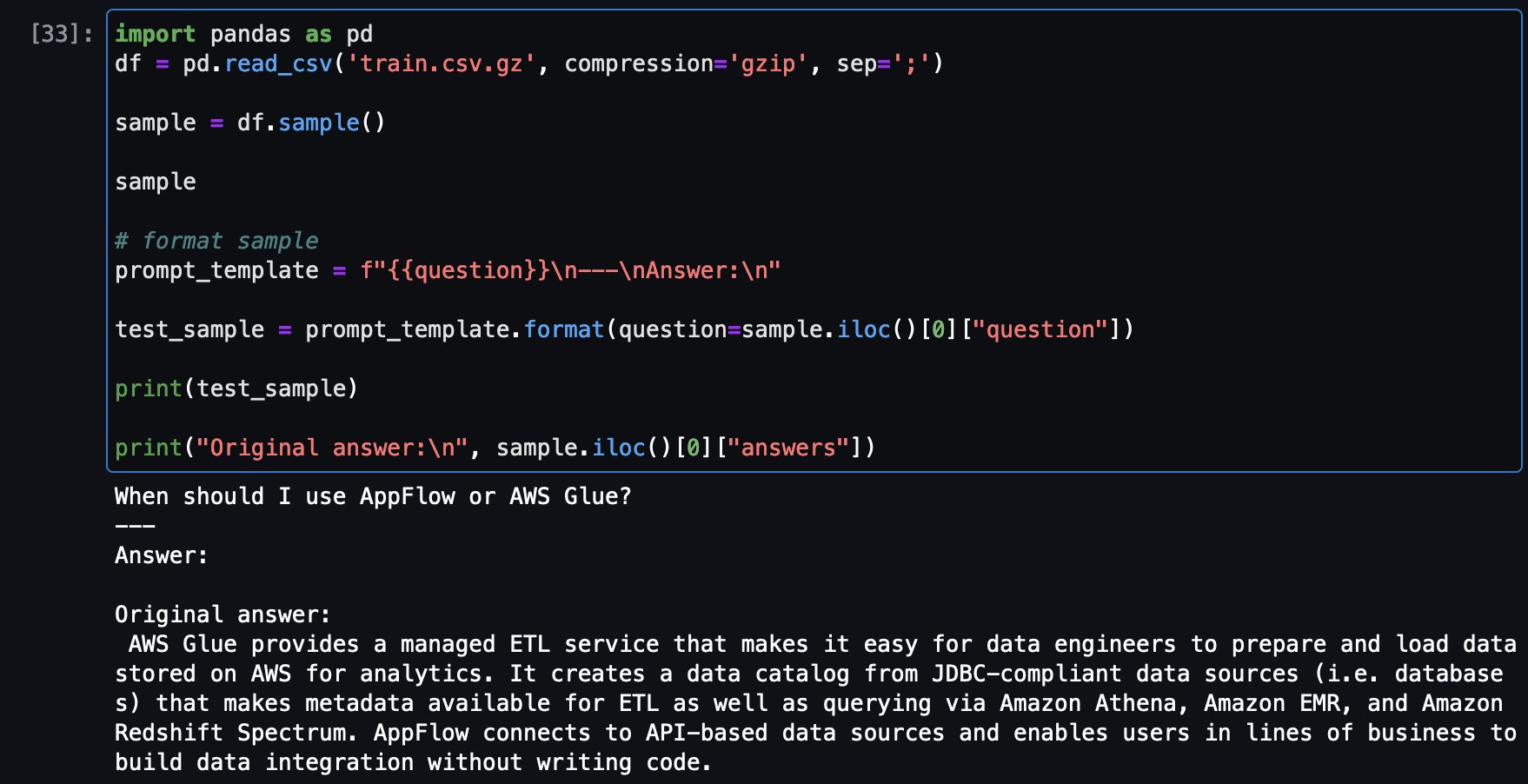

The next step is to load and preprocess the dataset to make it ready for the training job. First, let us have a look at the dataset:

It shows the FAQ for one of the AWS services. In addition to QLoRA, bitsandbytes is used to quantize the frozen LLM to 4-bit precision, and LoRA adapters are attached to it.

Create a prompt template to convert each FAQ sample to a prompt format:
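The template code is not reproduced in this text, so the following is only a minimal sketch of what such a function might look like. The field names question and answer, the separator layout, and the default end-of-sequence token are assumptions chosen for illustration, not the notebook's actual values.

```python
# Hypothetical prompt layout: question, a separator, then the answer.
PROMPT_FORMAT = "{question}\n---\nAnswer:\n{answer}{eos_token}"


def prompt_template(sample, eos_token="<|endoftext|>"):
    """Return a copy of `sample` with a formatted "text" field added."""
    text = PROMPT_FORMAT.format(
        question=sample["question"].strip(),
        answer=sample["answer"].strip(),
        eos_token=eos_token,
    )
    # Keep the original fields so the raw answer stays available for comparison.
    return {**sample, "text": text}
```

Appending the end-of-sequence token to each sample gives the model a signal for where an answer stops, which helps avoid run-on generations after fine-tuning.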

The next step is to convert the inputs (text) to token IDs. This is done by a Hugging Face Transformers tokenizer.
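A hedged sketch of this step is shown below. It assumes the tokenizer is loaded from the Hugging Face Hub under the model ID tiiuae/falcon-7b and that the end-of-sequence token is reused for padding when no padding token is defined; the helper names are hypothetical.

```python
def load_tokenizer(model_id="tiiuae/falcon-7b"):
    """Load the tokenizer from the Hugging Face Hub (needs `transformers`)."""
    from transformers import AutoTokenizer  # lazy import: only needed here

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    # Reuse EOS as the padding token if the tokenizer defines none.
    if tokenizer.pad_token is None:
        tokenizer.pad_token = tokenizer.eos_token
    return tokenizer


def to_token_ids(tokenizer, texts):
    """Map each text to its list of token IDs."""
    return [tokenizer(text)["input_ids"] for text in texts]
```

Because to_token_ids only relies on the tokenizer being callable and returning an "input_ids" entry, it can be exercised with a stand-in tokenizer before downloading the real one.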

Now simply use the prompt_template function to convert all the FAQs to the prompt format and set up the train and test datasets.
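One way to picture this step is the plain-Python stand-in below, which formats every FAQ and performs a seeded shuffle-and-split. The function name build_splits, the 10% test fraction, and the seed are assumptions; with the Hugging Face datasets library, Dataset.train_test_split serves the same purpose.

```python
import random


def build_splits(faqs, to_prompt, test_fraction=0.1, seed=42):
    """Format every FAQ with `to_prompt`, shuffle, and split into (train, test)."""
    if len(faqs) < 2:
        raise ValueError("need at least two samples to build a split")
    prompts = [to_prompt(faq) for faq in faqs]
    # A dedicated Random instance keeps the split reproducible.
    random.Random(seed).shuffle(prompts)
    n_test = max(1, int(len(prompts) * test_fraction))
    return prompts[n_test:], prompts[:n_test]
```

Fixing the seed makes the held-out questions stable between runs, which is useful when comparing answers before and after fine-tuning.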

4. Fine-tune Falcon-7B on AWS services FAQs

Now you can prepare the training script, define the training function train_fn, and put the @remote decorator on the function.
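The sketch below illustrates the idea under stated assumptions. The body of train_fn is a stand-in, not the actual training code, and make_remote is a hypothetical helper that applies the decorator lazily so the module can be imported without the SageMaker SDK or AWS credentials. The volume_size value is illustrative.

```python
def train_fn(model_id, num_train_epochs=1):
    """Stand-in training body; the real function would load and train the model."""
    return {"model_id": model_id, "num_train_epochs": num_train_epochs}


def make_remote(fn, **remote_kwargs):
    """Wrap `fn` so that calling it submits a SageMaker training job."""
    # Lazy import: requires the `sagemaker` package and AWS credentials.
    from sagemaker.remote_function import remote

    # Equivalent to decorating `fn` with @remote(**remote_kwargs).
    return remote(**remote_kwargs)(fn)


# Usage (not executed here because it starts a billable training job):
# remote_train_fn = make_remote(train_fn, volume_size=100)
# result = remote_train_fn("tiiuae/falcon-7b", num_train_epochs=1)
```

In a notebook you would more commonly write @remote(volume_size=100) directly above def train_fn(...); the helper form is used here only to keep the example importable anywhere. Settings not passed as arguments are expected to come from the configuration file.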

The training function does the following:

Tokenizes and chunks the dataset

Sets up BitsAndBytesConfig, which specifies that the model should be loaded in 4-bit and that computation should be performed in bfloat16

Loads the model

Finds the target modules and updates the necessary matrices by using the utility method find_all_linear_names

Creates LoRA configurations that specify the rank of the update matrices (r), the scaling factor (lora_alpha), the modules to apply the LoRA update matrices to (target_modules), the dropout probability for LoRA layers (lora_dropout), task_type, and so on

Starts the training and evaluation
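Two of the steps above can be sketched as follows. This is an illustration under assumptions, not the repository code: find_all_linear_names here takes the linear layer class as a parameter (for a 4-bit model this would typically be bitsandbytes.nn.Linear4bit), the helper build_qlora_configs is hypothetical, and the hyperparameter values are placeholders.

```python
def find_all_linear_names(model, linear_cls):
    """Collect leaf names of all `linear_cls` submodules, excluding the output head."""
    names = set()
    for full_name, module in model.named_modules():
        if isinstance(module, linear_cls):
            # "h.0.attn.query_key_value" -> "query_key_value"
            names.add(full_name.split(".")[-1])
    names.discard("lm_head")  # the output head is usually left without adapters
    return sorted(names)


def build_qlora_configs(target_modules, r=8, lora_alpha=16, lora_dropout=0.1):
    """Create the quantization and LoRA configs (needs torch, transformers, peft)."""
    import torch
    from peft import LoraConfig
    from transformers import BitsAndBytesConfig

    bnb_config = BitsAndBytesConfig(
        load_in_4bit=True,                      # store frozen weights in 4-bit
        bnb_4bit_quant_type="nf4",
        bnb_4bit_use_double_quant=True,
        bnb_4bit_compute_dtype=torch.bfloat16,  # run computation in bfloat16
    )
    lora_config = LoraConfig(
        r=r,
        lora_alpha=lora_alpha,
        target_modules=target_modules,
        lora_dropout=lora_dropout,
        bias="none",
        task_type="CAUSAL_LM",
    )
    return bnb_config, lora_config
```

Targeting every linear layer rather than only the attention projections gives the adapters more capacity, at the price of more trainable parameters.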

Then invoke train_fn().

The tuning job runs on the Amazon SageMaker training cluster. Wait for the tuning job to finish.

5. Test the fine-tuned models on sample questions related to AWS services

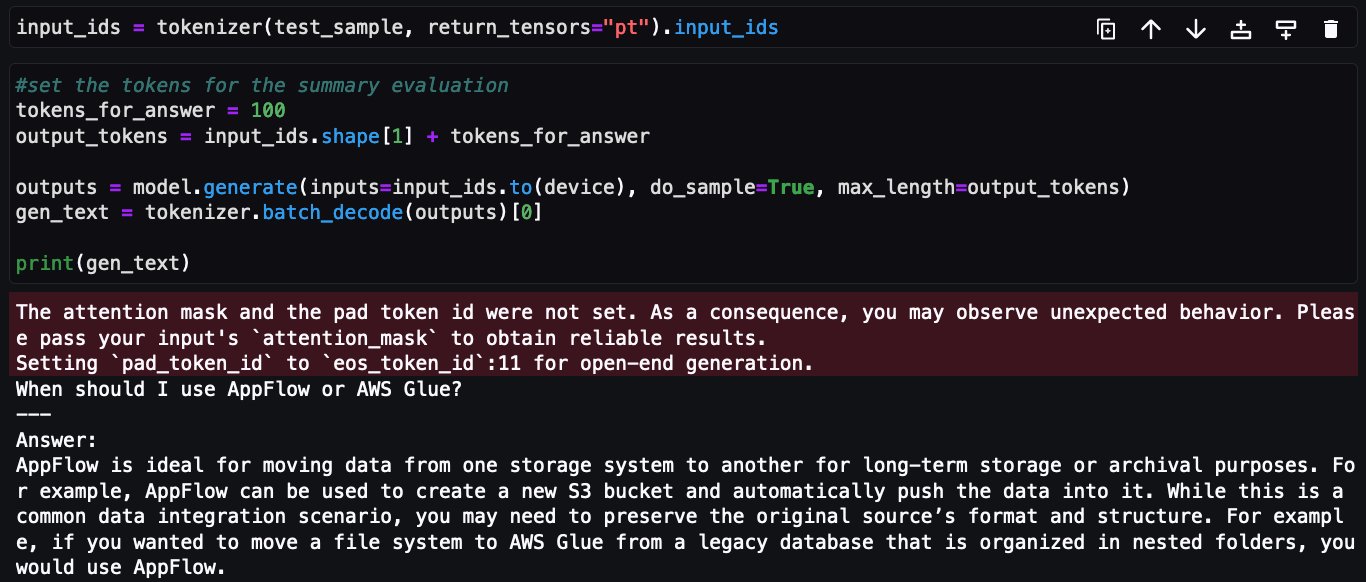

Now, it’s time to run some tests on the model. First, let us load the model:

Now load a sample question from the training dataset to see the original answer, and then ask the tuned model the same question to compare the answers.
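A minimal sketch of the generation step is given below, assuming a model and tokenizer that follow the Hugging Face Transformers interfaces. The function name generate_answer and the token budget are assumptions, and the prompt passed in should use the same format that was used for training.

```python
def generate_answer(model, tokenizer, prompt, max_new_tokens=100):
    """Generate a completion for `prompt` with a Hugging Face-style model."""
    inputs = tokenizer(prompt, return_tensors="pt")
    # Move tensors to the model's device when the model exposes one.
    device = getattr(model, "device", None)
    if device is not None:
        inputs = inputs.to(device)
    # Pass only the fields generate() needs, to avoid unexpected extra inputs.
    output_ids = model.generate(
        input_ids=inputs["input_ids"],
        attention_mask=inputs["attention_mask"],
        max_new_tokens=max_new_tokens,
    )
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)
```

For a causal language model the decoded text normally contains the prompt followed by the generated answer, so the prompt prefix may need to be stripped before comparing against the original answer.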

Here is a sample question from the training set and the original answer:

Now, the same question is asked of the tuned Falcon-7B model:

This concludes the implementation of fine-tuning Falcon-7B on the AWS services FAQ dataset using the @remote decorator from the Amazon SageMaker Python SDK.

Cleaning up

Complete the following steps to clean up your resources:

Shut down the Amazon SageMaker Studio instances to avoid incurring additional costs.

Clean up your Amazon Elastic File System (Amazon EFS) directory by clearing the Hugging Face cache directory:
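The cleanup command is not reproduced in this text. A Python sketch of the same idea follows, assuming the default Hugging Face cache location under the home directory (it can differ if the HF_HOME environment variable is set); the helper name clear_hf_cache is hypothetical.

```python
import shutil
from pathlib import Path

# Default Hugging Face Hub cache location (assumes HF_HOME is not overridden).
DEFAULT_HF_CACHE = Path.home() / ".cache" / "huggingface" / "hub"


def clear_hf_cache(cache_dir=DEFAULT_HF_CACHE):
    """Delete the cache directory; return True if something was removed."""
    cache_dir = Path(cache_dir)
    if not cache_dir.exists():
        return False
    shutil.rmtree(cache_dir)
    return True
```

Downloaded model weights are large, so clearing this directory can free a significant amount of EFS storage.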

Conclusion

In this post, we showed you how to effectively use the @remote decorator’s capabilities to fine-tune the Falcon-7B model using QLoRA and Hugging Face PEFT with bitsandbytes, without applying significant changes in the training notebook, while using Amazon SageMaker capabilities to run training jobs on a remote cluster.

All the code shown as part of this post to fine-tune Falcon-7B is available in the GitHub repository. The repository also contains a notebook showing how to fine-tune Llama-13B.

As a next step, we encourage you to check out the @remote decorator functionality and the Python SDK API and use them in your choice of environment and IDE. Additional examples are available in the amazon-sagemaker-examples repository to get you started quickly. You can also check out the following posts:

About the Authors

Bruno Pistone is an AI/ML Specialist Solutions Architect for AWS based in Milan. He works with large customers, helping them to deeply understand their technical needs and design AI and machine learning solutions that make the best use of the AWS Cloud and the Amazon Machine Learning stack. His expertise includes end-to-end machine learning, machine learning industrialization, and generative AI. He enjoys spending time with his friends and exploring new places, as well as travelling to new destinations.

Vikesh Pandey is a Machine Learning Specialist Solutions Architect at AWS, helping customers from financial industries design and build solutions on generative AI and ML. Outside of work, Vikesh enjoys trying out different cuisines and playing outdoor sports.
