This post is co-authored by Anatoly Khomenko, Machine Learning Engineer, and Abdenour Bezzouh, Chief Technology Officer at Talent.com.

Founded in 2011, Talent.com is one of the world’s largest sources of employment. The company combines paid job listings from its clients with public job listings into a single searchable platform. With over 30 million jobs listed in more than 75 countries, Talent.com serves jobs across many languages, industries, and distribution channels. The result is a platform that matches millions of job seekers with available jobs.

Talent.com’s mission is to centralize all jobs available on the web to help job seekers find their best match while providing them with the best search experience. Its focus is on relevancy, because the order of the recommended jobs is vitally important to show the jobs most pertinent to users’ interests. The performance of Talent.com’s matching algorithm is paramount to the success of the business and a key contributor to its users’ experience. It’s challenging to predict which jobs are pertinent to a job seeker based on the limited amount of information provided, which is usually limited to a few keywords and a location.

Given this mission, Talent.com and AWS joined forces to create a job recommendation engine using state-of-the-art natural language processing (NLP) and deep learning model training techniques with Amazon SageMaker to provide an unrivaled experience for job seekers. This post shows our joint approach to designing a job recommendation system, including feature engineering, deep learning model architecture design, hyperparameter optimization, and model evaluation that ensures the reliability and effectiveness of our solution for both job seekers and employers. The system was developed by a team of dedicated applied machine learning (ML) scientists, ML engineers, and subject matter experts in collaboration between AWS and Talent.com.

The recommendation system has driven an 8.6% increase in clickthrough rate (CTR) in online A/B testing against a previous XGBoost-based solution, helping connect millions of Talent.com’s users to better jobs.

Overview of solution

An overview of the system is illustrated in the following figure. The system takes a user’s search query as input and outputs a list of jobs ranked in order of pertinence. Job pertinence is measured by the click probability (the probability of a job seeker clicking on a job for more information).

The system consists of four main components:

Model architecture – The core of this job recommendation engine is a deep learning-based Triple Tower Pointwise model. It includes a query encoder that encodes user search queries, a document encoder that encodes the job descriptions, and an interaction encoder that processes features from past user-job interactions. The outputs of the three towers are concatenated and passed through a classification head to predict each job’s click probability. Because the model is trained on search queries, job specifics, and historical user interaction data from Talent.com, the system provides personalized and highly relevant job recommendations to job seekers.

Feature engineering – We perform two sets of feature engineering to extract valuable information from the input data and feed it into the corresponding towers in the model. The two sets are standard feature engineering and fine-tuned Sentence-BERT (SBERT) embeddings. We use the standard engineered features as input to the interaction encoder, and we feed the SBERT-derived embeddings into the query encoder and the document encoder.

Model optimization and tuning – We use advanced training methodologies to train, test, and deploy the system with SageMaker. These include SageMaker Distributed Data Parallel (DDP) training, SageMaker Automatic Model Tuning (AMT), learning rate scheduling, and early stopping to improve model performance and training speed. Using the DDP training framework sped up our model training by approximately eight times.

Model evaluation – We conduct both offline and online evaluation. In offline evaluation, we measure model performance with Area Under the Curve (AUC) and Mean Average Precision at K (mAP@K). During online A/B testing, we measure the CTR improvements.
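The optimization component above mentions learning rate scheduling and early stopping but does not specify either one. The following minimal pure-Python sketch shows one common pairing under stated assumptions: a step-decay learning rate schedule and patience-based early stopping on a validation metric where higher is better, such as AUC. The names `step_decay_lr` and `EarlyStopping`, and all parameter values, are hypothetical.

```python
from dataclasses import dataclass


def step_decay_lr(base_lr: float, epoch: int, drop: float = 0.5, every: int = 2) -> float:
    """Step decay (assumed schedule): multiply base_lr by `drop` every `every` epochs."""
    return base_lr * (drop ** (epoch // every))


@dataclass
class EarlyStopping:
    """Stop when a higher-is-better validation metric fails to improve for `patience` epochs."""
    patience: int = 2
    min_delta: float = 0.0
    best: float = float("-inf")
    bad_epochs: int = 0

    def step(self, metric: float) -> bool:
        """Record one epoch's metric; return True if training should stop."""
        if metric > self.best + self.min_delta:
            self.best = metric
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


if __name__ == "__main__":
    stopper = EarlyStopping(patience=2)
    val_auc = [0.70, 0.74, 0.75, 0.75, 0.74, 0.76]  # made-up numbers for illustration
    for epoch, score in enumerate(val_auc):
        lr = step_decay_lr(1e-3, epoch)
        print(f"epoch={epoch} lr={lr:.2e} val_auc={score:.2f}")
        if stopper.step(score):
            print(f"early stop at epoch {epoch}, best AUC {stopper.best:.2f}")
            break
```

In a SageMaker setup, values such as the base learning rate and patience are the kind of hyperparameters that AMT could search over.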

In the following sections, we present the details of these four components.

Deep learning model architecture design

We design a Triple Tower Deep Pointwise (TTDP) model using a triple-tower deep learning architecture and the pointwise pair modeling approach. The triple-tower architecture provides three parallel deep neural networks, and each tower processes a set of features independently. This design pattern allows the model to learn distinct representations from different sources of information. After the representations from all three towers are obtained, they are concatenated and passed through a classification head to make the final prediction (0–1) of the click probability (a pointwise modeling setup).

The three towers are named after the information they process. The query encoder processes the user search query. The document encoder processes the candidate job’s content, including the job title and company name. The interaction encoder uses relevant features extracted from past user interactions and history (discussed further in the next section).

Each of these towers plays a crucial role in learning how to recommend jobs:

Query encoder – The query encoder takes in the SBERT embeddings derived from the user’s job search query. The embeddings come from an SBERT model that we fine-tuned. This encoder processes and understands the user’s job search intent, including details and nuances captured by our domain-specific embeddings.

Document encoder – The document encoder processes the information of each job listing. Specifically, it takes the SBERT embeddings of the concatenated text from the job title and company. The intuition is that users will be more interested in candidate jobs that are more similar to the search query. By mapping the jobs and the search queries to the same vector space (defined by SBERT), the model can learn to predict the probability that a job seeker will click each potential job.

Interaction encoder – The interaction encoder deals with the user’s past interactions with job listings. Its features are produced through a standard feature engineering step. This step calculates popularity metrics for job roles and companies, establishes context similarity scores, and extracts interaction parameters from previous user engagements. The encoder also processes the named entities identified in the job title and search queries with a pre-trained named entity recognition (NER) model.

Each tower generates an independent output in parallel, and these outputs are then concatenated. The combined feature vector is passed to the classification head, which predicts the click probability of a job listing for a user query. The triple-tower architecture provides flexibility in capturing complex relationships between different inputs or features. This allows the model to take advantage of the strengths of each tower while learning more expressive representations for the given task.
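The data flow described above can be shown with a dependency-free sketch of a triple-tower pointwise forward pass: three independent towers, concatenation of their outputs, and a sigmoid classification head that outputs a click probability. This is a toy under stated assumptions and not the TTDP implementation. Each tower here is a single ReLU layer with random, untrained weights, and the class name `TripleTowerPointwise` and all layer sizes are hypothetical. A production model would use a deep learning framework such as PyTorch and learn its weights from click labels.

```python
import math
import random
from typing import List

Vector = List[float]


def dense(x: Vector, weights: List[Vector], bias: Vector) -> Vector:
    """Fully connected layer: one output per row of `weights`."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x: Vector) -> Vector:
    return [max(0.0, v) for v in x]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


class Tower:
    """One tower; a single ReLU layer here, where a real tower would stack several."""

    def __init__(self, in_dim: int, out_dim: int, rng: random.Random) -> None:
        self.w = [[rng.uniform(-0.5, 0.5) for _ in range(in_dim)] for _ in range(out_dim)]
        self.b = [0.0] * out_dim

    def __call__(self, x: Vector) -> Vector:
        return relu(dense(x, self.w, self.b))


class TripleTowerPointwise:
    """Query, document, and interaction towers feeding one sigmoid classification head."""

    def __init__(self, query_dim: int, doc_dim: int, inter_dim: int, hidden: int = 8, seed: int = 0) -> None:
        rng = random.Random(seed)  # untrained, randomly initialized weights
        self.query_tower = Tower(query_dim, hidden, rng)
        self.doc_tower = Tower(doc_dim, hidden, rng)
        self.interaction_tower = Tower(inter_dim, hidden, rng)
        self.head_w = [rng.uniform(-0.5, 0.5) for _ in range(3 * hidden)]
        self.head_b = 0.0

    def predict_proba(self, query_emb: Vector, doc_emb: Vector, inter_feats: Vector) -> float:
        # Each tower encodes its own input independently; list "+" concatenates the outputs.
        concat = (
            self.query_tower(query_emb)
            + self.doc_tower(doc_emb)
            + self.interaction_tower(inter_feats)
        )
        # The classification head maps the concatenated vector to a click probability in (0, 1).
        logit = sum(w * h for w, h in zip(self.head_w, concat)) + self.head_b
        return sigmoid(logit)
```

In the real system, `query_emb` and `doc_emb` would be the fine-tuned SBERT embeddings, and `inter_feats` would be the engineered interaction features.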

Candidate jobs are ranked by their predicted click probabilities from high to low, which produces personalized job recommendations. Through this process, each piece of information (the user’s search intent, the job listing details, or past interactions) is fully captured by a tower dedicated to it. The complex relationships between these sources are captured by combining the tower outputs.
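Because the model scores each job independently (pointwise), producing the ranked list is a sort over predicted probabilities. A minimal sketch follows. The function name `rank_jobs` and the `top_k` cutoff are hypothetical, and `click_proba` stands in for the trained model’s scoring function.

```python
from typing import Callable, List, Sequence, Tuple


def rank_jobs(
    candidates: Sequence[str],
    click_proba: Callable[[str], float],
    top_k: int = 10,
) -> List[Tuple[str, float]]:
    """Score every candidate pointwise, then sort by predicted click probability, high to low."""
    scored = [(job, click_proba(job)) for job in candidates]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


if __name__ == "__main__":
    # Hypothetical scores standing in for model output.
    fake_scores = {"data engineer": 0.42, "barista": 0.03, "ml engineer": 0.67}
    print(rank_jobs(list(fake_scores), fake_scores.get, top_k=2))
```

Pointwise scoring keeps ranking simple: candidates can be scored in parallel or in batches, and the ordering depends only on each job’s own probability.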

Feature engineering

We perform two sets of feature engineering processes to extract valuable information from the raw data and feed it into the corresponding towers in the model: standard feature engineering and fine-tuned SBERT embeddings.

Standard feature engineering

Our data preparation process begins with standard feature engineering. Overall, we define four types of features:

Popularity – We calculate popularity scores at the individual job level, occupation level, and company level. This provides a metric of how attractive a particular job or company might be.

Textual similarity – To understand the contextual relationship between different textual elements, we compute similarity scores, including the string similarity between the search query and the job title. This helps us gauge the relevance of a job opening to a job seeker’s search or application history.

Interaction – In addition, we extract interaction features from past user engagements with job listings. A prime example is the embedding similarity between previously clicked job titles and candidate job titles. This measure helps us understand how similar previous jobs a user has shown interest in are to upcoming job opportunities, which improves the precision of our job recommendation engine.

Profile – Lastly, we extract user-defined job interest information from the user profile and compare it with new job candidates. This helps us understand whether a job candidate matches a user’s interests.
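The post does not publish exact formulas for these four feature types. The following dependency-free sketch shows one plausible implementation of each, and every definition is an assumption. Popularity is a smoothed click-through rate, string similarity uses `difflib`’s ratio, the interaction feature is the maximum cosine similarity against previously clicked titles, and the profile match is token overlap. All function names are hypothetical.

```python
import math
from difflib import SequenceMatcher
from typing import Iterable, Sequence


def popularity(clicks: int, impressions: int, prior_ctr: float = 0.05, strength: float = 20.0) -> float:
    """Popularity (assumed): CTR smoothed toward `prior_ctr` so low-traffic items aren't extreme."""
    return (clicks + prior_ctr * strength) / (impressions + strength)


def string_similarity(query: str, job_title: str) -> float:
    """Textual similarity: character-level match ratio in [0, 1]."""
    return SequenceMatcher(None, query.lower(), job_title.lower()).ratio()


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def max_history_similarity(candidate_emb: Sequence[float], clicked_embs: Iterable[Sequence[float]]) -> float:
    """Interaction: highest cosine similarity between the candidate title and any clicked title."""
    return max((cosine(candidate_emb, e) for e in clicked_embs), default=0.0)


def profile_match(interests: Iterable[str], job_title: str) -> float:
    """Profile: fraction of the user's declared interest terms that appear in the job title."""
    terms = {t.lower() for t in interests}
    title_tokens = set(job_title.lower().split())
    return len(terms & title_tokens) / len(terms) if terms else 0.0
```

Each function returns a scalar, so a candidate’s feature vector for the interaction encoder can be assembled by calling them in sequence.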

A crucial step in our data preparation is applying a pre-trained NER model. The NER model identifies and labels named entities within job titles and search queries. This lets us compute similarity scores between the identified entities, which gives a more focused and context-aware measure of relatedness. This methodology reduces the noise in our data and gives us a more nuanced, context-sensitive way of comparing jobs.
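Entity-level comparison can be sketched as follows. This does not reproduce the team’s NER model: a small hypothetical gazetteer (`GAZETTEER`) stands in for the pre-trained model. The entity labels and the use of Jaccard similarity over labeled entities are also illustrative assumptions.

```python
from typing import Dict, Set, Tuple

# Hypothetical gazetteer standing in for a pre-trained NER model (token -> entity label).
GAZETTEER: Dict[str, str] = {
    "nurse": "ROLE",
    "engineer": "ROLE",
    "python": "SKILL",
    "icu": "SKILL",
    "toronto": "LOCATION",
}


def extract_entities(text: str) -> Set[Tuple[str, str]]:
    """Return (label, entity) pairs; a real pipeline would run the NER model here."""
    entities = set()
    for token in text.lower().split():
        clean = token.strip(",.()")
        label = GAZETTEER.get(clean)
        if label is not None:
            entities.add((label, clean))
    return entities


def entity_similarity(query: str, job_title: str) -> float:
    """Jaccard similarity between the labeled entities of a query and a job title."""
    q, t = extract_entities(query), extract_entities(job_title)
    union = q | t
    return len(q & t) / len(union) if union else 0.0
```

With this sketch, "ICU nurse Toronto" and "Registered Nurse, ICU" score 2/3 because they share the role and skill entities. Unrecognized words such as "Registered" are ignored rather than diluting the score, which is the noise reduction the paragraph above describes.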

Fine-tuned SBERT embeddings

To enhance the relevance and accuracy of our job recommendation system, we use SBERT, a transformer-based model known for capturing semantic meaning and context from text. However, generic embeddings like SBERT, although effective, may not fully capture the nuances and terminology of a specific domain such as ours, which centers on employment and job searches. To overcome this, we fine-tune the SBERT embeddings on our domain-specific data. Fine-tuning helps the model better understand industry-specific language, jargon, and context, so the embeddings better reflect our domain. As a result, the refined embeddings capture semantic and contextual information in this domain more effectively, which leads to more accurate and meaningful job recommendations for our users.

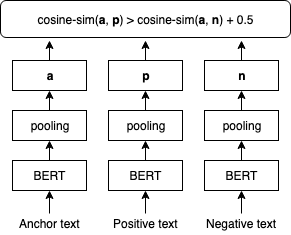

The following figure illustrates the SBERT fine-tuning step.

We fine-tune SBERT embeddings using TripletLoss with a cosine distance metric. The resulting text embeddings place each anchor closer to its positive text than to its negative text, as measured by cosine similarity. We use users’ search queries as anchor texts. For the positive and negative texts, we combine job titles and employer names. The positive texts are sampled from job postings that the corresponding user clicked, whereas the negative texts are sampled from job postings that the user didn’t click. The following is a sample implementation of the fine-tuning procedure:
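The following minimal sketch shows how a training triplet is assembled and what the cosine-distance triplet loss computes. It makes three assumptions: cosine distance is 1 minus cosine similarity, the loss is a hinge with a margin, and title and employer are joined with a space. The margin value and the helper `make_triplet_texts` are hypothetical. The fixed vectors in the test stand in for SBERT embeddings.

```python
import math
from typing import Sequence, Tuple


def make_triplet_texts(
    query: str, clicked: Tuple[str, str], not_clicked: Tuple[str, str]
) -> Tuple[str, str, str]:
    """Anchor = search query; positive/negative = job title joined with employer name."""
    return query, f"{clicked[0]} {clicked[1]}", f"{not_clicked[0]} {not_clicked[1]}"


def cosine_distance(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine distance: 1 minus cosine similarity."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / norm


def triplet_loss(
    anchor: Sequence[float],
    positive: Sequence[float],
    negative: Sequence[float],
    margin: float = 0.5,
) -> float:
    """Zero once the negative is at least `margin` farther from the anchor than the positive."""
    return max(cosine_distance(anchor, positive) - cosine_distance(anchor, negative) + margin, 0.0)
```

In an actual fine-tuning run, the SBERT encoder produces these embeddings, and the gradient of this loss updates the encoder. The sentence-transformers library, for example, provides this objective as `losses.TripletLoss` with `distance_metric=losses.TripletDistanceMetric.COSINE`.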

Model training with SageMaker Distributed Data Parallel

We use SageMaker Distributed Data Parallel (SMDDP), a feature of the SageMaker ML platform built on top of PyTorch DDP. It provides an optimized environment for running PyTorch DDP training jobs on the SageMaker platform and is designed to significantly speed up deep learning model training. It does this by splitting a large dataset into smaller chunks and distributing them across multiple GPUs. The model is replicated on every GPU. Each GPU processes its assigned data independently, and the results are collated and synchronized across all GPUs. DDP handles gradient communication to keep the model replicas synchronized, and it overlaps this communication with gradient computation to speed up training. SMDDP uses an optimized AllReduce algorithm to minimize communication between GPUs, which reduces synchronization time and improves overall training speed. The algorithm adapts to different network conditions, making it highly efficient in both on-premises and cloud-based environments. In the SMDDP architecture (as shown in the following figure), distributed training can also be scaled across a cluster of many nodes. This means not just multiple GPUs in one compute instance, but many instances with multiple GPUs each, which further speeds up training.
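The synchronization described above can be illustrated with a toy, single-process simulation of one data-parallel SGD step. The data is split into shards, each simulated worker computes a gradient on its own shard, an AllReduce-style average gives every worker the same gradient, and every replica applies the same update. This is a conceptual sketch of data parallelism in general, not SMDDP’s AllReduce algorithm, which performs the communication across real GPUs and nodes. The 1-D linear model and all function names are hypothetical stand-ins.

```python
from typing import List, Sequence, Tuple

Example = Tuple[float, float]  # (x, y) for a toy model y_hat = w * x


def local_gradient(w: float, shard: Sequence[Example]) -> float:
    """Mean-squared-error gradient with respect to w, computed on one worker's shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values: Sequence[float]) -> float:
    """What an averaging AllReduce delivers to every worker: the mean of all inputs."""
    return sum(values) / len(values)


def data_parallel_step(w: float, data: Sequence[Example], num_workers: int, lr: float) -> List[float]:
    """One synchronous step; returns each replica's updated weight (they stay identical)."""
    shards = [data[i::num_workers] for i in range(num_workers)]  # split data across workers
    grads = [local_gradient(w, shard) for shard in shards]      # computed independently
    g = all_reduce_mean(grads)                                   # synchronize gradients
    return [w - lr * g for _ in range(num_workers)]              # same update on every replica
```

With equally sized shards, the averaged gradient equals the full-batch gradient, so data parallelism changes where the work is done without changing the update.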

For more information about this architecture, refer to Introduction to SageMaker’s Distributed Data Parallel Library.

With SMDDP, we have significantly reduced the training time for our TTDP model, making training approximately eight times faster. Faster training means we can iterate on and improve our models more quickly, which delivers better job recommendations to our users sooner. This efficiency gain is instrumental in keeping our job recommendation engine competitive in a fast-evolving job market.

You can adapt your training script to use SMDDP with only three lines of code, as shown in the following code block. Using PyTorch as an example, the main change is to import the SMDDP library’s PyTorch client (smdistributed.dataparallel.torch.torch_smddp). The client registers smddp as a backend for PyTorch, which you then pass when initializing the process group.
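The following sketch places the three SMDDP lines (importing `torch.distributed`, importing the SMDDP PyTorch client, and calling `init_process_group(backend="smddp")`) inside a helper. The `gloo` fallback for machines without SMDDP is an assumption added only so that the script can be smoke-tested locally. The helper names `smddp_available` and `init_distributed` are hypothetical.

```python
import importlib.util


def smddp_available() -> bool:
    """True when the SMDDP library is installed, for example in a SageMaker GPU training container."""
    return importlib.util.find_spec("smdistributed") is not None


def init_distributed() -> str:
    """Initialize torch.distributed, preferring the SMDDP backend when it is installed."""
    import torch.distributed as dist  # requires PyTorch

    if smddp_available():
        # Importing the SMDDP PyTorch client registers "smddp" as a torch.distributed backend.
        import smdistributed.dataparallel.torch.torch_smddp  # noqa: F401
        backend = "smddp"
    else:
        # Assumption for local testing only: PyTorch's built-in CPU backend. The default
        # env:// init method expects MASTER_ADDR, MASTER_PORT, RANK, and WORLD_SIZE to be set.
        backend = "gloo"
    dist.init_process_group(backend=backend)
    return backend
```

After initialization, the rest of the script is a standard PyTorch DDP script. The model is wrapped in `torch.nn.parallel.DistributedDataParallel`, and each process typically pins itself to one GPU using its local rank.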

After you have a working PyTorch script adapted to use the distributed data parallel library, you can launch a distributed training job using the SageMaker Python SDK.
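The following sketch shows a launch with the SageMaker Python SDK’s `PyTorch` estimator. The `distribution={"smdistributed": {"dataparallel": {"enabled": True}}}` setting is what turns on the library. The entry point name, IAM role, framework and Python versions, instance type, instance count, and S3 URI are placeholders: check the SMDDP documentation for supported combinations. The helper names are hypothetical, and calling `launch` requires the `sagemaker` package and AWS credentials.

```python
from typing import Any, Dict


def smddp_estimator_kwargs(
    entry_point: str,
    role: str,
    instance_count: int = 2,
    instance_type: str = "ml.p4d.24xlarge",
) -> Dict[str, Any]:
    """Keyword arguments for sagemaker.pytorch.PyTorch that enable the SMDDP library."""
    return {
        "entry_point": entry_point,        # the adapted training script
        "role": role,                      # IAM role that SageMaker assumes
        "framework_version": "2.0.1",      # assumption: pick a version SMDDP supports
        "py_version": "py310",
        "instance_count": instance_count,  # more than 1 scales training across nodes
        "instance_type": instance_type,    # SMDDP targets multi-GPU instance types
        "distribution": {"smdistributed": {"dataparallel": {"enabled": True}}},
    }


def launch(train_s3_uri: str, **kwargs: Any) -> None:
    """Create the estimator and start the training job."""
    from sagemaker.pytorch import PyTorch  # requires the SageMaker Python SDK

    estimator = PyTorch(**smddp_estimator_kwargs(**kwargs))
    estimator.fit(train_s3_uri)
```

Keeping the configuration in a plain dictionary makes it easy to inspect or version-control separately from the call that actually starts a billed training job.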

Evaluating model performance

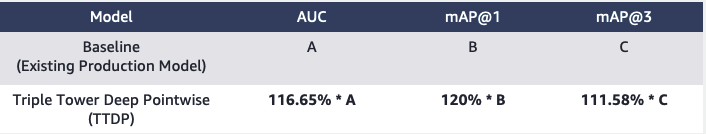

When evaluating the performance of a recommendation system, it’s crucial to choose metrics that align closely with business goals and give a clear picture of the model’s effectiveness. In our case, we use AUC to evaluate our TTDP model’s job click prediction performance and mAP@K to assess the quality of the final ranked job list.

AUC refers to the area under the receiver operating characteristic (ROC) curve. It represents the probability that a randomly chosen positive example is ranked higher than a randomly chosen negative example. It ranges from 0–1, where 1 indicates an ideal classifier and 0.5 represents a random guess. mAP@K is a metric commonly used to assess the quality of information retrieval systems, such as our job recommender engine. It computes the average precision over the top K ranked items for each query or user and then takes the mean across queries. It ranges from 0–1, where 1 indicates optimal ranking and 0 indicates the lowest possible precision at the given K. We evaluate AUC, mAP@1, and mAP@3. Together, these metrics let us gauge the model’s ability to distinguish between positive and negative classes (AUC) and its success at ranking the most relevant items at the top (mAP@K).
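Both offline metrics can be computed from logged clicks. The sketch below assumes binary click labels and computes AUC with the pairwise definition given above, counting ties as one half. Conventions for mAP@K vary, so AP@K here divides by the smaller of K and the number of clicked items. This is one common choice and not necessarily the one used in this evaluation.

```python
from typing import Sequence


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Probability that a random positive outscores a random negative (ties count 1/2)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision_at_k(ranked_relevance: Sequence[int], k: int) -> float:
    """AP@K for one query; ranked_relevance[i] is 1 if the job at rank i + 1 was clicked."""
    total_relevant = sum(ranked_relevance)
    if total_relevant == 0:
        return 0.0
    hits, precision_sum = 0, 0.0
    for rank, rel in enumerate(ranked_relevance[:k], start=1):
        if rel:
            hits += 1
            precision_sum += hits / rank  # precision at this cutoff
    return precision_sum / min(k, total_relevant)


def map_at_k(queries: Sequence[Sequence[int]], k: int) -> float:
    """Mean of AP@K over all queries."""
    return sum(average_precision_at_k(q, k) for q in queries) / len(queries)
```

For example, a query whose ranked jobs were clicked at positions 1 and 3 gets AP@3 = (1/1 + 2/3) / 2 = 5/6.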

Based on our offline evaluation, the TTDP model outperformed the baseline model (the existing XGBoost-based production model) by 16.65% for AUC, 20% for mAP@1, and 11.82% for mAP@3.

Furthermore, we designed an online A/B test to evaluate the proposed system and ran it on a percentage of the US email population for six weeks. In total, approximately 22 million emails were sent with jobs recommended by the new system. Compared to the previous production model, clicks rose by 8.6%. Talent.com is gradually increasing this percentage to roll out the new system to its full population and all channels.

Conclusion

Creating a job recommendation system is a complex endeavor. Each job seeker has unique needs, preferences, and professional experiences that can’t be inferred from a short search query. In this post, Talent.com collaborated with AWS to develop an end-to-end deep learning-based job recommender solution that ranks lists of jobs to recommend to users. The Talent.com team truly enjoyed collaborating with the AWS team throughout the process of solving this problem. This marks a significant milestone in Talent.com’s transformative journey, as the team uses deep learning to empower its business.

In this project, we fine-tuned SBERT to generate text embeddings. At the time of writing, AWS introduced Amazon Titan Embeddings as part of its foundation models (FMs) offered through Amazon Bedrock, a fully managed service that provides a selection of high-performing foundation models from leading AI companies. We encourage readers to explore the machine learning techniques presented in this post and to use capabilities provided by AWS, such as SMDDP, together with the foundation models in Amazon Bedrock to create their own search functionalities.


About the authors

Yi Xiang is an Applied Scientist II at the Amazon Machine Learning Solutions Lab, where she helps AWS customers across different industries accelerate their AI and cloud adoption.

Tong Wang is a Senior Applied Scientist at the Amazon Machine Learning Solutions Lab, where he helps AWS customers across different industries accelerate their AI and cloud adoption.

Dmitriy Bespalov is a Senior Applied Scientist at the Amazon Machine Learning Solutions Lab, where he helps AWS customers across different industries accelerate their AI and cloud adoption.

Anatoly Khomenko is a Senior Machine Learning Engineer at Talent.com with a passion for using natural language processing to match good people to good jobs.

Abdenour Bezzouh is an executive with more than 25 years of experience building and delivering technology solutions that scale to millions of customers. Abdenour held the position of Chief Technology Officer (CTO) at Talent.com when the AWS team designed and executed this particular solution for Talent.com.

Dale Jacques is a Senior AI Strategist within the Generative AI Innovation Center, where he helps AWS customers translate business problems into AI solutions.

Yanjun Qi is a Senior Applied Science Manager at the Amazon Machine Learning Solutions Lab. She innovates and applies machine learning to help AWS customers speed up their AI and cloud adoption.
