Customers of every size and industry are innovating on AWS by infusing machine learning (ML) into their products and services. Recent developments in generative AI models have further accelerated the need for ML adoption across industries. However, implementing security, data privacy, and governance controls remains a key challenge for customers running ML workloads at scale. Addressing these challenges builds the framework and foundations for mitigating risk and for the responsible use of ML-driven products. Although generative AI may need additional controls in place, such as removing toxicity and preventing jailbreaking and hallucinations, it shares the same foundational components for security and governance as traditional ML.

We hear from customers that building a customized Amazon SageMaker ML platform requires specialized knowledge and up to 12 months of investment. That platform must provide scalable, reliable, secure, and governed ML environments for their lines of business (LOBs) or ML teams. If you lack a framework for governing the ML lifecycle at scale, you may run into challenges such as team-level resource isolation, scaling experimentation resources, operationalizing ML workflows, scaling model governance, and managing security and compliance of ML workloads.

Governing the ML lifecycle at scale is a framework to help you build an ML platform with embedded security and governance controls based on industry best practices and enterprise standards. The framework addresses these challenges by providing prescriptive guidance through a modular approach. It extends an AWS Control Tower multi-account AWS environment and the approach discussed in the post Setting up secure, well-governed machine learning environments on AWS.

It provides prescriptive guidance for the following ML platform functions:

Multi-account, security, and networking foundations – This function uses AWS Control Tower and well-architected principles for setting up and operating the multi-account environment, security, and networking services.

Data and governance foundations – This function uses a data mesh architecture for setting up and operating the data lake, central feature store, and data governance foundations to enable fine-grained data access.

ML platform shared and governance services – This function enables setting up and operating common services such as CI/CD, AWS Service Catalog for provisioning environments, and a central model registry for model promotion and lineage.

ML team environments – This function enables setting up and operating environments where ML teams develop, test, and deploy their use cases with embedded security and governance controls.

ML platform observability – This function helps with troubleshooting and identifying the root cause of problems in ML models by centralizing logs and providing tools for log analysis and visualization. It also provides guidance for generating cost and usage reports for ML use cases.

Although this framework can benefit all customers, it's most beneficial for large, mature, regulated, or global enterprise customers that want to scale their ML strategies in a controlled, compliant, and coordinated manner across the organization. It helps enable ML adoption while mitigating risks. This framework is useful for the following customers:

Large enterprise customers that have many LOBs or departments interested in using ML. This framework allows different teams to build and deploy ML models independently while providing central governance.

Enterprise customers with moderate to high maturity in ML. They have already deployed some initial ML models and want to scale their ML efforts. This framework can help accelerate ML adoption across the organization. These companies also recognize the need for governance to manage concerns such as access control, data usage, model performance, and unfair bias.

Companies in regulated industries such as financial services, healthcare, chemistry, and the private sector. These companies need strong governance and auditability for any ML models used in their business processes. Adopting this framework can help facilitate compliance while still allowing for local model development.

Global organizations that need to balance centralized and local control. This framework's federated approach allows the central platform engineering team to set some high-level policies and standards, but also gives LOB teams flexibility to adapt based on local needs.

In the first part of this series, we walk through the reference architecture for setting up the ML platform. In a later post, we will provide prescriptive guidance on how to implement the various modules of the reference architecture in your organization.

The capabilities of the ML platform are grouped into four categories, as shown in the following figure. These capabilities form the foundation of the reference architecture discussed later in this post:

Build ML foundations

Scale ML operations

Observable ML

Secure ML

Solution overview

The framework for governing the ML lifecycle at scale enables organizations to embed security and governance controls throughout the ML lifecycle. This in turn helps them reduce risk and accelerate infusing ML into their products and services. The framework helps optimize the setup and governance of secure, scalable, and reliable ML environments that can scale to support an increasing number of models and projects. The framework enables the following features:

Account and infrastructure provisioning with organization policy-compliant infrastructure resources

Self-service deployment of data science environments and end-to-end ML operations (MLOps) templates for ML use cases

LOB-level or team-level isolation of resources for security and privacy compliance

Governed access to production-grade data for experimentation and production-ready workflows

Management and governance for code repositories, code pipelines, deployed models, and data features

A model registry and feature store (local and central components) for improving governance

Security and governance controls for the end-to-end model development and deployment process

In this section, we provide an overview of prescriptive guidance to help you build this ML platform on AWS with embedded security and governance controls.

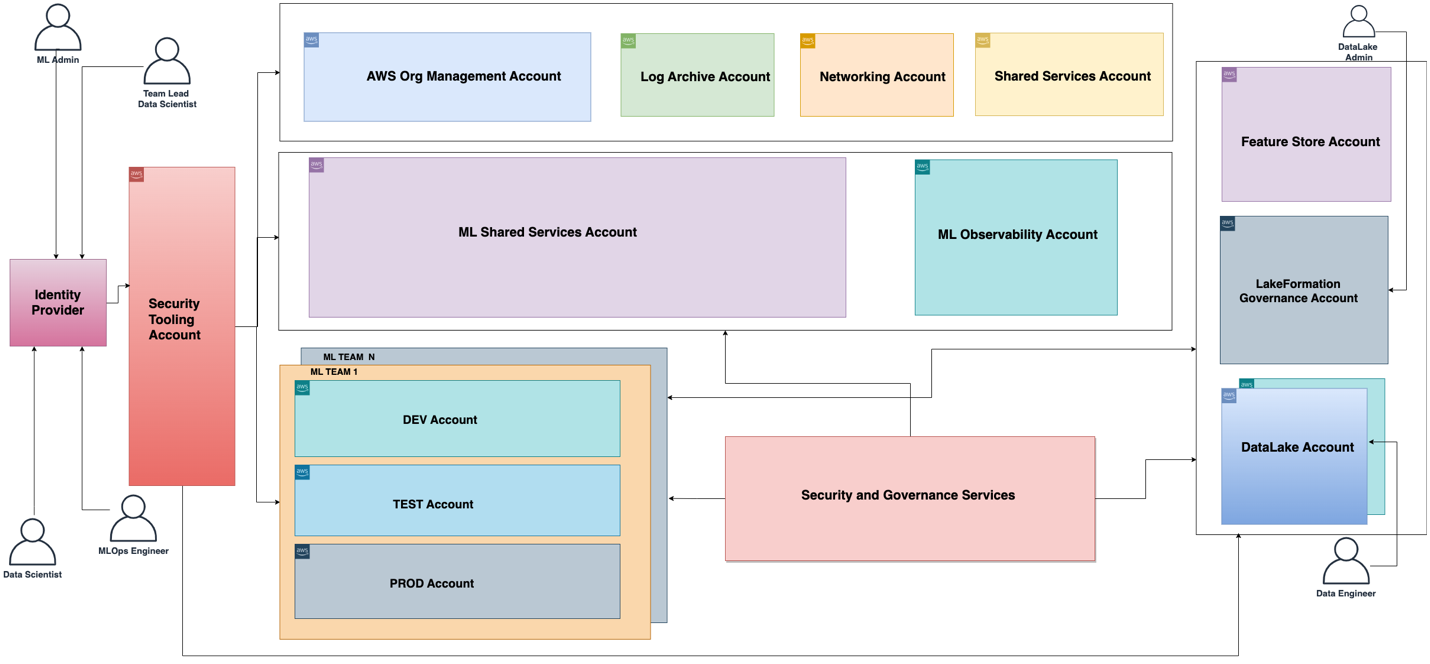

The functional architecture associated with the ML platform is shown in the following diagram. The architecture maps the different capabilities of the ML platform to AWS accounts.

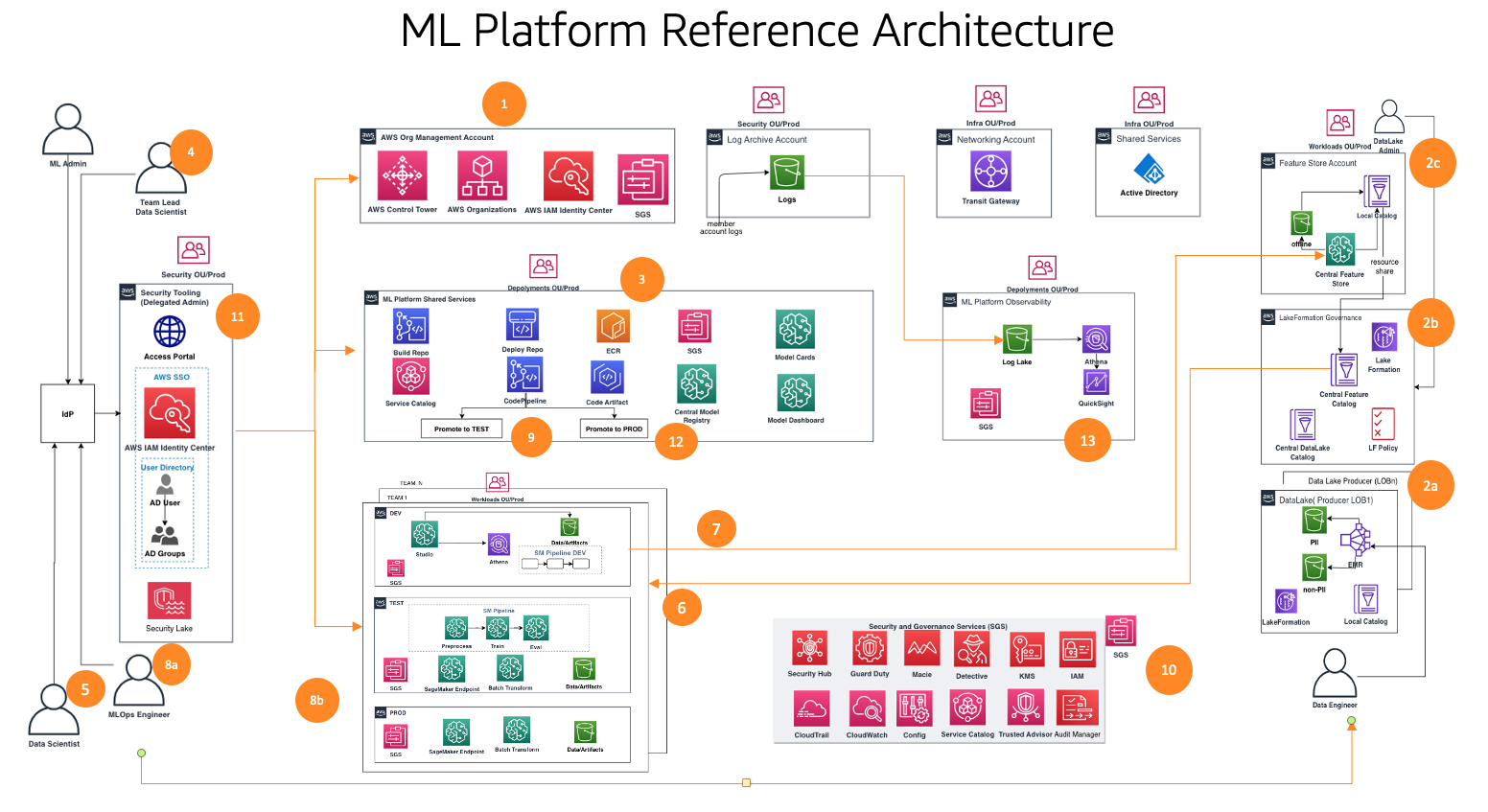

The functional architecture with different capabilities is implemented using a number of AWS services, including AWS Organizations, SageMaker, AWS DevOps services, and a data lake. The reference architecture for the ML platform with various AWS services is shown in the following diagram.

This framework considers multiple personas and services to govern the ML lifecycle at scale. We recommend the following steps to organize your teams and services:

Using AWS Control Tower and automation tooling, your cloud administrator sets up the multi-account foundations, such as Organizations and AWS IAM Identity Center (successor to AWS Single Sign-On), and security and governance services, such as AWS Key Management Service (AWS KMS) and Service Catalog. In addition, the administrator sets up a variety of organizational units (OUs) and initial accounts to support your ML and analytics workflows.

Data lake administrators set up your data lake and data catalog, and set up the central feature store working with the ML platform admin.

The ML platform admin provisions ML shared services such as AWS CodeCommit, AWS CodePipeline, Amazon Elastic Container Registry (Amazon ECR), a central model registry, SageMaker Model Cards, SageMaker Model Dashboard, and Service Catalog products for ML teams.

The ML team lead federates through IAM Identity Center, uses Service Catalog products, and provisions resources in the ML team's development environment.

Data scientists from ML teams across different business units federate into their team's development environment to build the model pipeline.

Data scientists search for and pull features from the central feature store catalog, build models through experiments, and select the best model for promotion.

Data scientists create new features and share them in the central feature store catalog for reuse.

An ML engineer deploys the model pipeline into the ML team's test environment using a shared services CI/CD process.

After stakeholder validation, the ML model is deployed to the team's production environment.

Security and governance controls are embedded into every layer of this architecture using services such as AWS Security Hub, Amazon GuardDuty, Amazon Macie, and more.

Security controls are centrally managed from the security tooling account using Security Hub.

ML platform governance capabilities such as SageMaker Model Cards and SageMaker Model Dashboard are centrally managed from the governance services account.

Amazon CloudWatch and AWS CloudTrail logs from each member account are made accessible centrally from an observability account using AWS native services.

Next, we dive deep into the modules of the reference architecture for this framework.

Reference architecture modules

The reference architecture comprises eight modules, each designed to solve a specific set of problems. Collectively, these modules address governance across various dimensions, such as infrastructure, data, model, and cost. Each module offers a distinct set of functions and interoperates with the other modules to provide an integrated end-to-end ML platform with embedded security and governance controls. In this section, we present a short summary of each module's capabilities.

Multi-account foundations

This module helps cloud administrators build an AWS Control Tower landing zone as a foundational framework. This includes building a multi-account structure, authentication and authorization through IAM Identity Center, a network hub-and-spoke design, centralized logging services, and new AWS member accounts with standardized security and governance baselines.

In addition, this module gives best practice guidance on OU and account structures that are appropriate for supporting your ML and analytics workflows. Cloud administrators will understand the purpose of the required accounts and OUs, how to deploy them, and the key security and compliance services they should use to centrally govern their ML and analytics workloads.

A framework for vending new accounts is also covered. It uses automation to apply a baseline to new accounts when they are provisioned. With an automated account provisioning process in place, cloud administrators can give ML and analytics teams the accounts they need more quickly, without sacrificing a strong foundation for governance.

Data lake foundations

This module helps data lake admins set up a data lake to ingest data, curate datasets, and use the AWS Lake Formation governance model to manage fine-grained data access across accounts and users. It relies on a centralized data catalog, data access policies, and tag-based access controls. You can start small with one account for your data platform foundations for a proof of concept or a few small workloads. For medium- to large-scale production workloads, we recommend adopting a multi-account strategy. In such a setting, LOBs can assume the role of data producers and data consumers using different AWS accounts, and data lake governance is operated from a central shared AWS account. The data producer collects, processes, and stores data from its data domain, and also monitors and ensures the quality of its data assets. Data consumers consume the data from the data producer after the centralized catalog shares it using Lake Formation. The centralized catalog stores and manages the shared data catalog for the data producer accounts.
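To make the tag-based access model more concrete, the following Python sketch mimics how a tag-based grant resolves to tables in a shared catalog. A grant lists the allowed values per tag key, and a table matches only if it carries an allowed value for every key in the expression. This is a simplified, in-memory illustration under stated assumptions, not the Lake Formation API. The tag keys (`domain`, `classification`), table names, and principal names are hypothetical, and the sketch ignores tag inheritance from databases and column-level tags.

```python
from dataclasses import dataclass, field


@dataclass
class Table:
    database: str
    name: str
    lf_tags: dict  # tag key -> the single value assigned to this table


@dataclass
class TagGrant:
    principal: str  # hypothetical consumer principal, e.g. an account or role
    expression: dict  # tag key -> set of allowed values
    permissions: set = field(default_factory=lambda: {"DESCRIBE", "SELECT"})


def matches(expression: dict, table_tags: dict) -> bool:
    # AND across tag keys, OR across the allowed values of one key;
    # a table that is missing one of the keys does not match.
    return all(table_tags.get(key) in allowed for key, allowed in expression.items())


def queryable_tables(principal: str, grants: list, catalog: list) -> list:
    """Return 'database.table' names the principal can SELECT through tag grants."""
    result = set()
    for grant in grants:
        if grant.principal != principal or "SELECT" not in grant.permissions:
            continue
        for table in catalog:
            if matches(grant.expression, table.lf_tags):
                result.add(f"{table.database}.{table.name}")
    return sorted(result)


# Example: a central catalog shared by a producer account (all values hypothetical).
catalog = [
    Table("sales", "orders", {"domain": "sales", "classification": "internal"}),
    Table("sales", "customers", {"domain": "sales", "classification": "confidential"}),
    Table("marketing", "campaigns", {"domain": "marketing", "classification": "internal"}),
]
grants = [TagGrant("ml-team-a", {"domain": {"sales"}, "classification": {"internal"}})]
print(queryable_tables("ml-team-a", grants, catalog))  # ['sales.orders']
```

Because access follows tags rather than individual tables, a producer that tags a new `sales` table as `internal` makes it visible to `ml-team-a` without issuing a new grant.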

ML platform services

This module helps the ML platform engineering team set up shared services that the data science teams use from their team accounts. The services include a Service Catalog portfolio with products for SageMaker domain deployment, SageMaker domain user profile deployment, and data science model templates for building and deploying models. The module also provides a centralized model registry, model cards, a model dashboard, and the CI/CD pipelines used to orchestrate and automate model development and deployment workflows.

In addition, this module details how to implement the controls and governance required to enable persona-based self-service capabilities, allowing data science teams to independently deploy their required cloud infrastructure and ML templates.

ML use case development

This module helps LOBs and data scientists access their team's SageMaker domain in a development environment and instantiate a model building template to develop their models. In this module, data scientists work on a dev account instance of the template. There they interact with the data available in the centralized data lake, reuse and share features from a central feature store, create and run ML experiments, build and test their ML workflows, and register their models to a dev account model registry in their development environments.

Capabilities such as experiment tracking, model explainability reports, data and model bias monitoring, and model registry are also implemented in the templates, so the solutions can be rapidly adapted to the models that data scientists develop.

ML operations

This module helps LOBs and ML engineers work on their dev instances of the model deployment template. After the candidate model is registered and approved, they set up CI/CD pipelines and run ML workflows in the team's test environment. This registers the model into the central model registry running in a platform shared services account. When a model is approved in the central model registry, this triggers a CI/CD pipeline to deploy the model into the team's production environment.
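The promotion flow described above can be sketched as a small event-driven state machine. The sketch assumes two registries, a team dev registry and the central registry in the shared services account. It reuses the approval status strings of the SageMaker model registry (`PendingManualApproval`, `Approved`, `Rejected`). The `Registry` and `set_status` names are hypothetical, and the CI/CD pipelines are reduced to log entries rather than real CodePipeline runs.

```python
from dataclasses import dataclass, field
from enum import Enum


class ApprovalStatus(str, Enum):
    # Same string values as the SageMaker model registry's ModelApprovalStatus.
    PENDING = "PendingManualApproval"
    APPROVED = "Approved"
    REJECTED = "Rejected"


@dataclass
class ModelVersion:
    name: str
    version: int
    status: ApprovalStatus = ApprovalStatus.PENDING


@dataclass
class Registry:
    scope: str  # "dev" (team account) or "central" (shared services account)
    versions: list = field(default_factory=list)

    def register(self, name: str, version: int) -> ModelVersion:
        mv = ModelVersion(name, version)  # new versions start pending approval
        self.versions.append(mv)
        return mv


def set_status(registry: Registry, mv: ModelVersion, status: ApprovalStatus,
               central: Registry, pipeline_log: list) -> None:
    """Apply an approval decision and run the (simulated) CI/CD step it triggers."""
    mv.status = status
    if status is not ApprovalStatus.APPROVED:
        return  # rejected or pending versions trigger nothing
    if registry.scope == "dev":
        # The test-environment workflow runs, then registers the model centrally.
        pipeline_log.append(f"test: {mv.name}:v{mv.version}")
        central.register(mv.name, mv.version)
    elif registry.scope == "central":
        # Central approval is the gate for the production deployment pipeline.
        pipeline_log.append(f"prod: {mv.name}:v{mv.version}")


dev, central, log = Registry("dev"), Registry("central"), []
candidate = dev.register("churn-model", 1)
set_status(dev, candidate, ApprovalStatus.APPROVED, central, log)
set_status(central, central.versions[0], ApprovalStatus.APPROVED, central, log)
print(log)  # ['test: churn-model:v1', 'prod: churn-model:v1']
```

The key design point is that approval in the dev registry never deploys to production directly. It only places a new pending version in the central registry, where a second approval gates the production pipeline.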

Centralized feature store

After the first models are deployed to production and multiple use cases start to share features created from the same data, a feature store becomes essential for collaboration across use cases and for reducing duplicate work. This module helps the ML platform engineering team set up a centralized feature store to provide storage and governance for ML features created by the ML use cases, enabling feature reuse across projects.

Logging and observability

This module helps LOBs and ML practitioners gain visibility into the state of ML workloads across ML environments by centralizing log activity such as CloudTrail logs, CloudWatch logs, VPC flow logs, and ML workload logs. Teams can filter, query, and visualize logs for analysis, which can also help improve their security posture.
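As an illustration of the kind of query teams might run once logs are centralized, the following sketch scans CloudTrail records (the `Records` array of a CloudTrail log file) for SageMaker API calls. It counts the calls per account and API, and it lists the calls that were denied. It assumes the logs have already been collected in the observability account and loaded as JSON. The account IDs and role ARNs are placeholders, and treating any `errorCode` that starts with `AccessDenied` as a denial is an assumption. In practice you would more likely run equivalent queries with CloudWatch Logs Insights or Amazon Athena.

```python
import json
from collections import Counter

SAGEMAKER_SOURCE = "sagemaker.amazonaws.com"


def load_records(log_file_text: str) -> list:
    # A CloudTrail log file is a JSON object with a top-level "Records" array.
    return json.loads(log_file_text).get("Records", [])


def sagemaker_calls_by_account(records: list) -> Counter:
    """Count SageMaker API calls per (account ID, API name)."""
    counts = Counter()
    for rec in records:
        if rec.get("eventSource") == SAGEMAKER_SOURCE:
            counts[(rec.get("recipientAccountId"), rec.get("eventName"))] += 1
    return counts


def denied_sagemaker_calls(records: list) -> list:
    """List (account, API, caller ARN) for SageMaker calls rejected as access denied."""
    return [
        (rec.get("recipientAccountId"), rec.get("eventName"),
         rec.get("userIdentity", {}).get("arn"))
        for rec in records
        if rec.get("eventSource") == SAGEMAKER_SOURCE
        # Assumption: denial codes start with "AccessDenied"; exact values vary by service.
        and str(rec.get("errorCode", "")).startswith("AccessDenied")
    ]


# Placeholder log content; account IDs and ARNs are not real.
sample = json.dumps({"Records": [
    {"eventSource": "sagemaker.amazonaws.com", "eventName": "CreateEndpoint",
     "recipientAccountId": "111111111111",
     "userIdentity": {"arn": "arn:aws:sts::111111111111:assumed-role/ml-engineer/alice"}},
    {"eventSource": "sagemaker.amazonaws.com", "eventName": "CreateTrainingJob",
     "recipientAccountId": "222222222222", "errorCode": "AccessDeniedException",
     "userIdentity": {"arn": "arn:aws:sts::222222222222:assumed-role/data-scientist/bob"}},
    {"eventSource": "s3.amazonaws.com", "eventName": "GetObject",
     "recipientAccountId": "111111111111", "userIdentity": {}},
]})
records = load_records(sample)
print(sagemaker_calls_by_account(records))
print(denied_sagemaker_calls(records))
```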

Cost and reporting

This module helps various stakeholders (cloud admin, platform admin, cloud business office) generate reports and dashboards that break down costs at the ML user, ML team, and ML product levels. It also helps them track usage, such as the number of users, instance types, and endpoints.
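A minimal sketch of that breakdown follows. It assumes each billing line item has already been exported into a simple dictionary, for example from AWS Cost and Usage Reports with cost allocation tags activated. The tag keys `ml-team`, `ml-user`, and `ml-product` and the field names `resource_id`, `usage_type`, `instance_type`, and `cost` are hypothetical. They are not the column names of any AWS report, and the line items are illustrative rather than real billing data.

```python
from collections import defaultdict
from decimal import Decimal


def cost_by_tag(line_items: list, tag_key: str) -> dict:
    """Sum cost per value of one tag; items without the tag go to 'untagged'."""
    totals = defaultdict(Decimal)
    for item in line_items:
        owner = item["tags"].get(tag_key, "untagged")
        totals[owner] += Decimal(item["cost"])  # Decimal avoids float rounding drift
    return dict(totals)


def usage_summary(line_items: list) -> dict:
    """Distinct users, instance types, and endpoints seen in the line items."""
    users = {i["tags"]["ml-user"] for i in line_items if "ml-user" in i["tags"]}
    instance_types = {i["instance_type"] for i in line_items if i.get("instance_type")}
    endpoints = {i["resource_id"] for i in line_items if i["usage_type"] == "endpoint"}
    return {"users": len(users), "instance_types": sorted(instance_types),
            "endpoints": len(endpoints)}


# Illustrative line items (hypothetical fields, tags, and amounts).
items = [
    {"resource_id": "train-fraud-1", "usage_type": "training", "instance_type": "ml.m5.xlarge",
     "cost": "12.50", "tags": {"ml-team": "fraud", "ml-user": "alice", "ml-product": "fraud-detector"}},
    {"resource_id": "ep-fraud", "usage_type": "endpoint", "instance_type": "ml.c5.large",
     "cost": "30.00", "tags": {"ml-team": "fraud", "ml-user": "alice", "ml-product": "fraud-detector"}},
    {"resource_id": "nb-churn", "usage_type": "notebook", "instance_type": "ml.t3.medium",
     "cost": "4.25", "tags": {"ml-team": "churn", "ml-user": "bob"}},
    {"resource_id": "ep-legacy", "usage_type": "endpoint", "instance_type": "ml.c5.large",
     "cost": "8.00", "tags": {}},
]
print(cost_by_tag(items, "ml-team"))
print(usage_summary(items))
```

Untagged spend is kept as its own bucket, so gaps in tagging stay visible in the report instead of silently disappearing.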

Customers have asked us for guidance on how many accounts to create and how to structure them. In the next section, we provide a reference account structure that you can modify to suit your enterprise governance requirements.

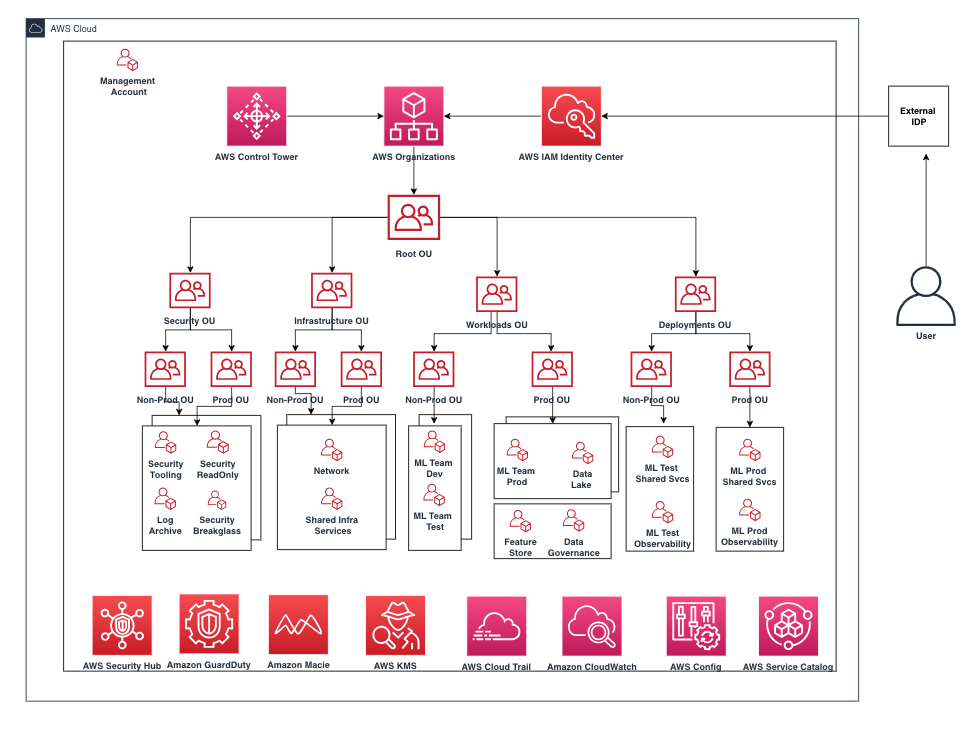

In this section, we discuss our recommendation for organizing your account structure. We share a baseline reference account structure; however, we recommend that ML and data admins work closely with their cloud admin to customize this account structure based on their organization's controls.

We recommend organizing accounts by OU for security, infrastructure, workloads, and deployments. Additionally, within each OU, organize accounts into non-production and production OUs, because the accounts and workloads deployed under them have different controls. Next, we briefly discuss these OUs.

Security OU

The accounts in this OU are managed by the organization's cloud admin or security team for monitoring, identifying, protecting, detecting, and responding to security events.

Infrastructure OU

The accounts in this OU are managed by the organization's cloud admin or network team for managing enterprise-level shared infrastructure resources and networks.

We recommend having the following accounts under the infrastructure OU:

Network – Set up a centralized networking infrastructure such as AWS Transit Gateway

Shared services – Set up centralized AD services and VPC endpoints

Workloads OU

The accounts in this OU are managed by the organization's platform team admins. If you need different controls implemented for each platform team, you can nest additional OU levels for that purpose, such as an ML workloads OU, a data workloads OU, and so on.

We recommend the following accounts under the workloads OU:

Team-level ML dev, test, and prod accounts – Set these up based on your workload isolation requirements

Data lake accounts – Partition accounts by your data domain

Central data governance account – Centralize your data access policies

Central feature store account – Centralize features for sharing across teams

Deployments OU

The accounts in this OU are managed by the organization's platform team admins for deploying workloads and observability.

We recommend the following accounts under the deployments OU, because the ML platform team can set up different sets of controls at this OU level to manage and govern deployments:

ML shared services accounts for test and prod – Host the platform shared services CI/CD and model registry

ML observability accounts for test and prod – Host CloudWatch logs, CloudTrail logs, and other logs as needed
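The recommended structure can also be captured as data, which makes it easier to review and to feed into whatever account vending automation you use. The following sketch generates an account plan for a given set of ML teams and data domains and groups it by OU and by non-production or production sub-OU. The account names are hypothetical. The security tooling and log archive accounts come from accounts mentioned elsewhere in this post, and placing the security, infrastructure, and central data accounts under a production sub-OU is an assumption for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    name: str   # hypothetical account name
    ou: str     # top-level OU: Security, Infrastructure, Workloads, Deployments
    stage: str  # "nonprod" or "prod" sub-OU


def _stage(env: str) -> str:
    return "prod" if env == "prod" else "nonprod"


def build_account_plan(teams: list, data_domains: list) -> list:
    plan = [
        # Shared accounts; their placement in the prod sub-OU is an assumption.
        Account("security-tooling", "Security", "prod"),
        Account("log-archive", "Security", "prod"),
        Account("network", "Infrastructure", "prod"),
        Account("shared-services", "Infrastructure", "prod"),
        Account("central-data-governance", "Workloads", "prod"),
        Account("central-feature-store", "Workloads", "prod"),
    ]
    for team in teams:  # team-level isolation: one dev, test, and prod account each
        for env in ("dev", "test", "prod"):
            plan.append(Account(f"ml-{team}-{env}", "Workloads", _stage(env)))
    for domain in data_domains:  # data lake accounts partitioned by data domain
        plan.append(Account(f"datalake-{domain}", "Workloads", "prod"))
    for env in ("test", "prod"):  # shared services and observability per stage
        plan.append(Account(f"ml-shared-services-{env}", "Deployments", _stage(env)))
        plan.append(Account(f"ml-observability-{env}", "Deployments", _stage(env)))
    return plan


def group_by_ou(plan: list) -> dict:
    """Map 'OU/stage' paths to the account names placed there."""
    tree = {}
    for account in plan:
        tree.setdefault(f"{account.ou}/{account.stage}", []).append(account.name)
    return tree


for path, names in group_by_ou(build_account_plan(["fraud", "churn"], ["sales"])).items():
    print(path, names)
```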

Next, we briefly discuss the organization controls to consider embedding into member accounts for monitoring infrastructure resources.

AWS environment controls

A control is a high-level rule that provides ongoing governance for your overall AWS environment. It's expressed in plain language. In this framework, we use AWS Control Tower to implement the following controls, which help you govern your resources and monitor compliance across groups of AWS accounts:

Preventive controls – A preventive control keeps your accounts compliant by disallowing actions that lead to policy violations. It is implemented using a service control policy (SCP). For example, you can set a preventive control that ensures that CloudTrail isn't deleted or stopped in AWS accounts or Regions.

Detective controls – A detective control detects noncompliant resources within your accounts, such as policy violations, and provides alerts through the dashboard. It is implemented using AWS Config rules. For example, you can create a detective control that detects whether public read access is enabled on the Amazon Simple Storage Service (Amazon S3) buckets in the log archive shared account.

Proactive controls – A proactive control scans your resources before they are provisioned and makes sure that they comply with that control. It is implemented using AWS CloudFormation hooks. Resources that aren't compliant will not be provisioned. For example, you can set a proactive control that checks that direct internet access isn't allowed for a SageMaker notebook instance.
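The following sketch illustrates the preventive and proactive examples above. The first part is a simplified SCP that denies the CloudTrail actions used to delete, stop, or modify a trail. It is not identical to the AWS Control Tower managed control, which includes additional actions and conditions. The second part mimics, in plain Python, the check that a proactive control performs on a CloudFormation template before provisioning. Any `AWS::SageMaker::NotebookInstance` whose `DirectInternetAccess` property isn't `Disabled` is flagged, and an omitted value counts as noncompliant because the service default is `Enabled`. The `is_denied` and `notebook_violations` helpers are hypothetical and evaluate only actions, ignoring resources and conditions.

```python
from fnmatch import fnmatchcase

# Simplified preventive control: an SCP that stops member accounts from
# deleting, stopping, or reconfiguring CloudTrail trails.
DENY_CLOUDTRAIL_CHANGES = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "DenyCloudTrailChanges",
            "Effect": "Deny",
            "Action": [
                "cloudtrail:DeleteTrail",
                "cloudtrail:StopLogging",
                "cloudtrail:UpdateTrail",
            ],
            "Resource": "*",
        }
    ],
}


def is_denied(policy: dict, action: str) -> bool:
    """True if an explicit Deny statement lists the action (resources and conditions ignored)."""
    for stmt in policy["Statement"]:
        patterns = stmt["Action"] if isinstance(stmt["Action"], list) else [stmt["Action"]]
        # IAM action names are case-insensitive and may use * wildcards.
        if stmt["Effect"] == "Deny" and any(
            fnmatchcase(action.lower(), p.lower()) for p in patterns
        ):
            return True
    return False


def notebook_violations(template: dict) -> list:
    """Logical IDs of notebook instances that would get direct internet access."""
    violations = []
    for logical_id, resource in template.get("Resources", {}).items():
        if resource.get("Type") != "AWS::SageMaker::NotebookInstance":
            continue
        props = resource.get("Properties", {})
        # The service default is "Enabled", so an omitted property is noncompliant.
        if props.get("DirectInternetAccess", "Enabled") != "Disabled":
            violations.append(logical_id)
    return violations


template = {
    "Resources": {
        "PrivateNotebook": {
            "Type": "AWS::SageMaker::NotebookInstance",
            "Properties": {"InstanceType": "ml.t3.medium", "DirectInternetAccess": "Disabled",
                           "SubnetId": "subnet-placeholder", "RoleArn": "arn:placeholder"},
        },
        "OpenNotebook": {
            "Type": "AWS::SageMaker::NotebookInstance",
            "Properties": {"InstanceType": "ml.t3.medium", "RoleArn": "arn:placeholder"},
        },
    }
}
print(is_denied(DENY_CLOUDTRAIL_CHANGES, "cloudtrail:StopLogging"))  # True
print(notebook_violations(template))  # ['OpenNotebook']
```

The two parts fail differently. The SCP blocks the API call at request time in every account it is attached to. The template check stops a noncompliant resource before CloudFormation creates it.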

Interactions between ML platform services, ML use cases, and ML operations

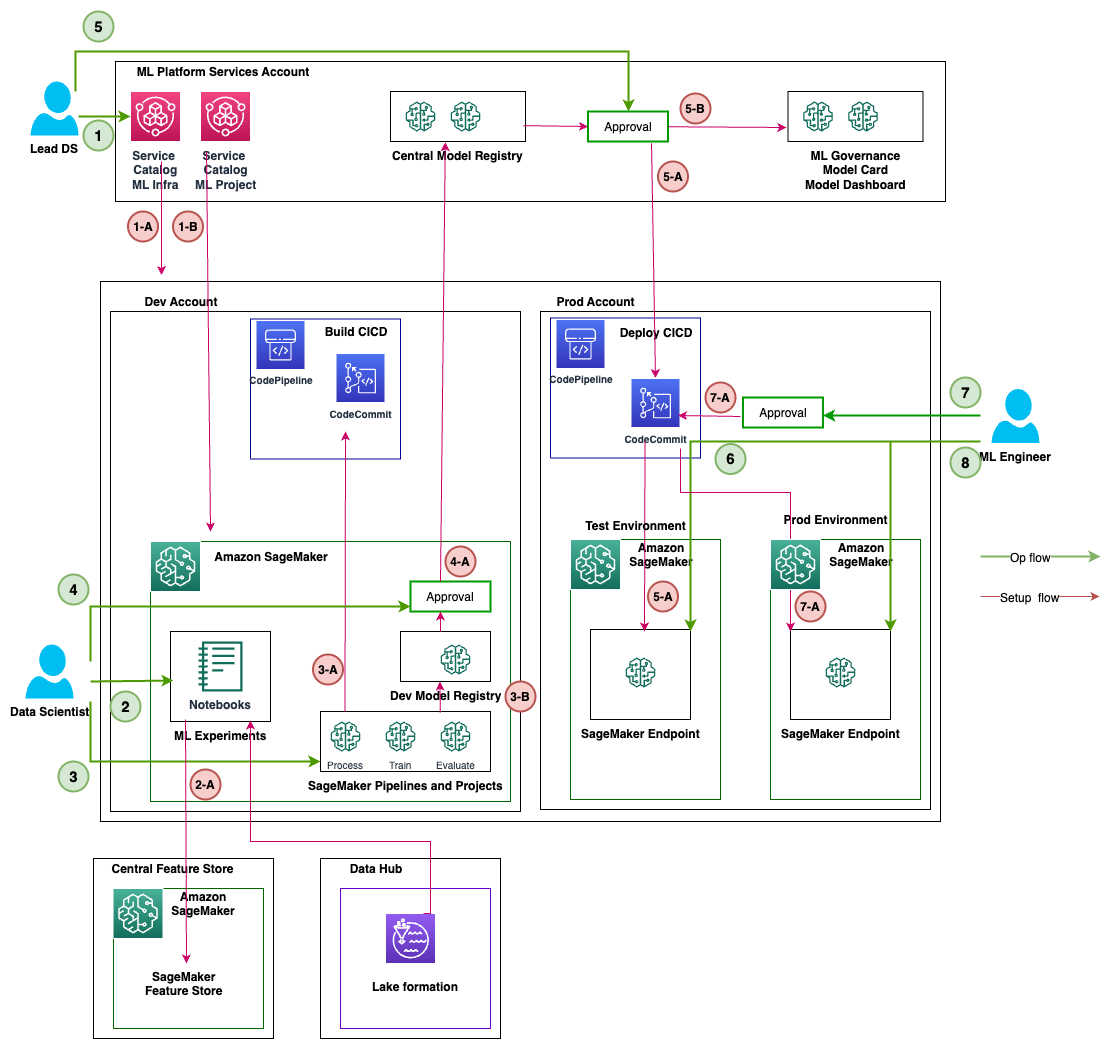

Different personas, such as the head of data science (lead data scientist), data scientist, and ML engineer, operate modules 2–6, as shown in the preceding diagram. They cover the different stages of ML platform services, ML use case development, and ML operations, along with the data lake foundations and the central feature store.

The following table summarizes the ops flow activities and setup flow steps for the different personas. When a persona initiates an ML activity as part of the ops flow, the services run as described in the setup flow steps.

| Persona | Ops flow activity number | Ops flow activity description | Setup flow step number | Setup flow step description |
|---|---|---|---|---|
| Lead Data Scientist or ML Team Lead | 1 | Uses Service Catalog in the ML platform services account to deploy ML infrastructure, SageMaker projects, and the SageMaker model registry | 1-A | Sets up the dev, test, and prod environments for LOBs; sets up SageMaker Studio in the ML platform services account |
| | | | 1-B | Sets up SageMaker Studio with the required configuration |
| Data Scientist | 2 | Conducts and tracks ML experiments in SageMaker notebooks | 2-A | Uses data from Lake Formation; saves features in the central feature store |
| | 3 | Automates successful ML experiments with SageMaker projects and pipelines | 3-A | Initiates SageMaker pipelines (preprocess, train, evaluate) in the dev account; initiates the build CI/CD process with CodePipeline in the dev account |
| | | | 3-B | After the SageMaker pipelines run, saves the model in the local (dev) model registry |
| Lead Data Scientist or ML Team Lead | 4 | Approves the model in the local (dev) model registry | 4-A | Model metadata and the model package are written from the local (dev) model registry to the central model registry |
| | 5 | Approves the model in the central model registry | 5-A | Initiates the deployment CI/CD process to create SageMaker endpoints in the test environment |
| | | | 5-B | Writes the model information and metadata from the local (dev) account to the ML governance module (model card, model dashboard) in the ML platform services account |
| ML Engineer | 6 | Tests and monitors the SageMaker endpoint in the test environment after CI/CD | | |
| | 7 | Approves deployment for SageMaker endpoints in the prod environment | 7-A | Initiates the deployment CI/CD process to create SageMaker endpoints in the prod environment |
| | 8 | Tests and monitors the SageMaker endpoint in the prod environment after CI/CD | | |

Personas and interactions with different modules of the ML platform

Each module serves particular target personas within the divisions that use the module most often, and those divisions are granted primary access. Secondary access is granted to other divisions that need the module only occasionally. Tailoring each module to the needs of particular job roles or personas optimizes its functionality.

We discuss the following teams:

Central cloud engineering – This team operates at the enterprise cloud level across all workloads, setting up common cloud infrastructure services such as enterprise-level networking, identity, permissions, and account management

Data platform engineering – This team manages enterprise data lakes, data collection, data curation, and data governance

ML platform engineering – This team operates at the ML platform level across LOBs to provide shared ML infrastructure services such as ML infrastructure provisioning, experiment tracking, model governance, deployment, and observability

The following table details which divisions have primary and secondary access to each module, according to the module's target personas.

| Module number | Module | Primary access | Secondary access | Target personas | Number of accounts |
|---|---|---|---|---|---|
| 1 | Multi-account foundations | Central cloud engineering | Individual LOBs | Cloud admin; cloud engineers | Few |
| 2 | Data lake foundations | Central cloud or data platform engineering | Individual LOBs | Data lake admin; data engineers | Multiple |
| 3 | ML platform services | Central cloud or ML platform engineering | Individual LOBs | ML platform admin; ML team lead; ML engineers; ML governance lead | One |
| 4 | ML use case development | Individual LOBs | Central cloud or ML platform engineering | Data scientists; data engineers; ML team lead; ML engineers | Multiple |
| 5 | ML operations | Central cloud or ML engineering | Individual LOBs | ML engineers; ML team leads; data scientists | Multiple |
| 6 | Centralized feature store | Central cloud or data engineering | Individual LOBs | Data engineer; data scientists | One |
| 7 | Logging and observability | Central cloud engineering | Individual LOBs | | One |
| 8 | Cost and reporting | Individual LOBs | Central platform engineering | LOB executives; ML managers | One |

Conclusion

In this post, we introduced a framework for governing the ML lifecycle at scale that helps you implement well-architected ML workloads with embedded security and governance controls. We discussed how this framework takes a holistic approach to building an ML platform, covering data governance, model governance, and enterprise-level controls. We encourage you to experiment with the framework and concepts introduced in this post and share your feedback.

About the authors

Ram Vittal is a Principal ML Solutions Architect at AWS. He has over 3 decades of experience architecting and building distributed, hybrid, and cloud applications. He is passionate about building secure, scalable, reliable AI/ML and big data solutions to help enterprise customers with their cloud adoption and optimization journey to improve their business outcomes. In his spare time, he rides his bike and walks with his three-year-old sheep-a-doodle!

Sovik Kumar Nath is an AI/ML solutions architect with AWS. He has extensive experience designing end-to-end machine learning and business analytics solutions in finance, operations, marketing, healthcare, supply chain management, and IoT. Sovik has published articles and holds a patent in ML model monitoring. He has double master's degrees from the University of South Florida and the University of Fribourg, Switzerland, and a bachelor's degree from the Indian Institute of Technology, Kharagpur. Outside of work, Sovik enjoys traveling, taking ferry rides, and watching movies.

Maira Ladeira Tanke is a Senior Data Specialist at AWS. As a technical lead, she helps customers accelerate their achievement of business value through emerging technology and innovative solutions. Maira has been with AWS since January 2020. Prior to that, she worked as a data scientist in multiple industries, focusing on achieving business value from data. In her free time, Maira enjoys traveling and spending time with her family someplace warm.

Ryan Lempka is a Senior Solutions Architect at Amazon Web Services, where he helps his customers work backwards from business objectives to develop solutions on AWS. He has deep experience in business strategy, IT systems management, and data science. Ryan is dedicated to being a lifelong learner and enjoys challenging himself every day to learn something new.

Sriharsh Adari is a Senior Solutions Architect at Amazon Web Services (AWS), where he helps customers work backwards from business outcomes to develop innovative solutions on AWS. Over the years, he has helped multiple customers with data platform transformations across industry verticals. His core areas of expertise include Technology Strategy, Data Analytics, and Data Science. In his spare time, he enjoys playing sports, binge-watching TV shows, and playing the tabla.
