Modern robots know how to sense their environment and respond to language, but what they don’t know is often more important than what they do know. Teaching robots to ask for help is key to making them safer and more efficient.

Engineers at Princeton University and Google have come up with a new way to teach robots to know when they don’t know. The technique involves quantifying the fuzziness of human language and using that measurement to tell robots when to ask for further directions. Telling a robot to pick up a bowl from a table with only one bowl is fairly clear. But telling a robot to pick up a bowl when there are five bowls on the table generates a much higher degree of uncertainty, and triggers the robot to ask for clarification.

The paper, “Robots That Ask for Help: Uncertainty Alignment for Large Language Model Planners,” was presented Nov. 8 at the Conference on Robot Learning.

Because tasks are typically more complex than a simple “pick up a bowl” command, the engineers use large language models (LLMs), the technology behind tools such as ChatGPT, to gauge uncertainty in complex environments. LLMs are bringing robots powerful capabilities to follow human language, but LLM outputs are still frequently unreliable, said Anirudha Majumdar, an assistant professor of mechanical and aerospace engineering at Princeton and the senior author of a study outlining the new method.

“Blindly following plans generated by an LLM could cause robots to act in an unsafe or untrustworthy manner, and so we need our LLM-based robots to know when they don’t know,” said Majumdar.

The system also allows a robot’s user to set a target degree of success, which is tied to a particular uncertainty threshold that will lead a robot to ask for help. For example, a user would set a surgical robot to have a much lower error tolerance than a robot that’s cleaning up a living room.
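The article does not spell out how a target success level becomes a threshold. As a rough sketch, assuming a standard split conformal calibration step (conformal prediction is the statistical approach the researchers name), the conversion might look like the following. The function name `calibrate_threshold`, the calibration numbers and the scoring rule (one minus the probability given to the correct option) are illustrative assumptions, not the authors’ code.

```python
import math


def calibrate_threshold(true_option_probs, target_success):
    """Turn a target success level into a probability threshold.

    true_option_probs: for each calibration scenario, the probability the
        planner assigned to the option that was actually correct
        (hypothetical data for this sketch).
    target_success: desired success level, e.g. 0.8 for 80 percent.
    """
    n = len(true_option_probs)
    # Nonconformity score: low confidence in the right answer gives a high score.
    scores = sorted(1.0 - p for p in true_option_probs)
    # Finite-sample corrected rank used in split conformal prediction.
    rank = math.ceil((n + 1) * target_success)
    if rank > n:
        # Not enough calibration data for this target: keep every option.
        return 0.0
    q_hat = scores[rank - 1]
    # Options with probability at or above this value stay in the running.
    return 1.0 - q_hat


# Hypothetical calibration data: confidence in the correct option, 9 scenarios.
calibration = [0.9, 0.8, 0.7, 0.6, 0.5, 0.95, 0.85, 0.75, 0.65]
cleaning_threshold = calibrate_threshold(calibration, 0.5)
stricter_threshold = calibrate_threshold(calibration, 0.8)
```

In this sketch a stricter target yields a lower threshold, so more candidate actions survive and the robot asks for help more often, which matches the surgical robot versus cleaning robot contrast above.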

“We want the robot to ask for enough help such that we reach the level of success that the user wants. But meanwhile, we want to minimize the overall amount of help that the robot needs,” said Allen Ren, a graduate student in mechanical and aerospace engineering at Princeton and the study’s lead author. Ren received a best student paper award for his Nov. 8 presentation at the Conference on Robot Learning in Atlanta. The new method produces high accuracy while reducing the amount of help required by a robot compared to other methods of tackling this issue.

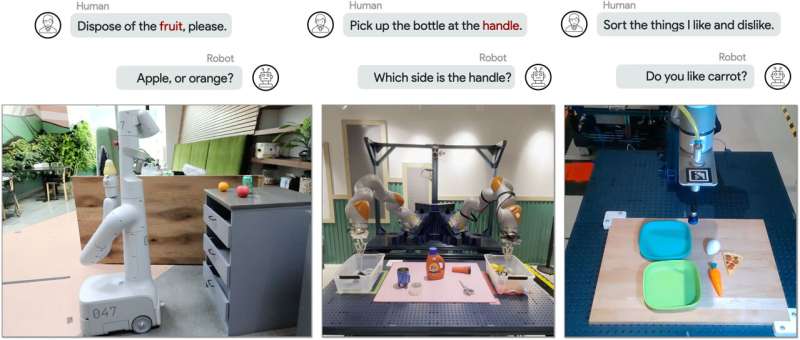

The researchers tested their method on a simulated robotic arm and on two types of robots at Google facilities in New York City and Mountain View, California, where Ren was working as a student research intern. One set of hardware experiments used a tabletop robotic arm tasked with sorting a set of toy food items into two different categories; a setup with a left and right arm added an additional layer of ambiguity.

The most complex experiments involved a robotic arm mounted on a wheeled platform and placed in an office kitchen with a microwave and a set of recycling, compost and trash bins. In one example, a human asks the robot to “place the bowl in the microwave,” but there are two bowls on the counter: a metal one and a plastic one.

The robot’s LLM-based planner generates four possible actions to carry out based on this instruction, like multiple-choice answers, and each option is assigned a probability. Using a statistical approach called conformal prediction and a user-specified guaranteed success rate, the researchers designed their algorithm to trigger a request for human help when more than one option meets a certain probability threshold. In this case, the top two options (place the plastic bowl in the microwave or place the metal bowl in the microwave) meet this threshold, and the robot asks the human which bowl to place in the microwave.
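The decision rule described above can be sketched in a few lines. This is a minimal illustration, not the researchers’ implementation: the function name `plan_or_ask`, the two non-microwave options, all probabilities and the 0.3 threshold are invented for the example, and the threshold is assumed to have been calibrated beforehand.

```python
def plan_or_ask(option_probs, threshold):
    """Decide whether to act or to ask the human for help.

    option_probs: dict mapping each candidate action to the probability
        the planner assigned to it.
    threshold: probability cutoff, assumed to come from a prior
        conformal calibration step.
    Returns ("act", [action]) when exactly one option clears the cutoff,
    otherwise ("ask", candidates) so a human can resolve the ambiguity.
    """
    kept = [action for action, p in option_probs.items() if p >= threshold]
    if len(kept) == 1:
        return ("act", kept)
    return ("ask", kept)


# Hypothetical numbers for the two-bowl kitchen scenario.
bowl_options = {
    "place the plastic bowl in the microwave": 0.45,
    "place the metal bowl in the microwave": 0.40,
    "place the plastic bowl in the trash": 0.10,
    "do nothing": 0.05,
}

# Hypothetical numbers for a request where one option clearly dominates.
apple_options = {
    "put the apple in the compost": 0.85,
    "put the sponge in the compost": 0.08,
    "put the apple in the recycling": 0.05,
    "do nothing": 0.02,
}

bowl_decision = plan_or_ask(bowl_options, threshold=0.3)
apple_decision = plan_or_ask(apple_options, threshold=0.3)
```

With these made-up numbers, both microwave options clear the cutoff and the sketch returns a request for help, while a single dominant option leads straight to action.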

In another example, a person tells the robot, “There is an apple and a dirty sponge … It is rotten. Can you dispose of it?” This does not trigger a question from the robot, since the action “put the apple in the compost” has a sufficiently higher probability of being correct than any other option.

“Using the technique of conformal prediction, which quantifies the language model’s uncertainty in a more rigorous way than prior methods, allows us to get to a higher level of success” while minimizing the frequency of triggering help, said Majumdar.

Robots’ physical limitations often give designers insights not readily available from abstract systems. Large language models “might talk their way out of a conversation, but they can’t skip gravity,” said co-author Andy Zeng, a research scientist at Google DeepMind. “I’m always keen on seeing what we can do on robots first, because it often sheds light on the core challenges behind building generally intelligent machines.”

Ren and Majumdar began collaborating with Zeng after he gave a talk as part of the Princeton Robotics Seminar series, said Majumdar. Zeng, who earned a computer science Ph.D. from Princeton in 2019, outlined Google’s efforts in using LLMs for robotics and brought up some open challenges. Ren’s enthusiasm for the problem of calibrating the level of help a robot should ask for led to his internship and the creation of the new method.

“We enjoyed being able to leverage the scale that Google has” in terms of access to large language models and different hardware platforms, said Majumdar.

Ren is now extending this work to problems of active perception for robots: For instance, a robot may need to use predictions to determine the location of a television, table or chair within a house, when the robot itself is in a different part of the house. This requires a planner based on a model that combines vision and language information, bringing up a new set of challenges in estimating uncertainty and determining when to trigger help, said Ren.

More information:

Allen Z. Ren et al, Robots That Ask For Help: Uncertainty Alignment for Large Language Model Planners (2023) openreview.net/forum?id=4ZK8ODNyFXx

Princeton University

Citation: How do you make a robot smarter? Program it to know what it doesn’t know (2023, November 29) retrieved 29 November 2023 from https://techxplore.com/news/2023-11-robot-smarter-doesnt.html
