Deep learning models are typically highly complex. While many traditional machine learning models make do with just a couple of hundred parameters, deep learning models have millions or billions of parameters. The large language model GPT-4 that OpenAI released in the spring of 2023 is rumored to have nearly 2 trillion parameters. It goes without saying that the interplay between all these parameters is way too complicated for humans to understand.

This is where visualizations in ML come in. Graphical representations of structures and data flow within a deep learning model make its complexity easier to comprehend and enable insight into its decision-making process. With the right visualization method and a systematic approach, many seemingly mysterious training issues and cases of underperformance can be traced back to root causes.

In this article, we’ll explore a wide range of deep learning visualizations and discuss their applicability. Along the way, I’ll share many practical examples and point to libraries and in-depth tutorials for individual methods.

Why would we want to visualize deep learning models?

Visualizing deep learning models can help us with several different objectives:

Interpretability and explainability: The performance of deep learning models is, at times, staggering, even for seasoned data scientists and ML engineers. Visualizations provide ways to dive into a model’s structure and uncover why it succeeds in learning the relationships encoded in the training data.

Debugging model training: It’s fair to assume that everyone training deep learning models has encountered a situation where a model doesn’t learn or struggles with a particular set of samples. The reasons for this range from wrongly connected model components to misconfigured optimizers. Visualizations are great for monitoring training runs and diagnosing issues.

Model optimization: Models with fewer parameters are generally faster to compute and more resource-efficient, and they are often more robust and generalize better to unseen samples. Visualizations can uncover which parts of a model are essential and which layers might be omitted without compromising the model’s performance.

Understanding and teaching concepts: Deep learning is mostly based on fairly simple activation functions and mathematical operations like matrix multiplication. Many high school students will know all the maths required to understand a deep learning model’s internal calculations step by step. But it’s far from obvious how this gives rise to models that can seemingly “understand” images or translate fluently between multiple languages. It’s no secret among educators that good visualizations are key for students to master complex and abstract concepts such as deep learning. Interactive visualizations, in particular, have proven helpful for those new to the field.

How is deep learning visualization different from traditional ML visualization?

At this point, you might wonder how visualizing deep learning models differs from visualizing traditional machine learning models. After all, aren’t deep learning models closely related to their predecessors?

Deep learning models are characterized by a large number of parameters and a layered structure. Many identical neurons are organized into layers stacked on top of each other. Each neuron is described by a small number of weights and an activation function. While the activation function is typically chosen by the model’s creator (and is thus a so-called hyperparameter), the weights are learned during training.

This fairly simple structure gives rise to unprecedented performance on virtually every machine learning task known today. From our human perspective, the price we pay is that deep learning models are much larger than traditional ML models.

It’s also much more difficult to see how the intricate network of neurons processes the input data than to comprehend, say, a decision tree. Thus, the main focus of deep learning visualizations is to uncover the data flow within a model and to provide insights into what the structurally identical layers learn to focus on during training.

That said, many of the machine learning visualization techniques I covered in my last blog post apply to deep learning models as well. For example, confusion matrices and ROC curves are helpful when working with deep learning classifiers, just as they are for more traditional classification models.

Who should use deep learning visualization?

The short answer to that question is: Everyone who works with deep learning models!

In particular, the following groups come to mind:

Deep learning researchers: Many visualization techniques are first developed by academic researchers looking to improve existing deep learning algorithms or to understand why a particular model exhibits a certain characteristic.

Data scientists and ML engineers: Creating and training deep learning models is no easy feat. Whether a model underperforms, struggles to learn, or generates suspiciously good results, visualizations help us to identify the root cause. Thus, mastering different visualization approaches is a valuable addition to any deep learning practitioner’s toolbox.

Downstream consumers of deep learning models: Visualizations prove valuable to individuals with technical backgrounds who consume deep learning models via APIs or integrate deep learning-based components into software applications. For instance, Facebook’s ActiVis is a visual analytics system tailored to in-house engineers, facilitating the exploration of deployed neural networks.

Educators and students: Those encountering deep neural networks for the first time, and the people teaching them, often struggle to understand how the model code they write translates into a computational graph that can process complex input data like images or speech. Visualizations make it easier to understand how everything comes together and what a model learned during training.

Types of deep learning visualization

There are many different approaches to deep learning model visualization. Which one is right for you depends on your goal. For instance, deep learning researchers often delve into intricate architectural blueprints to uncover the contributions of different model parts to its performance. ML engineers are often more interested in plots of evaluation metrics during training, as their goal is to ship the best-performing model as quickly as possible.

In this article, we’ll discuss the following approaches:

Deep learning model architecture visualization: Graph-like representation of a neural network with nodes representing layers and edges representing the connections between them.

Activation heatmap: Layer-wise visualization of activations in a deep neural network that provides insights into what input elements a model is sensitive to.

Feature visualization: Heatmaps that visualize what features or patterns a deep learning model can detect in its input.

Deep feature factorization: Advanced method to uncover high-level concepts a deep learning model learned during training.

Training dynamics plots: Visualization of model performance metrics across training epochs.

Gradient plots: Representation of the loss function gradients at different layers within a deep learning model. Data scientists often use these plots to detect exploding or vanishing gradients during model training.

Loss landscape: Three-dimensional representation of the loss function’s value across a deep learning model’s parameter space.

Visualizing attention: Heatmap and graph-like visual representations of a transformer model’s attention that can be used, e.g., to verify if a model focuses on the correct parts of the input data.

Visualizing embeddings: Graphical representation of embeddings, an essential building block for many NLP and computer vision applications, in a low-dimensional space to unveil their relationships and semantic similarity.

Deep learning model architecture visualization

Visualizing the architecture of a deep learning model (its neurons, layers, and the connections between them) can serve many purposes:

It exposes the flow of data from the input to the output, including the shapes it takes when it’s passed between layers.

It gives a clear idea of the number of parameters in the model.

You can see which components repeat throughout the model and how they’re linked.
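Even before drawing any diagram, the first two points can be checked in a few lines. The following is a minimal sketch, assuming PyTorch and a made-up three-layer toy model (not a model from this article): it records each layer's output shape with forward hooks and counts the parameters per layer.

```python
import torch
from torch import nn

# Hypothetical toy model, used only for illustration.
model = nn.Sequential(
    nn.Linear(8, 16),
    nn.ReLU(),
    nn.Linear(16, 4),
)

output_shapes = {}

def make_hook(name):
    # A forward hook is called with (module, inputs, output) after each forward pass.
    def hook(module, inputs, output):
        output_shapes[name] = tuple(output.shape)
    return hook

handles = [
    layer.register_forward_hook(make_hook(name))
    for name, layer in model.named_children()
]

with torch.no_grad():
    model(torch.randn(1, 8))  # one sample with 8 input features

for handle in handles:
    handle.remove()  # clean up so the hooks don't fire on later calls

param_counts = {
    name: sum(p.numel() for p in layer.parameters())
    for name, layer in model.named_children()
}
total_params = sum(param_counts.values())

for name, shape in output_shapes.items():
    print(f"layer {name}: output shape {shape}, parameters {param_counts[name]}")
print("total parameters:", total_params)
```

The same information is what architecture diagrams typically annotate on their nodes and edges.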

There are different ways to visualize a deep learning model’s architecture:

Model diagrams expose the model’s building blocks and their interconnection.

Flowcharts aim to provide insights into data flows and model dynamics.

Layer-wise representations of deep learning models tend to be significantly more complex and expose activations and intra-layer structures.

All of these visualizations do more than satisfy curiosity. They empower deep learning practitioners to fine-tune models, diagnose issues, and build upon this knowledge to create even more powerful algorithms.

You’ll be able to find model architecture visualization utilities for all of the big deep learning frameworks. Sometimes, they are provided as part of the main package, while in other cases, separate libraries are provided by the framework’s maintainers or community members.

How do you visualize a PyTorch model’s architecture?

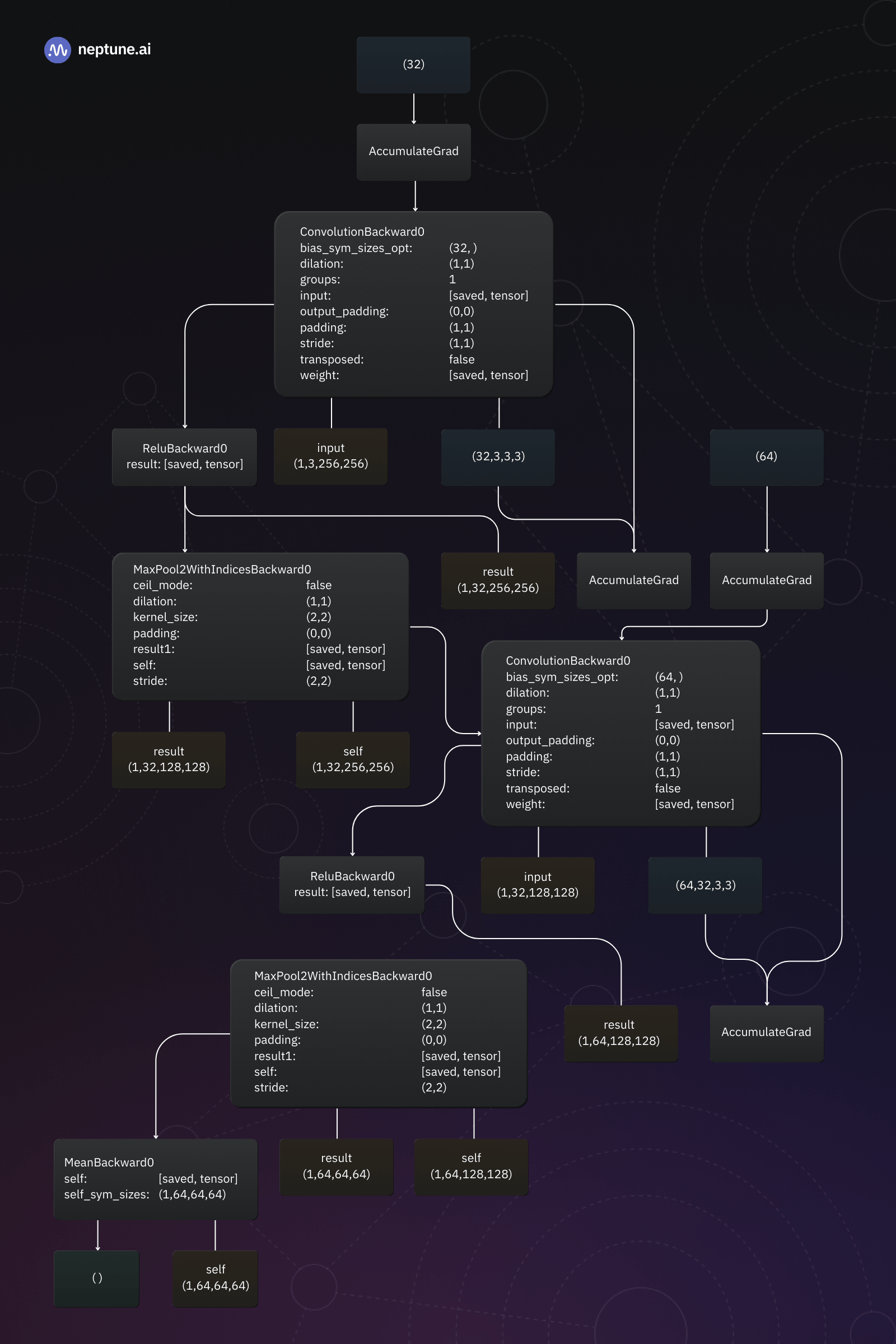

If you are using PyTorch, you can use PyTorchViz to create model architecture visualizations. This library visualizes a model’s individual components and highlights the data flow between them.

The basic code, along with a complete PyTorch model architecture visualization example, is in the Colab notebook accompanying this article.

PyTorchViz uses four colors in the model architecture graph:

Blue nodes represent tensors or variables in the computation graph. These are the data elements that flow through the operations.

Gray nodes represent PyTorch functions or operations performed on tensors.

Green nodes represent gradients or derivatives of tensors. They showcase the backpropagation flow of gradients through the computation graph.

Orange nodes represent the final loss or objective function optimized during training.

How do you visualize a Keras model’s architecture?

To visualize the architecture of a Keras deep learning model, you can use the plot_model utility function that is provided as part of the library.

I’ve prepared a complete example for Keras architecture visualization in the Colab notebook for this article.

The output generated by the plot_model function is quite simple to understand: Each box represents a model layer and shows its name, type, and input and output shapes. The arrows indicate the flow of data between layers.

By the way, Keras also provides a model_to_dot function to create graphs similar to the one produced by PyTorchViz above.

Activation heatmaps

Activation heatmaps are visual representations of the inner workings of deep neural networks. They show which neurons are activated layer by layer, allowing us to see how the activations flow through the model.

An activation heatmap can be generated for just a single input sample or a whole collection. In the latter case, we’ll typically choose to depict the average, median, minimum, or maximum activation. This allows us, for example, to identify regions of the network that rarely contribute to the model’s output and might be pruned without affecting its performance.

Let’s take a computer vision model as an example. To generate an activation heatmap, we’ll feed a sample image into the model and record the output value of each activation function in the deep neural network. Then, we can create a heatmap visualization for a layer in the model by coloring its neurons according to the activation function’s output. Alternatively, we can color the input sample’s pixels based on the activation they cause in the inner layer. This tells us which parts of the input reach the particular layer.
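The recording step described above can be sketched with PyTorch forward hooks. This is a minimal illustration under my own assumptions: the two-layer CNN and the random "image" are stand-ins, and averaging over channels is just one simple way to reduce a layer's activations to a single heatmap.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical toy CNN, used only for illustration.
model = nn.Sequential(
    nn.Conv2d(1, 4, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.Conv2d(4, 8, kernel_size=3, padding=1),
    nn.ReLU(),
)

activations = {}

def record(name):
    # Store the output of the activation function each time the layer runs.
    def hook(module, inputs, output):
        activations[name] = output.detach()
    return hook

handles = [
    module.register_forward_hook(record(name))
    for name, module in model.named_modules()
    if isinstance(module, nn.ReLU)
]

image = torch.rand(1, 1, 8, 8)  # random stand-in for a grayscale image
with torch.no_grad():
    model(image)

for handle in handles:
    handle.remove()

# Average over the channel dimension to get one 2D heatmap per activation layer.
heatmaps = {name: act[0].mean(dim=0) for name, act in activations.items()}

for name, heatmap in heatmaps.items():
    print(f"layer {name}: heatmap shape {tuple(heatmap.shape)}")
# Each heatmap can be shown with, e.g., matplotlib's plt.imshow(heatmap).
```

To aggregate over a whole collection of samples, the same hooks can be reused while accumulating the mean or maximum activation across inputs.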

For typical deep learning models with many layers and millions of neurons, this simple approach will produce very complicated and noisy visualizations. Hence, deep learning researchers and data scientists have come up with plenty of different methods to simplify activation heatmaps.

But the goal remains the same: We want to uncover which parts of our model contribute to the output and in what way.

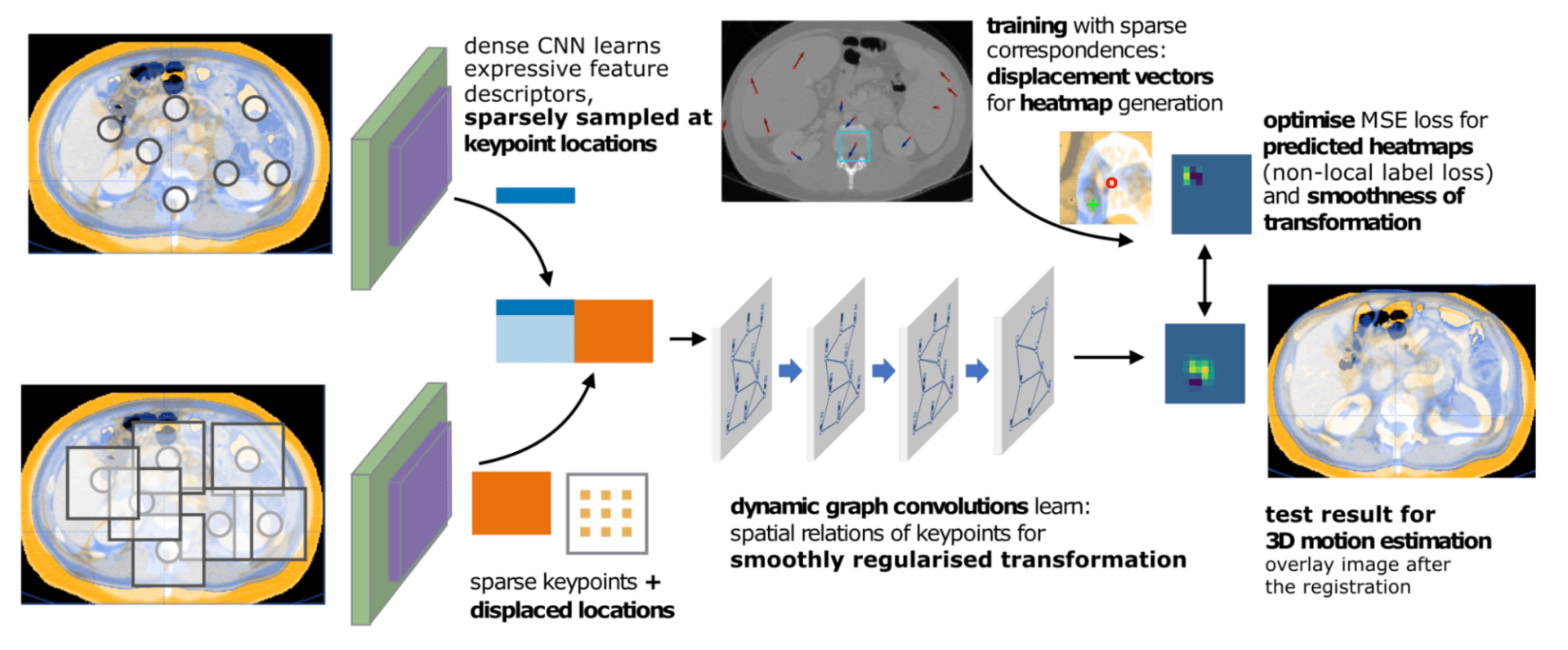

For instance, in the example above, activation heatmaps highlight the regions of an MRI scan that contributed most to the CNN’s output.

Providing such visualizations along with the model output aids healthcare professionals in making informed decisions. Here’s how:

Lesion detection and abnormality identification: The heatmaps highlight the crucial regions in the image, aiding in the identification of lesions and abnormalities.

Severity assessment of abnormalities: The intensity of the heatmap tends to correlate with the severity of lesions or abnormalities. A larger and brighter area on the heatmap typically indicates a more severe condition, enabling a quick assessment of the issue.

Identifying model errors: If the model’s activation is high for areas of the MRI scan that are not medically significant (e.g., the skull cap or even parts outside of the brain), this is a telltale sign of a mistake. Even without deep learning expertise, medical professionals will immediately see that this particular model output cannot be trusted.

How do you create a visualization heatmap for a PyTorch model?

The TorchCam library provides several methods to generate activation heatmaps for PyTorch models.

To generate an activation heatmap for a PyTorch model, we need to take the following steps:

Initialize one of the methods provided by TorchCam with our model.

Pass a sample input into the model and record the output.

Apply the initialized TorchCam method.

The accompanying Colab notebook contains a full TorchCam activation heatmap example using a ResNet image classification model.

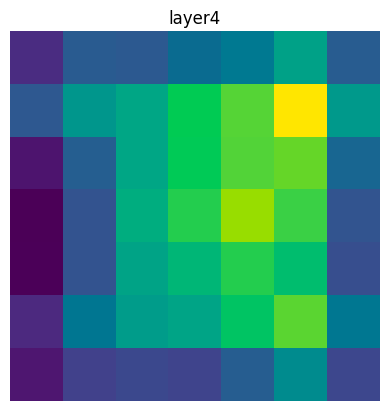

Once we have computed them, we can plot the activation heatmaps for each layer in the model.

In my example model’s case, the output isn’t overly helpful.

We can greatly enhance the plot’s value by overlaying the original input image. Luckily for us, TorchCam provides the overlay_mask utility function for this purpose.
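To illustrate the idea behind such an overlay, here is a small NumPy sketch. It is not TorchCam's implementation: the `overlay_heatmap` helper is hypothetical, it assumes a float RGB image with values in [0, 1], and it simply paints the normalized heatmap into the red channel before alpha-blending.

```python
import numpy as np

def overlay_heatmap(image, heatmap, alpha=0.5):
    """Blend a low-resolution heatmap over a float RGB image with values in [0, 1]."""
    height, width, _ = image.shape

    # Nearest-neighbor upsampling of the heatmap to the image resolution.
    rows = np.arange(height) * heatmap.shape[0] // height
    cols = np.arange(width) * heatmap.shape[1] // width
    upsampled = heatmap[np.ix_(rows, cols)]

    # Min-max normalize to [0, 1] so heatmaps from different layers are comparable.
    span = upsampled.max() - upsampled.min()
    if span > 0:
        normalized = (upsampled - upsampled.min()) / span
    else:
        normalized = np.zeros_like(upsampled)

    # Paint the heatmap into the red channel and alpha-blend it with the image.
    colored = np.zeros_like(image)
    colored[..., 0] = normalized
    return (1 - alpha) * image + alpha * colored

rng = np.random.default_rng(0)
image = rng.random((8, 8, 3))                 # random stand-in for an input image
heatmap = np.array([[0.0, 1.0], [2.0, 3.0]])  # stand-in for a 2x2 activation map
blended = overlay_heatmap(image, heatmap)
print(blended.shape)
```

Real implementations usually apply a proper colormap and smoother interpolation, but the blending step works the same way.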

As you can see in the example plot above, the activation heatmap exposes the areas of the input image that resulted in the greatest activation of neurons in the inner layer of the deep learning model. This helps engineers and a general audience to understand what’s happening inside the model.

Feature visualization

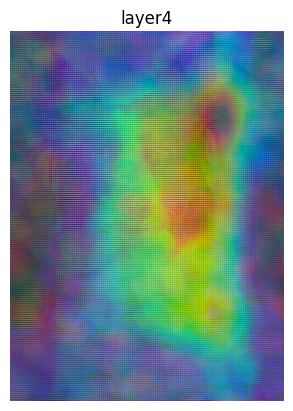

Feature visualization reveals the features learned by a deep neural network. It is particularly helpful in computer vision, where it reveals which abstract features in an input image a neural network responds to. For example, it can show that a neuron in a CNN architecture is highly responsive to diagonal edges or textures like fur.

This helps us understand what the model is looking for in images. The main difference to the activation heatmaps discussed in the previous section is that those show the general response to regions of an input image, whereas feature visualization goes a level deeper and attempts to uncover a model’s response to abstract concepts.

Through feature visualization, we can gain valuable insights into the specific features that deep neural networks are processing at different layers. Generally, layers close to the model’s input will respond to simpler features like edges, while layers closer to the model’s output will detect more abstract concepts.

Such insights not only aid in understanding the inner workings but also serve as a toolkit for fine-tuning and improving the model’s performance. By inspecting the features that are activated incorrectly or inconsistently, we can refine the training process or identify data quality issues.

In my Colab notebook for this article, you can find the full example code for generating feature visualizations for a PyTorch CNN. Here, we’ll focus on discussing the result and what we can learn from it.

As you can see from the plots above, the CNN detects different patterns or features in every layer. If you look closely at the upper row, which corresponds to the first four layers of the model, you can see that these layers detect the edges in the image. For instance, in the second and fourth panels of the first row, you can see that the model identifies the nose and the ears of the dog.

As the activations flow through the model, it becomes ever more challenging to make out what the model is detecting. But if we analyzed more closely, we would likely find that individual neurons are activated by, e.g., the dog’s ears or eyes.

Deep feature factorization

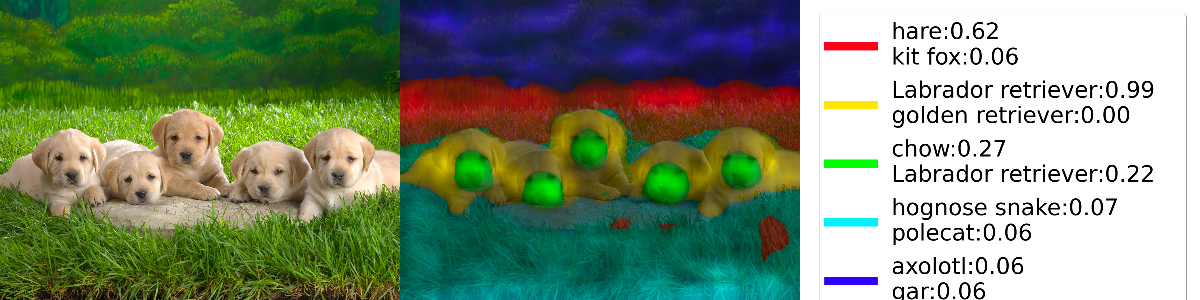

Deep Feature Factorization (DFF) is a method to analyze the features a convolutional neural network has learned. DFF identifies regions in the network’s feature space that belong to the same semantic concept. By assigning different colors to these regions, we can create a visualization that allows us to see whether the features identified by the model are meaningful.

For instance, in the example above, we find that the model bases its decision (that the image shows labrador retrievers) on the puppies, not the surrounding grass. The nose region might point to a chow, but the shape of the head and ears pushes the model toward “labrador retriever.” This decision logic mimics the way a human would approach the task.

DFF is available in PyTorch-gradcam, which comes with an extensive DFF tutorial that also discusses how to interpret the results. The image above is based on this tutorial. I’ve simplified the code and added some additional comments. You’ll find my recommended approach to Deep Feature Factorization with PyTorch-gradcam in the Colab notebook.

Training dynamics plots

Training dynamics plots show how a model learns. Training progress is typically gauged through performance metrics such as loss and accuracy. By visualizing these metrics, data scientists and deep learning practitioners can obtain crucial insights:

Learning progression: Training dynamics plots reveal how quickly or slowly a model converges. Rapid convergence can point to overfitting, while erratic fluctuations may indicate issues like poor initialization or improper learning rate tuning.

Early stopping: Plotting losses helps to identify the point at which a model starts overfitting the training data. A decreasing training loss while the validation loss rises is a clear sign of overfitting. The point where overfitting sets in is the optimal time to halt training.
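The early-stopping rule can also be expressed in code instead of being read off a plot. Below is a minimal sketch: the `find_early_stopping_epoch` helper, the `patience` parameter, and the loss values are all made up for illustration.

```python
def find_early_stopping_epoch(val_losses, patience=2):
    """Return the epoch with the lowest validation loss seen before
    `patience` consecutive epochs pass without improvement."""
    best_epoch = 0
    best_loss = float("inf")
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_epoch, best_loss = epoch, loss
        elif epoch - best_epoch >= patience:
            break  # validation loss has not improved for `patience` epochs
    return best_epoch

# Illustrative numbers only: training loss keeps falling,
# while validation loss starts rising after epoch 3.
train_losses = [1.00, 0.70, 0.50, 0.40, 0.30, 0.25, 0.20]
val_losses = [1.10, 0.80, 0.65, 0.60, 0.62, 0.66, 0.71]

stop_epoch = find_early_stopping_epoch(val_losses)
print("best epoch:", stop_epoch)
```

Plotting `train_losses` and `val_losses` against the epoch index gives the typical picture: the two curves diverge right after the reported epoch.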

Gradient plots

If plots of performance metrics are insufficient to understand a model’s training progress (or lack thereof), plotting the loss function’s gradients can be helpful.

To adjust the weights of a neural network during training, we use a technique called backpropagation to compute the gradient of the loss function with respect to the weights and biases of our network. The gradient is a high-dimensional vector that points in the direction of the steepest increase of the loss function. Thus, we can use that information to shift our weights and biases in the opposite direction. The learning rate controls the amount by which we change the weights and biases.

Vanishing or exploding gradients can prevent deep neural networks from learning. Plotting the mean magnitude of gradients for different layers can reveal whether gradients are vanishing (approaching zero) or exploding (becoming extremely large). If the gradient vanishes, we don’t know in which direction to shift our weights and biases, so training is stuck. An exploding gradient leads to large changes in the weights and biases, often overshooting the target and causing rapid fluctuations in the loss.
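Here is a minimal sketch of how the per-layer mean gradient magnitude could be collected in PyTorch. The deep sigmoid network and the random data are assumptions chosen on purpose, because this kind of setup is known to be prone to vanishing gradients.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical deep sigmoid network, used only to provoke vanishing gradients.
layers = []
for _ in range(10):
    layers += [nn.Linear(32, 32), nn.Sigmoid()]
layers.append(nn.Linear(32, 1))
model = nn.Sequential(*layers)

inputs = torch.randn(64, 32)   # random stand-in data
targets = torch.randn(64, 1)

loss = nn.functional.mse_loss(model(inputs), targets)
loss.backward()  # backpropagation fills the .grad attribute of every parameter

# Mean absolute gradient of each layer's weight matrix, ordered from input to output.
grad_magnitudes = {
    name: param.grad.abs().mean().item()
    for name, param in model.named_parameters()
    if name.endswith("weight")
}

for name, magnitude in grad_magnitudes.items():
    print(f"{name}: {magnitude:.2e}")
```

In this setup, the magnitudes shrink by orders of magnitude toward the input layers. Logging these values at every training step and plotting them per layer yields a gradient plot.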

Machine learning experiment trackers like neptune.ai enable data scientists and ML engineers to track and plot gradients during training.

To learn more about vanishing and exploding gradients and how to use gradient plots to detect them, I recommend Katherine Li’s in-depth blog post on debugging, monitoring, and fixing gradient-related problems.

Loss landscapes

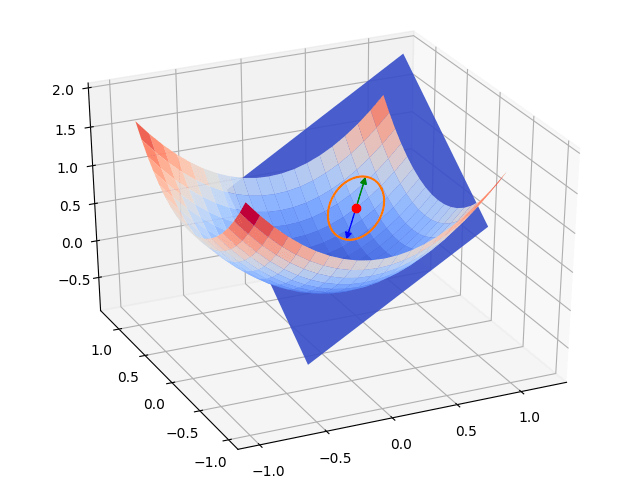

We can not only plot gradient magnitudes but also directly visualize the loss function and its gradients. These visualizations are commonly called “loss landscapes.”

Inspecting a loss landscape helps data scientists and machine learning practitioners understand how an optimization algorithm moves the weights and biases in a model toward a loss function’s minimum.

In an idealized case like the one shown in the figure above, the loss landscape is very smooth. The gradient only changes slightly across the surface. Deep neural networks often exhibit a much more complex loss landscape with spikes and trenches. Reliably converging towards a minimum of the loss function in these cases requires robust optimizers such as Adam.

To plot a loss landscape for a PyTorch model, you can use the code provided by the authors of a seminal paper on the topic. To get a first impression, check out the interactive Loss Landscape Visualizer, which uses this library behind the scenes. There is also a TensorFlow port of the same code.
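Independently of that code, the basic recipe can be sketched in a few lines: pick two random directions in parameter space and evaluate the loss on a grid around the trained parameters. The example below is a simplified illustration under my own assumptions. It uses a linear model on synthetic data so it stays short, and it normalizes the directions to unit length rather than applying the more careful normalization the paper's authors use for deep networks.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic regression data and a linear model, used only for illustration.
X = rng.normal(size=(100, 5))
y = X @ rng.normal(size=5) + 0.1 * rng.normal(size=100)

def loss(theta):
    """Mean squared error of the linear model with parameters theta."""
    return np.mean((X @ theta - y) ** 2)

# "Trained" parameters: the least-squares solution.
theta_star, *_ = np.linalg.lstsq(X, y, rcond=None)

# Two random directions in parameter space, scaled to unit length.
d1 = rng.normal(size=5)
d1 /= np.linalg.norm(d1)
d2 = rng.normal(size=5)
d2 /= np.linalg.norm(d2)

# Evaluate the loss on a 2D grid spanned by the two directions.
alphas = np.linspace(-1.0, 1.0, 21)
betas = np.linspace(-1.0, 1.0, 21)
surface = np.array(
    [[loss(theta_star + a * d1 + b * d2) for b in betas] for a in alphas]
)

print(surface.shape)
# The grid can be drawn as a 3D surface, e.g. with matplotlib's plot_surface.
```

For this convex toy problem, the surface is a smooth bowl with its minimum at the center of the grid. For deep networks, the same procedure reveals the spikes and trenches mentioned above.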

Loss landscapes not only provide insight into how deep learning models learn, but they can also be beautiful to look at. Javier Ideami has created the Loss Landscape project with many artistic videos and interactive animations of various loss landscapes.

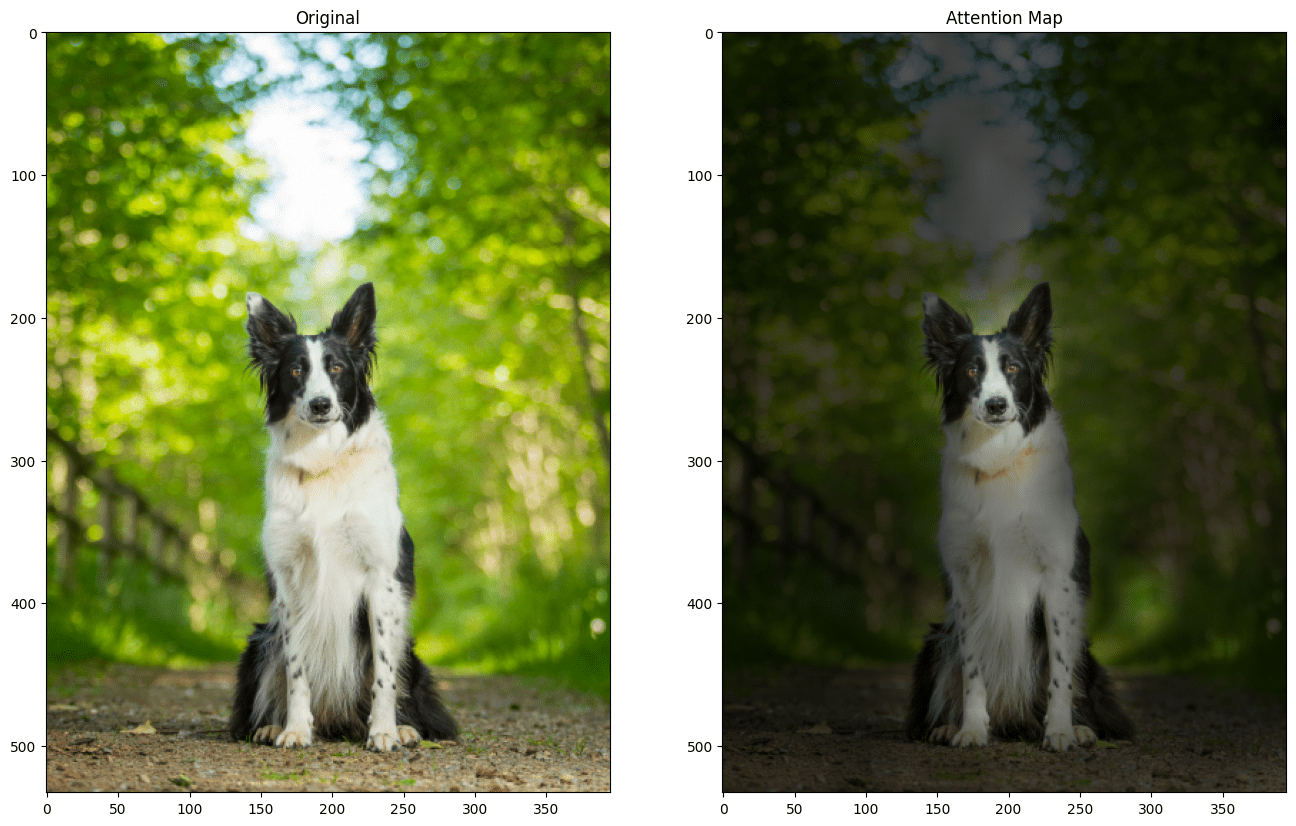

Visualizing attention

Famously, the transformer models that have revolutionized deep learning over the past few years are based on attention mechanisms. Visualizing what parts of the input a model attends to provides us with important insights:

Interpreting self-attention: Transformers utilize self-attention mechanisms to weigh the importance of different parts of the input sequence. Visualizing attention maps helps us grasp which parts the model focuses on.

Diagnosing errors: When the model attends to irrelevant parts of the input sequence, it can lead to prediction errors. Visualization allows us to detect such issues.

Exploring contextual information: Transformer models excel at capturing contextual information from input sequences. Attention maps show how the model distributes attention across the input’s elements, revealing how context is built and propagated through layers.

Understanding how transformers work: Visualizing attention and its flow through the model at different stages helps us understand how transformers process their input. Jacob Gildenblat’s Exploring Explainability for Vision Transformers takes you on a visual journey through Facebook’s Data-efficient Image Transformer (deit-tiny).
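To make the notion of an attention map concrete, here is a small NumPy sketch of scaled dot-product attention weights. It rests on simplifying assumptions: the token embeddings are random stand-ins, and they are used directly as queries and keys, whereas a real transformer first applies learned projections.

```python
import numpy as np

def attention_weights(queries, keys):
    """Scaled dot-product attention weights; each row sums to 1."""
    scores = queries @ keys.T / np.sqrt(keys.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)  # for numerical stability
    weights = np.exp(scores)
    return weights / weights.sum(axis=-1, keepdims=True)

rng = np.random.default_rng(0)
tokens = ["the", "dog", "chased", "the", "ball"]
embeddings = rng.normal(size=(len(tokens), 16))  # random stand-in embeddings

# Row i describes how strongly token i attends to every token in the sequence.
weights = attention_weights(embeddings, embeddings)

for token, row in zip(tokens, weights):
    print(f"{token:>7}: " + " ".join(f"{w:.2f}" for w in row))
```

Drawing this matrix as a heatmap, with the tokens on both axes, gives the typical attention map for a single attention head.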

Visualizing embeddings

Embeddings are high-dimensional vectors that capture semantic information. Nowadays, they are typically generated by deep learning models. Visualizing embeddings helps to make sense of this complex, high-dimensional data.

Typically, embeddings are projected down to a two- or three-dimensional space and represented by points. Standard techniques include principal component analysis, t-SNE, and UMAP. I’ve covered the latter two in depth in the section on visualizing cluster analysis in my article on machine learning visualization.

Thus, it’s no surprise that embedding visualizations reveal data patterns, similarities, and anomalies by grouping embeddings into clusters. For instance, if you visualize word embeddings with one of the methods mentioned above, you’ll find that semantically similar words will end up close together in the projection space.
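As a minimal sketch of the projection step, the snippet below implements principal component analysis with NumPy and applies it to synthetic "embeddings". The two clusters are made up for illustration; real embeddings would come from a trained model.

```python
import numpy as np

def pca_project(embeddings, n_components=2):
    """Project embeddings onto their first principal components."""
    centered = embeddings - embeddings.mean(axis=0)
    # The rows of vt are the principal directions, sorted by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T

rng = np.random.default_rng(0)

# Two synthetic clusters of 50-dimensional vectors, standing in for embeddings
# of two groups of semantically similar items.
cluster_a = rng.normal(loc=0.0, size=(20, 50))
cluster_b = rng.normal(loc=3.0, size=(20, 50))
embeddings = np.vstack([cluster_a, cluster_b])

points = pca_project(embeddings)
print(points.shape)
# A scatter plot of points[:, 0] against points[:, 1] shows two separate groups.
```

t-SNE and UMAP follow the same pattern (high-dimensional vectors in, two-dimensional points out) but preserve local neighborhoods rather than directions of maximum variance.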

The TensorFlow embedding projector gives everyone access to interactive visualizations of well-known embeddings like standard Word2vec corpora.

When to use which deep learning visualization

We can break down the deep learning model lifecycle into four different phases:

1. Pre-training

2. During training

3. Post-training

4. Inference

Each of these phases requires different visualizations.

Pre-training deep learning model visualization

During early model development, finding a suitable model architecture is the most essential task.

Architecture visualizations offer insights into how your model processes information. To understand the architecture of your deep learning model, you can visualize the layers, their connections, and the data flow between them.

Deep learning model visualization during model training

In the training phase, understanding training progress is crucial. To this end, training dynamics and gradient plots are the most helpful visualizations.

If training doesn’t yield the expected results, feature visualizations or inspecting the model’s loss landscape in detail can provide valuable insights. If you’re training transformer-based models, visualizing attention or embeddings can lead you on the right path.

Post-training deep learning model visualizations

Once the model is fully trained, the main goal of visualizations is to provide insights into how a model processes data to produce its outputs.

Activation heatmaps uncover which parts of the input are considered most important by the model. Feature visualizations reveal the features a model learned during training and help us understand what patterns a model is looking for in the input data at different layers. Deep Feature Factorization goes a step further and visualizes regions in the input space associated with the same concept.

If you’re working with transformers, attention and embedding visualizations can help you validate that your model focuses on the most important input elements and captures semantically meaningful concepts.

Inference

At inference time, when a model is used to make predictions or generate outputs, visualizations can help monitor and debug cases where a model went wrong.

The methods used are the same as the ones you might use in the post-training phase, but the goal is different: Instead of understanding the model as a whole, we’re now interested in how the model handles an individual input instance.

Conclusion

We covered a lot of ways to visualize deep learning models. We started by asking why we might want visualizations in the first place and then looked into several techniques, often accompanied by hands-on examples. Finally, we discussed where in the model lifecycle the different deep learning visualization approaches promise the most valuable insights.

I hope you enjoyed this article and have some ideas about which visualizations you’ll explore for your current deep learning projects. The visualization examples in my Colab notebook can serve as starting points. Please feel free to copy and adapt them to your needs!

FAQ

Deep learning model visualizations are approaches and techniques to render complex neural networks more understandable through graphical representations. Deep learning models consist of many layers described by millions of parameters. Model visualizations transform this complexity into a visual language that humans can comprehend.

Deep learning model visualization can be as simple as plotting curves to understand how a model’s performance changes over time or as sophisticated as generating three-dimensional heatmaps to comprehend how the different layers of a model contribute to its output.

One common approach for visualizing a deep learning model’s architecture is graphs illustrating the connections and data flow between its components.

You can use the PyTorchViz library to generate architecture visualizations for PyTorch models. If you’re using TensorFlow or Keras, check out the built-in model plotting utilities.

There are many ways to visualize deep learning models:

Deep learning model architecture visualizations uncover a model’s internal structure and how data flows through it.

Activation heatmaps and feature visualizations provide insights into what a deep learning model “looks at” and how this information is processed inside the model.

Training dynamics plots and gradient plots show how a deep learning model learns and help to identify causes of stalling training progress.

Further, many traditional machine learning visualization techniques are applicable to deep learning models as well.

To successfully integrate deep learning model visualization into your data science workflow, follow this guideline:

Establish a clear purpose. What goal are you trying to achieve through visualizations?

Choose the appropriate visualization technique. Generally, starting from an abstract high-level visualization and subsequently diving deeper is the way to go.

Select the right libraries and tools. Some visualization approaches are framework-agnostic, while other implementations are specific to a deep learning framework or a particular family of models.

Iterate and improve. It’s unlikely that your first visualization fully meets your or your stakeholders’ needs.

For a more in-depth discussion, check out the section in my article on visualizing machine learning models.

There are several ways to visualize TensorFlow models. To generate architecture visualizations, you can use the plot_model and model_to_dot utility functions in tensorflow.keras.utils.

If you would like to explore the structure and data flows within a TensorFlow model interactively, you can use TensorBoard, the open-source experiment tracking and visualization toolkit maintained by the TensorFlow team. Have a look at the official Examining the TensorFlow Graph tutorial to learn how.

You can use PyTorchViz to create model architecture visualizations for PyTorch deep learning models. These visualizations provide insights into data flow, activation functions, and how the different model components are interconnected.

To explore the loss landscape of a PyTorch model, you can generate beautiful visualizations using the code provided by the authors of the seminal paper Visualizing the Loss Landscape of Neural Nets. You can find an interactive version online.

Here are three visualization approaches that work well for convolutional neural networks:

Feature visualization: Uncover which features the CNN’s filters detect across the layers. Typically, lower layers detect basic structures like edges, while the upper layers detect more abstract concepts and relationships between image elements.

Activation maps: Get insight into which regions of the input image lead to the highest activations as data flows through the CNN. This allows you to see what the model focuses on when computing its prediction.

Deep Feature Factorization: Examine which abstract concepts the CNN has learned and verify that they are semantically meaningful.

Transformer models are based on attention mechanisms and embeddings. Naturally, this is what visualization techniques focus on:

Attention visualizations uncover what parts and elements of the input a transformer model attends to. They help you understand the contextual information the model extracts and how attention flows through the model.

Visualizing embeddings typically involves projecting these high-dimensional vectors into a two- or three-dimensional space where embedding vectors representing similar concepts are grouped closely together.

Deep learning models are highly complex. Even for data scientists and machine learning engineers, it can be difficult to grasp how data flows through them. Deep learning visualization techniques provide a wide range of ways to reduce this complexity and foster insights through graphical representations.

Visualizations are also helpful when communicating deep learning results to non-technical stakeholders. Heatmaps, in particular, are a great way to convey how a model identifies relevant information in the input and transforms it into a prediction.