This post is co-written with Shamik Ray, Srivyshnav K S, Jagmohan Dhiman and Soumya Kundu from Twilio.

Today's leading companies trust Twilio's Customer Engagement Platform (CEP) to build direct, personalized relationships with their customers everywhere in the world. Twilio enables companies to use communications and data to add intelligence and security to every step of the customer journey, from sales and marketing to growth and customer service, and many more engagement use cases in a flexible, programmatic way. Across 180 countries, millions of developers and hundreds of thousands of businesses use Twilio to create magical experiences for their customers. Being one of the largest AWS customers, Twilio engages with data and artificial intelligence and machine learning (AI/ML) services to run their daily workloads. This post outlines the steps AWS and Twilio took to migrate Twilio's existing machine learning operations (MLOps), the implementation of training models, and running batch inferences to Amazon SageMaker.

ML models don't operate in isolation. They must integrate into existing production systems and infrastructure to deliver value. This necessitates considering the entire ML lifecycle during design and development. With the right processes and tools, MLOps enables organizations to reliably and efficiently adopt ML across their teams for their specific use cases. SageMaker includes a suite of features for MLOps that includes Amazon SageMaker Pipelines and Amazon SageMaker Model Registry. Pipelines allow for straightforward creation and management of ML workflows while also offering storage and reuse capabilities for workflow steps. The model registry simplifies model deployment by centralizing model tracking.

This post focuses on how to achieve flexibility in using your data source of choice and integrate it seamlessly with Amazon SageMaker Processing jobs. With SageMaker Processing jobs, you can use a simplified, managed experience to run data preprocessing or postprocessing and model evaluation workloads on the SageMaker platform.

Twilio needed to implement an MLOps pipeline that queried data from PrestoDB. PrestoDB is an open source SQL query engine that is designed for fast analytic queries against data of any size from multiple sources.

In this post, we show you a step-by-step implementation of this integration, from model training through batch inference to real-time deployment.

Use case overview

Twilio trained a binary classification ML model using scikit-learn's RandomForestClassifier to integrate into their MLOps pipeline. This model is used as part of a batch process that runs periodically for their daily workloads, making training and inference workflows repeatable to accelerate model development. The training data used for this pipeline is made available through PrestoDB and read into Pandas through the PrestoDB Python client.

The end goal was to convert the existing steps into two pipelines: a training pipeline and a batch transform pipeline that connected the data queried from PrestoDB to a SageMaker Processing job, and finally deploy the trained model to a SageMaker endpoint for real-time inference.

In this post, we use an open source dataset available through the TPCH connector that is packaged with PrestoDB to illustrate the end-to-end workflow that Twilio used. Twilio was able to use this solution to migrate their existing MLOps pipeline to SageMaker. All the code for this solution is available in the GitHub repo.

Solution overview

This solution is divided into three main steps:

Model training pipeline – In this step, we connect a SageMaker Processing job to fetch data from a PrestoDB instance, train and tune the ML model, evaluate it, and register it with the SageMaker model registry.

Batch transform pipeline – In this step, we run a preprocessing data step that reads data from a PrestoDB instance and runs batch inference on the registered ML model (from the model registry) that we approve as a part of this pipeline. This model is approved either programmatically or manually through the model registry.

Real-time inference – In this step, we deploy the latest approved model as a SageMaker endpoint for real-time inference.

All pipeline parameters used in this solution exist in a single config.yml file. This file includes the necessary AWS and PrestoDB credentials to connect to the PrestoDB instance, information on the training hyperparameters, and the SQL queries that are run at the training and inference steps to read data from PrestoDB. This solution is highly customizable for industry-specific use cases so that it can be used with minimal code changes through simple updates in the config file.

The following code shows an example of how a query is configured within the config.yml file. This query is used at the data processing step of the training pipeline to fetch data from the PrestoDB instance. Here, we predict whether an order is a high_value_order or a low_value_order based on the orderpriority as given from the TPC-H data. For more information on the TPC-H data, its database entities, relationships, and characteristics, refer to TPC Benchmark H. You can change the query for your use case within the config file and run the solution with no code changes.
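As a minimal sketch, this is what such an entry could look like once config.yml has been loaded into Python. The key names (`training_step`, `query`), the `tpch.tiny` catalog and schema, and the rule that maps orderpriority to the two labels are assumptions for illustration, not the values used in the repository.

```python
# Hypothetical shape of the loaded config; key names and the SQL are assumptions.
config = {
    "training_step": {
        "query": """
            SELECT
                orderkey,
                totalprice,
                shippriority,
                CASE
                    WHEN orderpriority IN ('1-URGENT', '2-HIGH') THEN 1  -- high_value_order
                    ELSE 0                                               -- low_value_order
                END AS high_value_order
            FROM tpch.tiny.orders
        """,
    },
}


def get_training_query(cfg: dict) -> str:
    """Return the training query without surrounding whitespace or a trailing semicolon."""
    return cfg["training_step"]["query"].strip().rstrip(";")
```

Because the label is derived inside the SQL, changing the prediction target only requires editing the query text in the config.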

The main steps of this solution are described in detail in the following sections.

Data preparation and training

The data preparation and training pipeline includes the following steps:

The training data is read from a PrestoDB instance, and any feature engineering needed is done as part of the SQL queries run in PrestoDB at retrieval time. The queries that are used to fetch data at the training and batch inference steps are configured in the config file.

We use the FrameworkProcessor with SageMaker Processing jobs to read data from PrestoDB using the Python PrestoDB client.

For the training and tuning step, we use the SKLearn estimator from the SageMaker SDK and the RandomForestClassifier from scikit-learn to train the ML model. The HyperparameterTuner class is used for running automatic model tuning, which finds the best version of the model by running many training jobs on the dataset using the algorithm and the ranges of hyperparameters.

The model evaluation step checks that the trained and tuned model has an accuracy level above a user-defined threshold and only then registers that model within the model registry. If the model accuracy doesn't meet the threshold, the pipeline fails and the model is not registered with the model registry.

The model training pipeline is then run with pipeline.start, which invokes and instantiates all the preceding steps.
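The data retrieval described in the first two steps can be sketched as follows. This is a minimal illustration that assumes the presto-python-client package (`prestodb`), HTTPS with basic authentication, and the `tpch`/`tiny` catalog and schema. The function names are hypothetical and are not taken from the repository.

```python
from typing import Any, Dict, List, Sequence


def rows_to_records(columns: Sequence[str], rows: Sequence[Sequence[Any]]) -> List[Dict[str, Any]]:
    """Pair each row returned by a DB-API cursor with its column names."""
    return [dict(zip(columns, row)) for row in rows]


def fetch_from_presto(query: str, host: str, port: int, user: str, password: str,
                      catalog: str = "tpch", schema: str = "tiny") -> List[Dict[str, Any]]:
    """Run a query through the PrestoDB Python client and return one dict per row."""
    import prestodb  # imported lazily; needs `pip install presto-python-client`

    conn = prestodb.dbapi.connect(
        host=host,
        port=port,
        user=user,
        catalog=catalog,
        schema=schema,
        http_scheme="https",  # assumed; basic authentication is sent over HTTPS
        auth=prestodb.auth.BasicAuthentication(user, password),
    )
    cursor = conn.cursor()
    cursor.execute(query)
    rows = cursor.fetchall()
    # After a fetch, cursor.description holds one tuple per column, name first.
    columns = [col[0] for col in cursor.description]
    return rows_to_records(columns, rows)
```

The returned list of dicts can be passed directly to `pandas.DataFrame(...)` inside the processing script before the data is split and written out.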

Batch transform

The batch transform pipeline consists of the following steps:

The pipeline implements a data preparation step that retrieves data from a PrestoDB instance (using a data preprocessing script) and stores the batch data in Amazon Simple Storage Service (Amazon S3).

The latest model registered in the model registry from the training pipeline is approved.

A Transformer instance is used to run a batch transform job to get inferences on the entire dataset stored in Amazon S3 from the data preparation step and store the output in Amazon S3.

SageMaker real-time inference

The SageMaker endpoint pipeline consists of the following steps:

The latest approved model is retrieved from the model registry using the describe_model_package function from the SageMaker SDK.

The latest approved model is deployed as a real-time SageMaker endpoint.

The model is deployed on an ml.c5.xlarge instance with a minimum instance count of 1 and a maximum instance count of 3 (configurable by the user) with the automatic scaling policy set to ENABLED. This removes unnecessary instances so you don't pay for provisioned instances that you aren't using.

Prerequisites

To implement the solution provided in this post, you should have an AWS account, a SageMaker domain to access Amazon SageMaker Studio, and familiarity with SageMaker, Amazon S3, and PrestoDB.

The following prerequisites also need to be in place before running this code:

PrestoDB – We use the built-in datasets available in PrestoDB through the TPCH connector for this solution. Follow the instructions in the GitHub README.md to set up PrestoDB on an Amazon Elastic Compute Cloud (Amazon EC2) instance in your account. If you already have access to a PrestoDB instance, you can skip this step but note its connection details (see the presto section in the config file). When you have your PrestoDB credentials, fill out the presto section in the config file with your host public IP, port, credentials, catalog, and schema.

VPC network configurations – We also define the encryption, network isolation, and VPC configurations of the ML model and operations in the config file. For more information on network configurations and preferences, refer to Connect to SageMaker Within your VPC. If you are using the default VPC and security groups, you can leave these configuration parameters empty (see the example in this configuration file). If not, then in the aws section, specify the enable_network_isolation status, security_group_ids, and subnets based on your network isolation preferences.

IAM role – Set up an AWS Identity and Access Management (IAM) role with appropriate permissions to allow SageMaker to access AWS Secrets Manager, Amazon S3, and other services within your AWS account. Until an AWS CloudFormation template is provided that creates the role with the requisite IAM permissions, use a SageMaker role that has the AmazonSageMakerFullAccess AWS managed policy attached.

Secrets Manager secret – Set up a secret in Secrets Manager for the PrestoDB user name and password. Call the secret prestodb-credentials and add a username field and a password field to it. For instructions, refer to Create and manage secrets with AWS Secrets Manager.
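Once the secret exists, a script can read it at run time instead of keeping credentials in the config file. The following is a minimal sketch, assuming the secret is stored as a JSON key/value secret with the `username` and `password` fields described above. The helper name is hypothetical.

```python
import json
from typing import Any, Tuple


def get_presto_credentials(secrets_client: Any,
                           secret_id: str = "prestodb-credentials") -> Tuple[str, str]:
    """Read the username and password fields from a Secrets Manager secret."""
    response = secrets_client.get_secret_value(SecretId=secret_id)
    # Key/value secrets are returned as a JSON document in SecretString.
    secret = json.loads(response["SecretString"])
    return secret["username"], secret["password"]
```

In a processing job, this could be called as `get_presto_credentials(boto3.client("secretsmanager"))`, provided the job's IAM role is allowed to read the secret.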

Deploy the solution

Complete the following steps to deploy the solution:

Clone the GitHub repository in SageMaker Studio. For instructions, see Clone a Git Repository in SageMaker Studio Classic.

Edit the config.yml file as follows:

Edit the parameter values in the presto section. These parameters define the connectivity to PrestoDB.

Edit the parameter values in the aws section. These parameters define the network connectivity, IAM role, bucket name, AWS Region, and other AWS Cloud-related parameters.

Edit the parameter values in the sections corresponding to the pipeline steps (training_step, tuning_step, transform_step, and so on).

Review all the parameters in these sections carefully and edit them as appropriate for your use case.

When the prerequisites are complete and the config.yml file is set up correctly, you're ready to run the mlops-pipeline-prestodb solution. The following architecture diagram provides a visual representation of the steps that you implement.

The diagram shows the following three steps:

Part 1: Training – This pipeline includes the data preprocessing step, the training and tuning step, the model evaluation step, the condition step, and the register model step. The train, test, and validation datasets and the evaluation report that are generated in this pipeline are sent to an S3 bucket.

Part 2: Batch transform – This pipeline includes the batch data preprocessing step, approving the latest model from the model registry, creating the model instance, and performing batch transformation on data that is stored in and retrieved from an S3 bucket.

The PrestoDB server is hosted on an EC2 instance, with credentials stored in Secrets Manager.

Part 3: SageMaker real-time inference – Finally, the latest approved model from the SageMaker model registry is deployed as a SageMaker real-time endpoint for inference.

Test the solution

In this section, we walk through the steps of running the solution.

Training pipeline

Complete the following steps to run the training pipeline (0_model_training_pipeline.ipynb):

On the SageMaker Studio console, choose 0_model_training_pipeline.ipynb in the navigation pane.

When the notebook is open, on the Run menu, choose Run All Cells to run the code in this notebook.

This notebook demonstrates how you can use SageMaker Pipelines to string together a sequence of data processing, model training, tuning, and evaluation steps to train a binary classification ML model using scikit-learn.

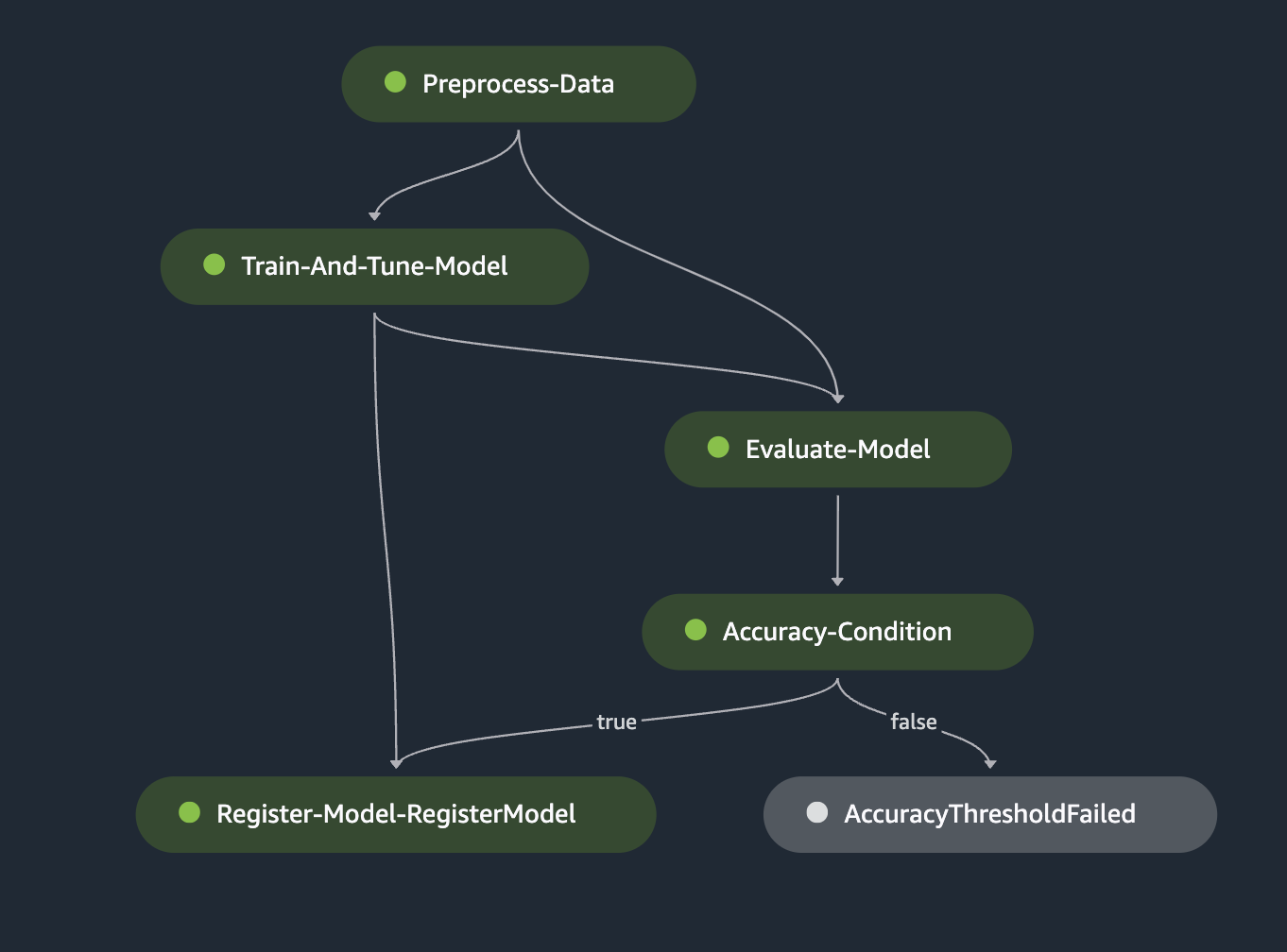

At the end of this run, navigate to pipelines in the navigation pane. Your pipeline structure on SageMaker Pipelines should look like the following figure.

The training pipeline consists of the following steps that are implemented by the notebook run:

Preprocess the data – In this step, we create a processing job for data preprocessing. For more information on processing jobs, see Process data. We use a preprocessing script to connect to and query data from a PrestoDB instance using the user-specified SQL query in the config file. This step splits the data retrieved from PrestoDB and sends it as train, test, and validation files to an S3 bucket. The ML model is trained using the data in these files.

The sklearn_processor is used in the ProcessingStep to run the scikit-learn script that preprocesses data. The step is defined as follows:
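A minimal sketch of such a step definition, assuming the SageMaker Python SDK. The script name, container version, instance type, step name, and config keys other than `config['scripts']['source_dir']` are assumptions for illustration, not the repository's values.

```python
from typing import Dict, List


def build_processing_arguments(cfg: Dict) -> List[str]:
    """Flatten config values into the CLI-style arguments the script receives."""
    presto = cfg["presto"]  # hypothetical key layout
    return [
        "--host", str(presto["host"]),
        "--port", str(presto["port"]),
        "--query", cfg["training_step"]["query"],
    ]


def make_preprocess_step(cfg: Dict, role: str, output_s3_uri: str):
    """Build a ProcessingStep that runs the preprocessing script (needs the sagemaker SDK)."""
    from sagemaker.processing import FrameworkProcessor, ProcessingOutput
    from sagemaker.sklearn.estimator import SKLearn
    from sagemaker.workflow.pipeline_context import PipelineSession
    from sagemaker.workflow.steps import ProcessingStep

    sklearn_processor = FrameworkProcessor(
        estimator_cls=SKLearn,
        framework_version="1.2-1",     # assumed scikit-learn container version
        role=role,
        instance_type="ml.m5.xlarge",  # assumed instance type
        instance_count=1,
        sagemaker_session=PipelineSession(),
    )
    # Under a PipelineSession, run() does not start a job; it returns step arguments.
    step_args = sklearn_processor.run(
        code="presto_preprocess_for_training.py",  # hypothetical script name
        source_dir=cfg["scripts"]["source_dir"],
        outputs=[
            ProcessingOutput(
                output_name="train",
                source="/opt/ml/processing/train",
                destination=output_s3_uri,
            )
        ],
        arguments=build_processing_arguments(cfg),
    )
    return ProcessingStep(name="Preprocessing", step_args=step_args)
```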

Here, we use config['scripts']['source_dir'], which points to the data preprocessing script that connects to the PrestoDB instance. Parameters used as arguments in step_args are configurable and fetched from the config file.

Train the model – In this step, we create a training job to train a model. For more information on training jobs, see Train a Model with Amazon SageMaker. Here, we use the Scikit-learn Estimator from the SageMaker SDK to handle the end-to-end training and deployment of custom scikit-learn code. The RandomForestClassifier is used to train the ML model for our binary classification use case. The HyperparameterTuner class is used for running automatic model tuning to determine the set of hyperparameters that provide the best performance based on a user-defined metric threshold (for example, maximizing the AUC metric).

In the following code, the sklearn_estimator object is used with parameters that are configured in the config file and uses a training script to train the ML model. This step accesses the train, test, and validation files that were created as a part of the previous data preprocessing step.
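A minimal sketch of a training and tuning step along these lines, assuming the SageMaker Python SDK. The training script name, the `tuning_step` key layout, the metric regex, the job counts, and the instance type are assumptions for illustration.

```python
from typing import Dict, Tuple


def integer_ranges_from_config(cfg: Dict) -> Dict[str, Tuple[int, int]]:
    """Read (min, max) pairs for each tunable hyperparameter from the config."""
    ranges = cfg["tuning_step"]["hyperparameter_ranges"]  # hypothetical key layout
    return {name: (int(b["min"]), int(b["max"])) for name, b in ranges.items()}


def make_tuning_step(cfg: Dict, role: str, train_s3_uri: str, test_s3_uri: str):
    """Build a TuningStep around an SKLearn estimator (needs the sagemaker SDK)."""
    from sagemaker.inputs import TrainingInput
    from sagemaker.sklearn.estimator import SKLearn
    from sagemaker.tuner import HyperparameterTuner, IntegerParameter
    from sagemaker.workflow.pipeline_context import PipelineSession
    from sagemaker.workflow.steps import TuningStep

    sklearn_estimator = SKLearn(
        entry_point="training.py",     # hypothetical training script
        source_dir=cfg["scripts"]["source_dir"],
        framework_version="1.2-1",     # assumed scikit-learn container version
        instance_type="ml.m5.xlarge",  # assumed instance type
        role=role,
        sagemaker_session=PipelineSession(),
    )
    tuner = HyperparameterTuner(
        estimator=sklearn_estimator,
        objective_metric_name="AUC",
        objective_type="Maximize",
        # The training script is assumed to print a line such as "AUC: 0.81".
        metric_definitions=[{"Name": "AUC", "Regex": "AUC: ([0-9.]+)"}],
        hyperparameter_ranges={
            name: IntegerParameter(low, high)
            for name, (low, high) in integer_ranges_from_config(cfg).items()
        },
        max_jobs=4,           # assumed
        max_parallel_jobs=2,  # assumed
    )
    step_args = tuner.fit(
        inputs={
            "train": TrainingInput(s3_data=train_s3_uri, content_type="text/csv"),
            "test": TrainingInput(s3_data=test_s3_uri, content_type="text/csv"),
        }
    )
    return TuningStep(name="TrainAndTuneModel", step_args=step_args)
```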

Evaluate the model – This step checks whether the trained and tuned model has an accuracy level above a user-defined threshold, and only then registers the model with the model registry. If the model accuracy doesn't meet the user-defined threshold, the pipeline fails and the model is not registered with the model registry. We use the ScriptProcessor with an evaluation script that a user creates to evaluate the trained model based on a metric of choice.

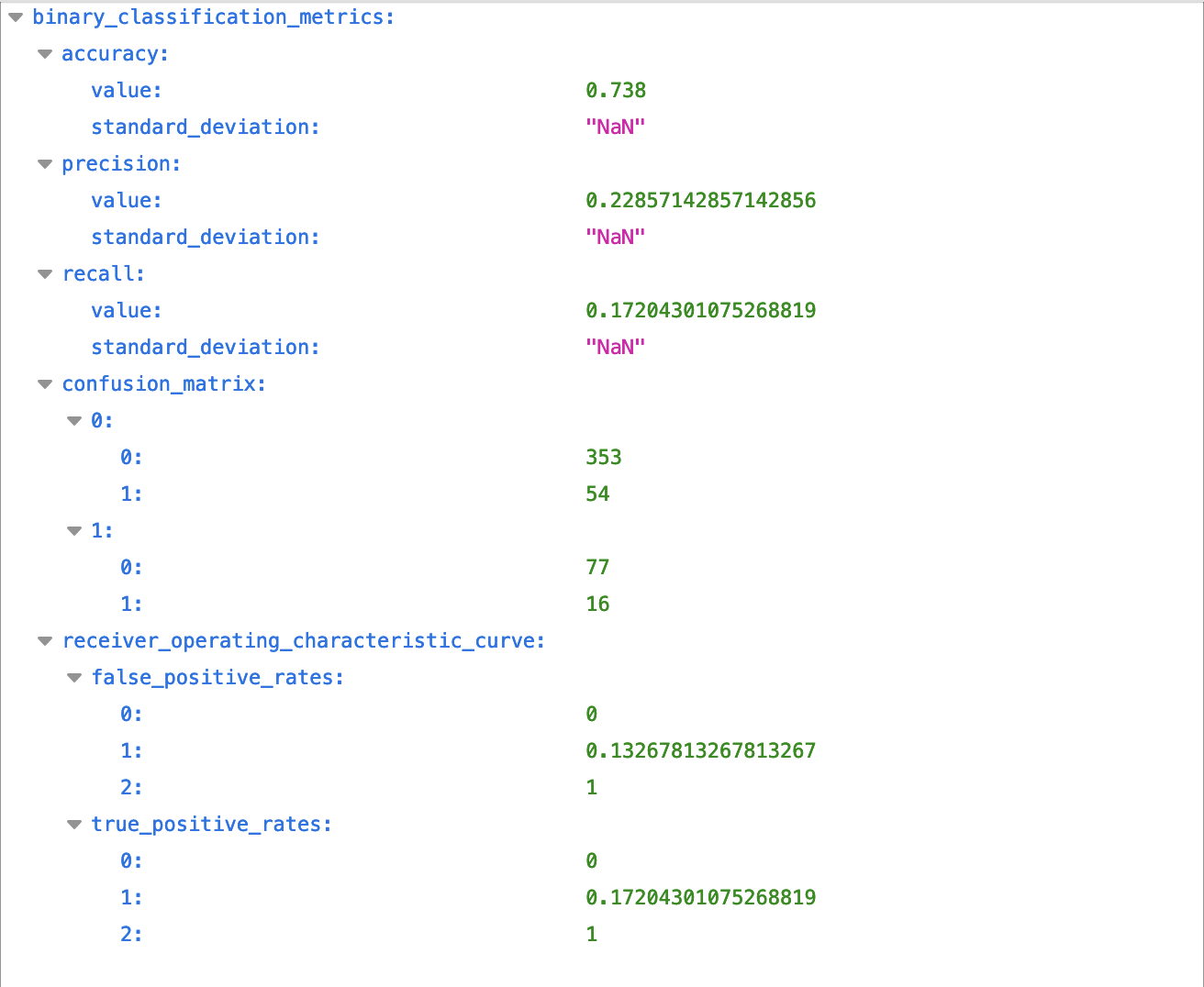

The evaluation step uses the evaluation script as a code entry. This script prepares the features and target values, and calculates the prediction probabilities using model.predict. At the end of the run, an evaluation report is sent to Amazon S3 that contains information on precision, recall, and accuracy metrics.
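The metric calculation and report writing can be sketched in plain Python as follows. The function names and the report layout (`binary_classification_metrics` with a `value` per metric) are assumptions for illustration. The real evaluation script may use scikit-learn's metric functions and a different report structure.

```python
import json
from typing import Dict, Sequence


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Compute precision, recall, and accuracy for 0/1 labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    correct = sum(1 for t, p in pairs if t == p)
    return {
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
        "accuracy": correct / len(pairs) if pairs else 0.0,
    }


def write_evaluation_report(metrics: Dict[str, float], path: str) -> Dict:
    """Write the metrics as a JSON report and return the report dict."""
    report = {
        "binary_classification_metrics": {
            name: {"value": value} for name, value in metrics.items()
        }
    }
    with open(path, "w") as fh:
        json.dump(report, fh, indent=2)
    return report
```

In a processing job, the report would typically be written under the job's output directory so that SageMaker uploads it to Amazon S3 when the job finishes.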

The following screenshot shows an example of an evaluation report.

Add conditions – After the model is evaluated, we can add conditions to the pipeline with a ConditionStep. This step registers the model only if the given user-defined metric threshold is met. In our solution, we only want to register the new model version with the model registry if the new model meets a specific accuracy condition of above 70%.

If the accuracy condition is not met, a step_fail step is run that sends an error message to the user, and the pipeline fails. For instance, because the user-defined accuracy condition is set to 0.7 in the config file, and the accuracy calculated during the evaluation step exceeds it (73.8%), the outcome of this step is set to True and the model moves to the last step of the training pipeline.
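The gate described above can be sketched with the SageMaker Python SDK as follows. The step names, the JSON path into the evaluation report, and the choice of a strict greater-than comparison are assumptions for illustration.

```python
def meets_accuracy_threshold(accuracy: float, threshold: float = 0.7) -> bool:
    """Plain-Python equivalent of the pipeline condition: strictly above the threshold."""
    return accuracy > threshold


def make_condition_step(evaluate_step_name: str, evaluation_report, register_step,
                        threshold: float = 0.7):
    """Register the model if accuracy exceeds the threshold, otherwise fail the pipeline."""
    from sagemaker.workflow.condition_step import ConditionStep
    from sagemaker.workflow.conditions import ConditionGreaterThan
    from sagemaker.workflow.fail_step import FailStep
    from sagemaker.workflow.functions import JsonGet

    step_fail = FailStep(
        name="AccuracyBelowThreshold",  # hypothetical step name
        error_message=f"Model accuracy did not exceed {threshold}",
    )
    condition = ConditionGreaterThan(
        left=JsonGet(
            step_name=evaluate_step_name,
            property_file=evaluation_report,  # PropertyFile declared on the evaluation step
            json_path="binary_classification_metrics.accuracy.value",  # assumed layout
        ),
        right=threshold,
    )
    return ConditionStep(
        name="CheckAccuracy",  # hypothetical step name
        conditions=[condition],
        if_steps=[register_step],
        else_steps=[step_fail],
    )
```

With the numbers from the example, `meets_accuracy_threshold(0.738)` is true, so the pipeline would follow the `if_steps` branch and register the model.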

Register the model – The RegisterModel step registers a sagemaker.model.Model or a sagemaker.pipeline.PipelineModel with the SageMaker model registry. When the trained model meets the model performance requirements, a new version of the model is registered with the SageMaker model registry.

The model is registered with the model registry with an approval status set to PendingManualApproval. This means the model can't be deployed on a SageMaker endpoint unless its status in the registry is changed to Approved manually on the SageMaker console, programmatically, or through an AWS Lambda function.

Now that the model is registered, you can access the registered model manually on the SageMaker Studio model registry console or programmatically in the next notebook, approve it, and run the batch transform pipeline.

Batch transform pipeline

Complete the following steps to run the batch transform pipeline (1_batch_transform_pipeline.ipynb):

On the SageMaker Studio console, choose 1_batch_transform_pipeline.ipynb in the navigation pane.

When the notebook is open, on the Run menu, choose Run All Cells to run the code in this notebook.

This notebook runs a batch transform pipeline using the model trained in the previous notebook.

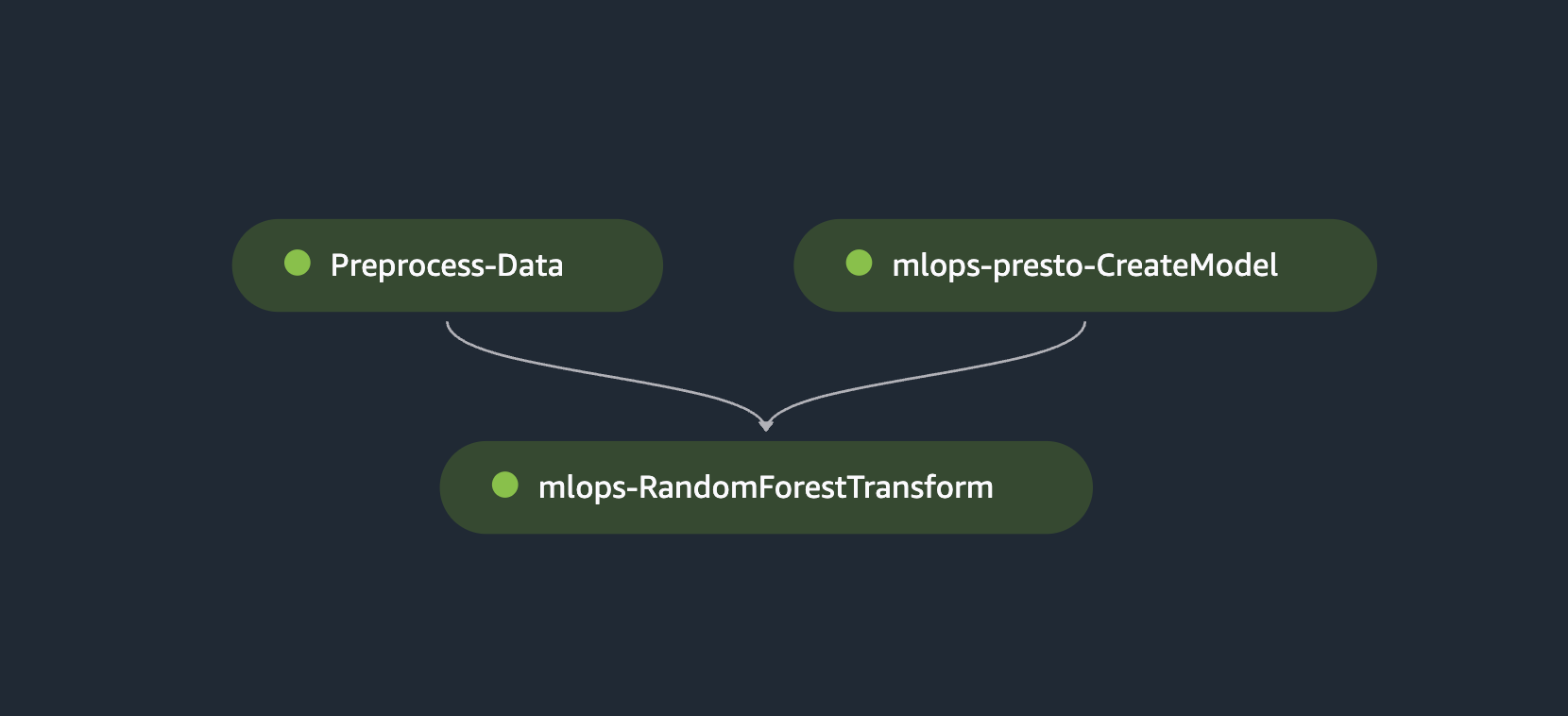

At the end of the batch transform pipeline, your pipeline structure on SageMaker Pipelines should look like the following figure.

The batch transform pipeline consists of the following steps that are implemented by the notebook run:

Extract the latest approved model from the SageMaker model registry – In this step, we extract the latest model from the model registry and set the ModelApprovalStatus to Approved:
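A minimal sketch of this approval, assuming a Boto3 SageMaker client and a model package group created by the training pipeline. The helper name is hypothetical.

```python
from typing import Any


def approve_latest_model_package(sm_client: Any, model_package_group_name: str) -> str:
    """Mark the most recently created model package in a group as Approved."""
    response = sm_client.list_model_packages(
        ModelPackageGroupName=model_package_group_name,
        SortBy="CreationTime",
        SortOrder="Descending",
        MaxResults=1,
    )
    packages = response["ModelPackageSummaryList"]
    if not packages:
        raise ValueError(f"No model packages found in {model_package_group_name}")
    arn = packages[0]["ModelPackageArn"]
    sm_client.update_model_package(ModelPackageArn=arn, ModelApprovalStatus="Approved")
    return arn
```

It could be called as `approve_latest_model_package(boto3.client("sagemaker"), group_name)`, where `group_name` is the model package group used when the model was registered.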

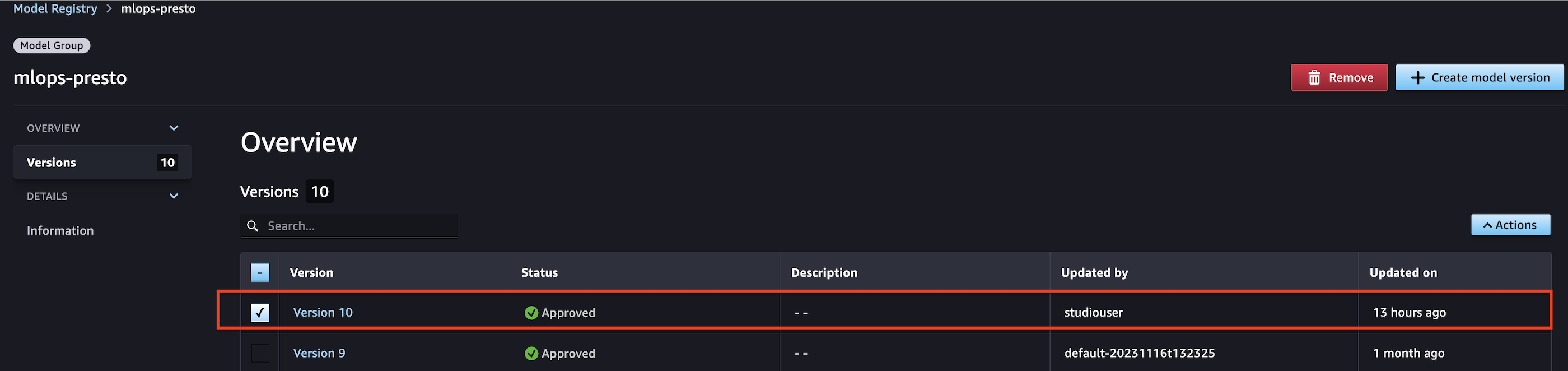

Now we have extracted the latest model from the SageMaker model registry and programmatically approved it. You can also approve the model manually on the SageMaker model registry page in SageMaker Studio, as shown in the following screenshot.

Read raw data for inference from PrestoDB and store it in an S3 bucket – After the latest model is approved, batch data is fetched from the PrestoDB instance and used for the batch transform step. In this step, we use a batch preprocessing script that queries data from PrestoDB and saves it in a batch directory within an S3 bucket. The query that is used to fetch batch data is configured by the user within the config file in the transform_step section.

After the batch data is extracted into the S3 bucket, we create a model instance and point to the inference.py script, which contains code that runs as part of getting inference from the trained model.

Create a batch transform step to perform inference on the batch data stored in Amazon S3 – Now that a model instance is created, create a Transformer instance with the appropriate model type, compute instance type, and desired output S3 URI. Specifically, pass in the ModelName from the CreateModelStep step_create_model properties. The CreateModelStep properties attribute matches the object model of the DescribeModel response object. Use a transform step for batch transformation to run inference on an entire dataset. For more information about batch transform, see Run Batch Transforms with Inference Pipelines.

A transform step requires a transformer and the data on which to run batch inference.

Now that the transformer object is created, pass the transformer input (which contains the batch data from the batch preprocess step) into the TransformStep declaration. Store the output of this pipeline in an S3 bucket.
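The transformer and transform step can be sketched as follows, assuming the SageMaker Python SDK and CSV batch data. The step name, instance type, output prefix, and content types are assumptions for illustration.

```python
def batch_output_uri(bucket: str, prefix: str) -> str:
    """Build the S3 URI under which batch transform results are stored."""
    return f"s3://{bucket}/{prefix.strip('/')}/batch_transform_output"


def make_transform_step(step_create_model, batch_data_s3_uri: str, output_s3_uri: str):
    """Build a TransformStep that scores the batch data (needs the sagemaker SDK)."""
    from sagemaker.inputs import TransformInput
    from sagemaker.transformer import Transformer
    from sagemaker.workflow.steps import TransformStep

    transformer = Transformer(
        # ModelName is resolved at run time from the CreateModelStep properties.
        model_name=step_create_model.properties.ModelName,
        instance_type="ml.m5.xlarge",  # assumed instance type
        instance_count=1,
        output_path=output_s3_uri,
        accept="text/csv",             # assumed output format
    )
    return TransformStep(
        name="BatchTransform",  # hypothetical step name
        transformer=transformer,
        inputs=TransformInput(
            data=batch_data_s3_uri,
            content_type="text/csv",  # assumed input format
            split_type="Line",
        ),
    )
```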

SageMaker real-time inference

Complete the following steps to run the real-time inference pipeline (2_realtime_inference.ipynb):

On the SageMaker Studio console, choose 2_realtime_inference_pipeline.ipynb in the navigation pane.

When the notebook is open, on the Run menu, choose Run All Cells to run the code in this notebook.

This notebook extracts the latest approved model from the model registry and deploys it as a SageMaker endpoint for real-time inference. It does so by completing the following steps:

Extract the latest approved model from the SageMaker model registry – To deploy a real-time SageMaker endpoint, first fetch the image URI of your choice and extract the latest approved model from the model registry. After the latest approved model is extracted, we use a container list with the specified inference.py as the script for the deployed model to use at inference. This model creation and endpoint deployment are specific to the scikit-learn model configuration.

In the following code, we use the inference.py file specific to the scikit-learn model. We then create our endpoint configuration, setting our ManagedInstanceScaling to ENABLED with our desired MaxInstanceCount and MinInstanceCount for automatic scaling:
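A minimal sketch of the endpoint configuration, assuming a Boto3 SageMaker client and a model that has already been created. The variant name and helper names are hypothetical. The instance type and counts mirror the defaults described earlier in this post.

```python
from typing import Any, Dict


def build_production_variant(model_name: str, instance_type: str = "ml.c5.xlarge",
                             min_instances: int = 1, max_instances: int = 3) -> Dict[str, Any]:
    """Build a production variant with managed instance scaling enabled."""
    if min_instances < 1 or max_instances < min_instances:
        raise ValueError("expected 1 <= min_instances <= max_instances")
    return {
        "VariantName": "AllTraffic",  # hypothetical variant name
        "ModelName": model_name,
        "InstanceType": instance_type,
        "InitialInstanceCount": min_instances,
        "ManagedInstanceScaling": {
            "Status": "ENABLED",
            "MinInstanceCount": min_instances,
            "MaxInstanceCount": max_instances,
        },
    }


def create_endpoint_config(sm_client: Any, config_name: str, model_name: str, **variant_kwargs):
    """Create the endpoint configuration from a single production variant."""
    return sm_client.create_endpoint_config(
        EndpointConfigName=config_name,
        ProductionVariants=[build_production_variant(model_name, **variant_kwargs)],
    )
```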

Run inference on the deployed real-time endpoint – After you have extracted the latest approved model, created the model from the desired image URI, and configured the endpoint configuration, you can deploy it as a real-time SageMaker endpoint.

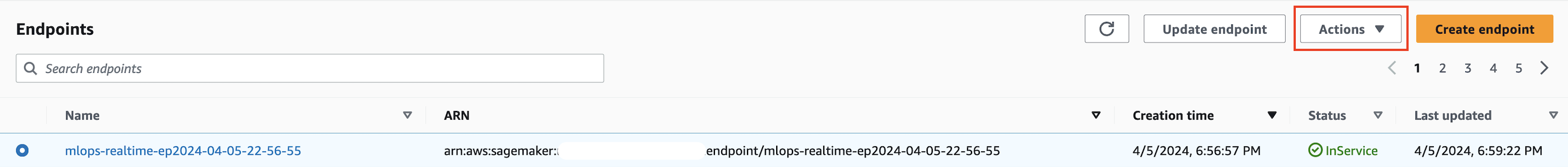

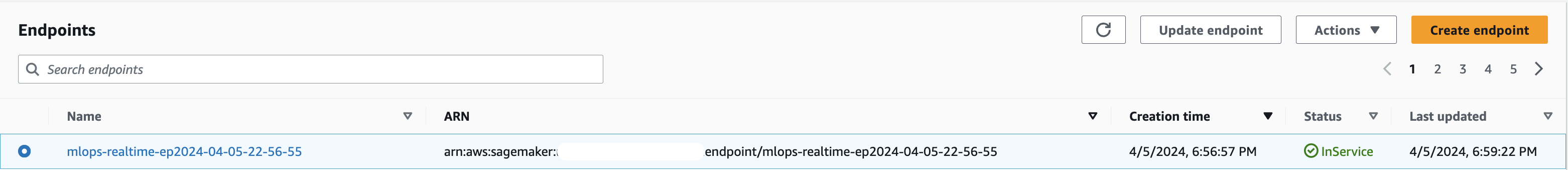

Upon deployment, you can view the endpoint in service on the SageMaker Endpoints page.

Now you can run inference against the data extracted from PrestoDB:
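A minimal sketch of such a request, assuming a Boto3 `sagemaker-runtime` client and an inference.py that accepts CSV rows. The payload format and helper names are assumptions. Adjust them to whatever your inference script expects.

```python
from typing import Any, Iterable, Sequence


def rows_to_csv(rows: Iterable[Sequence[Any]]) -> str:
    """Serialize feature rows as newline-separated CSV without a header."""
    return "\n".join(",".join(str(value) for value in row) for row in rows)


def predict(runtime_client: Any, endpoint_name: str, rows: Iterable[Sequence[Any]]) -> str:
    """Send the rows to the endpoint and return the raw response body as text."""
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",  # assumed; must match what inference.py parses
        Body=rows_to_csv(rows),
    )
    return response["Body"].read().decode("utf-8")
```

It could be called as `predict(boto3.client("sagemaker-runtime"), endpoint_name, rows)`, where `rows` holds feature values queried from PrestoDB.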

Results

Here is an example of an inference request and response from the real-time endpoint using the implementation above:

Inference request format (view and change this example as you would like for your custom use case)

Response from the real-time endpoint

Clean up

To clean up the endpoint used in this solution and avoid extra charges, complete the following steps:

On the SageMaker console, choose Endpoints in the navigation pane.

Select the endpoint to delete.

On the Actions menu, choose Delete.
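The same cleanup can be done programmatically. The following is a minimal sketch, assuming a Boto3 SageMaker client. It also removes the endpoint configuration, which the console steps above leave in place. The helper name is hypothetical.

```python
from typing import Any


def delete_endpoint_and_config(sm_client: Any, endpoint_name: str) -> str:
    """Delete an endpoint and its endpoint configuration, returning the config name."""
    # Look up the configuration name before the endpoint is deleted.
    config_name = sm_client.describe_endpoint(EndpointName=endpoint_name)["EndpointConfigName"]
    sm_client.delete_endpoint(EndpointName=endpoint_name)
    sm_client.delete_endpoint_config(EndpointConfigName=config_name)
    return config_name
```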

Conclusion

In this post, we demonstrated an end-to-end MLOps solution on SageMaker. The process involved fetching data by connecting a SageMaker Processing job to a PrestoDB instance, followed by training, evaluating, and registering the model. We approved the latest registered model from the training pipeline and ran batch inference against it using batch data queried from PrestoDB and stored in Amazon S3. Finally, we deployed the latest approved model as a real-time SageMaker endpoint to run inferences.

The rise of generative AI increases the demand for training, deploying, and running ML models, and consequently, the use of data. By integrating SageMaker Processing jobs with PrestoDB, you can seamlessly migrate your workloads to SageMaker pipelines without additional data preparation, storage, or accessibility burdens. You can build, train, evaluate, run batch inferences, and deploy models as real-time endpoints while using your existing data engineering pipelines with minimal or no code changes.

Explore SageMaker Pipelines and open source data querying engines like PrestoDB, and build a solution using the sample implementation provided.

Get started today by referring to the GitHub repository.

For more information and tutorials on SageMaker Pipelines, refer to the SageMaker Pipelines documentation.

About the Authors

Madhur Prashant is an AI and ML Solutions Architect at Amazon Web Services. He is passionate about the intersection of human thinking and generative AI. His interests lie in generative AI, specifically building solutions that are helpful and harmless, and most of all optimal for customers. Outside of work, he loves doing yoga, hiking, spending time with his twin, and playing the guitar.

Amit Arora is an AI and ML Specialist Architect at Amazon Web Services, helping enterprise customers use cloud-based machine learning services to rapidly scale their innovations. He is also an adjunct lecturer in the MS data science and analytics program at Georgetown University in Washington D.C.

Antara Raisa is an AI and ML Solutions Architect at Amazon Web Services supporting strategic customers based out of Dallas, Texas. She also has experience working with large enterprise partners at AWS, where she worked as a Partner Success Solutions Architect for digital-centered customers.

Johnny Chivers is a Senior Solutions Architect working within the Strategic Accounts team at AWS. With over 10 years of experience helping customers adopt new technologies, he guides them through architecting end-to-end solutions spanning infrastructure, big data, and AI.

Shamik Ray is a Senior Engineering Manager at Twilio, leading the Data Science and ML team. With 12 years of experience in software engineering and data science, he excels in overseeing complex machine learning projects and ensuring successful end-to-end execution and delivery.

Srivyshnav K S is a Senior Machine Learning Engineer at Twilio with over 5 years of experience. His expertise lies in leveraging statistical and machine learning techniques to develop advanced models for detecting patterns and anomalies. He is adept at building projects end-to-end.

Jagmohan Dhiman is a Senior Data Scientist with 7 years of experience in machine learning solutions. He has extensive expertise in building end-to-end solutions, encompassing data analysis, ML-based application development, architecture design, and MLOps pipelines for managing the model lifecycle.

Soumya Kundu is a Senior Data Engineer with almost 10 years of experience in Cloud and Big Data technologies. He specialises in AI/ML based large scale Data Processing systems and is an avid IoT enthusiast in his spare time.
