The rapid development of deep learning algorithms and generative models has enabled the automated production of increasingly striking AI-generated artistic content. Most of this AI-generated art, however, is created by algorithms and computational models, rather than by physical robots.

Researchers at Universidad Complutense de Madrid (UCM) and Universidad Carlos III de Madrid (UC3M) recently developed a deep learning-based model that allows a humanoid robot to sketch pictures, similarly to how a human artist would. Their paper, published in Cognitive Systems Research, offers a remarkable demonstration of how robots could actively engage in creative processes.

“Our idea was to propose a robot application that could attract the scientific community and the general public,” Raúl Fernandez-Fernandez, co-author of the paper, told Tech Xplore. “We thought about a task that could be surprising to see a robot performing, and that was how the concept of doing art with a humanoid robot came to us.”

Most existing robotic systems designed to produce sketches or paintings essentially work like printers, reproducing images that were previously generated by an algorithm. Fernandez-Fernandez and his colleagues, on the other hand, wanted to create a robot that leverages deep reinforcement learning techniques to create sketches stroke by stroke, similar to how humans would draw them.

“The goal of our study was not to make a painting robot application that could generate complex paintings, but rather to create a robust physical robot painter,” Fernandez-Fernandez said. “We wanted to improve on the robot control stage of painting robot applications.”

In the past few years, Fernandez-Fernandez and his colleagues have been trying to devise advanced and efficient algorithms to plan the actions of creative robots. Their new paper builds on these recent research efforts, combining approaches that they found to be particularly promising.

“This work was inspired by two key previous works,” Fernandez-Fernandez said. “The first of these is one of our previous research efforts, where we explored the potential of the Quick Draw! Dataset for training robotic painters. The second work introduced Deep-Q-Learning as a way to perform complex trajectories that could include complex features like emotions.”

The new robotic sketching system presented by the researchers is based on a Deep-Q-Learning framework first introduced in a previous paper by Zhou and colleagues posted to arXiv. Fernandez-Fernandez and his colleagues improved this framework to carefully plan the actions of robots, allowing them to complete complex manual tasks in a wide range of environments.

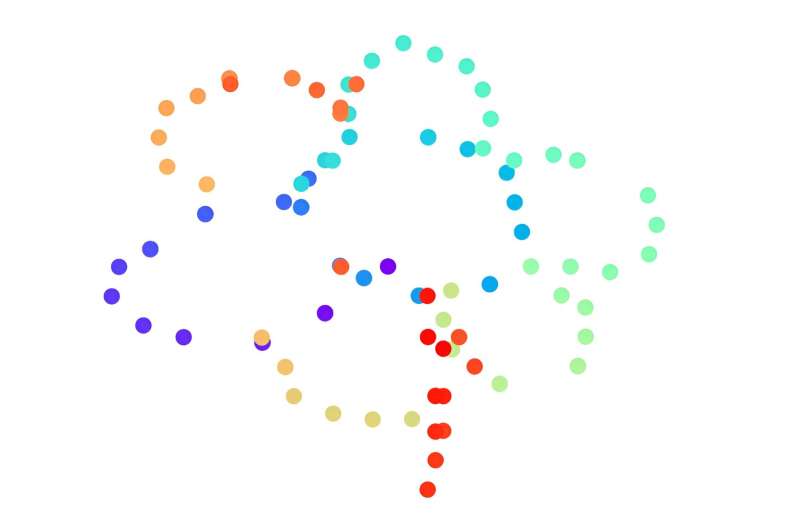

“The neural network is divided in three parts that can be seen as three different networks interconnected,” Fernandez-Fernandez explained. “The global network extracts the high-level features of the entire canvas. The local network extracts low level features around the painting position. The output network takes as input the features extracted by the convolutional layers (from the global and local networks) to generate the next painting positions.”
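The data flow in that description can be illustrated with a toy example. The sketch below is not the researchers' network: it replaces the learned convolutional layers with hand-written stand-ins (block averaging for the global view, a zero-padded crop for the local view) purely to show which part of the canvas each branch sees and how the two feature sets are joined before the output stage. All function names, the patch radius and the block size are assumptions made for illustration.

```python
def global_features(canvas, block=2):
    """Stand-in for the global branch: average-pool the whole canvas into coarse blocks."""
    rows, cols = len(canvas), len(canvas[0])
    feats = []
    for r in range(0, rows, block):
        for c in range(0, cols, block):
            cells = [canvas[i][j]
                     for i in range(r, min(r + block, rows))
                     for j in range(c, min(c + block, cols))]
            feats.append(sum(cells) / len(cells))
    return feats


def local_patch(canvas, pos, radius=1):
    """Stand-in for the local branch: flatten the cells around the pen position.

    Cells that fall outside the canvas are treated as blank (0.0).
    """
    rows, cols = len(canvas), len(canvas[0])
    r0, c0 = pos
    patch = []
    for i in range(r0 - radius, r0 + radius + 1):
        for j in range(c0 - radius, c0 + radius + 1):
            inside = 0 <= i < rows and 0 <= j < cols
            patch.append(canvas[i][j] if inside else 0.0)
    return patch


def output_input(canvas, pos):
    """Concatenate both feature sets, as the output stage would receive them."""
    return global_features(canvas) + local_patch(canvas, pos)


# A 4x4 canvas with a short stroke; the pen currently sits at row 1, column 1.
canvas = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 1.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
features = output_input(canvas, pos=(1, 1))
print(len(features))
```

In a real system, both stand-ins would be trained convolutional layers and the concatenated vector would feed a layer that scores candidate next pen positions.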

Fernandez-Fernandez and his collaborators also informed their model via two additional channels that provide distance-related and painting tool information (i.e., the position of the tool with respect to the canvas). Together, all these features guided the training of their network, enhancing its sketching skills. To further improve their system’s human-like painting skills, the researchers also introduced a pre-training step based on a so-called random stroke generator.

“We use double Q-learning to avoid the overestimation problem and a custom reward function for its training,” Fernandez-Fernandez said. “In addition to this, we introduced an additional sketch classification network to extract the high-level features of the sketch and use its output as the reward in the last steps of a painting epoch. This network provides some flexibility to the painting since the reward generated by it does not depend on the reference canvas but the category.”
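For readers unfamiliar with the overestimation problem mentioned in the quote, the sketch below shows the training target in its common Double DQN form: one network picks the best next action and a second network supplies that action's value, instead of taking a single maximum from one network. The article does not give the team's exact formulation or reward function, so the function name, the discount factor and the numbers here are illustrative assumptions.

```python
def double_q_target(reward, next_q_online, next_q_target, gamma=0.99, done=False):
    """Double DQN style target for one transition.

    next_q_online: action values for the next state from the online network
    next_q_target: action values for the next state from the target network
    """
    if done:
        # No future value once the episode has ended.
        return reward
    # The online network selects the action...
    best_action = max(range(len(next_q_online)), key=lambda a: next_q_online[a])
    # ...and the target network evaluates it.
    return reward + gamma * next_q_target[best_action]


# Hypothetical values for three candidate pen moves.
online = [0.2, 0.9, 0.5]
target = [0.3, 0.4, 0.8]

double_target = double_q_target(1.0, online, target, gamma=0.5)
# Standard Q-learning target for comparison: maximum over the target network alone.
single_target = 1.0 + 0.5 * max(target)
print(double_target, single_target)
```

With these made-up numbers the double estimate is lower than the single-network one, because the action is chosen by one network and scored by the other, which dampens the upward bias of always taking a maximum.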

As they were trying to automate sketching using a physical robot, the researchers also had to devise a strategy to translate the distances and positions observed in AI-generated images into a canvas in the real world. To achieve this, they generated a discretized virtual space within the physical canvas, in which the robot could move and directly translate the painting positions provided by the model.
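One simple way to picture such a discretized space is a grid laid over the canvas, with each cell mapped to a point in physical coordinates. The sketch below assumes a rectangular canvas measured in millimetres and targets the centre of each cell; the grid size, canvas dimensions, origin and function name are hypothetical and are not taken from the paper.

```python
def grid_to_canvas(cell, grid_size, canvas_size_mm, origin_mm=(0.0, 0.0)):
    """Map a (row, col) grid cell to the (x, y) centre of that cell on the canvas.

    grid_size: (rows, cols) of the virtual grid
    canvas_size_mm: (width, height) of the physical canvas in millimetres
    origin_mm: position of the canvas's top-left corner in the robot's frame
    """
    row, col = cell
    rows, cols = grid_size
    if not (0 <= row < rows and 0 <= col < cols):
        raise ValueError("cell lies outside the virtual grid")
    width, height = canvas_size_mm
    x = origin_mm[0] + (col + 0.5) * width / cols
    y = origin_mm[1] + (row + 0.5) * height / rows
    return (x, y)


# A 10x10 grid over a 200 mm x 100 mm canvas.
first = grid_to_canvas((0, 0), (10, 10), (200.0, 100.0))
last = grid_to_canvas((9, 9), (10, 10), (200.0, 100.0))
print(first, last)
```

A robot controller would then move the tool to the returned point; rejecting out-of-grid cells keeps the arm from being commanded beyond the canvas.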

“I think the most relevant achievement of this work is the introduction of advanced control algorithms within a real robot painting application,” Fernandez-Fernandez said. “With this work, we have demonstrated that the control step of painting robot applications can be improved with the introduction of these algorithms. We believe that DQN frameworks have the capability and level of abstraction to achieve original and high-level applications out of the scope of classical problems.”

The recent work by this team of researchers is a fascinating example of how robots could create art in the real world, via actions that more closely resemble those of human artists. Fernandez-Fernandez and his colleagues hope that the deep learning-based model they developed will inspire further studies, potentially contributing to the introduction of control policies that allow robots to tackle increasingly complex tasks.

“In this line of work, we have developed a framework using Deep Q-Learning to extract the emotions of a human demonstrator and transfer it to a robot,” Fernandez-Fernandez added. “In this recent paper, we take advantage of the feature extraction capabilities of DQN networks to treat emotions as a feature that can be optimized and defined within the reward of a standard robot task and results are quite impressive.

“In future works, we aim to introduce similar ideas that enhance robot control applications beyond classical robotic control problems.”

More information:

Raul Fernandez-Fernandez et al, Deep Robot Sketching: An application of Deep Q-Learning Networks for human-like sketching, Cognitive Systems Research (2023). DOI: 10.1016/j.cogsys.2023.05.004

© 2024 Science X Network

Citation: Human-like real-time sketching by a humanoid robot (2024, February 24), retrieved 24 February 2024 from https://techxplore.com/information/2024-02-human-real-humanoid-robot.html
