A Tesla car demonstrates self-driving mode. Source: Tesla

In Walter Isaacson's biography of Elon Musk, we learned how Tesla planned to use artificial intelligence in its vehicles to deliver its long-awaited full self-driving mode. Year after year, the company has promised its owners Full Self Driving (FSD), but FSD remains in beta. That doesn't stop Tesla from charging $199 per month for it, though.

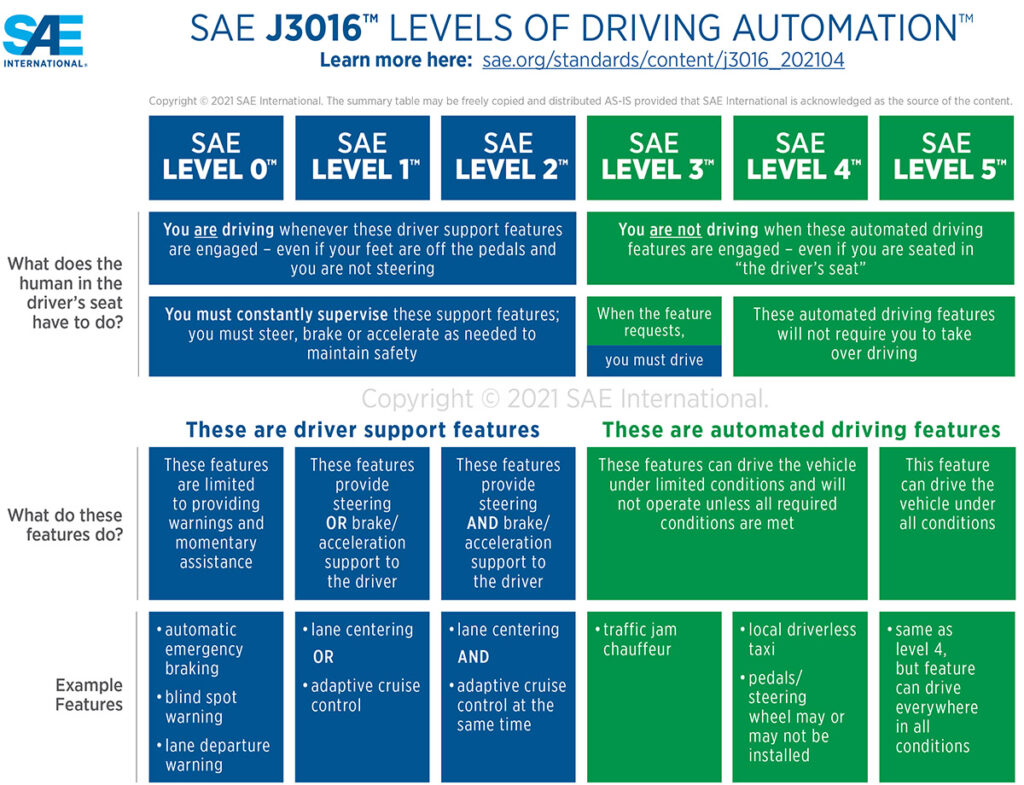

SAE International (formerly the Society of Automotive Engineers) defines Level 4 (L4) autonomy as a hands-off-the-steering-wheel mode in which a vehicle drives itself from Point A to Point B.

The only thing more magical, Level 5 (L5), has no steering wheel. No gas or brake pedal, either. L5 has been achieved by several companies but only for shuttles. One example was Olli, a 3D-printed electric vehicle (EV) that was a highlight of IMTS 2016, the largest manufacturing show in the U.S.

However, Olli's manufacturer, Local Motors, ran out of money and closed its doors in January 2022, a month after one of its vehicles that was being tested in Toronto ran into a tree.

SAE J3016 levels of autonomous driving. Source: SAE International

Edge cases uncover challenges

The traditional approach to L4 self-driving cars has been to program for every conceivable traffic situation with an "If this, then that" nested algorithm. For example, if a car turns in front of the vehicle, then drive around it, provided the speeds allow it. If not, stop.

Programmers have created libraries of thousands upon thousands of situations and responses, only to have "edge cases," unfortunate and sometimes disastrous events that no rule anticipated, keep maddeningly coming up.
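To make the situation/response idea concrete, here is a minimal sketch in Python. It is an illustration only, not any manufacturer's code: the `Situation` fields, the rule names, and the `"no_rule"` result are all hypothetical. It shows how a rule library answers the situations it knows about and has nothing to say about an edge case.

```python
from dataclasses import dataclass


@dataclass
class Situation:
    kind: str               # hypothetical label, e.g. "car_turns_in_front"
    can_pass_safely: bool   # hypothetical flag: do the speeds allow driving around?


# Hypothetical rule library: one "if this, then that" entry per known situation.
RULES = {
    "car_turns_in_front": lambda s: "drive_around" if s.can_pass_safely else "stop",
    "red_light": lambda s: "stop",
}


def respond(situation: Situation) -> str:
    """Look up the response for a situation; unknown situations are edge cases."""
    rule = RULES.get(situation.kind)
    if rule is None:
        return "no_rule"  # edge case: the library has no answer
    return rule(situation)


print(respond(Situation("car_turns_in_front", can_pass_safely=True)))
print(respond(Situation("mattress_on_highway", can_pass_safely=False)))
```

However many entries are added to `RULES`, any situation whose `kind` is missing falls through to the last branch, which is the weakness the edge cases expose.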

Teslas and other self-driving vehicles, notably robotaxis, have come under increasing scrutiny. The National Highway Traffic Safety Administration (NHTSA) investigated Tesla for its role in 16 crashes with safety vehicles when the company's vehicles were in Autopilot or Full Self Driving mode.

In March 2018, an Uber robotaxi with an inattentive human behind the wheel ran into a woman walking her bike across a street in Tempe, Ariz., and killed her. Recently, a Cruise robotaxi ran into a pedestrian and dragged her 20 feet.

Self-driving car companies have tried to downplay such incidents, suggesting that they are few and far between and that autonomous vehicles (AVs) are a safe alternative to human drivers, who kill 40,000 people annually in the U.S. alone. Those attempts have been unsuccessful. It's not fair, say the technologists. It's zero tolerance, says the public.

Musk claims to have a better way

Elon Musk is hardly one to accept a conventional approach, such as the situation/response library. A creator of the "move fast and break things" movement, now the mantra of every wannabe disruptor startup, he said he had a better way.

The better way was learning how the best drivers drove and then using AI to apply their behavior in Tesla's Full Self Driving mode. For this, Tesla had a clear advantage over its competitors. Since the first Tesla rolled into use, the vehicles have been sending videos to the company.

In "The Radical Scope of Tesla's Data Hoard," IEEE Spectrum reported on the data Tesla vehicles have been collecting. While many modern vehicles are sold with black boxes that record pre-crash data, Tesla vehicles go the extra mile, collecting and keeping extended route data.

This came to light when Tesla used the extended data to exonerate itself in a civil lawsuit. Tesla was also suspected of storing millions of hours of video, amounting to petabytes of data. This was revealed in Musk's biography, which said he realized that the video could serve as a learning library for Tesla's AI, specifically its neural networks.

From this massive data lake, Tesla workers identified the best drivers. From there, it was simple: Train the AI to drive like the good drivers. Like a good human driver, Teslas would then be able to handle any situation, not just those in the situation/response libraries.
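The general idea of learning to drive from examples of good driving is often called imitation learning. The toy sketch below is not Tesla's method, which the biography describes as neural networks trained on video. It swaps in a much simpler nearest-neighbor lookup over a handful of made-up demonstrations. The state features (gap to the car ahead, closing speed) and the action names are assumptions chosen only for illustration.

```python
import math

# Hypothetical demonstrations from "good drivers":
# state = (gap to car ahead in meters, closing speed in m/s) -> action taken
DEMONSTRATIONS = [
    ((50.0, 0.0), "cruise"),
    ((30.0, 5.0), "ease_off"),
    ((10.0, 8.0), "brake"),
]


def imitate(state, demos=DEMONSTRATIONS):
    """Copy the action the demonstrators took in the most similar recorded state."""
    nearest_state, action = min(demos, key=lambda d: math.dist(d[0], state))
    return action


# A state that never appears in the demonstrations still gets an answer.
print(imitate((12.0, 7.0)))
```

Unlike a rule library, this kind of policy always returns something for an unseen state. Whether that answer is a good one depends entirely on how well the demonstrations cover the situation.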

Is Tesla's self-driving mission possible?

Whether it is possible for AI to replace a human behind the wheel remains to be seen. Tesla still charges thousands a year for Full Self Driving but has failed to deliver the technology.

Tesla has been passed by Mercedes, which attained Level 3 autonomy with its fully electric EQS vehicles last year.

Meanwhile, opponents of AVs and AI grow stronger and louder. In San Francisco, Cruise was practically drummed out of town after an October incident in which it allegedly failed to show the video from one of its vehicles dragging a pedestrian who was pinned under the car for 20 feet.

Even some stalwart technologists have crossed to the side of safety. Musk himself, despite his use of AI at Tesla, has condemned AI publicly and forcefully, saying it will lead to the destruction of civilization. That is what you might expect from a sci-fi fan, as Musk admits to being, but from a respected engineering publication?

In IEEE Spectrum, former fighter pilot turned AI watchdog Mary L. "Missy" Cummings warned of the dangers of using AI in self-driving vehicles. In "What Self-Driving Cars Tell Us About AI Risks," she recommended guidelines for AI development, using examples of AVs.

Whether situation/response programming constitutes AI in the way the term is commonly used can be debated, so let us give Cummings a little room.

Self-driving vehicles have a hard stop

Whatever your interpretation of AI, autonomous vehicles serve as examples of machines under the influence of software that can behave badly, badly enough to cause damage or hurt people. The examples range from understandable to inexcusable.

An example of inexcusable is an autonomous vehicle running into anything ahead of it. That should never happen. It does not matter whether the system misidentifies a threat or obstruction, or fails to identify it altogether and therefore cannot predict its behavior. If a mass is detected ahead and the vehicle's present speed would cause a collision, it should slam on the brakes.
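The hard-stop rule can be expressed as a simple stopping-distance check that ignores what the object is. The sketch below is an illustration under stated assumptions, not any vehicle's actual logic: the obstacle is treated as stationary, and the default deceleration (7 m/s²) and system reaction time (0.2 s) are assumed values.

```python
def must_brake(distance_m: float, speed_mps: float,
               max_decel_mps2: float = 7.0, reaction_s: float = 0.2) -> bool:
    """Return True when the gap to a detected mass is within stopping distance.

    Stopping distance = distance covered during the reaction time
                        + braking distance v^2 / (2a).
    The classification of the object plays no part in the decision.
    """
    stopping_m = speed_mps * reaction_s + speed_mps ** 2 / (2 * max_decel_mps2)
    return distance_m <= stopping_m


# At 20 m/s (72 km/h) the assumed stopping distance is about 32.6 m.
print(must_brake(distance_m=30.0, speed_mps=20.0))   # inside stopping distance
print(must_brake(distance_m=100.0, speed_mps=20.0))  # plenty of room
```

A check this blunt is also easy to trigger on false detections, which is one way to picture the tension with phantom braking.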

No brakes were slammed on when one AV ran into the back of an articulated bus because the system had identified it as a "normal" (that is, shorter) bus.

Phantom braking, however, is completely understandable, and it is a perfect example of how AI not only fails to protect us but also actually throws the occupants of AVs into harm's way, argued Cummings.

"One failure mode not previously anticipated is phantom braking," she wrote. "For no obvious reason, a self-driving car will suddenly brake hard, perhaps causing a rear-end collision with the vehicle just behind it and other vehicles further back. Phantom braking has been seen in the self-driving cars of many different manufacturers and in ADAS [advanced driver-assistance systems]-equipped cars as well."

To back up her claim, Cummings cited an NHTSA report that said rear-end collisions happen exactly twice as often with autonomous vehicles (56%) as with all vehicles (28%).

"The cause of such events is still a mystery," she said. "Experts initially attributed it to human drivers following the self-driving car too closely (often accompanying their assessments by citing the misleading 94% statistic about driver error)."

"However, an increasing number of these crashes have been reported to NHTSA," noted Cummings. "In May 2022, for instance, the NHTSA sent a letter to Tesla noting that the agency had received 758 complaints about phantom braking in Model 3 and Y cars. This past May, the German publication Handelsblatt reported on 1,500 complaints of braking issues with Tesla vehicles, as well as 2,400 complaints of sudden acceleration."