Simple and effective interaction between humans and quadrupedal robots paves the way toward creating intelligent and capable helper robots, forging a future where technology enhances our lives in ways beyond our imagination. Key to such human-robot interaction systems is enabling quadrupedal robots to respond to natural language instructions. Recent developments in large language models (LLMs) have demonstrated the potential to perform high-level planning. Yet it remains a challenge for LLMs to comprehend low-level commands, such as joint angle targets or motor torques, especially for inherently unstable legged robots, which require high-frequency control signals. Consequently, most existing work presumes that high-level APIs are provided for LLMs to dictate robot behavior, which inherently limits the system’s expressive capabilities.

In “SayTap: Language to Quadrupedal Locomotion”, we propose an approach that uses foot contact patterns (which refer to the sequence and manner in which a four-legged agent places its feet on the ground while moving) as an interface to bridge human commands in natural language and a locomotion controller that outputs low-level commands. This results in an interactive quadrupedal robot system that allows users to flexibly craft diverse locomotion behaviors (e.g., a user can ask the robot to walk, run, jump or make other movements using simple language). We contribute an LLM prompt design, a reward function, and a method to expose the SayTap controller to the feasible distribution of contact patterns. We demonstrate that SayTap is a controller capable of achieving diverse locomotion patterns that can be transferred to real robot hardware.

SayTap method

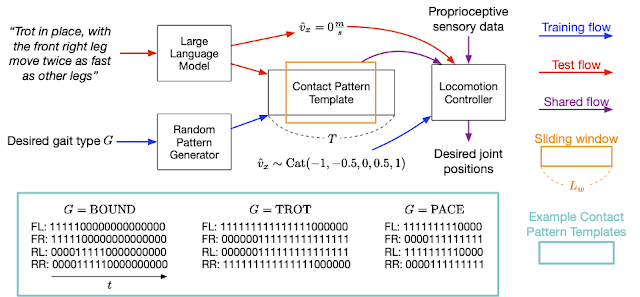

The SayTap approach uses a contact pattern template, which is a 4 × T matrix of 0s and 1s, with 0s representing an agent’s feet in the air and 1s representing feet on the ground. From top to bottom, each row in the matrix gives the foot contact pattern of the front left (FL), front right (FR), rear left (RL) and rear right (RR) foot. SayTap’s control frequency is 50 Hz, so each 0 or 1 lasts 0.02 seconds. In this work, a desired foot contact pattern is defined by a cyclic sliding window of size Lw and of shape 4 × Lw. The sliding window extracts from the contact pattern template the four feet’s ground contact flags, which indicate whether each foot is on the ground or in the air between t + 1 and t + Lw. The figure below provides an overview of the SayTap method.
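The cyclic sliding window described above can be sketched in a few lines of NumPy. This is a minimal illustration and not the authors' code: the function name `desired_contact_window`, the toy 4 × 6 template, and the choice of Lw = 3 are assumptions made for the example; only the 50 Hz control frequency and the t + 1 to t + Lw window come from the text.

```python
import numpy as np

CONTROL_HZ = 50  # control frequency stated in the text; each entry lasts 1 / 50 = 0.02 s


def desired_contact_window(template, t, window_size):
    """Return the 4 x window_size slice covering steps t + 1 .. t + window_size (cyclic)."""
    template = np.asarray(template)
    num_steps = template.shape[1]  # T, the template length
    columns = (t + 1 + np.arange(window_size)) % num_steps  # wrap around the cycle
    return template[:, columns]


# Toy 4 x 6 trot-like template (rows: FL, FR, RL, RR), made up for illustration.
toy_template = np.array([
    [1, 1, 1, 0, 0, 0],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [1, 1, 1, 0, 0, 0],
])

# At t = 4 with Lw = 3 the window covers template columns 5, 0 and 1.
window = desired_contact_window(toy_template, t=4, window_size=3)

# One full cycle of the toy template lasts T / 50 seconds.
cycle_seconds = toy_template.shape[1] / CONTROL_HZ
```

Because the column indices are taken modulo T, the window keeps producing valid flags when it runs past the end of the template, which is what makes the pattern cyclic.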

SayTap introduces these desired foot contact patterns as a new interface between natural language user commands and the locomotion controller. The locomotion controller is used to complete the main task (e.g., following specified velocities) and to place the robot’s feet on the ground at the specified times, such that the realized foot contact patterns are as close to the desired contact patterns as possible. To achieve this, the locomotion controller takes the desired foot contact pattern at each time step as its input, in addition to the robot’s proprioceptive sensory data (e.g., joint positions and velocities) and task-related inputs (e.g., user-specified velocity commands). We use deep reinforcement learning to train the locomotion controller and represent it as a deep neural network. During controller training, a random generator samples the desired foot contact patterns, and the policy is then optimized to output low-level robot actions that achieve the desired foot contact pattern. At test time, an LLM translates user commands into foot contact patterns.
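To make the controller's inputs concrete, the sketch below concatenates the three input groups named above into one flat observation vector. The layout, the function name `build_observation`, the 12-joint robot, and the window size of 5 are all assumptions for illustration; the text does not specify how the actual policy input is arranged.

```python
import numpy as np


def build_observation(contact_window, joint_positions, joint_velocities, velocity_command):
    """Flatten and concatenate the controller inputs (hypothetical layout)."""
    return np.concatenate([
        np.asarray(contact_window, dtype=np.float32).ravel(),   # desired 4 x Lw contact flags
        np.asarray(joint_positions, dtype=np.float32),           # proprioception
        np.asarray(joint_velocities, dtype=np.float32),          # proprioception
        np.atleast_1d(np.asarray(velocity_command, dtype=np.float32)),  # task-related input
    ])


# Example with made-up sizes: Lw = 5 and 12 joints (3 per leg is an assumption).
example_window = np.ones((4, 5), dtype=np.int8)
observation = build_observation(
    example_window,
    joint_positions=np.zeros(12),
    joint_velocities=np.zeros(12),
    velocity_command=0.5,
)
```

With these sizes the observation has 4 × 5 + 12 + 12 + 1 = 45 entries.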

SayTap approach overview.

SayTap uses foot contact patterns (e.g., 0 and 1 sequences for each foot in the inset, where 0s are foot in the air and 1s are foot on the ground) as an interface that bridges natural language user commands and low-level control commands. With a reinforcement learning-based locomotion controller that is trained to realize the desired contact patterns, SayTap allows a quadrupedal robot to take both simple and direct instructions (e.g., “Trot forward slowly.”) as well as vague user commands (e.g., “Good news, we are going to a picnic this weekend!”) and react accordingly.

We demonstrate that the LLM is capable of accurately mapping user commands into foot contact pattern templates in specified formats when given properly designed prompts, even in cases when the commands are unstructured or vague. In training, we use a random pattern generator to produce contact pattern templates with various pattern lengths T and foot-ground contact ratios within a cycle, based on a given gait type G, so that the locomotion controller learns on a wide distribution of movements, leading to better generalization. See the paper for more details.
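A random pattern generator of this kind might look roughly like the following. This is a sketch under stated assumptions, not the generator from the paper: the phase offsets in `GAIT_PHASE_OFFSETS`, the length range of 20 to 30 steps, and the contact ratio range of 0.3 to 0.7 are hypothetical values chosen only to show how T, the contact ratio, and the gait type G could interact.

```python
import numpy as np

# Hypothetical per-foot phase offsets (fraction of a cycle) in FL, FR, RL, RR order.
GAIT_PHASE_OFFSETS = {
    "trot": (0.0, 0.5, 0.5, 0.0),   # diagonal legs move together
    "pace": (0.0, 0.5, 0.0, 0.5),   # same-side legs move together
    "bound": (0.0, 0.0, 0.5, 0.5),  # front pair and rear pair move together
}


def sample_template(gait, rng, min_len=20, max_len=30, min_ratio=0.3, max_ratio=0.7):
    """Sample a 4 x T contact pattern template for the given gait type."""
    num_steps = int(rng.integers(min_len, max_len + 1))      # pattern length T
    contact_ratio = rng.uniform(min_ratio, max_ratio)         # fraction of the cycle on the ground
    contact_steps = max(1, int(round(contact_ratio * num_steps)))

    base = np.zeros(num_steps, dtype=np.int8)
    base[:contact_steps] = 1  # one contact phase followed by one swing phase

    # Shift the base pattern cyclically by each foot's phase offset.
    rows = [np.roll(base, int(round(offset * num_steps)))
            for offset in GAIT_PHASE_OFFSETS[gait]]
    return np.stack(rows)


rng = np.random.default_rng(0)
trot_template = sample_template("trot", rng)
```

Sampling T and the contact ratio independently for every episode is one simple way to expose a controller to many variants of the same gait.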

Results

With a simple prompt that contains only three in-context examples of commonly seen foot contact patterns, an LLM can translate various human commands accurately into contact patterns and even generalize to commands that do not explicitly specify how the robot should react.

SayTap prompts are concise and consist of four components: (1) a general instruction that describes the tasks the LLM should accomplish; (2) a gait definition that reminds the LLM of basic knowledge about quadrupedal gaits and how they can be related to emotions; (3) an output format definition; and (4) examples that give the LLM chances to learn in-context. We also specify five velocities that allow a robot to move forward or backward, fast or slow, or remain still.

General instruction block

You are a dog foot contact pattern expert.

Your job is to give a velocity and a foot contact pattern based on the input.

You will always give the output in the correct format no matter what the input is.

Gait definition block

The following are description about gaits:

1. Trotting is a gait where two diagonally opposite legs strike the ground at the same time.

2. Pacing is a gait where the two legs on the left/right side of the body strike the ground at the same time.

3. Bounding is a gait where the two front/rear legs strike the ground at the same time. It has a longer suspension phase where all feet are off the ground, for example, for at least 25% of the cycle length. This gait also gives a happy feeling.

Output format definition block

The following are rules for describing the velocity and foot contact patterns:

1. You should first output the velocity, then the foot contact pattern.

2. There are five velocities to choose from: [-1.0, -0.5, 0.0, 0.5, 1.0].

3. A pattern has 4 lines, each of which represents the foot contact pattern of a leg.

4. Each line has a label. “FL” is front left leg, “FR” is front right leg, “RL” is rear left leg, and “RR” is rear right leg.

5. In each line, “0” represents foot in the air, “1” represents foot on the ground.

Example block

Input: Trot slowly

Output: 0.5

FL: 11111111111111111000000000

FR: 00000000011111111111111111

RL: 00000000011111111111111111

RR: 11111111111111111000000000

Input: Bound in place

Output: 0.0

FL: 11111111111100000000000000

FR: 11111111111100000000000000

RL: 00000011111111111100000000

RR: 00000011111111111100000000

Input: Pace backward fast

Output: -1.0

FL: 11111111100001111111110000

FR: 00001111111110000111111111

RL: 11111111100001111111110000

RR: 00001111111110000111111111

Input:

SayTap prompt to the LLM. Texts in blue are used for illustration and are not input to the LLM.
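Since the prompt fixes the output format, a reply can be parsed into a velocity and a 4 × T matrix with a small amount of code. The parser below is a hedged sketch: `parse_llm_output` is a hypothetical helper, and whether the model's reply repeats the “Output:” prefix is an assumption, so the code accepts both forms. The sample reply is the “Trot slowly” example from the prompt.

```python
import numpy as np

ALLOWED_VELOCITIES = (-1.0, -0.5, 0.0, 0.5, 1.0)  # the five velocities listed in the prompt
LEG_LABELS = ("FL", "FR", "RL", "RR")


def parse_llm_output(text):
    """Parse a reply into (velocity, 4 x T int array); raise ValueError if malformed."""
    lines = [line.strip() for line in text.splitlines() if line.strip()]
    if len(lines) != 5:
        raise ValueError("expected one velocity line and four pattern lines")

    first = lines[0]
    if first.startswith("Output:"):  # assumption: the prefix may or may not be echoed
        first = first[len("Output:"):]
    velocity = float(first)
    if velocity not in ALLOWED_VELOCITIES:
        raise ValueError(f"unsupported velocity: {velocity}")

    rows = []
    for label, line in zip(LEG_LABELS, lines[1:]):
        prefix, _, bits = line.partition(":")
        bits = bits.strip()
        if prefix.strip() != label or not bits or set(bits) - {"0", "1"}:
            raise ValueError(f"malformed line for {label}: {line!r}")
        rows.append([int(bit) for bit in bits])

    if len({len(row) for row in rows}) != 1:
        raise ValueError("all four pattern lines must have the same length")
    return velocity, np.array(rows, dtype=np.int8)


reply = """Output: 0.5
FL: 11111111111111111000000000
FR: 00000000011111111111111111
RL: 00000000011111111111111111
RR: 11111111111111111000000000"""

velocity, template = parse_llm_output(reply)
```

Rejecting malformed replies early keeps an invalid pattern from ever reaching the locomotion controller.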

Following simple and direct commands

We demonstrate in the videos below that the SayTap system can successfully perform tasks where the commands are direct and clear. Although some commands are not covered by the three in-context examples, we are able to guide the LLM to express its internal knowledge from the pre-training phase via the “Gait definition block” (see the second block in our prompt above).
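The gait definitions in the prompt can also serve as a rough sanity check on a returned pattern. The sketch below is an illustration only: it approximates “strike the ground at the same time” by requiring the two rows to be identical, which is a simplifying assumption, and `describe_gait` is a hypothetical helper. The input is the “Bound in place” example from the prompt.

```python
import numpy as np


def describe_gait(template):
    """Return (gait_name, suspension_fraction) for a 4 x T template (rows: FL, FR, RL, RR)."""
    template = np.asarray(template)
    fl, fr, rl, rr = template
    same = np.array_equal

    # Fraction of the cycle in which all four feet are in the air.
    suspension = float(np.mean(template.sum(axis=0) == 0))

    if same(fl, rr) and same(fr, rl) and not same(fl, fr):
        gait = "trot"    # diagonal pairs share a pattern
    elif same(fl, rl) and same(fr, rr) and not same(fl, fr):
        gait = "pace"    # left pair and right pair share a pattern
    elif same(fl, fr) and same(rl, rr) and not same(fl, rl):
        gait = "bound"   # front pair and rear pair share a pattern
    else:
        gait = "unknown"
    return gait, suspension


bound_rows = [
    "11111111111100000000000000",  # FL
    "11111111111100000000000000",  # FR
    "00000011111111111100000000",  # RL
    "00000011111111111100000000",  # RR
]
bound_template = np.array([[int(c) for c in row] for row in bound_rows], dtype=np.int8)
gait, suspension = describe_gait(bound_template)
```

For this example, all feet are in the air during the last 8 of 26 steps, so the suspension fraction is consistent with the “at least 25% of the cycle length” wording in the gait definition.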

Following unstructured or vague commands

But what is more interesting is SayTap’s ability to process unstructured and vague instructions. With only a small hint in the prompt to connect certain gaits with general impressions of emotions, the robot bounds up and down when hearing exciting messages, like “We are going to a picnic!” Furthermore, it also presents the scenes accurately (e.g., moving quickly with its feet barely touching the ground when told the ground is very hot).

Conclusion and future work

We present SayTap, an interactive system for quadrupedal robots that allows users to flexibly craft diverse locomotion behaviors. SayTap introduces desired foot contact patterns as a new interface between natural language and the low-level controller. This new interface is straightforward and flexible; moreover, it allows a robot to follow both direct instructions and commands that do not explicitly state how the robot should react.

One interesting direction for future work is to test whether commands that imply a specific feeling will allow the LLM to output a desired gait. In the gait definition block shown in the results section above, we provide a sentence that connects a happy mood with bounding gaits. We believe that providing more information can augment the LLM’s interpretations (e.g., implied feelings). In our evaluation, the connection between a happy feeling and a bounding gait led the robot to act vividly when following vague human commands. Another interesting direction for future work is to introduce multi-modal inputs, such as videos and audio. Foot contact patterns translated from those signals will, in theory, still work with our pipeline and will unlock many more interesting use cases.

Acknowledgements

Yujin Tang, Wenhao Yu, Jie Tan, Heiga Zen, Aleksandra Faust and Tatsuya Harada conducted this research. This work was conceived and performed while the team was in Google Research and will be continued at Google DeepMind. The authors would like to thank Tingnan Zhang, Linda Luu, Kuang-Huei Lee, Vincent Vanhoucke and Douglas Eck for their valuable discussions and technical support in the experiments.