Evaluating models in federated networks is challenging due to factors such as client subsampling, data heterogeneity, and privacy. These factors introduce noise that can affect hyperparameter tuning algorithms and lead to suboptimal model selection.

Hyperparameter tuning is critical to the success of cross-device federated learning applications. Unfortunately, federated networks face issues of scale, heterogeneity, and privacy, which introduce noise in the tuning process and make it difficult to faithfully evaluate the performance of various hyperparameters. Our work (MLSys’23) explores key sources of noise and surprisingly shows that even small amounts of noise can have a significant impact on tuning methods, reducing the performance of state-of-the-art approaches to that of naive baselines. To address noisy evaluation in such scenarios, we propose a simple and effective approach that leverages public proxy data to boost the evaluation signal. Our work establishes fundamental challenges, baselines, and best practices for future work in federated hyperparameter tuning.

Federated Learning: An Overview

Cross-device federated learning (FL) is a machine learning setting that considers training a model over a large heterogeneous network of devices such as mobile phones or wearables. Three key factors differentiate FL from traditional centralized learning and distributed learning:

Scale. Cross-device refers to FL settings with many clients with potentially limited local resources, e.g., training a language model across hundreds to millions of mobile phones. These devices have various resource constraints, such as limited upload speed, number of local examples, or computational capability.

Heterogeneity. Traditional distributed ML assumes each worker/client has a random (identically distributed) sample of the training data. In contrast, in FL, client datasets may be non-identically distributed, with each user’s data being generated by a distinct underlying distribution.

Privacy. FL offers a baseline level of privacy since raw user data remains local on each client. However, FL is still vulnerable to post-hoc attacks where the public output of the FL algorithm (e.g., a model or its hyperparameters) can be reverse-engineered and leak private user information. A common approach to mitigate such vulnerabilities is to use differential privacy, which aims to mask the contribution of each client. However, differential privacy introduces noise in the aggregate evaluation signal, which can make it difficult to effectively select models.

Federated Hyperparameter Tuning

Appropriately selecting hyperparameters (HPs) is critical to training quality models in FL. Hyperparameters are user-specified parameters that dictate the process of model training, such as the learning rate, local batch size, and number of clients sampled at each round. The problem of tuning HPs is fundamental to machine learning (not just FL). Given an HP search space and search budget, HP tuning methods aim to find a configuration in the search space that optimizes some measure of quality within a constrained budget.

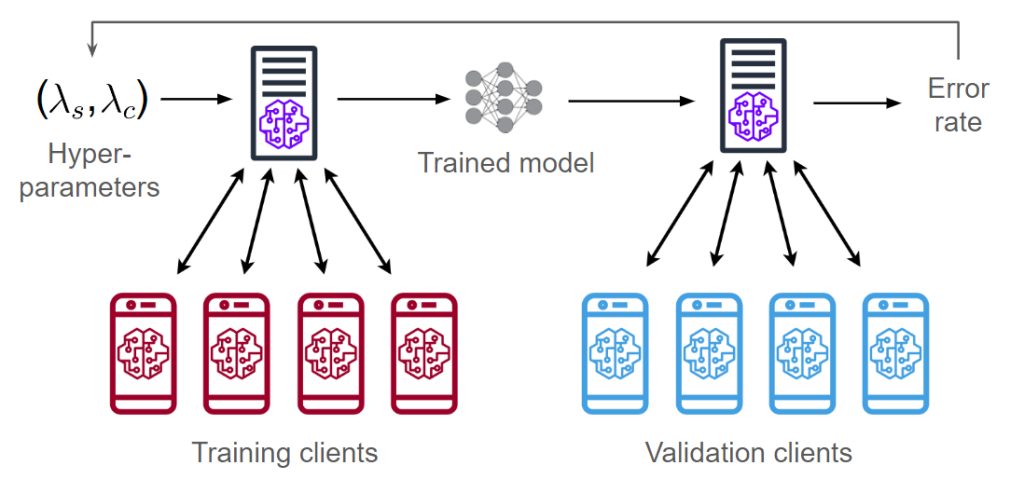

Let’s first look at an end-to-end FL pipeline that considers both the processes of training and hyperparameter tuning. In cross-device FL, we split the clients into two pools for training and validation. Given a hyperparameter configuration \((\lambda_s, \lambda_c)\), we train a model using the training clients (explained in the section “FL Training”). We then evaluate this model on the validation clients, obtaining an error rate/accuracy metric. We can then use the error rate to adjust the hyperparameters and train a new model.

The diagram above shows two vectors of hyperparameters, \(\lambda_s\) and \(\lambda_c\). These correspond to the hyperparameters of two optimizers: one is server-side and the other is client-side. Next, we describe how these hyperparameters are used during FL training.

FL Training

A typical FL algorithm consists of several rounds of training, where each client performs local training followed by aggregation of the client updates. In our work, we experiment with a general framework called FedOPT, which was presented in Adaptive Federated Optimization (Reddi et al. 2021). We outline the per-round procedure of FedOPT below:

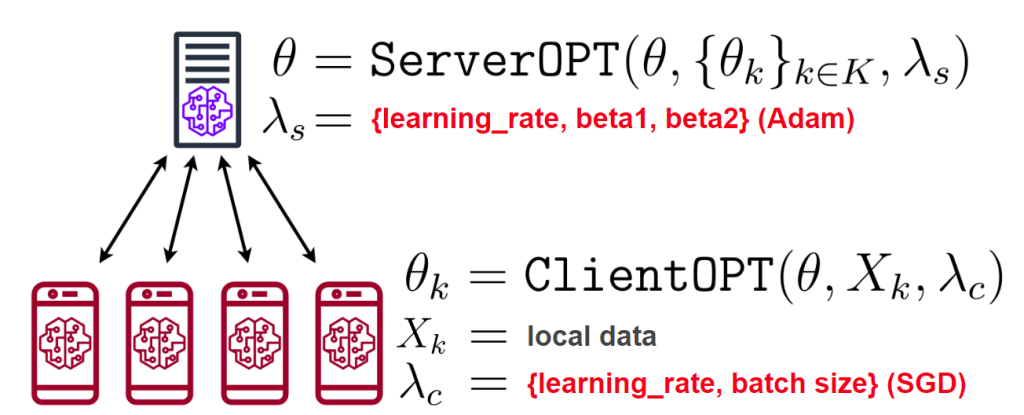

1. The server broadcasts the model \(\theta\) to a sampled subset of \(K\) clients.
2. Each client (in parallel) trains \(\theta\) on their local data \(X_k\) using ClientOPT and obtains an updated model \(\theta_k\).
3. Each client sends \(\theta_k\) back to the server.
4. The server averages all the received models: \(\theta' = \frac{1}{K} \sum_k p_k\theta_k\).
5. To update \(\theta\), the server computes the difference \(\theta - \theta'\) and feeds it as a pseudo-gradient into ServerOPT (rather than computing a gradient w.r.t. some loss function).
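The five steps can be sketched in plain Python as follows. This is a toy illustration under our own assumptions, not the paper’s implementation: a model is a list of floats, each client’s local loss is the mean of \(\tfrac{1}{2}\lVert\theta - x\rVert^2\) over its examples \(x\), all weights \(p_k\) are set to 1 so that \(\theta'\) is the plain mean of the client models, `local_steps` is a hypothetical extra knob, and ServerOPT is textbook Adam with bias correction (the FedAdam update in Reddi et al. 2021 may differ in details such as bias correction).

```python
import math
import random

# Toy setup (assumption): each client's local loss is mean_x 0.5 * ||theta - x||^2,
# so the gradient contributed by one example x is simply (theta - x).

def client_sgd(theta, data, lr, batch_size, local_steps, rng):
    """ClientOPT = minibatch SGD on one client's local data X_k (step 2)."""
    theta = list(theta)
    for _ in range(local_steps):
        batch = rng.sample(data, min(batch_size, len(data)))
        for j in range(len(theta)):
            grad_j = sum(theta[j] - x[j] for x in batch) / len(batch)
            theta[j] -= lr * grad_j
    return theta


class ServerAdam:
    """ServerOPT = Adam applied to the pseudo-gradient (theta - theta') (step 5)."""

    def __init__(self, dim, lr, beta1, beta2, eps=1e-8):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m = [0.0] * dim  # 1st-moment estimate
        self.v = [0.0] * dim  # 2nd-moment estimate
        self.t = 0

    def step(self, theta, pseudo_grad):
        self.t += 1
        new_theta = []
        for j, g in enumerate(pseudo_grad):
            self.m[j] = self.beta1 * self.m[j] + (1 - self.beta1) * g
            self.v[j] = self.beta2 * self.v[j] + (1 - self.beta2) * g * g
            m_hat = self.m[j] / (1 - self.beta1 ** self.t)
            v_hat = self.v[j] / (1 - self.beta2 ** self.t)
            new_theta.append(theta[j] - self.lr * m_hat / (math.sqrt(v_hat) + self.eps))
        return new_theta


def fedadam_round(theta, client_datasets, num_sampled, hps, server_opt, rng):
    # Step 1: broadcast theta to a random subset of K clients.
    sampled = rng.sample(client_datasets, num_sampled)
    # Steps 2-3: each client trains locally and sends back theta_k.
    client_models = [
        client_sgd(theta, data, hps["client_lr"], hps["batch_size"], hps["local_steps"], rng)
        for data in sampled
    ]
    # Step 4: theta' = (1/K) * sum_k p_k * theta_k with p_k = 1 for every client.
    K = len(client_models)
    theta_avg = [sum(tk[j] for tk in client_models) / K for j in range(len(theta))]
    # Step 5: the difference theta - theta' is the pseudo-gradient for ServerOPT.
    pseudo_grad = [a - b for a, b in zip(theta, theta_avg)]
    return server_opt.step(theta, pseudo_grad)
```

Repeatedly calling `fedadam_round` with the same `ServerAdam` instance keeps Adam’s moment estimates across rounds, which is what makes the server optimizer stateful.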

Steps 2 and 5 of FedOPT each require a gradient-based optimization algorithm (called ClientOPT and ServerOPT, respectively) that specifies how to update \(\theta\) given some update vector. In our work, we focus on an instantiation of FedOPT called FedAdam, which uses Adam (Kingma and Ba 2014) as ServerOPT and SGD as ClientOPT. We focus on tuning five FedAdam hyperparameters: two for client training (SGD’s learning rate and batch size) and three for server aggregation (Adam’s learning rate, 1st-moment decay, and 2nd-moment decay).
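To make the five tuned hyperparameters concrete, the sketch below samples one FedAdam configuration. The numeric ranges are hypothetical placeholders, not the paper’s search space, and drawing the learning rates and batch size log-uniformly is our assumption.

```python
import math
import random

# Hypothetical search space: (low, high, sample_on_log_scale) for each FedAdam HP.
SEARCH_SPACE = {
    "client_lr": (1e-4, 1.0, True),    # SGD learning rate (ClientOPT)
    "batch_size": (8, 128, True),      # local batch size, rounded to an int below
    "server_lr": (1e-4, 1.0, True),    # Adam learning rate (ServerOPT)
    "beta1": (0.0, 0.9, False),        # Adam 1st-moment decay
    "beta2": (0.9, 0.999, False),      # Adam 2nd-moment decay
}


def sample_config(rng):
    """Draw one configuration uniformly (or log-uniformly) from SEARCH_SPACE."""
    config = {}
    for name, (low, high, log_scale) in SEARCH_SPACE.items():
        if log_scale:
            config[name] = math.exp(rng.uniform(math.log(low), math.log(high)))
        else:
            config[name] = rng.uniform(low, high)
    config["batch_size"] = int(round(config["batch_size"]))
    return config


print(sample_config(random.Random(0)))
```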

FL Evaluation

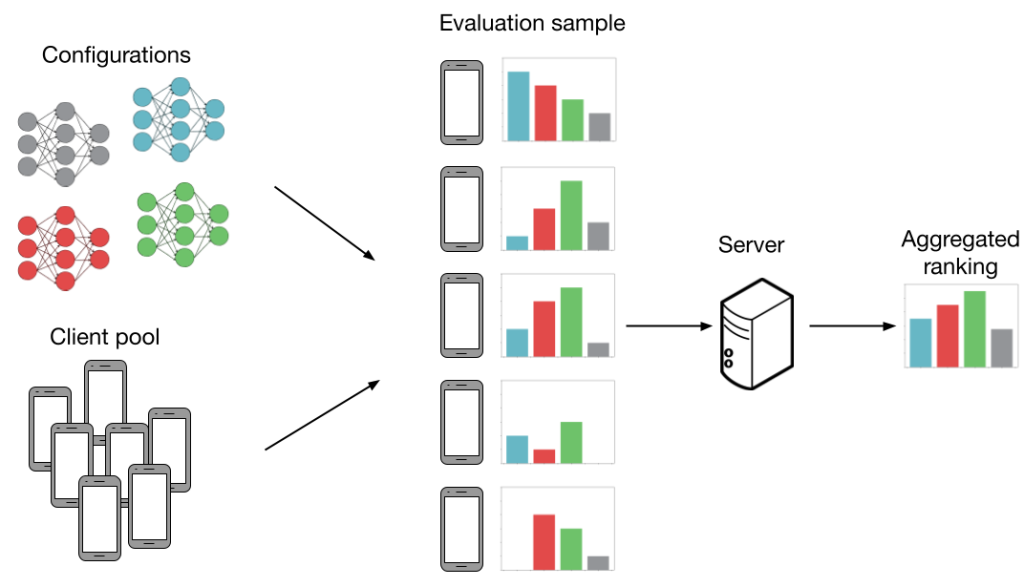

Now, we discuss how FL settings introduce noise to model evaluation. Consider the example below. We have \(K=4\) configurations (grey, blue, red, green), and we want to figure out which configuration has the best average accuracy across \(N=5\) clients. More specifically, each “configuration” is a set of HP values (learning rate, batch size, etc.) that are fed into an FL training algorithm (described in the section “FL Training”). This produces a model we can evaluate. If we can evaluate every model on every client, then our evaluation is noiseless. In this case, we would be able to accurately determine that the green model performs the best. However, generating all the evaluations as shown below is not practical, as evaluation costs scale with both the number of configurations and the number of clients.

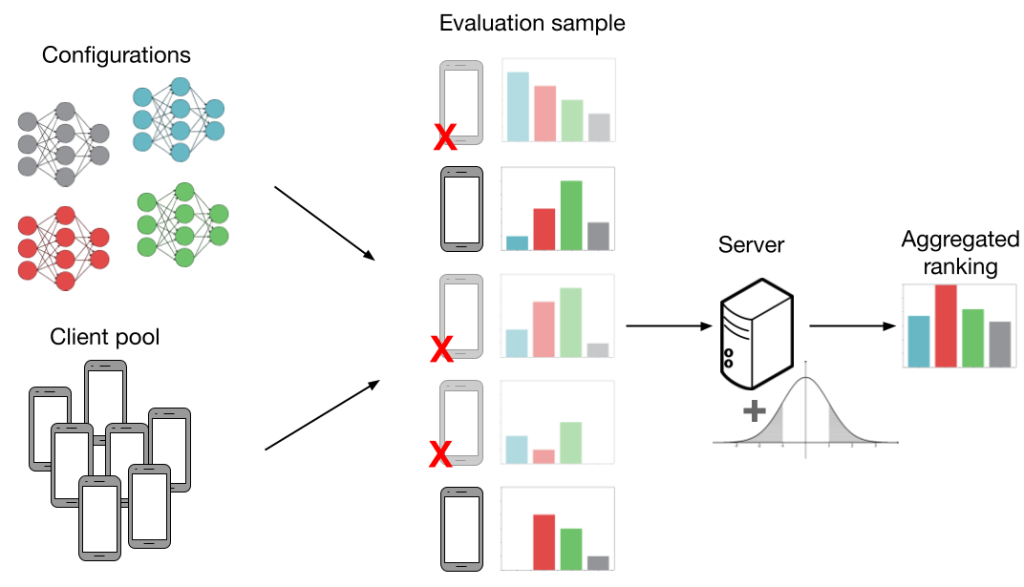

Below, we show an evaluation procedure that is more realistic in FL. As the primary challenge in cross-device FL is scale, we evaluate models using only a random subsample of clients. This is shown in the figure by red ‘X’s and shaded-out phones. We cover three additional sources of noise in FL that can negatively interact with subsampling and introduce even more noise into the evaluation procedure:

Data heterogeneity. FL clients may have non-identically distributed data, meaning that the evaluations of various models can differ between clients. This is shown by the different histograms next to each client. Data heterogeneity is intrinsic to FL and is critical for our observations on noisy evaluation; if all clients had identical datasets, there would be no need to sample more than one client.

Systems heterogeneity. In addition to data heterogeneity, clients may have heterogeneous system capabilities. For example, some clients have better network reception and computational hardware, which allows them to participate in training and evaluation more frequently. This biases performance towards these clients, leading to a poor overall model.

Differential privacy. Using the evaluation output (i.e., the top-performing model), a malicious party can infer whether or not a particular client participated in the FL procedure. At a high level, differential privacy aims to mask user contributions by adding noise to the aggregate evaluation metric. However, this additional noise can make it difficult to faithfully evaluate HP configurations.

In the figure above, evaluations can lead to suboptimal model selection when we consider client subsampling, data heterogeneity, and differential privacy. The combination of all these factors leads us to incorrectly choose the red model over the green one.
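The toy example below reproduces this failure mode for client subsampling alone. The accuracy table is made up for illustration (it is not read off the figure): the red configuration is very accurate on some clients and poor on others, so a small subsample can make it look better than green. Scoring every configuration on the same subset of clients is a simplifying assumption.

```python
import random

# Made-up accuracies for illustration only:
# ACCURACY[config][n] is the accuracy of that configuration's model on validation client n.
ACCURACY = {
    "grey":  [0.60, 0.55, 0.62, 0.58, 0.61],
    "blue":  [0.70, 0.50, 0.65, 0.60, 0.66],
    "red":   [0.90, 0.40, 0.85, 0.45, 0.80],
    "green": [0.75, 0.72, 0.70, 0.74, 0.73],
}


def select(accuracy, clients):
    """Return the configuration with the highest mean accuracy over `clients`."""
    scores = {c: sum(row[n] for n in clients) / len(clients) for c, row in accuracy.items()}
    return max(scores, key=scores.get)


def subsampled_select(accuracy, num_clients, rng):
    """Evaluate every configuration on one random subset of validation clients."""
    num_total = len(next(iter(accuracy.values())))
    clients = rng.sample(range(num_total), num_clients)
    return select(accuracy, clients)


print(select(ACCURACY, range(5)))  # → green (noiseless: every model on every client)
print(select(ACCURACY, [0, 2]))    # → red (only clients 0 and 2 are evaluated)
```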

Experimental Results

The main goal of our work is to investigate the impact of the four sources of noisy evaluation that we outlined in the section “FL Evaluation”. In more detail, these are our research questions:

1. How does subsampling validation clients affect HP tuning performance?
2. How do the following factors interact with/exacerbate issues of subsampling?
   - data heterogeneity (shuffling validation clients’ datasets)
   - systems heterogeneity (biased client subsampling)
   - privacy (adding Laplace noise to the aggregate evaluation)
3. In noisy settings, how do SOTA methods compare to simple baselines?

Surprisingly, we show that state-of-the-art HP tuning methods can perform catastrophically poorly, even worse than simple baselines (e.g., random search). While we only show results for CIFAR10, results on three other datasets (FEMNIST, StackOverflow, and Reddit) can be found in our paper. CIFAR10 is partitioned such that each client has at most two out of the ten total labels.

Noise hurts random search

This section investigates questions 1 and 2 using random search (RS) as the hyperparameter tuning method. RS is a simple baseline that randomly samples several HP configurations, trains a model for each one, and returns the highest-performing model (i.e., the example in “FL Evaluation”, if the configurations were sampled independently from the same distribution). Usually, each hyperparameter value is sampled from a (log) uniform or normal distribution.
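Random search fits in a few lines. In the sketch below, `train_and_evaluate` is a hypothetical callback standing in for a full FL training run plus a (possibly subsampled and noisy) evaluation on the validation clients; the one-parameter toy objective is made up for illustration.

```python
import math
import random


def random_search(sample_config, train_and_evaluate, num_trials, rng):
    """Sample configurations i.i.d., train one model per configuration, and return
    the configuration with the lowest (possibly noisy) validation error."""
    best_config, best_error = None, math.inf
    for _ in range(num_trials):
        config = sample_config(rng)
        # In FL: run FedAdam on the training clients, then evaluate on validation clients.
        error = train_and_evaluate(config)
        if error < best_error:
            best_config, best_error = config, error
    return best_config, best_error


# Toy stand-ins (hypothetical): a log-uniform learning rate and an error curve
# whose minimum is at lr = 0.01.
def toy_sample(rng):
    return {"lr": 10 ** rng.uniform(-4, 0)}


def toy_error(config):
    return (math.log10(config["lr"]) + 2) ** 2


best, err = random_search(toy_sample, toy_error, num_trials=50, rng=random.Random(0))
print(best, err)
```

Because RS keeps whichever single evaluation looks best, any noise in `train_and_evaluate` directly translates into the risk of returning a configuration that was merely lucky.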

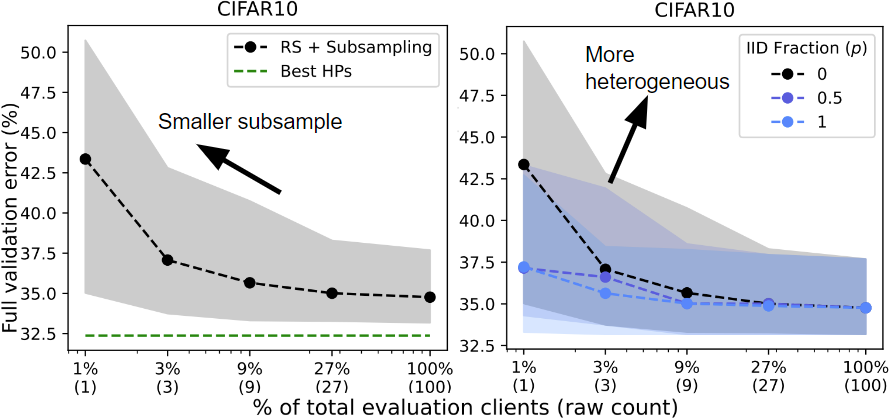

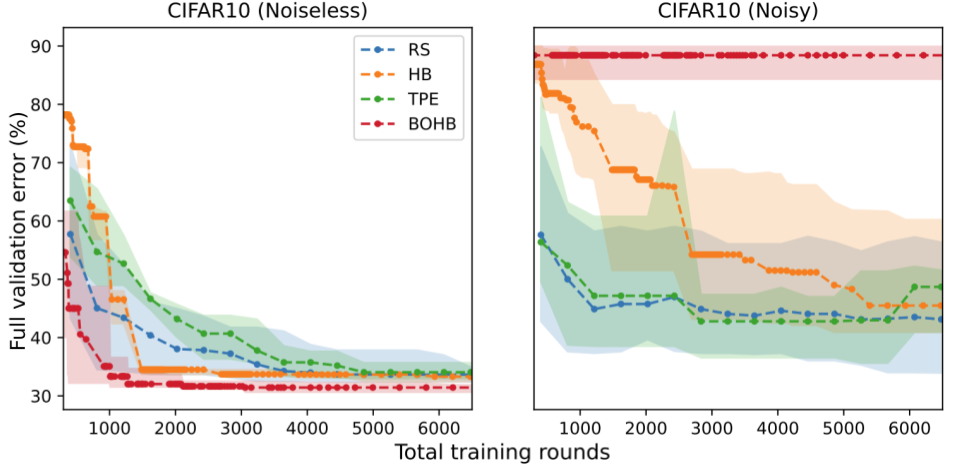

Client subsampling. We run RS while varying the client subsampling rate from a single client to the full validation client pool. “Best HPs” indicates the best HPs found across all trials of RS. As we subsample fewer clients (left), random search performs worse (higher error rate).

Data heterogeneity. We run RS on three separate validation partitions with varying degrees of data heterogeneity based on the label distributions on each client. Client subsampling generally harms performance but has a greater impact on performance when the data is heterogeneous (IID Fraction = 0 vs. 1).
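One way to build partitions with a controllable IID fraction is sketched below: move a fraction of each client’s examples into a shared pool, shuffle the pool, and deal it back so every client keeps its dataset size. This is our assumption about how such partitions can be constructed, not necessarily the paper’s exact procedure; a fraction of 0 leaves the original non-IID partition untouched, and a fraction of 1 shuffles all data across clients.

```python
import random


def shuffle_fraction(client_datasets, iid_fraction, rng):
    """Return new client datasets where `iid_fraction` of each client's examples
    has been pooled, shuffled, and redistributed (client sizes are preserved)."""
    kept, moved_counts, pool = [], [], []
    for data in client_datasets:
        data = list(data)  # copy so the caller's datasets are not mutated
        rng.shuffle(data)
        n_move = int(round(iid_fraction * len(data)))
        pool.extend(data[:n_move])
        kept.append(data[n_move:])
        moved_counts.append(n_move)
    rng.shuffle(pool)
    result, start = [], 0
    for keep, n_move in zip(kept, moved_counts):
        result.append(keep + pool[start:start + n_move])
        start += n_move
    return result


# Three single-label clients (labels 0, 1, 2), fully shuffled.
print(shuffle_fraction([[0] * 4, [1] * 4, [2] * 4], 1.0, random.Random(0)))
```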

Systems heterogeneity. We run RS and bias the client sampling to reflect four degrees of systems heterogeneity. Based on the model that is currently being evaluated, we assign a higher probability of sampling clients who perform well on this model. Sampling bias leads to worse performance, since the biased evaluations are overly optimistic and do not reflect performance over the entire validation pool.
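A minimal sketch of biased client sampling follows. The post does not specify the weighting, so the \(\exp(\text{bias} \cdot \text{accuracy})\) weights, the `bias` knob, and the function names are assumptions for illustration; `bias = 0` recovers uniform sampling without replacement.

```python
import math
import random


def biased_sample(client_accuracies, num_clients, bias, rng):
    """Sample validation clients without replacement, favouring clients on which
    the model under evaluation does well (hypothetical exp(bias * acc) weights)."""
    remaining = list(range(len(client_accuracies)))
    chosen = []
    for _ in range(num_clients):
        weights = [math.exp(bias * client_accuracies[i]) for i in remaining]
        pick = rng.choices(remaining, weights=weights, k=1)[0]
        remaining.remove(pick)
        chosen.append(pick)
    return chosen


def biased_estimate(client_accuracies, num_clients, bias, rng):
    """Mean accuracy over a biased sample: optimistic whenever bias > 0."""
    chosen = biased_sample(client_accuracies, num_clients, bias, rng)
    return sum(client_accuracies[i] for i in chosen) / len(chosen)


accs = [0.2] * 10 + [0.9] * 10  # true mean accuracy over the pool is 0.55
print(biased_estimate(accs, 4, bias=10.0, rng=random.Random(0)))
```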

Privacy. We run RS with five different evaluation privacy budgets \(\varepsilon\). We add noise sampled from \(\text{Lap}(M/(\varepsilon |S|))\) to the aggregate evaluation, where \(M\) is the number of evaluations (16), \(\varepsilon\) is the privacy budget (each curve), and \(|S|\) is the number of clients sampled for an evaluation (x-axis). A smaller privacy budget requires sampling a larger raw number of clients to achieve reasonable performance.
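The noise mechanism in this paragraph takes only a few lines. The sketch assumes the aggregate is the mean error over the sampled clients \(S\) and draws \(\text{Lap}(0, b)\) as the difference of two independent exponentials with mean \(b\), using \(b = M/(\varepsilon |S|)\) as stated above; the function names are hypothetical.

```python
import random


def laplace(scale, rng):
    """Lap(0, scale) sample: difference of two i.i.d. exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_aggregate_error(client_errors, epsilon, num_evaluations, rng):
    """Mean error over the sampled clients S plus Lap(M / (epsilon * |S|)) noise,
    where M = num_evaluations (16 in the experiments above)."""
    s = len(client_errors)
    scale = num_evaluations / (epsilon * s)
    return sum(client_errors) / s + laplace(scale, rng)


rng = random.Random(0)
print(private_aggregate_error([0.3] * 10, epsilon=1.0, num_evaluations=16, rng=rng))
```

Since the scale is divided by \(|S|\), halving \(\varepsilon\) doubles the noise scale unless the number of sampled clients is doubled as well, which is consistent with the observation that a smaller budget calls for sampling more clients.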

Noise hurts complex methods more than RS

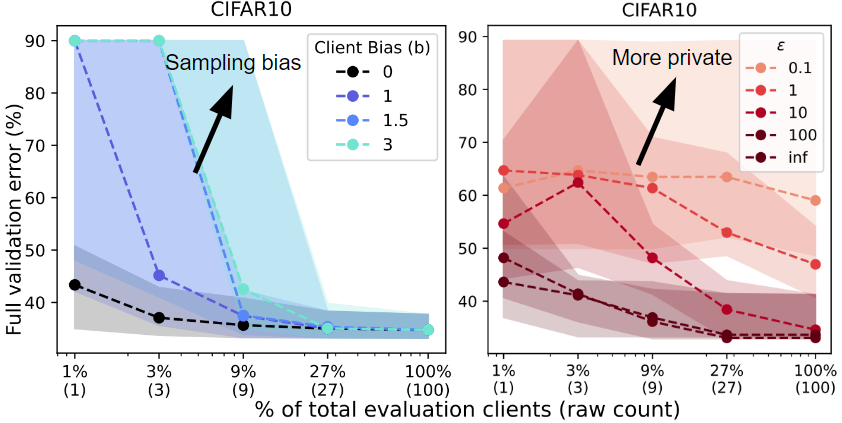

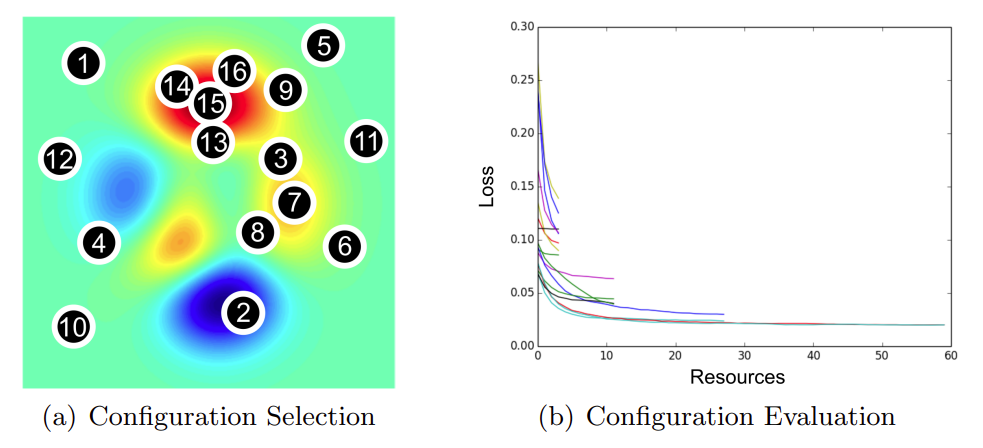

Seeing that noise adversely affects random search, we now focus on question 3: do the same observations hold for more complex tuning methods? In the next experiment, we compare four representative HP tuning methods.

- Random Search (RS) is a naive baseline.
- Tree-Structured Parzen Estimator (TPE) is a selection-based method. These methods build a surrogate model that predicts the performance of various hyperparameters, rather than making predictions for the task at hand (e.g., image or language data).
- Hyperband (HB) is an allocation-based method. These methods allocate more resources to the most promising configurations. Hyperband initially samples a large number of configurations but stops training most of them after the first few rounds.
- Bayesian Optimization + Hyperband (BOHB) is a combined method that uses both the sampling strategy of TPE and the partial evaluations of HB.
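Hyperband’s early stopping is built on successive halving. The sketch below shows a single successive-halving bracket under our own simplifications: full Hyperband runs several brackets that trade off the number of configurations against the starting budget, and the `eta=3` default and the toy error curve are assumptions. Configurations are discarded based on errors measured after only a few rounds, which is exactly where a noisy evaluation can eliminate a good configuration early.

```python
import math


def successive_halving(configs, error_at, min_rounds, eta=3):
    """One bracket: evaluate surviving configurations after `rounds` training rounds,
    keep the best 1/eta of them, multiply the budget by eta, and repeat until one
    configuration remains. Configurations must be distinct and hashable."""
    survivors = list(configs)
    rounds = min_rounds
    while len(survivors) > 1:
        scores = {c: error_at(c, rounds) for c in survivors}  # lower is better
        survivors.sort(key=lambda c: scores[c])
        survivors = survivors[: max(1, len(survivors) // eta)]
        rounds *= eta
    return survivors[0]


# Toy stand-in (hypothetical): error shrinks with more rounds and is lowest at lr = 0.01.
def toy_error(lr, rounds):
    return abs(math.log10(lr) + 2) + 1.0 / rounds


configs = [1e-4, 3e-4, 1e-3, 3e-3, 1e-2, 3e-2, 1e-1, 3e-1, 1.0]
print(successive_halving(configs, toy_error, min_rounds=1))  # → 0.01
```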

We report the error rate of each HP tuning method (y-axis) at a given budget of rounds (x-axis). Surprisingly, we find that the relative ranking of these methods can be reversed when the evaluation is noisy. With noise, the performance of all methods degrades, but the degradation is particularly extreme for HB and BOHB. Intuitively, this is because these two methods already inject noise into the HP tuning procedure via early stopping, which interacts poorly with additional sources of noise. Therefore, these results indicate a need for HP tuning methods that are specialized for FL, as many of the guiding principles for traditional hyperparameter tuning may not be effective at handling noisy evaluation in FL.

Proxy evaluation outperforms noisy evaluation

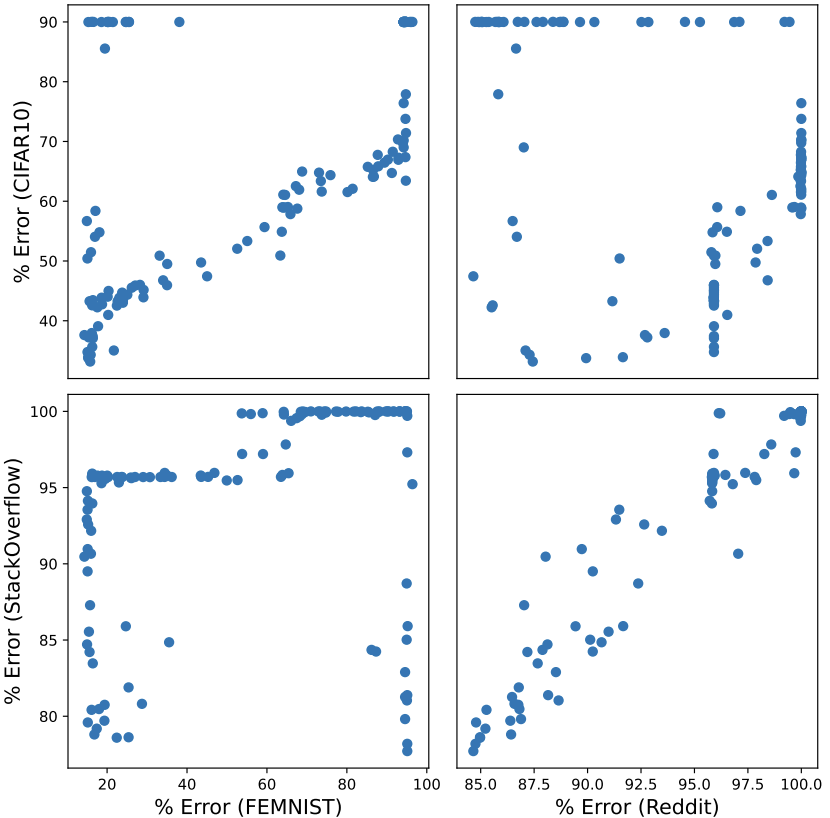

In practical FL settings, a practitioner may have access to public proxy data that can be used to train models and select hyperparameters. However, given two distinct datasets, it is unclear how well hyperparameters can transfer between them. First, we explore the effectiveness of hyperparameter transfer between four datasets. Below, we see that the CIFAR10-FEMNIST and StackOverflow-Reddit pairs (top left, bottom right) show the clearest transfer between the two datasets. One likely reason for this is that these task pairs use the same model architecture: CIFAR10 and FEMNIST are both image classification tasks, while StackOverflow and Reddit are next-word prediction tasks.

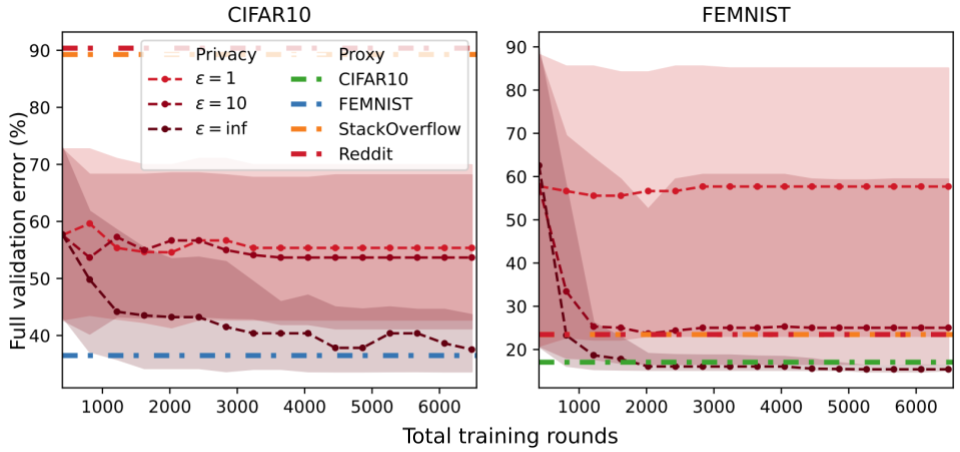

Given the right proxy dataset, we show that a simple method called one-shot proxy random search can perform extremely well. The algorithm has two steps:

1. Run a random search using the proxy data to both train and evaluate HPs. We assume the proxy data is both public and server-side, so we can always evaluate HPs without subsampling clients or adding privacy noise.
2. The output configuration from step 1 is used to train a model on the training client data. Since we pass only a single configuration to this step, validation client data does not affect hyperparameter selection at all.
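The two steps translate directly into code. In this sketch, `train_and_eval_on_proxy` and `train_on_clients` are hypothetical placeholders for server-side training and evaluation on the proxy dataset and for a single federated training run, respectively; the toy objective is made up for illustration.

```python
import math
import random


def one_shot_proxy_random_search(sample_config, train_and_eval_on_proxy,
                                 train_on_clients, num_trials, rng):
    # Step 1: random search entirely on server-side public proxy data,
    # so evaluations involve no client subsampling and no privacy noise.
    best_config, best_error = None, math.inf
    for _ in range(num_trials):
        config = sample_config(rng)
        error = train_and_eval_on_proxy(config)
        if error < best_error:
            best_config, best_error = config, error
    # Step 2: one federated training run with the selected configuration;
    # validation clients are never consulted for selection.
    return best_config, train_on_clients(best_config)


# Toy stand-ins (hypothetical).
def toy_sample(rng):
    return {"lr": 10 ** rng.uniform(-4, 0)}


def toy_proxy_error(config):
    return (math.log10(config["lr"]) + 2) ** 2


def toy_train_on_clients(config):
    return "model trained with lr=%.4f" % config["lr"]


print(one_shot_proxy_random_search(toy_sample, toy_proxy_error,
                                   toy_train_on_clients, 50, random.Random(0)))
```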

In each experiment, we choose one of these datasets to be partitioned among the clients and use the other three datasets as server-side proxy datasets. Our results show that proxy data can be an effective solution. Even if the proxy dataset is not an ideal match for the clients’ data, it may be the only available solution under a strict privacy budget. This is shown in the FEMNIST plot, where the orange/red lines (text datasets) perform similarly to the \(\varepsilon=10\) curve.

Conclusion

In conclusion, our study suggests several best practices for federated HP tuning:

- Use simple HP tuning methods.
- Sample a sufficiently large number of validation clients.
- Evaluate a representative set of clients.
- If available, proxy data can be an effective solution.

Furthermore, we identify several directions for future work in federated HP tuning:

- Tailoring HP tuning methods for differential privacy and FL. Early stopping methods are inherently noisy/biased, and the large number of evaluations they use is at odds with privacy. Another useful direction is to investigate HP methods specific to noisy evaluation.
- More detailed cost evaluation. In our work, we only considered the number of training rounds as our resource budget. However, practical FL settings consider a wide variety of costs, such as total communication, amount of local training, or total time to train a model.
- Combining proxy and client data for HP tuning. A key issue with using public proxy data for HP tuning is that the best proxy dataset is not known in advance. One direction to address this is to design methods that combine public and private evaluations to mitigate bias from proxy data and noise from private data. Another promising direction is to rely on the abundance of public data and design a method that can select the best proxy dataset.