If instructed to “Place a cooled apple into the microwave,” how would a robot respond? First, the robot would need to find an apple, pick it up, find the fridge, open its door, and place the apple inside. It would then close the fridge door, reopen it to retrieve the cooled apple, pick up the apple again, and close the door. After that, the robot would need to find the microwave, open its door, place the apple inside, and then close the microwave door.

Evaluating how well these steps are executed is the essence of benchmarking task planning AI technologies. Such a benchmark measures how effectively a robot can respond to commands and adhere to the required procedures.

An Electronics and Telecommunications Research Institute (ETRI) research team has developed a technology that automatically evaluates the performance of task plans generated by Large Language Models (LLMs), paving the way for fast and objective assessment of task planning AIs.

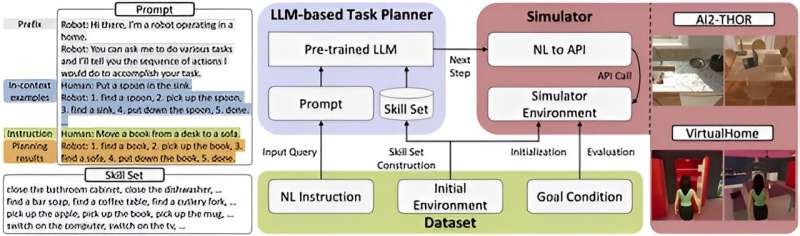

ETRI has announced the development of LoTa-Benchmark (LoTa-Bench), which enables the automated evaluation of language-based task planners. A language-based task planner understands a verbal instruction from a human user, plans a sequence of operations, and autonomously executes those operations to fulfill the goal of the instruction.

The research team published a paper at the International Conference on Learning Representations (ICLR) and shared the evaluation results for a total of 33 large language models through GitHub.

Recently, LLMs have demonstrated remarkable performance not only in language processing, conversation, solving mathematical problems, and logical proofs but also in understanding human commands, autonomously selecting sub-tasks, and sequentially executing them to achieve goals. Consequently, there has been a widespread effort to apply large language models in robotics applications and service implementation.

Previously, the absence of benchmark technology capable of automatically evaluating task planning performance necessitated manual evaluations, which were labor-intensive. For instance, in existing research, including Google’s SayCan, the approach involved multiple people directly observing the outcomes of executed tasks and then voting on their success or failure.

This approach not only required a significant amount of time and effort for performance evaluation, making it cumbersome, but also introduced the problem of subjective judgment influencing the results.

The LoTa-Bench technology developed by ETRI automates the evaluation process: it actually executes the task plans that large language models generate from user commands, then automatically compares the outcomes with the intended results of the commands to determine whether the plans succeeded. This approach significantly reduces evaluation time and cost and ensures that the evaluation results are objective.
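The execute-then-compare idea can be pictured with a toy example. The sketch below is not LoTa-Bench code: `ToyWorld`, its action names, and `evaluate_plan` are hypothetical stand-ins for a real simulator and its goal checker, and the world tracks only a single apple.

```python
from dataclasses import dataclass, field


@dataclass
class ToyWorld:
    """Hypothetical stand-in for a simulator; tracks a few facts about one apple."""
    holding: bool = False
    apple_location: str = "counter"
    apple_cooled: bool = False
    open_doors: set = field(default_factory=set)

    def step(self, action: str, target: str) -> bool:
        """Apply one action; return False if it cannot be executed in the current state."""
        if action == "pick_up" and target == "apple" and not self.holding:
            # An apple inside a closed appliance cannot be reached.
            inside = self.apple_location in ("fridge", "microwave")
            if inside and self.apple_location not in self.open_doors:
                return False
            self.holding = True
            self.apple_location = "hand"
            return True
        if action == "open":
            self.open_doors.add(target)
            return True
        if action == "close":
            self.open_doors.discard(target)
            # Toy rule: closing the fridge with the apple inside cools the apple.
            if target == "fridge" and self.apple_location == "fridge":
                self.apple_cooled = True
            return True
        if action == "put_in" and self.holding and target in self.open_doors:
            self.holding = False
            self.apple_location = target
            return True
        return False


def evaluate_plan(plan, goal_check) -> bool:
    """Execute every step in a fresh world, then compare the final state to the goal."""
    world = ToyWorld()
    for action, target in plan:
        if not world.step(action, target):
            return False  # a step that cannot be executed fails the whole plan
    return goal_check(world)


def cooled_apple_in_microwave(world: ToyWorld) -> bool:
    return world.apple_cooled and world.apple_location == "microwave"


good_plan = [
    ("pick_up", "apple"), ("open", "fridge"), ("put_in", "fridge"),
    ("close", "fridge"), ("open", "fridge"), ("pick_up", "apple"),
    ("close", "fridge"), ("open", "microwave"), ("put_in", "microwave"),
    ("close", "microwave"),
]
# This plan skips the cooling step, so the goal check rejects it.
bad_plan = [
    ("pick_up", "apple"), ("open", "microwave"),
    ("put_in", "microwave"), ("close", "microwave"),
]

print(evaluate_plan(good_plan, cooled_apple_in_microwave))
print(evaluate_plan(bad_plan, cooled_apple_in_microwave))
```

Because success is decided by the final state rather than by human observers, the same plan always receives the same verdict.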

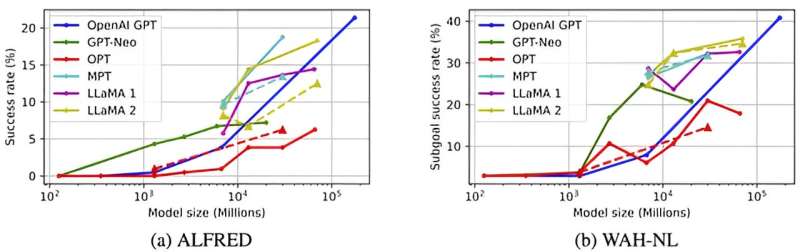

ETRI published benchmark results for a range of large language models: OpenAI’s GPT-3 achieved a success rate of 21.36%, GPT-4 reached 40.38%, Meta’s LLaMA 2-70B model scored 18.27%, and MosaicML’s MPT-30B model recorded 18.75%.

The team noted that larger models tend to have superior task planning capabilities. A success rate of 20% means that out of 100 instructions, 20 plans succeeded in achieving the goal of the instruction.
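As a small illustration of that arithmetic, the success rate is simply the share of plans judged successful. The function name and the list of outcomes below are made up for the example and are not taken from the benchmark.

```python
def success_rate(outcomes):
    """Return the percentage of plans judged successful; outcomes is a list of booleans."""
    if not outcomes:
        raise ValueError("no outcomes to score")
    return 100.0 * sum(outcomes) / len(outcomes)


# Hypothetical run: 20 successful plans out of 100 instructions.
outcomes = [True] * 20 + [False] * 80
print(success_rate(outcomes))  # 20.0
```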

In LoTa-Bench, performance evaluation is conducted in two virtual simulation environments built for research and development in robotics and embodied agent intelligence: AI2-THOR, developed by the Allen Institute for AI, and VirtualHome, developed at the Massachusetts Institute of Technology (MIT). The evaluation used the ALFRED dataset, which includes everyday household task instructions such as “Place a cooled apple in the microwave.”

Taking advantage of how easily and quickly LoTa-Bench can validate new task planning methods, the research team identified two strategies for improving task planning performance through data-driven training: In-Context Example Selection and Feedback-Based Replanning. They also confirmed that fine-tuning effectively improves the performance of language-based task planning.
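The article does not say how in-context examples are chosen. One plausible reading is that the prompt is filled with the stored examples whose instructions most resemble the new one. The sketch below illustrates that idea under this assumption, using plain word overlap as the similarity measure; the `pool` entries, `jaccard`, and `select_examples` are hypothetical and do not come from the paper.

```python
def jaccard(a: str, b: str) -> float:
    """Word-overlap similarity between two instructions (0.0 to 1.0)."""
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    union = tokens_a | tokens_b
    return len(tokens_a & tokens_b) / len(union) if union else 0.0


def select_examples(instruction, pool, k=2):
    """Return the k pool entries whose instructions are most similar to the new one."""
    ranked = sorted(
        pool,
        key=lambda example: jaccard(instruction, example["instruction"]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical pool of previously solved instructions.
pool = [
    {"instruction": "Place a cooled tomato in the microwave"},
    {"instruction": "Turn on the lamp near the sofa"},
    {"instruction": "Put a cooled apple on the table"},
]

chosen = select_examples("Place a cooled apple in the microwave", pool)
for example in chosen:
    print(example["instruction"])
```

A real planner would more likely compare instructions with learned sentence embeddings, but the selection step itself would keep the same shape.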

Minsu Jang, a principal researcher at ETRI’s Social Robotics Lab, stated, “LoTa-Bench marks the first step in the development of task planning AI. We plan to research and develop technologies that can predict task failures in uncertain situations or improve task generation intelligence by asking for and receiving help from humans. This technology is essential for realizing the era of one robot per household.”

Jaehong Kim, the director of ETRI’s Social Robotics Research Section, announced, “ETRI is dedicated to advancing robot intelligence using foundation models to realize robots capable of generating and executing various mission plans in the real world.”

By releasing the software as open source, the ETRI researchers expect that companies and educational institutions will be able to use this technology freely, thereby accelerating the advancement of related technologies.

More information:

Choi et al, LoTa-Bench: Benchmarking Language-oriented Task Planners for Embodied Agents, ICLR (International Conference on Learning Representations) (2024)

National Research Council of Science and Technology

Citation:

Researchers develop an automated benchmark for language-based task planners (2024, April 26)

retrieved 26 April 2024

from https://techxplore.com/news/2024-04-automated-benchmark-language-based-task.html
