The integration and application of large language models (LLMs) in medicine and healthcare has been a topic of significant interest and development.

As noted at the Healthcare Information and Management Systems Society (HIMSS) global conference and other notable events, companies like Google are leading the charge in exploring the potential of generative AI within healthcare. Their initiatives, such as Med-PaLM 2, highlight the evolving landscape of AI-driven healthcare solutions, particularly in areas like diagnostics, patient care, and administrative efficiency.

Google’s Med-PaLM 2, a pioneering LLM in the healthcare domain, has demonstrated impressive capabilities, notably reaching an “expert” level on U.S. Medical Licensing Examination-style questions. This model, and others like it, promise to change the way healthcare professionals access and use information, potentially improving diagnostic accuracy and patient care efficiency.

However, alongside these advancements, concerns about the practicality and safety of these technologies in clinical settings have been raised. For instance, the reliance on vast internet data sources for model training, while beneficial in some contexts, may not always be appropriate or reliable for medical purposes. As Nigam Shah, PhD, MBBS, Chief Data Scientist for Stanford Health Care, points out, the crucial questions to ask are about the performance of these models in real-world medical settings and their actual impact on patient care and healthcare efficiency.

Dr. Shah’s perspective underscores the need for a more tailored approach to using LLMs in medicine. Instead of general-purpose models trained on broad internet data, he suggests a more focused strategy in which models are trained on specific, relevant medical data. This approach resembles training a medical intern: providing them with specific tasks, supervising their performance, and gradually allowing more autonomy as they demonstrate competence.

In line with this, the development of Meditron by EPFL researchers presents an interesting advancement in the field. Meditron, an open-source LLM specifically tailored for medical applications, represents a significant step forward. Trained on curated medical data from reputable sources like PubMed and clinical guidelines, Meditron offers a more focused and potentially more reliable tool for medical practitioners. Its open-source nature not only promotes transparency and collaboration but also allows for continuous improvement and stress testing by the broader research community.

MEDITRON-70B achieves an accuracy of 70.2% on USMLE-style questions in the MedQA (4 options) dataset.

The development of tools like Meditron, Med-PaLM 2, and others reflects a growing recognition of the unique requirements of the healthcare sector when it comes to AI applications. The emphasis on training these models on relevant, high-quality medical data, and on ensuring their safety and reliability in clinical settings, is crucial.

Moreover, the inclusion of diverse datasets, such as those from humanitarian contexts like the International Committee of the Red Cross, demonstrates a sensitivity to the varied needs and challenges in global healthcare. This approach aligns with the broader mission of many AI research centers, which aim to create AI tools that are not only technologically advanced but also socially responsible and beneficial.

The paper titled “Large language models encode clinical knowledge”, recently published in Nature, explores how large language models (LLMs) can be used effectively in clinical settings. The research presents insights and methodologies that shed light on the capabilities and limitations of LLMs in the medical domain.

The medical domain is characterized by its complexity, with a vast array of symptoms, diseases, and treatments that are constantly evolving. LLMs must not only understand this complexity but also keep up with the latest medical knowledge and guidelines.

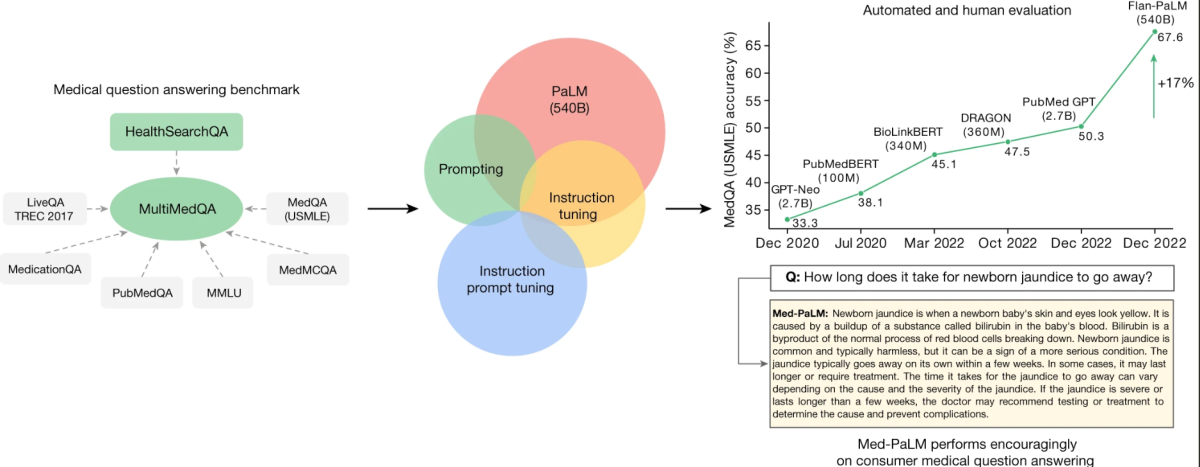

The core of this research revolves around a newly curated benchmark called MultiMedQA. This benchmark combines six existing medical question-answering datasets with a new dataset, HealthSearchQA, which comprises medical questions frequently searched online. This comprehensive approach aims to evaluate LLMs across several dimensions, including factuality, comprehension, reasoning, possible harm, and bias, thereby addressing the limitations of earlier automated evaluations that relied on limited benchmarks.

MultiMedQA, a benchmark for answering medical questions spanning medical examination

Key to the study is the evaluation of the Pathways Language Model (PaLM), a 540-billion-parameter LLM, and its instruction-tuned variant, Flan-PaLM, on MultiMedQA. Remarkably, Flan-PaLM achieves state-of-the-art accuracy on all the multiple-choice datasets within MultiMedQA, including 67.6% accuracy on MedQA, which comprises US Medical Licensing Examination-style questions. This performance marks a significant improvement over earlier models, surpassing the prior state of the art by more than 17%.

MedQA

Format: question and answer (Q + A), multiple choice, open domain.

Example question: A 65-year-old man with hypertension comes to the physician for a routine health maintenance examination. Current medications include atenolol, lisinopril, and atorvastatin. His pulse is 86 min⁻¹, respirations are 18 min⁻¹, and blood pressure is 145/95 mmHg. Cardiac examination reveals an end-diastolic murmur. Which of the following is the most likely cause of this physical examination finding?

Answers (correct answer in bold): (A) Decreased compliance of the left ventricle, (B) Myxomatous degeneration of the mitral valve, (C) Inflammation of the pericardium, **(D) Dilation of the aortic root**, (E) Thickening of the mitral valve leaflets.
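
To make the multiple-choice format concrete, here is a minimal Python sketch of how accuracy on MedQA-style items could be scored. The `MultipleChoiceItem` class and the `accuracy` function are hypothetical names chosen for illustration and are not taken from the paper or from any benchmark toolkit; the example items are toy placeholders, not real benchmark data.

```python
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass(frozen=True)
class MultipleChoiceItem:
    """One multiple-choice question (hypothetical container for this sketch)."""
    question: str
    options: Dict[str, str]  # option letter -> option text
    answer: str              # gold option letter, e.g. "D"


def _normalize(letter: str) -> str:
    """Map variants such as ' (d) ' or 'd' to the canonical form 'D'."""
    return letter.strip().strip("()").upper()


def accuracy(items: Sequence[MultipleChoiceItem], predictions: Sequence[str]) -> float:
    """Return the fraction of items whose predicted letter matches the gold letter."""
    if not items:
        raise ValueError("at least one item is required")
    if len(items) != len(predictions):
        raise ValueError("items and predictions must have the same length")
    correct = sum(
        1
        for item, predicted in zip(items, predictions)
        if _normalize(predicted) == _normalize(item.answer)
    )
    return correct / len(items)


if __name__ == "__main__":
    toy_items = [
        MultipleChoiceItem("Toy question 1", {"A": "first", "B": "second"}, "A"),
        MultipleChoiceItem("Toy question 2", {"A": "first", "B": "second"}, "B"),
    ]
    print(accuracy(toy_items, ["(a)", "A"]))
```

A reported figure such as 67.6% on MedQA corresponds to this fraction computed over the benchmark's test questions.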

The study also identifies significant gaps in the model’s performance, especially in answering consumer medical questions. To address these issues, the researchers introduce a method known as instruction prompt tuning. This technique efficiently aligns LLMs to new domains using a few exemplars, resulting in the creation of Med-PaLM. The Med-PaLM model performs encouragingly and shows improvement in comprehension, knowledge recall, and reasoning, but it still falls short compared to clinicians.

A notable aspect of this research is the detailed human evaluation framework. This framework assesses the models’ answers for agreement with scientific consensus and for potential harmful outcomes. For instance, while only 61.9% of Flan-PaLM’s long-form answers aligned with scientific consensus, this figure rose to 92.6% for Med-PaLM, comparable to clinician-generated answers. Similarly, the potential for harmful outcomes was significantly reduced in Med-PaLM’s responses compared to Flan-PaLM.

The human evaluation of Med-PaLM’s responses highlighted its proficiency in several areas, aligning closely with clinician-generated answers. This underscores Med-PaLM’s potential as a supportive tool in clinical settings.

The research discussed above delves into the intricacies of improving large language models (LLMs) for medical applications. The methods and observations from this study can be generalized to improve LLM capabilities across various domains. Let’s explore these key aspects:

Instruction Tuning Improves Performance

Generalized Application: Instruction tuning, which involves fine-tuning LLMs with specific instructions or guidelines, has been shown to significantly improve performance across various domains. This technique could be applied to other fields, such as the legal, financial, or educational domains, to improve the accuracy and relevance of LLM outputs.

Scaling Model Size

Broader Implications: The observation that scaling the model size improves performance is not limited to medical question answering. Larger models, with more parameters, have the capacity to process and generate more nuanced and complex responses. This scaling can be beneficial in domains like customer service, creative writing, and technical support, where nuanced understanding and response generation are crucial.

Chain of Thought (COT) Prompting

Diverse Domains Utilization: COT prompting, although it does not always improve performance on medical datasets, can be valuable in other domains where complex problem-solving is required. For instance, in technical troubleshooting or complex decision-making scenarios, COT prompting can guide LLMs to process information step by step, leading to more accurate and reasoned outputs.
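
As an illustration of the step-by-step idea, the sketch below builds a few-shot chain-of-thought prompt and extracts the final answer letter from a model completion. The prompt layout ("Question / Explanation / Answer") and the function names `build_cot_prompt` and `extract_answer` are assumptions made for this example; they are not the exact templates used in the study, and no real model API is called.

```python
import re
from typing import List, Optional, Tuple

# Each exemplar is (question, worked reasoning, final answer letter).
Exemplar = Tuple[str, str, str]


def build_cot_prompt(exemplars: List[Exemplar], question: str) -> str:
    """Assemble a few-shot prompt in which every exemplar shows its reasoning."""
    parts = [
        f"Question: {q}\nExplanation: {reasoning}\nAnswer: ({answer})"
        for q, reasoning, answer in exemplars
    ]
    # The prompt ends at "Explanation:" so the model writes its reasoning first.
    parts.append(f"Question: {question}\nExplanation:")
    return "\n\n".join(parts)


_ANSWER_PATTERN = re.compile(r"Answer:\s*\(?([A-E])\)?")


def extract_answer(completion: str) -> Optional[str]:
    """Return the last answer letter stated in a completion, or None if absent."""
    matches = _ANSWER_PATTERN.findall(completion)
    return matches[-1] if matches else None


if __name__ == "__main__":
    prompt = build_cot_prompt(
        [("Toy question?", "Toy step-by-step reasoning.", "B")],
        "New question?",
    )
    print(prompt)
    print(extract_answer("First consider the symptoms. Answer: (D)"))
```

Taking the last match in `extract_answer` is a design choice for this sketch: a completion may mention candidate answers while reasoning before it commits to a final one.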

Self-Consistency for Enhanced Accuracy

Wider Applications: The technique of self-consistency, where multiple outputs are generated and the most consistent answer is selected, can significantly improve performance in various fields. In domains like finance or law, where accuracy is paramount, this method can be used to cross-verify the generated outputs for higher reliability.
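
Self-consistency reduces to a majority vote over several sampled answers. The following is a minimal sketch under stated assumptions: `sample_fn` is a hypothetical stand-in for a call that samples one reasoning path from a model (at non-zero temperature) and returns its final answer, or `None` when no answer could be parsed. The default of 11 samples is arbitrary.

```python
from collections import Counter
from typing import Callable, Optional, Tuple


def self_consistent_answer(
    sample_fn: Callable[[str], Optional[str]],
    prompt: str,
    num_samples: int = 11,
) -> Tuple[Optional[str], float]:
    """Sample several answers and return the most frequent one.

    Also returns the share of all samples that agree with the winner.
    """
    if num_samples < 1:
        raise ValueError("num_samples must be at least 1")
    answers = [sample_fn(prompt) for _ in range(num_samples)]
    # Unparseable samples (None) do not vote but still count in the denominator.
    votes = Counter(a for a in answers if a is not None)
    if not votes:
        return None, 0.0
    answer, count = votes.most_common(1)[0]
    return answer, count / num_samples


if __name__ == "__main__":
    # Deterministic fake sampler standing in for a real model call.
    canned = iter(["A", "D", "D", None, "D"])
    print(self_consistent_answer(lambda _prompt: next(canned), "toy prompt", num_samples=5))
```

The agreement share returned alongside the answer gives a rough indication of how stable the model's answer is across samples.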

Uncertainty and Selective Prediction

Cross-Domain Relevance: Communicating uncertainty estimates is crucial in fields where misinformation can have serious consequences, like healthcare and law. Using LLMs’ ability to express uncertainty and selectively defer predictions when confidence is low can be a crucial tool in these domains to prevent the dissemination of inaccurate information.
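
One simple way to sketch selective prediction is to treat agreement among several sampled answers as a confidence proxy and defer when it falls below a threshold. This is an illustrative assumption rather than a calibrated uncertainty estimate; the function names and the 0.7 threshold are hypothetical and would need tuning on held-out data.

```python
from collections import Counter
from typing import Optional, Sequence, Tuple


def selective_predict(sampled_answers: Sequence[str], threshold: float = 0.7) -> Optional[str]:
    """Return the majority answer, or None (defer) when agreement is below threshold."""
    if not sampled_answers:
        return None
    answer, count = Counter(sampled_answers).most_common(1)[0]
    confidence = count / len(sampled_answers)
    return answer if confidence >= threshold else None


def coverage_and_accuracy(
    predictions: Sequence[Optional[str]], gold: Sequence[str]
) -> Tuple[float, Optional[float]]:
    """Return (share of questions answered, accuracy on the answered ones)."""
    if not gold or len(predictions) != len(gold):
        raise ValueError("predictions and gold must be non-empty and equally long")
    answered = [(p, g) for p, g in zip(predictions, gold) if p is not None]
    coverage = len(answered) / len(gold)
    if not answered:
        return coverage, None
    correct = sum(1 for p, g in answered if p == g)
    return coverage, correct / len(answered)


if __name__ == "__main__":
    print(selective_predict(["D", "D", "D", "A"]))  # agreement 0.75, answered
    print(selective_predict(["D", "A", "B", "D"]))  # agreement 0.50, deferred
```

Raising the threshold trades coverage for accuracy: fewer questions are answered, and deferred questions can be routed to a human reviewer.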

The real-world application of these models extends beyond answering questions. They can be used for patient education, for assisting in diagnostic processes, and even for training medical students. However, their deployment must be carefully managed to avoid reliance on AI without proper human oversight.

As medical knowledge evolves, LLMs must also adapt and learn. This requires mechanisms for continuous learning and updating, ensuring that the models remain relevant and accurate over time.