
The Robot Report recently spoke with Ph.D. student Cheng Chi about his research at Stanford University and his recent publications on using diffusion AI models for robotics applications. He also discussed the recent universal manipulation interface, or UMI gripper, project, which demonstrates the capabilities of diffusion model robotics.

The UMI gripper was part of his Ph.D. thesis work, and he has open-sourced the gripper design and all of the code so that others can continue to help evolve the AI diffusion policy work.

AI innovation accelerates

How did you get your start in robotics?

Stanford researcher Cheng Chi. | Credit: Huy Ha

I worked in the robotics industry for a while, starting at the autonomous vehicle company Nuro, where I was doing localization and mapping.

And then I applied for my Ph.D. program and ended up with my advisor Shuran Song. We were both at Columbia University when I started my Ph.D., and then last year, she moved to Stanford to become full-time faculty, and I moved [to Stanford] with her.

For my Ph.D. research, I started as a classical robotics researcher, and then I started working with machine learning, specifically for perception. Then in early 2022, diffusion models started to work for image generation. That’s when DALL-E 2 came out, and that’s also when Stable Diffusion came out.

I realized the specific ways in which diffusion models could be formulated to solve a couple of really big problems for robotics, in terms of end-to-end learning and in the actual representation for robotics.

So, I wrote one of the first papers that brought the diffusion model into robotics, which is called diffusion policy. That’s the paper for my previous project, before the UMI project. And I think that’s the foundation of why the UMI gripper works. There’s a paradigm shift happening. My project was part of it, but there are also other robotics research projects that are starting to work.

A lot has changed in the past few years. Is artificial intelligence innovation accelerating?

Yes, exactly. I experienced it firsthand in academia. Imitation learning was the dumbest thing possible you could do for machine learning with robotics. It’s like, you teleoperate the robot to collect data, and the data is images paired with the corresponding actions.

In class, we’re taught that people proved that this paradigm of imitation learning, or behavior cloning, doesn’t work. People proved that errors grow exponentially. And that’s why you need reinforcement learning and all the other methods that can address these limitations.

But fortunately, I wasn’t paying too much attention in class. So I just went to the lab and tried it, and it worked surprisingly well. I wrote the code, I applied the diffusion model to this, and for my first task, it just worked. I said, “That’s too easy. That’s not worth a paper.”

I kept adding more tasks, like online benchmarks, trying to break the algorithm so that I could find a smart angle to improve on this dumb idea that would give me a paper. But I just kept adding more and more things, and it just refused to break.

So there are simulation benchmarks online. I used four different benchmarks and just tried to find an angle to break it so that I could write a better paper, but it just didn’t break. Our baseline performance was 50% to 60%. And after applying the diffusion model to that, it was like 95%. So it was a big jump on these benchmarks. And that’s the moment I realized, maybe there’s something big happening here.
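As a rough illustration of the imitation-learning setup described above, the sketch below fits a policy to teleoperated demonstrations stored as paired observations and actions. It is plain behavior cloning with a one-dimensional least-squares fit, not the diffusion policy itself; the `Demo` class, the scalar observation standing in for an image, and the demonstration values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Demo:
    # Hypothetical stand-in for an image: offset of the block from its goal.
    observation: float
    # Command the human operator issued at the same time step.
    action: float


def fit_linear_policy(demos):
    """Least-squares fit of action = gain * observation + bias."""
    n = len(demos)
    mean_o = sum(d.observation for d in demos) / n
    mean_a = sum(d.action for d in demos) / n
    cov = sum((d.observation - mean_o) * (d.action - mean_a) for d in demos)
    var = sum((d.observation - mean_o) ** 2 for d in demos)
    gain = cov / var
    bias = mean_a - gain * mean_o
    return gain, bias


# Made-up teleoperation log: the operator always commands -0.5 * offset.
demos = [Demo(o, -0.5 * o) for o in (-2.0, -1.0, 0.5, 1.0, 3.0)]
gain, bias = fit_linear_policy(demos)


def policy(observation):
    """Cloned policy: imitates the operator on observations it has not seen."""
    return gain * observation + bias
```

Switching to a different task in this picture means collecting a different `demos` list and refitting; the fitting code stays the same.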

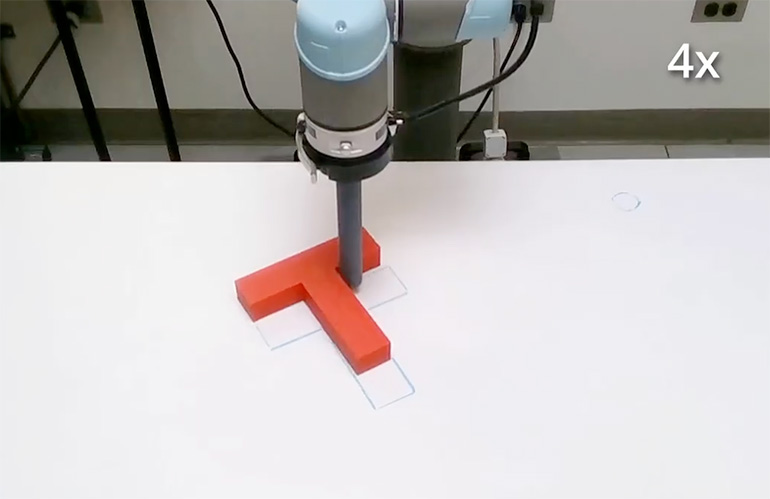

The first diffusion policy research at Columbia was to push a T into position on a table. | Credit: Cheng Chi

How did those findings lead to published research?

That summer, I interned at Toyota Research Institute, and that’s where I started doing real-world experiments using a UR5 [cobot] to push a block into a location. It turned out that this worked really well on the first try.

Normally, you need a lot of tuning to get something to work. But this was different. When I tried to perturb the system, it just kept pushing the block back to its original place.

And so that paper got published, and I think that’s my proudest work. I made the paper open-source, and I open-sourced all the code because the results were so good that I was worried people weren’t going to believe them. As it turned out, it’s not a coincidence, and other people can reproduce my results and also get very good performance.

I realized that now there’s a paradigm shift. Before [this UMI Gripper research], I needed to engineer a separate perception system, planning system, and then a control system. But now I can combine all of them in a single neural network.

The most important thing is that it’s agnostic to tasks. With the same robot, I can just collect a different data set and train a model on it, and it will just do the different task.

Obviously, collecting the data set is the painful part, as I need to do it 100 to 300 times for one environment to get it to work. But in actuality, it’s maybe one afternoon’s worth of work. Compared with tuning a sim-to-real transfer algorithm, which takes me a few months, this is a big improvement.


UMI Gripper training ‘all about the data’

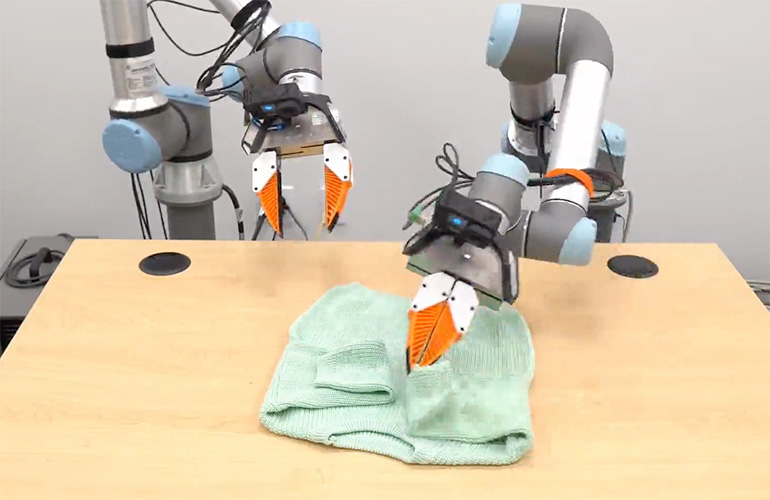

When you’re training the system for the UMI Gripper, you’re just using the vision feedback and nothing else?

Just the cameras and the end-effector pose of the robot; that’s it. We had two cameras: one side camera that was mounted onto the table, and the other one on the wrist.

That was the original algorithm at the time, and I could change to another task and use the same algorithm, and it would just work. This was a big, big difference. Previously, we could only afford one or two tasks per paper because it was so time-consuming to set up a new task.

But with this paradigm, I can pump out a new task in a few days. It’s a really big difference. That’s also the moment I realized that the key trend is that it’s all about data now. I realized, after training more tasks, that my code hadn’t been changed for a few months.

The only thing that changed was the data, and whenever the robot doesn’t work, it’s not the code, it’s the data. So when I just add more data, it works better.

And that prompted me to think that we are entering the same paradigm as other AI fields. For example, large language models and vision models started in a small-data regime in 2015, but now, with a huge amount of internet data, they work like magic.

The algorithm doesn’t change that much. The only things that changed are the scale of training and maybe the size of the models, and that makes me feel like maybe robotics is about to enter that regime soon.

Two UR cobots equipped with UMI grippers demonstrate the folding of a shirt. | Credit: Cheng Chi video

Can these different AI models be stacked like Lego building blocks to build more sophisticated systems?

I believe in big models, but I think they might not be the same thing as you imagine, like Lego blocks. I suspect that the way you build AI for robotics will be that you take whatever tasks you want to do, you collect a whole bunch of data for the task, run that through a model, and then you get something you can use.

If you have a whole bunch of these different types of data sets, you can combine them to train an even bigger model. You can call that a foundation model, and you can adapt it to whatever use case. You’re using data, not building blocks, and not code. That’s my expectation of how this will evolve.

But at the same time, there’s a problem here. I think the robotics industry was tailored toward the assumption that robots are precise, repeatable, and predictable. But they’re not adaptable. So the entire robotics industry is geared towards vertical end-use cases optimized for these properties.

Whereas robots powered by AI will have a different set of properties. They won’t be good at being precise. They won’t be good at being reliable, and they won’t be good at being repeatable. But they will be good at generalizing to unseen environments. So you need to find specific use cases where it’s okay if you fail maybe 0.1% of the time.

Safety versus generalization

Robots in industry must be safe 100% of the time. What do you think the solution is to this requirement?

I think if you want to deploy robots in use cases where safety is critical, you either need to have a classical system or a shell that protects the AI system, so that when something goes wrong, there’s at least a worst-case fallback that guarantees nothing bad actually happens.
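The idea of a classical shell around a learned policy can be sketched as a simple filter on the policy's output. The example below is a one-dimensional toy under stated assumptions: the function name `safety_shell`, the speed limit, and the workspace bounds are hypothetical, and a real safety system would involve certified hardware and far more than a clamp.

```python
def safety_shell(proposed_velocity, position, *, max_speed, workspace, dt):
    """Filter a learned policy's velocity command through fixed, hand-written limits.

    proposed_velocity: command suggested by the (untrusted) AI policy
    position:          current position along one axis
    max_speed:         hard speed limit enforced by the shell
    workspace:         (low, high) bounds the robot must stay inside
    dt:                control period in seconds
    """
    # Clamp the command to the allowed speed range.
    velocity = max(-max_speed, min(max_speed, proposed_velocity))

    # Predict where the clamped command would take the robot.
    low, high = workspace
    next_position = position + velocity * dt

    # Worst-case fallback: stop instead of leaving the workspace.
    if next_position < low or next_position > high:
        return 0.0
    return velocity


limits = dict(max_speed=1.0, workspace=(-1.0, 1.0), dt=0.1)
clamped = safety_shell(5.0, 0.0, **limits)    # too fast, so it is clamped
stopped = safety_shell(1.0, 0.95, **limits)   # would exit the workspace, so stop
passed = safety_shell(-0.5, 0.0, **limits)    # already safe, passes through
```

The shell never needs to know how the policy produced its command, which is what lets the learned part change while the guarantee stays fixed.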

Or you design the hardware such that the hardware is [inherently] safe. Hardware is simple. Industrial robots, for example, don’t rely that much on perception. They have expensive motors, gearboxes, and harmonic drives to make a really precise and very stiff mechanism.

When you have a robot with a camera, it is very easy to implement vision servoing and make adjustments for imprecise robots. So robots don’t have to be precise anymore. Compliance can be built into the robot mechanism itself, and this can make it safer. But all of this depends on finding the verticals and use cases where these properties are acceptable.
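To see why camera feedback can compensate for an imprecise robot, consider the toy simulation below. It assumes a one-dimensional robot whose actuator only moves 80% of the commanded distance and a camera that measures position without noise; the function names, gain, and scale factor are made up for illustration. A single open-loop move misses the target, while repeatedly correcting against the camera measurement converges.

```python
def open_loop_move(target, start, *, actuator_scale=0.8):
    """Command the full distance once and trust the robot to get there."""
    return start + actuator_scale * (target - start)


def visual_servo(target, start, *, gain=0.5, actuator_scale=0.8, steps=50):
    """Proportional correction on a camera-measured error, repeated each step."""
    position = start
    for _ in range(steps):
        error = target - position              # measured by the camera (assumed exact)
        command = gain * error                 # proportional control law
        position += actuator_scale * command   # the imprecise robot under-shoots
    return position


missed = open_loop_move(10.0, 0.0)    # lands short of the target
reached = visual_servo(10.0, 0.0)     # closes the gap step by step
```

With these numbers the remaining error shrinks by a factor of 0.6 on every step, so the actuator's inaccuracy affects how fast the loop converges, not where it ends up.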