Large language models (LLMs), such as GPT-3 and PaLM, have shown impressive progress in recent years, driven by scaling up models and training data sizes. Nonetheless, a long-standing debate has been whether LLMs can reason symbolically (i.e., manipulate symbols based on logical rules). For example, LLMs are able to perform simple arithmetic operations when numbers are small, but struggle with large numbers. This suggests that LLMs have not learned the underlying rules needed to perform these arithmetic operations.

While neural networks have powerful pattern matching capabilities, they are prone to overfitting to spurious statistical patterns in the data. This does not hinder good performance when the training data is large and diverse and the evaluation is in-distribution. However, for tasks that require rule-based reasoning (such as addition), LLMs struggle with out-of-distribution generalization, because spurious correlations in the training data are often much easier to exploit than the true rule-based solution. As a result, despite significant progress in a variety of natural language processing tasks, performance on simple arithmetic tasks like addition has remained a challenge. Even with the modest improvement of GPT-4 on the MATH dataset, errors are still largely due to arithmetic and calculation mistakes. Thus, an important question is whether LLMs are capable of algorithmic reasoning, which involves solving a task by applying a set of abstract rules that define the algorithm.

In “Teaching Algorithmic Reasoning via In-Context Learning”, we describe an approach that leverages in-context learning to enable algorithmic reasoning capabilities in LLMs. In-context learning refers to a model’s ability to perform a task after seeing a few examples of it within the context of the model. The task is specified to the model using a prompt, without the need for weight updates. We also present a novel algorithmic prompting technique that enables general purpose language models to achieve strong generalization on arithmetic problems that are more difficult than those seen in the prompt. Finally, we demonstrate that a model can reliably execute algorithms on out-of-distribution examples with an appropriate choice of prompting strategy.
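As a rough illustration of in-context learning, the sketch below assembles a few worked examples and a new question into a single prompt string. The `Q:`/`A:` layout and the function name `build_few_shot_prompt` are assumptions made for this example, not the prompt format used in the paper.

```python
def build_few_shot_prompt(examples, question):
    """Concatenate worked examples and a new question into one prompt string.

    The "Q:/A:" layout is a hypothetical format chosen for illustration.
    """
    blocks = [f"Q: {q}\nA: {a}" for q, a in examples]
    # The final block leaves the answer empty for the model to complete.
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


examples = [("12+7", "19"), ("267+197", "464"), ("5+38", "43")]
prompt = build_few_shot_prompt(examples, "1204+986")
print(prompt)
```

The model only ever sees this text; no weights change between the examples and the new question.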

By providing algorithmic prompts, we can teach a model the rules of arithmetic via in-context learning. In this example, the LLM (word predictor) outputs the correct answer when prompted with an easy addition question (e.g., 267+197), but fails when asked a similar addition question with longer digits. However, when the more difficult question is appended with an algorithmic prompt for addition (blue box with white + shown below the word predictor), the model is able to answer correctly. Moreover, the model is capable of simulating the multiplication algorithm (X) by composing a series of addition calculations.

Teaching an algorithm as a skill

In order to teach a model an algorithm as a skill, we develop algorithmic prompting, which builds upon other rationale-augmented approaches (e.g., scratchpad and chain-of-thought). Algorithmic prompting extracts algorithmic reasoning abilities from LLMs, and has two notable distinctions compared to other prompting approaches: (1) it solves tasks by outputting the steps needed for an algorithmic solution, and (2) it explains each algorithmic step with sufficient detail so there is no room for misinterpretation by the LLM.

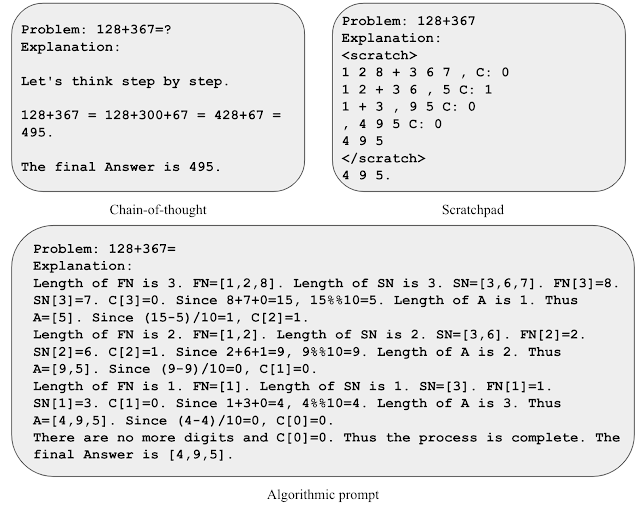

To gain intuition for algorithmic prompting, let’s consider the task of two-number addition. In a scratchpad-style prompt, we process each digit from right to left and keep track of the carry value (i.e., we add a 1 to the next digit if the sum at the current digit is greater than 9) at each step. However, the rule of carry is ambiguous after seeing only a few examples of carry values. We find that including explicit equations to describe the rule of carry helps the model focus on the relevant details and interpret the prompt more accurately. We use this insight to develop an algorithmic prompt for two-number addition, where we provide explicit equations for each step of computation and describe various indexing operations in non-ambiguous formats.
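To make the idea of explicit carry equations concrete, here is a minimal sketch that writes out one fully spelled-out line per digit position for the sum of two non-negative integers. The function name `addition_trace` and the wording of each step are assumptions for illustration; the actual prompt text in the paper may look different.

```python
def addition_trace(a: int, b: int):
    """Return (steps, result) for a + b, with one explicit line per digit.

    Assumes non-negative integers. Digits are processed right to left and
    the carry rule is written as an equation at every step.
    """
    xs, ys = str(a)[::-1], str(b)[::-1]  # reversed so index 0 is the ones digit
    steps, digits, carry = [], [], 0
    for i in range(max(len(xs), len(ys))):
        x = int(xs[i]) if i < len(xs) else 0
        y = int(ys[i]) if i < len(ys) else 0
        total = x + y + carry
        digit, new_carry = total % 10, total // 10
        steps.append(
            f"position {i}: {x}+{y}+{carry}={total}, "
            f"digit={total}%10={digit}, carry={total}//10={new_carry}"
        )
        digits.append(str(digit))
        carry = new_carry
    if carry:
        digits.append(str(carry))  # leftover carry becomes the leading digit
    return steps, int("".join(reversed(digits)))


steps, result = addition_trace(267, 197)
print("\n".join(steps))
print("answer:", result)
```

Each line states the inputs, the sum, and how the digit and carry follow from it, which is the kind of detail that leaves less room for the carry rule to be misread.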

Illustration of various prompt strategies for addition.

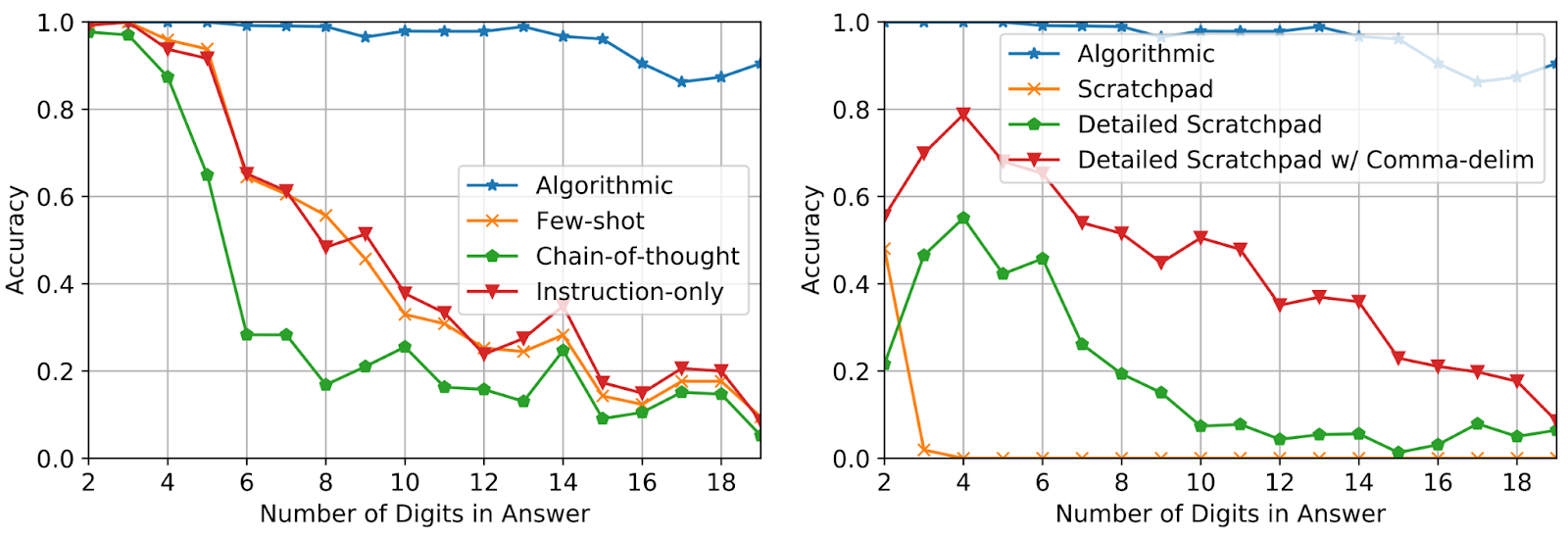

Using only three prompt examples of addition with answer length up to five digits, we evaluate performance on additions of up to 19 digits. Accuracy is measured over 2,000 total examples sampled uniformly over the length of the answer. As shown below, the use of algorithmic prompts maintains high accuracy for questions significantly longer than what’s seen in the prompt, which demonstrates that the model is indeed solving the task by executing an input-agnostic algorithm.
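One way to picture this evaluation setup is the sketch below, which draws addition questions so that the answer length is uniform between 1 and 19 digits and then scores a solver on them. How the operands are split given the answer is an assumption here, and `solver` is a hypothetical stand-in for a call to the prompted model.

```python
import random


def sample_addition_question(rng, max_len=19):
    """Draw (a, b) so the digit count of a + b is uniform on 1..max_len."""
    length = rng.randint(1, max_len)
    low = 10 ** (length - 1) if length > 1 else 0
    answer = rng.randint(low, 10 ** length - 1)
    a = rng.randint(0, answer)  # assumption: split the answer uniformly
    return a, answer - a


def accuracy(solver, questions):
    """Fraction of questions where solver(a, b) equals the true sum."""
    correct = sum(solver(a, b) == a + b for a, b in questions)
    return correct / len(questions)


rng = random.Random(0)
questions = [sample_addition_question(rng) for _ in range(2000)]
# Exact integer addition stands in for the model, so this is a sanity check.
print(accuracy(lambda a, b: a + b, questions))
```

Replacing the lambda with a function that queries a model and parses its final answer would turn this into an actual accuracy measurement.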

Test accuracy on addition questions of increasing length for different prompting methods.

Leveraging algorithmic skills as tool use

To evaluate whether the model can leverage algorithmic reasoning in a broader reasoning process, we measure performance on grade school math word problems (GSM8k). We specifically attempt to replace addition calculations from GSM8k with an algorithmic solution.

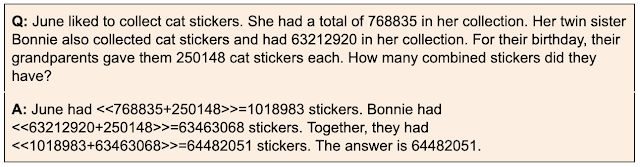

Motivated by context length limitations and possible interference between different algorithms, we explore a strategy where differently-prompted models interact with one another to solve complex tasks. In the context of GSM8k, we have one model that specializes in informal mathematical reasoning using chain-of-thought prompting, and a second model that specializes in addition using algorithmic prompting. The informal mathematical reasoning model is prompted to output specialized tokens in order to call on the addition-prompted model to perform the arithmetic steps. We extract the queries between tokens, send them to the addition model, and return the answer to the first model, after which the first model continues its output. We evaluate our approach using a difficult problem set from GSM8k (GSM8k-Hard), where we randomly select 50 addition-only questions and increase the numerical values in the questions.
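The routing step can be sketched roughly as follows. The `[[ADD: a+b]]` marker, the regular expression, and the function names are hypothetical choices for this example, and `addition_model` is a placeholder that uses exact integer addition where the real system would query the addition-prompted model. The sketch also fills in all calls after the fact, whereas the approach described above pauses the first model at each call and then lets it continue.

```python
import re

# Hypothetical call marker: [[ADD: 123+456]]
CALL_PATTERN = re.compile(r"\[\[ADD:\s*(\d+)\s*\+\s*(\d+)\s*\]\]")


def addition_model(a: int, b: int) -> int:
    """Placeholder for the algorithmically prompted addition model."""
    return a + b


def resolve_calls(reasoning: str, solver=addition_model) -> str:
    """Replace every [[ADD: a+b]] marker with the solver's answer."""
    def fill(match):
        a, b = int(match.group(1)), int(match.group(2))
        return str(solver(a, b))
    return CALL_PATTERN.sub(fill, reasoning)


draft = "She has [[ADD: 48213+951877]] coins in total."
print(resolve_calls(draft))
```

Keeping the two roles in separate contexts means the reasoning prompt never has to carry the long addition demonstrations.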

An example from the GSM8k-Hard dataset. The chain-of-thought prompt is augmented with brackets to indicate when an algorithmic call should be performed.

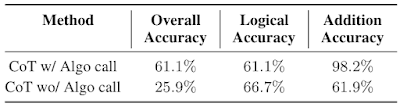

We find that using separate contexts and models with specialized prompts is an effective way to tackle GSM8k-Hard. Below, we observe that the performance of the model with algorithmic call for addition is 2.3x the chain-of-thought baseline. Finally, this strategy presents an example of solving complex tasks by facilitating interactions between LLMs specialized to different skills via in-context learning.

Chain-of-thought (CoT) performance on GSM8k-Hard with or without algorithmic call.

Conclusion

We present an approach that leverages in-context learning and a novel algorithmic prompting technique to unlock algorithmic reasoning abilities in LLMs. Our results suggest that it may be possible to transform longer context into better reasoning performance by providing more detailed explanations. Thus, these findings point to using or otherwise simulating long contexts, and generating more informative rationales, as promising research directions.

Acknowledgements

We thank our co-authors Behnam Neyshabur, Azade Nova, Hugo Larochelle and Aaron Courville for their valuable contributions to the paper and great feedback on the blog. We thank Tom Small for creating the animations in this post. This work was done during Hattie Zhou’s internship at Google Research.