Recent advances in large language models (LLMs) are very promising. LLMs have shown a capability for general problem-solving in few-shot and zero-shot setups, even without explicit training on these tasks. This is impressive because in the few-shot setup, LLMs are presented with only a few question-answer demonstrations before being given a test question. The zero-shot setup is even more challenging: the LLM is prompted directly with the test question only.

The few-shot setup has dramatically reduced the amount of data required to adapt a model for a specific use case. Even so, there are still cases where generating sample prompts can be challenging. For example, handcrafting even a small number of demos for the broad range of tasks covered by general-purpose models can be difficult or, for unseen tasks, impossible. Likewise, for tasks like summarization of long articles or those that require domain knowledge (e.g., medical question answering), it can be challenging to generate sample answers. In such situations, models with high zero-shot performance are useful because no manual prompt generation is required. However, zero-shot performance is usually weaker because the LLM is not given any guidance and is therefore prone to spurious output.

In “Better Zero-shot Reasoning with Self-Adaptive Prompting”, published at ACL 2023, we propose Consistency-Based Self-Adaptive Prompting (COSP) to address this dilemma. COSP is a zero-shot automatic prompting method for reasoning problems. It carefully selects and constructs pseudo-demonstrations for LLMs using only unlabeled samples (which are typically easy to obtain) and the models’ own predictions. With COSP, we largely close the performance gap between zero-shot and few-shot prompting while retaining the desirable generality of zero-shot prompting. We follow this with “Universal Self-Adaptive Prompting” (USP), accepted at EMNLP 2023, in which we extend the idea to a wide range of general natural language understanding (NLU) and natural language generation (NLG) tasks and demonstrate its effectiveness.

Prompting LLMs with their own outputs

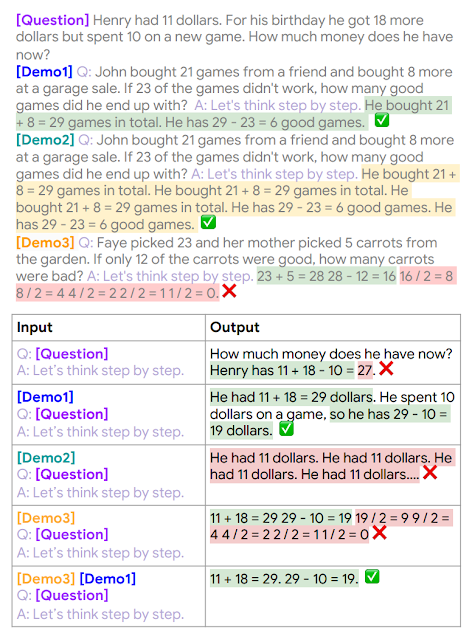

Knowing that LLMs benefit from demonstrations and have at least some zero-shot abilities, we wondered whether the model’s zero-shot outputs could serve as demonstrations for the model to prompt itself. The challenge is that zero-shot solutions are imperfect, and we risk giving LLMs poor-quality demonstrations, which could be worse than no demonstrations at all. Indeed, the figure shows that adding a correct demonstration to a question can lead to a correct solution of the test question (Demo1 with the question), while adding a flawed demonstration (Demo2 or Demo3 with the question) degrades the output. Therefore, we need to select reliable self-generated demonstrations.

Example inputs and outputs for reasoning tasks, which illustrate the need for a carefully designed selection procedure for in-context demonstrations (MultiArith dataset and PaLM-62B model): (1) zero-shot chain-of-thought with no demo: correct logic but wrong answer; (2) correct demo (Demo1) and correct answer; (3) correct but repetitive demo (Demo2) leads to repetitive outputs; (4) erroneous demo (Demo3) leads to a wrong answer; but (5) combining Demo3 and Demo1 again leads to a correct answer.

COSP leverages a key observation about LLMs: confident and consistent predictions are more likely to be correct. This observation, of course, depends on how good the LLM’s uncertainty estimates are. Fortunately, previous work suggests that the uncertainty estimates of large models are robust. Since measuring confidence requires only model predictions, not labels, we propose to use it as a zero-shot proxy for correctness. The high-confidence outputs and their inputs are then used as pseudo-demonstrations.

With this as our starting premise, we estimate the model’s confidence in its output based on its self-consistency and use this measure to select robust self-generated demonstrations. We ask the LLM the same question multiple times with zero-shot chain-of-thought (CoT) prompting. To guide the model to generate a range of possible rationales and final answers, we include randomness controlled by a “temperature” hyperparameter. In the extreme case, if the model is 100% certain, it should output identical final answers every time. We then compute the entropy of the answers to gauge the uncertainty: answers that have high self-consistency, and for which the LLM is therefore more certain, are likely to be correct and will be selected.
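The entropy step can be sketched as follows. This is a minimal illustration, assuming the final answers have already been extracted from each sampled CoT output as strings. Normalizing by log(m), so that the score lies in [0, 1], is our choice for illustration, and the helper name `answer_entropy` is hypothetical.

```python
import math
from collections import Counter


def answer_entropy(answers: list[str]) -> float:
    """Normalized entropy of the empirical distribution over sampled final answers.

    Returns 0.0 when every sample agrees (maximal self-consistency) and 1.0
    when every sample gives a different answer.
    """
    if not answers:
        raise ValueError("need at least one sampled answer")
    m = len(answers)
    counts = Counter(answers)
    # Shannon entropy written as a sum of p * log(1/p), which is never negative.
    h = sum((c / m) * math.log(m / c) for c in counts.values())
    # Normalize by log(m), the largest entropy that m samples can have.
    return h / math.log(m) if m > 1 else 0.0


# Five temperature-sampled final answers for two hypothetical questions.
confident = ["12", "12", "12", "12", "12"]
uncertain = ["12", "12", "8", "15", "9"]
print(answer_entropy(confident))  # 0.0
print(answer_entropy(uncertain))
```

Lower entropy means higher self-consistency, so questions whose answers score near 0.0 are the preferred sources of pseudo-demonstrations.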

Given a set of unlabeled questions, the COSP method proceeds as follows:

1. Input each unlabeled question into the LLM, obtaining multiple rationales and answers by sampling the model multiple times. The most frequent answers are highlighted, followed by a score that measures the consistency of answers across the sampled outputs (higher is better). In addition to favoring more consistent answers, we also penalize repetition within a response (i.e., repeated words or phrases) and encourage diversity among the selected demonstrations. We encode the preference for consistent, non-repetitive and diverse outputs as a scoring function, a weighted sum of these three scores, which we use to select the self-generated pseudo-demonstrations.
2. Concatenate the pseudo-demonstrations with each test question, feed the result to the LLM, and obtain a final predicted answer.
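The selection step in item 1 can be sketched as a greedy search over a weighted-sum cost. This is a hypothetical illustration, not the paper’s exact formulation. The concrete terms are assumptions: consistency enters as normalized answer entropy (so, unlike the “higher is better” score above, lower is better), repetition is the fraction of repeated word trigrams in the rationale, and diversity is a penalty on word-level Jaccard similarity to demos already chosen. `Candidate`, `repetition_score`, `jaccard`, `cost`, `select_demos` and the default weights are all illustrative names and values.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One self-generated pseudo-demo (hypothetical structure)."""
    question: str
    rationale: str
    answer: str
    entropy: float  # normalized answer entropy of its question; lower = more consistent


def repetition_score(text: str, n: int = 3) -> float:
    """Fraction of word n-grams that repeat an earlier n-gram (0.0 = no repetition)."""
    words = text.lower().split()
    grams = [tuple(words[i:i + n]) for i in range(len(words) - n + 1)]
    if not grams:
        return 0.0
    return 1.0 - len(set(grams)) / len(grams)


def jaccard(a: str, b: str) -> float:
    """Word-set overlap between two strings, in [0, 1]."""
    sa, sb = set(a.lower().split()), set(b.lower().split())
    union = sa | sb
    return len(sa & sb) / len(union) if union else 0.0


def cost(c: Candidate, selected: list[Candidate],
         w_cons: float, w_rep: float, w_div: float) -> float:
    """Weighted sum of the three terms; every term is a penalty, so lower is better."""
    # Similarity to the closest demo already chosen; penalizing it favors diversity.
    sim = max((jaccard(c.question, s.question) for s in selected), default=0.0)
    return w_cons * c.entropy + w_rep * repetition_score(c.rationale) + w_div * sim


def select_demos(pool: list[Candidate], k: int, w_cons: float = 1.0,
                 w_rep: float = 1.0, w_div: float = 1.0) -> list[Candidate]:
    """Greedily pick up to k pseudo-demos, re-scoring diversity after each pick."""
    remaining = list(pool)
    selected: list[Candidate] = []
    while remaining and len(selected) < k:
        i = min(range(len(remaining)),
                key=lambda j: cost(remaining[j], selected, w_cons, w_rep, w_div))
        selected.append(remaining.pop(i))
    return selected
```

Greedy selection is one simple way to combine per-candidate terms (consistency, repetition) with a set-level term (diversity); in practice the weights would need tuning per task.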

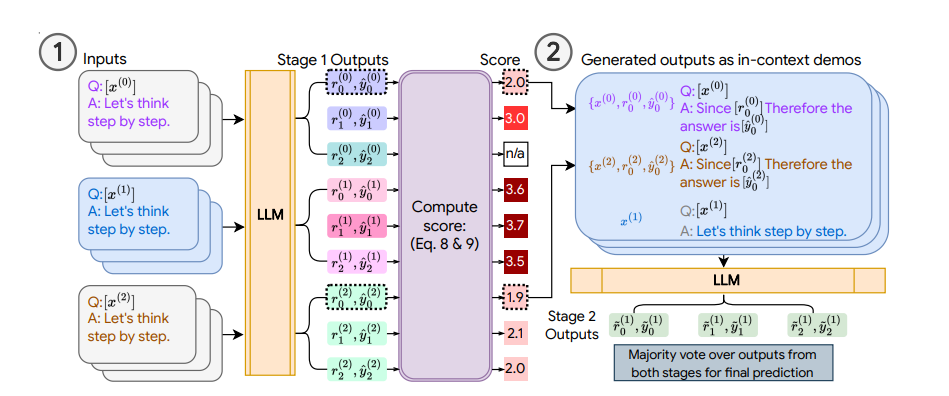

Illustration of COSP: In Stage 1 (left), we run zero-shot CoT multiple times to generate a pool of demonstrations (each consisting of the question, generated rationale and prediction) and assign each a score. In Stage 2 (right), we augment the current test question with pseudo-demos (blue boxes) and query the LLM again. A majority vote over the outputs from both stages forms the final prediction.

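Stage 2 and the final vote can be sketched as below. The prompt template (the `Q:`/`A:` layout and the “The answer is” suffix) is a hypothetical format chosen for illustration; only the “Let’s think step by step.” trigger comes from the zero-shot CoT setup described in this post. The helper names `build_stage2_prompt` and `final_prediction` are also hypothetical.

```python
from collections import Counter


def build_stage2_prompt(demos: list[tuple[str, str, str]], test_question: str,
                        trigger: str = "Let's think step by step.") -> str:
    """Prepend (question, rationale, answer) pseudo-demos to the test question."""
    blocks = [f"Q: {q}\nA: {trigger} {r} The answer is {a}." for q, r, a in demos]
    blocks.append(f"Q: {test_question}\nA: {trigger}")
    return "\n\n".join(blocks)


def final_prediction(stage1_answers: list[str], stage2_answers: list[str]) -> str:
    """Majority vote over the answers sampled in both stages."""
    votes = Counter(stage1_answers) + Counter(stage2_answers)
    return votes.most_common(1)[0][0]


prompt = build_stage2_prompt(
    [("Tom has 3 apples and buys 2 more. How many?", "He starts with 3 and adds 2.", "5")],
    "A bus has 10 people and 4 leave. How many remain?",
)
print(prompt)
print(final_prediction(["6", "6", "4"], ["6", "14"]))  # 6
```

Pooling the votes from both stages means the zero-shot samples from Stage 1 are reused rather than discarded.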

COSP focuses on question-answering tasks with CoT prompting, where self-consistency is easy to measure because each question has a unique correct answer. Measuring it can be difficult for other tasks, such as open-ended question answering or generative tasks that don’t have unique answers (e.g., text summarization). To address this limitation, we introduce USP, which generalizes our approach to other general NLP tasks:

- Classification (CLS): Problems where we can compute the probability of each class from the neural network’s output logits for that class. This lets us measure uncertainty without multiple sampling, by computing the entropy of the class distribution given by the logits.
- Short-form generation (SFG): Problems like question answering, where we can use the same procedure described above for COSP but, if necessary, without the rationale-generating step.
- Long-form generation (LFG): Problems like summarization and translation, where the questions are often open-ended and the outputs are unlikely to be identical, even when the LLM is certain. In this case, we use an overlap metric: the average pairwise ROUGE score between the different outputs for the same query.
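The CLS and LFG confidence scores can be sketched as follows; SFG can reuse the answer-consistency measure from COSP. For CLS we assume one logit per class label (for an LLM, for example, the logits of the label tokens). For LFG the post does not say which ROUGE variant is used, so a simplified ROUGE-1 F1 on whitespace tokens stands in here. A full implementation such as Google’s `rouge-score` package would also handle tokenization and stemming. All function names are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations


def logit_entropy(logits: list[float]) -> float:
    """CLS: entropy of softmax(logits) over class labels; lower = more confident."""
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]  # shift by the max for numerical stability
    z = sum(exps)
    probs = [e / z for e in exps]
    return sum(p * math.log(1 / p) for p in probs if p > 0)


def rouge1_f1(a: str, b: str) -> float:
    """Simplified ROUGE-1: unigram-overlap F1 on whitespace tokens, no stemming."""
    ca, cb = Counter(a.lower().split()), Counter(b.lower().split())
    overlap = sum((ca & cb).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cb.values())
    recall = overlap / sum(ca.values())
    return 2 * precision * recall / (precision + recall)


def pairwise_rouge(outputs: list[str]) -> float:
    """LFG: mean ROUGE-1 F1 over all pairs of sampled outputs; higher = more consistent."""
    pairs = list(combinations(outputs, 2))
    if not pairs:
        raise ValueError("need at least two sampled outputs")
    return sum(rouge1_f1(a, b) for a, b in pairs) / len(pairs)
```

The two scores point in opposite directions (entropy is low when the model is confident, overlap is high), so a selection step would rank CLS candidates ascending and LFG candidates descending.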

Illustration of USP on example tasks (classification, QA and text summarization). As in COSP, the LLM first generates predictions on an unlabeled dataset. These outputs are scored with logit entropy, consistency or alignment, depending on the task type, and pseudo-demonstrations are selected from the resulting input-output pairs. In Stage 2, the test instances are augmented with pseudo-demos for prediction.

On the aforementioned set of unlabeled test samples, we compute the confidence score appropriate to the task type. After scoring, as in COSP, we pick confident, diverse and less repetitive answers to form a model-generated pseudo-demonstration set. Finally, we query the LLM again in a few-shot format with these pseudo-demonstrations to obtain the final predictions on the entire test set.

Key results

For COSP, we focus on a set of six arithmetic and commonsense reasoning problems, and we compare against 0-shot-CoT (i.e., “Let’s think step by step” only). We use self-consistency in all baselines so that they use roughly the same amount of computational resources as COSP. Across three LLMs, zero-shot COSP significantly outperforms the standard zero-shot baseline.

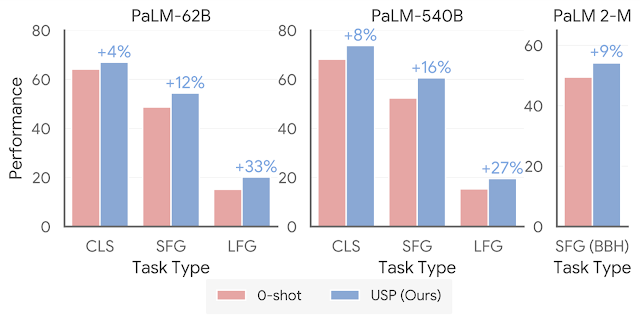

USP improves significantly on 0-shot performance. “CLS” is the average of 15 classification tasks; “SFG” is the average of five short-form generation tasks; “LFG” is the average of two summarization tasks. “SFG (BBH)” is the average of all BIG-Bench Hard tasks, where each question is in SFG format.

For USP, we expand our analysis to a much wider range of tasks, including more than 25 classification, short-form generation, and long-form generation tasks. Using the state-of-the-art PaLM 2 models, we also test on the BIG-Bench Hard suite, a set of tasks on which LLMs have previously underperformed compared to humans. We show that in all cases, USP again outperforms the baselines and is competitive with prompting with golden examples.

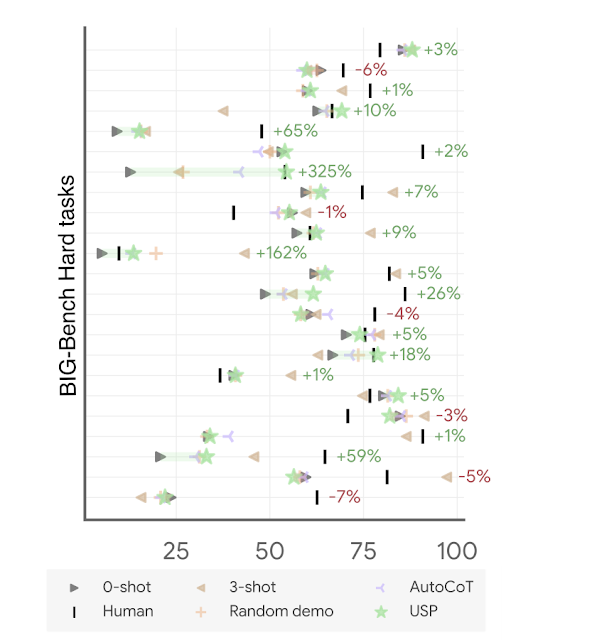

Accuracy on BIG-Bench Hard tasks with PaLM 2-M (each line represents a task in the suite). The gain or loss of USP (green stars) over standard 0-shot (green triangles) is shown in percentages. “Human” refers to average human performance; “AutoCoT” and “Random demo” are baselines we compared against in the paper; and “3-shot” is the few-shot performance with three handcrafted demos in CoT format.

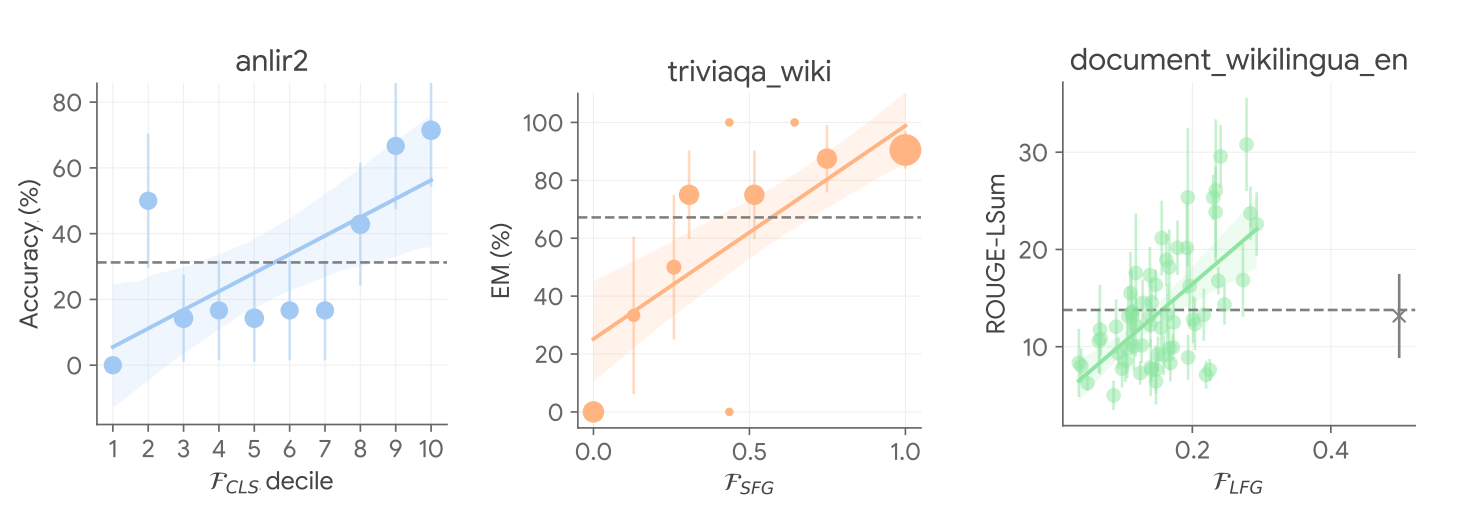

We also analyze how USP works by validating the key observation above about the relation between confidence and correctness. As shown in the figure, in the overwhelming majority of cases, USP picks confident predictions that are more likely to be better, across all task types considered.

USP picks confident predictions that are more likely to be better. Ground-truth performance metrics plotted against USP confidence scores for selected tasks of each task type (blue: CLS, orange: SFG, green: LFG) with PaLM-540B.

Conclusion

Zero-shot inference is a highly sought-after capability of modern LLMs, yet succeeding at it poses unique challenges. We propose COSP and USP, a family of versatile, zero-shot automatic prompting techniques applicable to a wide range of tasks. We show large improvements over state-of-the-art baselines across numerous task and model combinations.

Acknowledgements

This work was conducted by Xingchen Wan, Ruoxi Sun, Hootan Nakhost, Hanjun Dai, Julian Martin Eisenschlos, Sercan Ö. Arık, and Tomas Pfister. We would like to thank Jinsung Yoon and Xuezhi Wang for providing helpful reviews, and other colleagues at Google Cloud AI Research for their discussion and feedback.