ToF sensors provide 3D information about the world around a mobile robot, supplying important data to the robot's perception algorithms. | Credit: e-con Systems

In the ever-evolving world of robotics, the seamless integration of technologies promises to revolutionize how humans interact with machines. One example of this transformative innovation is the emergence of time-of-flight (ToF) sensors, which are crucial in enabling mobile robots to better perceive the world around them.

ToF sensors have a similar application to lidar technology in that both use multiple sensors to create depth maps. However, the key distinction lies in these cameras' ability to provide depth images that can be processed faster, and they can be built into systems for a variety of applications.

This maximizes the utility of ToF technology in robotics. It has the potential to benefit industries reliant on precise navigation and interaction.

Why mobile robots need 3D vision

Historically, RGB cameras were the primary sensor for industrial robots, capturing 2D images based on the color information in a scene. These 2D cameras have been used for decades in industrial settings to guide robot arms in pick-and-pack applications.

Such 2D RGB cameras always require a camera-to-arm calibration sequence to map scene data to the robot's world coordinate system. Without this calibration sequence, 2D cameras are unable to gauge distances, which makes them unusable as sensors for obstacle avoidance and guidance.

Autonomous mobile robots (AMRs) must accurately perceive the changing world around them to avoid obstacles and build a world map while remaining localized within that map. Time-of-flight sensors have existed since the late 1970s and have evolved to become one of the leading technologies for extracting depth data. It was natural to adopt ToF sensors to guide AMRs around their environments.

Lidar was adopted as one of the early types of ToF sensors to enable AMRs to sense the world around them. Lidar bounces a laser light pulse off of surfaces and measures the distance from the sensor to the surface.
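The pulse-and-return principle described above reduces to a single relation: the light travels to the surface and back, so the one-way distance is half the round-trip path. The following is a minimal sketch of that arithmetic, not any vendor's implementation; the function names are hypothetical and chosen only for illustration.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact by definition of the metre


def distance_from_round_trip(round_trip_s: float) -> float:
    """Return the one-way distance in metres for a measured round-trip time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels out and back, so halve the total path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_for_distance(distance_m: float) -> float:
    """Return the round-trip time in seconds for a surface at distance_m."""
    if distance_m < 0:
        raise ValueError("distance cannot be negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A surface 6 m away returns the pulse after roughly 40 nanoseconds.
    t = round_trip_for_distance(6.0)
    print(f"round trip for 6 m: {t * 1e9:.2f} ns")
    print(f"recovered distance: {distance_from_round_trip(t):.3f} m")
```

The very short round-trip times involved are why ToF hardware needs precise timing electronics rather than general-purpose software timers.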

However, the first lidar sensors could only perceive a slice of the world around the robot, using the flight path of a single laser line. These lidar units were typically positioned between 4 and 12 in. above the ground, and they could only see things that broke through that plane of light.

The next generation of AMRs began to use 3D stereo RGB cameras that provide 3D depth information. These sensors use two stereo-mounted RGB cameras and a "light dot projector" that enables the camera array to accurately view the projected light on the scene in front of the camera.

Photoneo and Intel RealSense were two of the early 3D RGB camera developers in this market. These cameras initially enabled industrial applications such as identifying and picking individual objects from bins.

Until the advent of these sensors, bin picking was known as a "holy grail" application, one that the vision guidance community knew would be difficult to solve.

The camera landscape evolves

A salient feature of these cameras is their low-light performance, which prioritizes human-eye safety. The 6 m (19.7 ft.) range in far mode facilitates people and object detection, while the close-range mode excels in volume measurement and quality inspection.

The cameras return the data in the form of a "point cloud." On-camera processing capability reduces computational overhead and is potentially useful for applications such as warehouse robots, service robots, robot arms, automated guided vehicles (AGVs), people-counting systems, 3D face recognition for anti-spoofing, and patient care and monitoring.
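To make the "point cloud" idea concrete, here is a minimal sketch of how a depth image can be turned into 3D points. It assumes a simple pinhole camera model with hypothetical intrinsics (`fx`, `fy`, `cx`, `cy`) and treats zero or negative depth as an invalid pixel; actual cameras may do this on board with their own calibration and conventions.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def depth_to_point_cloud(
    depth_m: Sequence[Sequence[float]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point]:
    """Back-project a depth image (metres) into camera-frame 3D points.

    fx, fy are focal lengths in pixels and cx, cy the principal point,
    all assumed values for a pinhole model.
    """
    points: List[Point] = []
    for v, row in enumerate(depth_m):      # v is the pixel row index
        for u, z in enumerate(row):        # u is the pixel column index
            if z <= 0.0:
                continue                   # skip pixels with no valid depth
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


if __name__ == "__main__":
    depth = [
        [0.0, 2.0],
        [1.0, 0.0],
    ]
    for p in depth_to_point_cloud(depth, fx=2.0, fy=2.0, cx=0.5, cy=0.5):
        print(p)
```

Each valid pixel becomes one (x, y, z) point, so the size of the cloud scales with the sensor resolution, which is one reason doing this step on the camera can relieve the robot's main processor.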

Time-of-flight technology is significantly more affordable than other 3D-depth range-scanning technologies such as structured-light camera/projector systems.

For instance, ToF sensors facilitate the autonomous movement of outdoor delivery robots by precisely measuring depth in real time. This versatile application of ToF cameras in robotics promises to serve industries reliant on precise navigation and interaction.

How ToF sensors take perception a step further

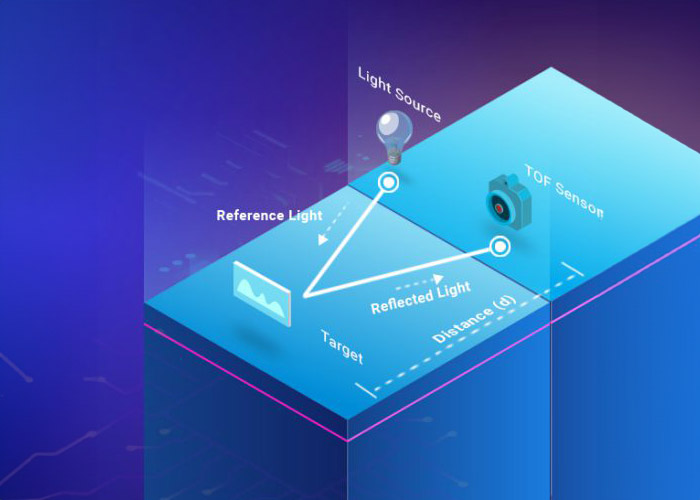

A fundamental difference between time-of-flight and RGB cameras is their ability to perceive depth. RGB cameras capture images based on color information, whereas ToF cameras measure the time taken for light to bounce off an object and return, which yields a depth value for each pixel.

ToF sensors capture data to generate detailed 3D maps of their surroundings with high precision, giving mobile robots an added dimension of depth perception.

Furthermore, stereo vision technology has also evolved. Using an IR pattern projector, it illuminates the scene and compares the disparities of stereo images from two 2D sensors, ensuring superior low-light performance.
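The disparity comparison mentioned above is usually turned into depth with the standard rectified-stereo relation Z = f · B / d, where f is the focal length in pixels, B is the baseline between the two sensors, and d is the disparity in pixels. The sketch below illustrates only that relation; the parameter values are made up, and a real stereo pipeline also needs rectification and matching steps that are not shown.

```python
def depth_from_disparity(
    disparity_px: float, focal_px: float, baseline_m: float
) -> float:
    """Return depth in metres for a rectified stereo pair.

    disparity_px: horizontal pixel shift of a feature between the two images.
    focal_px:     focal length in pixels (assumed equal for both sensors).
    baseline_m:   distance between the two sensors in metres.
    """
    if disparity_px <= 0:
        # Zero disparity corresponds to a point at infinity; negative values
        # indicate a matching error, so neither yields a usable depth.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical camera: 700 px focal length, 5 cm baseline.
    for d in (70.0, 35.0, 7.0):
        z = depth_from_disparity(d, focal_px=700.0, baseline_m=0.05)
        print(f"disparity {d:5.1f} px -> depth {z:.2f} m")
```

Because depth is inversely proportional to disparity, a one-pixel matching error matters far more for distant objects than for near ones, which is a known limitation of stereo depth at range.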

In comparison, ToF cameras use a sensor, a lighting unit, and a depth-processing unit. This allows AMRs to have full depth-perception capabilities out of the box without further calibration.

One key advantage of ToF cameras is that they extract 3D images at high frame rates, with rapid separation of the background and foreground. They can also function in both bright and dark lighting conditions through the use of active lighting components.
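One reason background and foreground can be separated quickly is that, with per-pixel depth available, a simple distance cut is often enough. The sketch below shows that idea with a fixed threshold; the threshold value and the convention that zero means "no reading" are assumptions for illustration, not a description of how any particular camera performs the separation.

```python
from typing import List, Sequence


def foreground_mask(
    depth_m: Sequence[Sequence[float]], threshold_m: float
) -> List[List[bool]]:
    """Mark pixels that have a valid reading closer than threshold_m.

    A depth of 0.0 or less is treated as "no reading" and is never foreground.
    """
    return [[0.0 < z < threshold_m for z in row] for row in depth_m]


if __name__ == "__main__":
    depth = [
        [0.8, 0.9, 4.5],
        [0.7, 0.0, 4.6],
    ]
    for row in foreground_mask(depth, threshold_m=1.5):
        print("".join("#" if is_fg else "." for is_fg in row))
```

The whole operation is a single comparison per pixel, with no color model or learned segmentation required, which keeps it cheap enough to run at camera frame rates.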

In summary, compared with RGB cameras, ToF cameras can operate in low-light applications and without the need for calibration. ToF camera units can also be more affordable than stereo RGB cameras or most lidar units.

One downside of ToF cameras is that they must be used in isolation, as their emitters can confuse nearby cameras. ToF cameras also cannot be used in overly bright environments because the ambient light can wash out the emitted light.

A ToF sensor is simply a sensor that uses time of flight to measure depth and distance. | Credit: e-con Systems

Applications of ToF sensors

ToF cameras are enabling multiple AMR/AGV applications in warehouses. These cameras provide warehouse operations with depth-perception intelligence that enables robots to see the world around them. This data enables the robots to make critical business decisions with accuracy, convenience, and speed. These include functionalities such as:

Localization: This helps AMRs identify their position by scanning the surroundings to create a map and matching the information collected to known data.

Mapping: This creates a map by using the transit time of the light reflected from the target object together with the SLAM (simultaneous localization and mapping) algorithm.

Navigation: The robot can move from Point A to Point B on a known map.

With ToF technology, AMRs can understand their environment in 3D before deciding which path to take to avoid obstacles.
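As an illustration of moving from Point A to Point B on a known map while avoiding obstacles, the sketch below runs a breadth-first search over a small occupancy grid. The grid representation (0 for free, 1 for occupied), the four-connected moves, and the choice of breadth-first search are all assumptions made for this example; production AMRs typically use more sophisticated planners and maps built from real sensor data.

```python
from collections import deque
from typing import Dict, List, Optional, Sequence, Tuple

Cell = Tuple[int, int]  # (row, column)


def shortest_path(
    grid: Sequence[Sequence[int]], start: Cell, goal: Cell
) -> Optional[List[Cell]]:
    """Return a shortest 4-connected path of free cells, or None if blocked."""
    rows, cols = len(grid), len(grid[0])
    if grid[start[0]][start[1]] != 0 or grid[goal[0]][goal[1]] != 0:
        return None
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk back through the predecessors to rebuild the path.
            path: List[Cell] = []
            current: Optional[Cell] = cell
            while current is not None:
                path.append(current)
                current = came_from[current]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and nxt not in came_from:
                came_from[nxt] = cell
                queue.append(nxt)
    return None


if __name__ == "__main__":
    warehouse = [
        [0, 1, 0],
        [0, 1, 0],
        [0, 0, 0],
    ]
    print(shortest_path(warehouse, start=(0, 0), goal=(0, 2)))
```

In this toy layout the middle column acts as a shelf, so the only route from the top-left to the top-right cell goes around the bottom of it.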

Finally, there's odometry, the process of estimating any change in the position of the mobile robot over time by analyzing data from motion sensors. ToF technology has shown that it can be fused with other sensors to improve the accuracy of AMRs.
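A common form of odometry is dead reckoning from wheel travel. The sketch below assumes a differential-drive robot whose motion sensors are wheel encoders reporting the distance each wheel has rolled since the last update; the drive type, the function name, and the parameter values are assumptions for illustration and are not taken from any specific AMR.

```python
import math
from typing import Tuple

Pose = Tuple[float, float, float]  # x (m), y (m), heading (rad)


def update_pose(
    pose: Pose, d_left_m: float, d_right_m: float, wheel_base_m: float
) -> Pose:
    """Advance a differential-drive pose by one pair of wheel displacements."""
    x, y, theta = pose
    d_center = (d_left_m + d_right_m) / 2.0           # forward travel
    d_theta = (d_right_m - d_left_m) / wheel_base_m   # change in heading
    # Use the heading at the midpoint of the step as the travel direction.
    mid_heading = theta + d_theta / 2.0
    x += d_center * math.cos(mid_heading)
    y += d_center * math.sin(mid_heading)
    return x, y, theta + d_theta


if __name__ == "__main__":
    pose: Pose = (0.0, 0.0, 0.0)
    # Drive straight, then arc to the left, using made-up encoder readings.
    for d_left, d_right in [(0.5, 0.5), (0.2, 0.3), (0.2, 0.3)]:
        pose = update_pose(pose, d_left, d_right, wheel_base_m=0.4)
        print(f"x={pose[0]:.3f} m, y={pose[1]:.3f} m, heading={pose[2]:.3f} rad")
```

Because each update adds a small error from wheel slip and encoder resolution, the estimate drifts over time, which is why fusing odometry with depth sensing such as ToF can help keep the pose estimate accurate.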

About the author

Maharajan Veerabahu has more than 20 years of experience in embedded software and product development, and he is a co-founder and vice president of product development services at e-con Systems, a prominent OEM camera product and design services company. Veerabahu is also a co-founder of VisAi Labs, a computer vision and AI R&D unit that provides vision AI-based solutions for its camera customers.